@Mira - Trust Layer of AI

Why “cross-chain” suddenly feels like the real test

I’ve noticed something shift in the last year: teams stopped arguing about whether AI is useful, and started arguing about whether it’s safe to rely on when the stakes are messy. Not “can it write,” but “can I defend this decision when a user complains, when a regulator asks, or when a partner chain disputes what happened.”

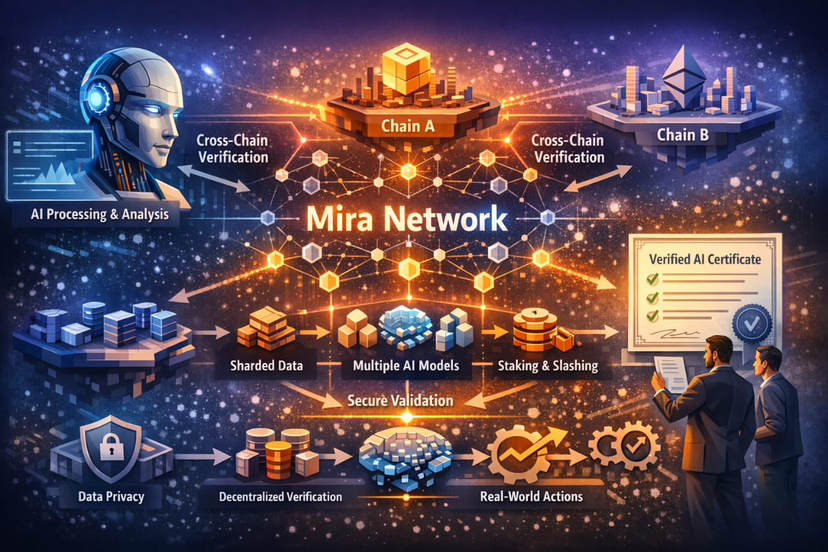

That’s why the idea of Mira leaning into cross-chain AI functionality is showing up at the right moment. Because the failure mode isn’t usually inside one app on one chain. It’s in the handoff. A model reads something from Chain A, triggers an action on Chain B, and the human operator is left holding an explanation made of vibes.

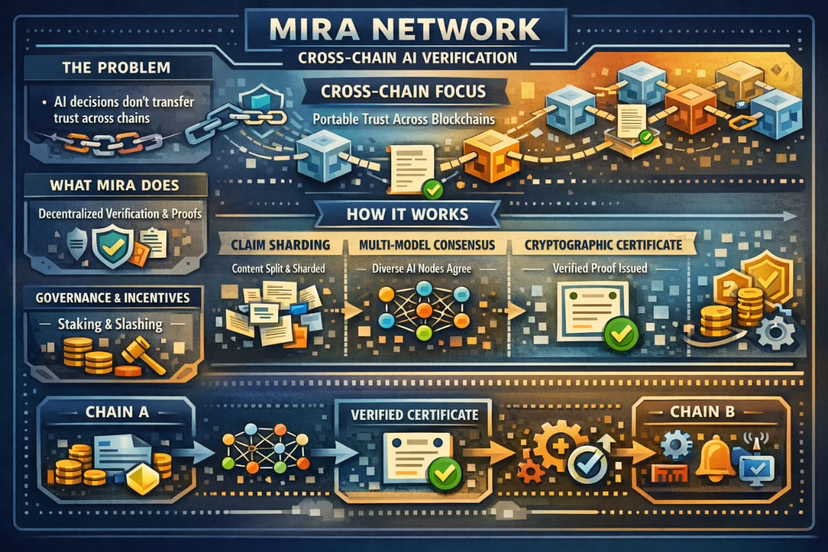

Mira’s core claim, at least as laid out in its own materials, is that reliability doesn’t come from a single smarter model. It comes from turning outputs into smaller claims and making verification a networked, incentive-aware process that ends in a certificate you can carry elsewhere.

The piece people miss: cross-chain isn’t “bridging,” it’s accountability transport

When most people say cross-chain, they picture assets moving. I think the more interesting movement is accountability. You want to move the reason a system acted, not just the result of the action.

Mira’s whitepaper frames the network as a way to verify AI-generated output “without relying on a single trusted entity,” by using decentralized consensus across “diverse AI models,” and then issuing “cryptographic certificates” that attest to what reached consensus. That certificate is the thing that naturally wants to be cross-chain.

In other words, you don’t need every chain to run the same AI stack. You need a portable artifact that says: here are the claims, here is what the verifier set agreed on, and here is the proof that agreement happened under incentives.

How the network’s workflow translates into a cross-chain pattern

The whitepaper describes a pretty specific flow. A customer submits content and can specify verification requirements like domain and a consensus threshold. The network splits the content into small, checkable statements. It sends those statements to different nodes to verify, combines their answers into a final agreement, and then returns the result with a cryptographic proof showing which models agreed on each statement. If you’re thinking cross-chain, it’s basically the same idea; only the wiring changes depending on the chain.
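To make that flow concrete, here is a minimal sketch of the split, verify, and aggregate steps. It is not Mira's implementation: the sentence-based `split_into_claims`, the `Verifier` callables, and the `ClaimResult` shape are all my own stand-ins, and the cryptographic proof is left out entirely.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical: a verifier is any callable that labels a claim "true" or "false".
Verifier = Callable[[str], str]


@dataclass
class ClaimResult:
    claim: str
    verdict: str          # majority label, or "no_consensus"
    agreeing: List[str]   # names of the verifiers that voted for the verdict


def split_into_claims(content: str) -> List[str]:
    # Naive stand-in for claim extraction: one claim per sentence.
    return [s.strip() for s in content.split(".") if s.strip()]


def verify(content: str, verifiers: Dict[str, Verifier], threshold: float) -> List[ClaimResult]:
    results = []
    for claim in split_into_claims(content):
        # Every verifier votes on every claim in this simplified version.
        votes = {name: fn(claim) for name, fn in verifiers.items()}
        label, count = Counter(votes.values()).most_common(1)[0]
        if count / len(votes) >= threshold:
            agreeing = sorted(n for n, v in votes.items() if v == label)
            results.append(ClaimResult(claim, label, agreeing))
        else:
            results.append(ClaimResult(claim, "no_consensus", []))
    return results
```

The part worth noticing is that the threshold is an input from the customer, not a constant of the network. The same set of votes can clear a 0.6 threshold and fail a 1.0 threshold, which is exactly the knob a downstream chain would care about.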

On Chain A, an app or agent generates a candidate statement or plan. Mira verifies it off-chain through its networked process and produces a certificate. On Chain B, a contract or service doesn’t need to “trust the model.” It needs to verify the certificate format and policy rules your application sets. The trust anchor becomes the certificate and its linkage to a verification event, not a brand name model.
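A rough sketch of what the Chain B side might check, under heavy assumptions: the certificate layout (`payload`, `tag`, `threshold`, `claims`) is invented for illustration, and an HMAC with a shared key stands in for whatever signature scheme a real certificate would use. A production system would more plausibly verify a public-key signature.

```python
import hashlib
import hmac
import json


def sign_certificate(payload: dict, key: bytes) -> dict:
    # Hypothetical issuer side: tag the canonical JSON form of the payload.
    body = json.dumps(payload, sort_keys=True).encode()
    tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def accept_on_destination(cert: dict, key: bytes, min_threshold: float) -> bool:
    # Step 1: integrity. Recompute the tag and compare in constant time.
    body = json.dumps(cert["payload"], sort_keys=True).encode()
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, cert["tag"]):
        return False  # tampered with, or not issued under the expected key

    # Step 2: application policy. The destination sets its own bar.
    payload = cert["payload"]
    if payload["threshold"] < min_threshold:
        return False
    return all(c["verdict"] == "true" for c in payload["claims"])
```

Note that nothing here inspects a model. The destination checks that the artifact is intact and that the consensus threshold recorded in it meets local policy, which is the sense in which the certificate, not the model, becomes the trust anchor.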

That’s the difference between “AI that talks across chains” and “AI whose decisions survive across chains.”

Security, governance, and the boring economics that make cross-chain credible

Cross-chain systems get attacked at the seams. So if the verification layer is gameable, portability just spreads the damage faster.

Mira’s security model is explicit about the weakness of standardized verification questions: if a task becomes multiple-choice, random guessing can look profitable. The mitigation they describe is staking plus slashing: nodes must stake value, and if they consistently deviate from consensus or look like they’re guessing, their stake can be slashed.
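As a toy illustration of the staking-plus-slashing idea, the sketch below tracks how often a node disagrees with consensus and cuts its stake once the deviation rate is too high. The `Node` record, the 20-round minimum, the 40% deviation limit, and the 10% penalty are all numbers I made up; the whitepaper does not give these parameters here.

```python
from dataclasses import dataclass


@dataclass
class Node:
    stake: float
    rounds: int = 0
    deviations: int = 0


def record_round(node: Node, vote: str, consensus: str) -> None:
    # Count every round, and separately count rounds that disagreed with consensus.
    node.rounds += 1
    if vote != consensus:
        node.deviations += 1


def maybe_slash(
    node: Node,
    min_rounds: int = 20,             # hypothetical: do not judge on too little history
    max_deviation_rate: float = 0.4,  # hypothetical tolerance for honest disagreement
    penalty: float = 0.1,             # hypothetical fraction of stake to remove
) -> float:
    """Slash part of the stake if the node deviates too often. Returns the amount slashed."""
    if node.rounds < min_rounds:
        return 0.0
    if node.deviations / node.rounds <= max_deviation_rate:
        return 0.0
    slashed = node.stake * penalty
    node.stake -= slashed
    return slashed
```

The `min_rounds` guard is the design choice that matters: a single disagreement is not evidence of guessing, but a persistent pattern is, which matches the "consistently deviate" wording.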

I like that they don’t pretend incentives are optional. Cross-chain reliability is basically incentives under stress. Someone will try to spoof “truth” because the downstream value on another chain is higher.

There’s also a governance arc implied in the “network evolution” section: early phases involve careful vetting of node operators, then decentralization phases that introduce duplication of verifier models, and later sharding of requests across nodes. That’s not governance in the token-voting sense, but it is governance in the operational sense: who gets to verify, how the network reduces collusion, and how it scales without losing the plot.

Privacy and selective exposure, which matters more when chains disagree

Cross-chain coordination often forces uncomfortable tradeoffs: you either leak too much data so everyone can audit, or you hide too much and nobody trusts the outcome.

Mira’s whitepaper describes a privacy approach where content is broken into entity-claim pairs and “randomly sharded across nodes,” so no single node can reconstruct the complete candidate content. That matters in cross-chain settings because disputes frequently involve sensitive context: user data, proprietary strategies, compliance flags, internal risk notes.
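Here is one simple way to picture random sharding, as a sketch only. The `shard_claims` helper and its round-robin assignment are my assumptions, and it ignores the redundancy a real network would need so that several verifiers see each claim.

```python
import random
from typing import Dict, List, Optional


def shard_claims(claims: List[str], nodes: List[str], seed: Optional[int] = None) -> Dict[str, List[str]]:
    """Shuffle claims, then deal them out round-robin so each node sees only a slice."""
    if len(nodes) < 2:
        raise ValueError("need at least two nodes, or one node would see everything")
    rng = random.Random(seed)
    shuffled = claims[:]          # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    assignment: Dict[str, List[str]] = {n: [] for n in nodes}
    for i, claim in enumerate(shuffled):
        assignment[nodes[i % len(nodes)]].append(claim)
    return assignment
```

With two or more claims and two or more nodes, no node ends up holding the full set, which is the property the whitepaper is after. Whether a slice alone still leaks something sensitive depends on how the claims are cut, and this sketch says nothing about that.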

Portability is easier when the verified artifact is minimized, and when the verification process itself didn’t require exposing the full payload to every participant.

Ecosystem and community: the developer surface area tells you what’s real

I’m skeptical of “ecosystem” claims unless there’s a usable surface for builders. Mira’s official docs show they’re leaning into developer workflows with an SDK that presents itself as a “unified interface” to multiple language models, with routing, load balancing, and flow management.

That matters for cross-chain not because routing is trendy, but because cross-chain apps are operationally annoying. You don’t want every team reinventing model selection, fallbacks, streaming, error handling, and then separately duct-taping verification.

Even small details reveal seriousness. Their API token docs call out key handling and monitoring, and note that API keys follow a consistent prefix format. In isolation that’s mundane. In aggregate it’s what lets a community build repeatable systems instead of one-off demos.

Real use cases where “cross-chain verified AI” actually earns its keep

The most obvious real use case isn’t a chatbot. It’s an agent that triggers actions. Imagine a system that watches an update on one blockchain, turns it into a short explanation, judges how risky it looks, and then either moves funds, pauses a feature, or warns the team on another blockchain. Without verification, it’s just a persuasive model acting like a judge.

Mira’s framing is that the network can handle content from “simple factual statements” up to complex forms like technical documentation and code, by standardizing outputs into verifiable claims. If that holds up in production, you can build cross-chain agents where the question is no longer “is the model confident,” but “did the verifier set converge, and under what threshold did we allow action.”

That’s a very different kind of utility. It turns AI from a suggestion engine into something closer to an accountable subsystem.

Conclusion: the data points that make this direction feel grounded

I don’t think cross-chain is the headline. I think the headline is portable justification.

The whitepaper’s probability table is a quiet but important credibility signal: if verification is multiple-choice, guessing can be tempting, but the odds collapse as you chain multiple verifications. For example, with four answer options, random success is 25% for one verification, but drops to 0.0977% after five verifications. That’s the kind of detail you include when you’re thinking about adversaries, not just demos.
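The arithmetic behind those two numbers is easy to check. The sketch below assumes each verification is an independent guess with equal odds across options; the function name is mine.

```python
def random_guess_success(options: int, verifications: int) -> float:
    """Chance that pure guessing is right on every one of `verifications` independent checks."""
    return (1 / options) ** verifications


for k in (1, 5):
    print(f"{k} verification(s): {random_guess_success(4, k):.4%}")
# 1 verification(s): 25.0000%
# 5 verification(s): 0.0977%
```

So the 0.0977% figure is just 0.25 raised to the fifth power. The independence assumption is doing real work, though: if the same guess can be reused across correlated questions, the odds do not collapse this fast.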

Add to that the explicit staking-and-slashing posture against lazy or malicious nodes, and the privacy-by-sharding approach where no single node can reconstruct full content, and you get a picture of a system that’s at least designed to survive pressure.

So when I hear “Mira will focus on cross-chain AI functionality,” I translate it as: can the network’s certificates, incentives, and privacy model hold up when the same decision has consequences in multiple places at once. If they can, the value won’t be flashy. It’ll be the boring ability to say, across chains, “here’s why we acted,” and to have that answer still stand when someone tries to break it.