There’s a strange pattern in technology markets: the most important systems rarely look exciting while they’re being built. The loud projects get attention first. They trend, circulate through social feeds, and generate the kind of excitement that feels like momentum. Meanwhile, the systems that eventually become indispensable tend to move quietly in the background.

That’s the lens through which I keep returning to Mira Network.

Not because it’s dominating headlines. Not because everyone is talking about it. But because it’s trying to address a problem that becomes more uncomfortable the longer artificial intelligence spreads into real decision-making environments.

The problem isn’t that AI sometimes makes mistakes. That part is expected. Every technology has margins of error.

The deeper issue is that AI systems often produce confident answers without leaving a clear trail explaining how those answers came to exist. You receive an output, but the reasoning behind it can be difficult to reconstruct. The process that generated the result often disappears the moment the result appears.

In low-stakes environments, that ambiguity is tolerable. If a chatbot recommends the wrong restaurant or summarizes an article poorly, the consequences are minor. But AI systems are no longer confined to casual interactions.

They are being woven into decision pipelines.

They review documents.

They analyze contracts.

They screen applicants.

They flag suspicious financial activity.

They draft policy language and operational reports.

When systems like that generate conclusions, the question “Where did this come from?” stops being philosophical. It becomes operational.

And that is precisely the gap Mira Network is trying to address.

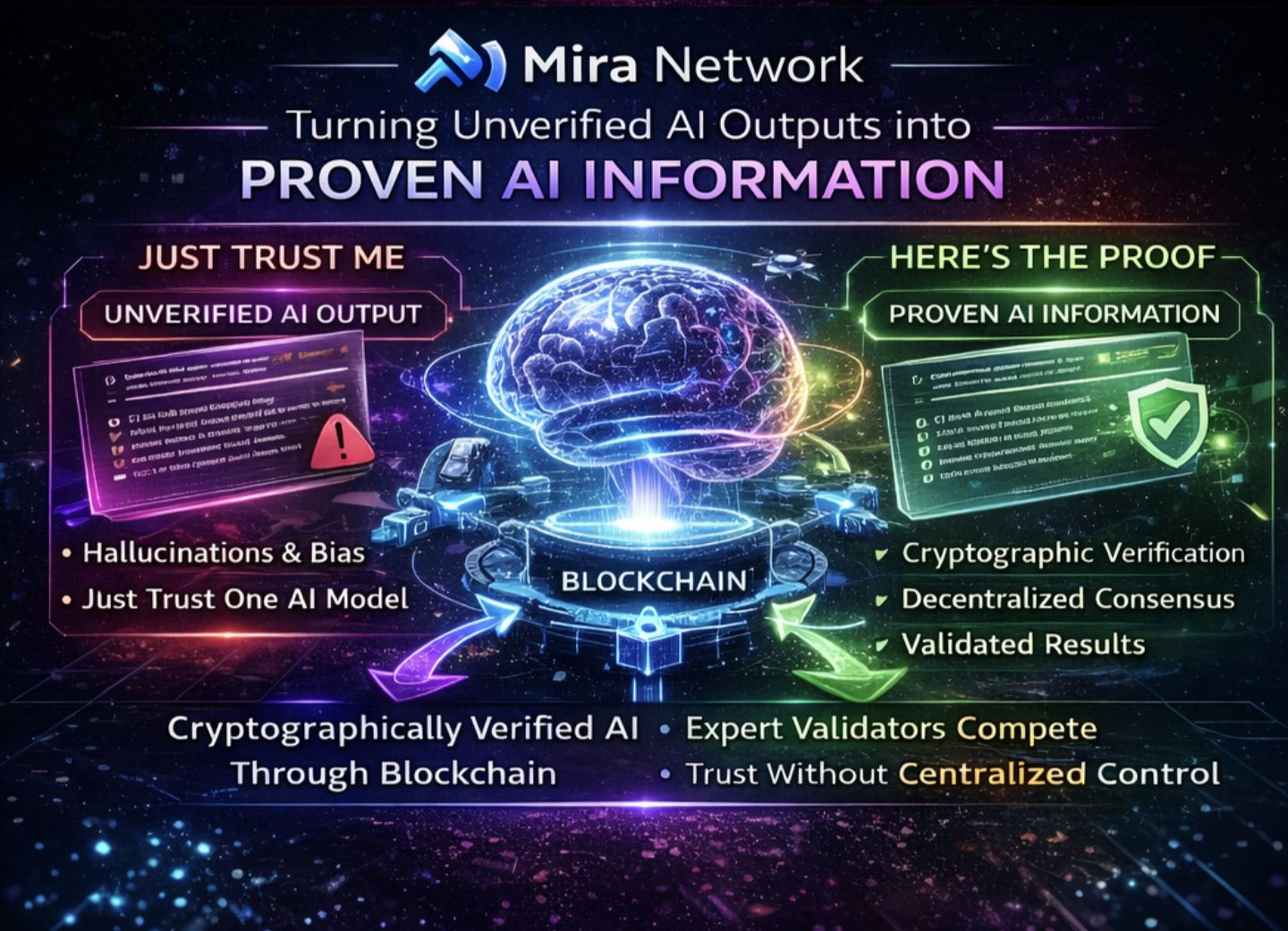

At its core, the idea is straightforward: create a layer that verifies AI outputs and records their lineage. Instead of answers appearing as isolated artifacts, they would carry receipts—traceable proof showing how a result was generated, validated, or checked.

In theory, that transforms AI output from a disposable message into something closer to documented evidence.
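To make the "receipt" idea concrete, here is a minimal sketch of what a traceable lineage record could look like. It is not Mira Network's actual design, which this piece does not describe. The names `Receipt`, `make_receipt`, and `verify_chain` are hypothetical, and a plain SHA-256 hash chain stands in for whatever verification mechanism a real network would use.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List, Optional


def _digest(payload: dict) -> str:
    """Hash a dict deterministically (sorted keys, compact separators)."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()


def _hash_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Receipt:
    # Hypothetical record layout, chosen for illustration only.
    step: str                 # e.g. "generated", "validated", "checked"
    output_hash: str          # SHA-256 of the AI output text
    prev_hash: Optional[str]  # hash of the previous receipt, None for the first
    receipt_hash: str         # hash over the three fields above


def make_receipt(step: str, output: str, prev: Optional[Receipt] = None) -> Receipt:
    """Create a receipt for one step, linked to the previous receipt if any."""
    body = {
        "step": step,
        "output_hash": _hash_text(output),
        "prev_hash": prev.receipt_hash if prev else None,
    }
    return Receipt(receipt_hash=_digest(body), **body)


def verify_chain(chain: List[Receipt], output: str) -> bool:
    """Return True only if every receipt matches the output and links correctly."""
    expected_output_hash = _hash_text(output)
    prev_hash = None
    for r in chain:
        body = {"step": r.step, "output_hash": r.output_hash, "prev_hash": r.prev_hash}
        if r.output_hash != expected_output_hash:
            return False  # receipt refers to a different output
        if r.prev_hash != prev_hash:
            return False  # chain is broken or reordered
        if r.receipt_hash != _digest(body):
            return False  # receipt fields were altered after creation
        prev_hash = r.receipt_hash
    return bool(chain)  # an empty chain proves nothing


output = "Clause 4 permits early termination."
first = make_receipt("generated", output)
second = make_receipt("validated", output, prev=first)
print(verify_chain([first, second], output))
```

The point of the sketch is the shape, not the mechanism: an output that arrives with a chain like this can be checked later, while one that arrives alone cannot. A hash chain like this only detects tampering and reordering; it says nothing about whether the answer was correct, which is the harder problem a verification network would have to solve.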

Conceptually, it makes sense.

But the part that gives me pause isn’t the idea itself. It’s the environment in which the idea has to survive.

Anyone who has spent time watching emerging technology ecosystems recognizes the cycle. A project launches with a compelling narrative. Early participants arrive because incentives reward participation. Activity metrics climb. Social feeds fill with optimism and declarations about being “early.”

Then reality appears.

Scaling challenges surface. Incentives distort behavior. Engagement proves shallower than expected. Attention shifts to the next promising narrative.

The cycle repeats.

The uncomfortable truth is that markets often reward velocity far more than they reward durability. Visibility travels faster than reliability. A concept that trends for three weeks can appear more successful than a system that quietly works for three years.

That tension is why Mira’s approach feels unusual.

Verification, provenance, and audit trails are not naturally viral concepts. They are infrastructure ideas. They belong to the same category as logging systems, compliance frameworks, and security architecture: things people rarely celebrate until the moment they’re missing.

No one wakes up excited about auditability.

They wake up excited about growth charts and adoption metrics.

Which raises the central question surrounding Mira: can something deliberately unglamorous survive in a market addicted to spectacle?

Because verification is inherently slow work.

It requires defining standards, integrating with existing tools, building systems that remain reliable under stress, and proving their value in moments when the stakes are high. None of those milestones translate easily into viral announcements.

They look boring from the outside.

Ironically, that might be exactly what makes them important.

Still, another layer of complexity emerges when incentives enter the picture. Many emerging networks use token rewards, reputation points, or participation systems to accelerate activity. In the early stages, those incentives can help bootstrap communities and encourage experimentation.

But they can also create misleading signals.

If participants are rewarded simply for interacting with a verification system, the network can accumulate impressive volumes of activity without demonstrating meaningful impact. Large numbers of verified outputs might exist, yet none of them may correspond to decisions that actually matter.

For a verification network, that scenario carries a strange irony.

Imagine constructing a courthouse and spending the entire day processing parking tickets. Technically, the system is active. Cases are moving through the pipeline. But nothing consequential is being resolved.

When I evaluate a project like Mira Network, raw activity doesn’t tell me much. What matters is whether verification becomes necessary rather than optional.

That means watching for moments when the absence of proof would create real problems.

Situations where money moves based on an AI analysis.

Where contractual decisions depend on algorithmic interpretation.

Where regulatory or legal consequences hinge on the accuracy of a system’s output.

Those are the environments where provenance stops being theoretical.

It becomes defensive infrastructure.

If Mira manages to anchor itself to use cases like that, it could transition from “interesting technology” to “required component.” But if the network remains focused primarily on low-stakes verification driven by incentives, it risks drifting into the same attention cycle that has absorbed many other promising projects.

Trust is another pressure point.

A platform built around verification carries a heavier burden than most systems. If a social application experiences outages, users complain and move on. If a meme platform misbehaves, the damage is minimal.

But when a system designed to provide certainty fails, the implications spread much further.

Verification infrastructure must behave differently under pressure. Errors cannot simply be acknowledged and forgotten. They must be documented, explained, and corrected with transparency.

Trust, in that context, accumulates through incident reports rather than marketing.

Every serious system eventually experiences stress tests. Edge cases appear. Incentives get manipulated. Infrastructure encounters scale limits. The real signal isn’t whether those moments occur—it’s how the team responds when they do.

Quiet transparency matters more than narrative control.

That’s why my perspective on Mira remains cautious rather than enthusiastic. Not because the concept lacks merit, but because the ecosystem surrounding it has a history of bending strong ideas toward short-term visibility.

I’ve watched many infrastructure projects begin with disciplined intentions and gradually drift toward engagement metrics once growth slows. The pressure to maintain attention can reshape priorities in subtle ways.

And verification cannot afford that drift.

If Mira Network is going to succeed, it will likely happen in a way that doesn’t look dramatic. There won’t be a single moment when everyone suddenly realizes the system is essential.

Instead, adoption would emerge gradually.

Organizations would begin requesting verified outputs by default. Decision pipelines would incorporate provenance checks automatically. Teams would treat undocumented AI results with skepticism.

Over time, verification would become habit.

That kind of transformation rarely produces explosive headlines. It resembles the slow normalization of other invisible technologies, such as protocols, encryption standards, and authentication layers, that eventually become fundamental to digital systems.

The challenge is surviving long enough to reach that stage.

Because patience is expensive in markets conditioned to expect rapid proof of relevance.

That’s why I’m still watching Mira rather than celebrating it.

The instinct behind the project, prioritizing proof over noise, feels correct. But instincts alone don’t determine outcomes. Execution, incentives, and timing will ultimately shape whether the network becomes foundational infrastructure or simply another thoughtful idea that struggled to compete with louder narratives.

Verification, after all, isn’t a volume game.

It’s a credibility game.

And credibility grows slowly.

If Mira can endure the quiet phase (the unglamorous integrations, the incremental improvements, the accumulation of high-stakes use cases), then it has a chance to change how people interact with AI systems.

If it can’t, the concept may remain trapped in the realm of theory.

For now, the most honest position is observation.

Because proof, when it’s working properly, shouldn’t look exciting.

Receipts should feel normal.

Audit trails should feel routine.

The fact that they don’t yet is exactly why systems like Mira Network matter, and exactly why their path forward will be difficult.

Whether the market allows something necessary to grow without demanding spectacle first is still an open question.

And until that question is answered, watching carefully might be the most reasonable response.