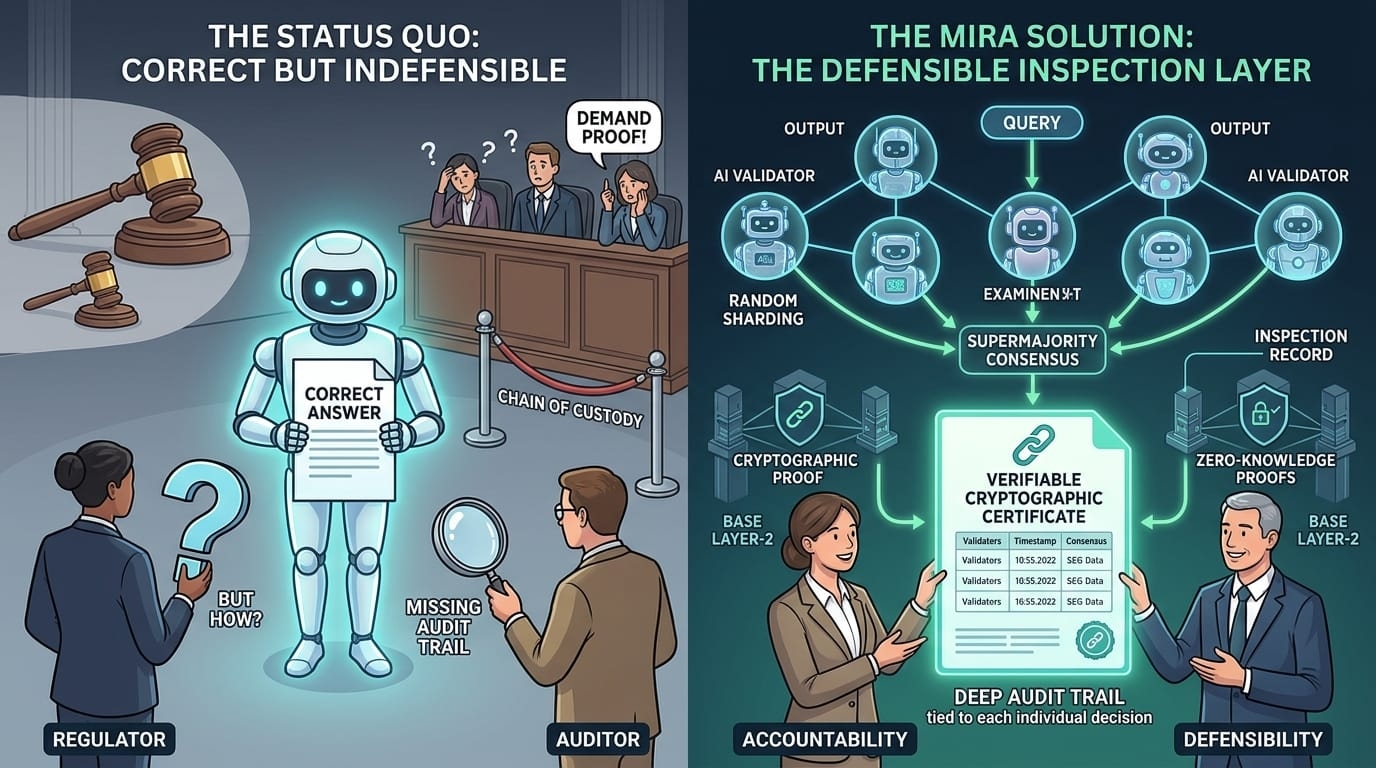

There is a quiet failure mode in artificial intelligence that rarely appears in research papers or benchmark leaderboards. It is not the kind of failure where a model produces nonsense or invents facts. In this situation, the system works. The answer is technically correct. The process functions as designed. Yet the organization that relied on the output still ends up explaining itself to regulators, auditors, or sometimes even a court.

The problem is not accuracy. The problem is accountability.

For years, the AI conversation has focused on whether models can produce correct answers. But institutions that actually deploy AI systems are discovering that correctness alone is not enough. A correct answer without a verifiable process behind it is still difficult to defend when something goes wrong. If a bank, hospital, or government agency relies on an AI output, the question regulators eventually ask is not simply whether the answer was accurate. They want to know what happened in that exact moment: who checked the result, what validation occurred, and whether there is a record proving the process took place.

That gap between correct output and defensible decision is where Mira Network enters the picture.

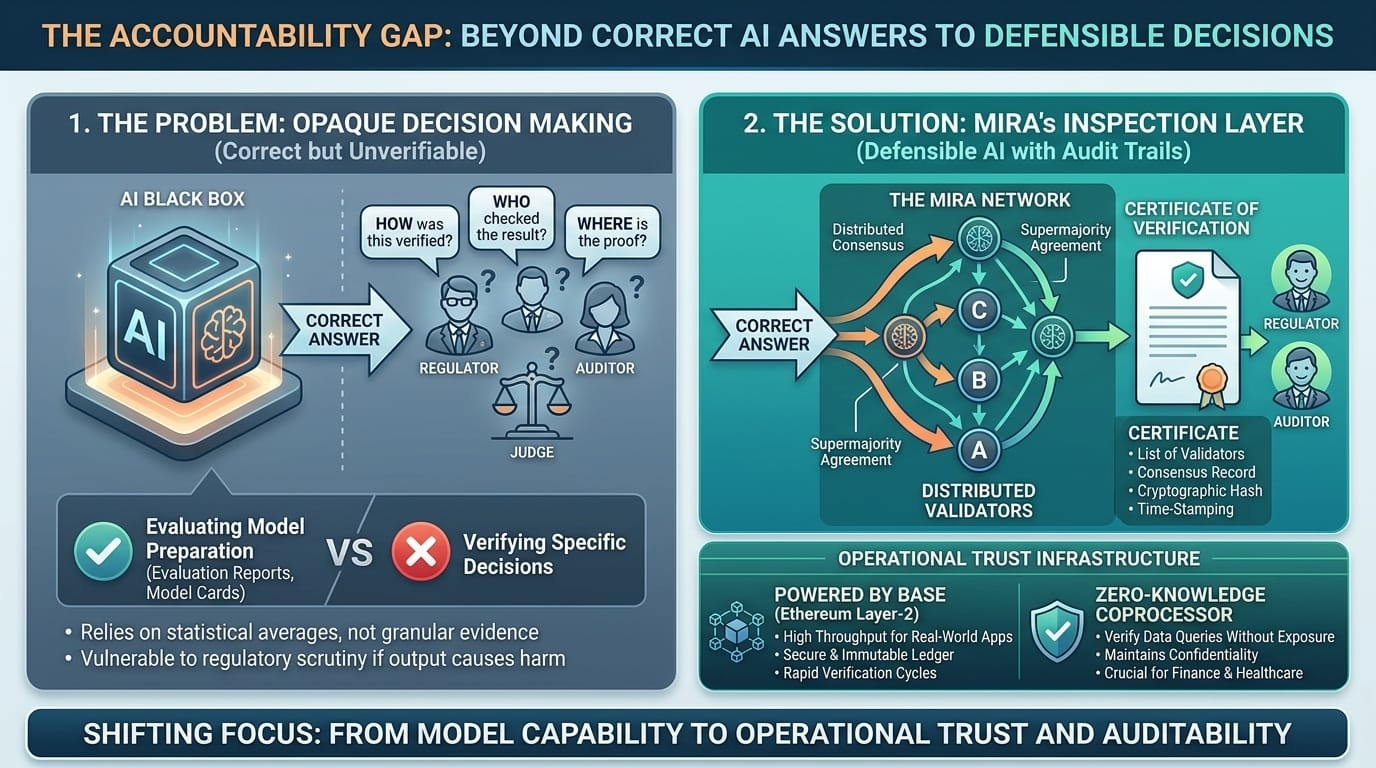

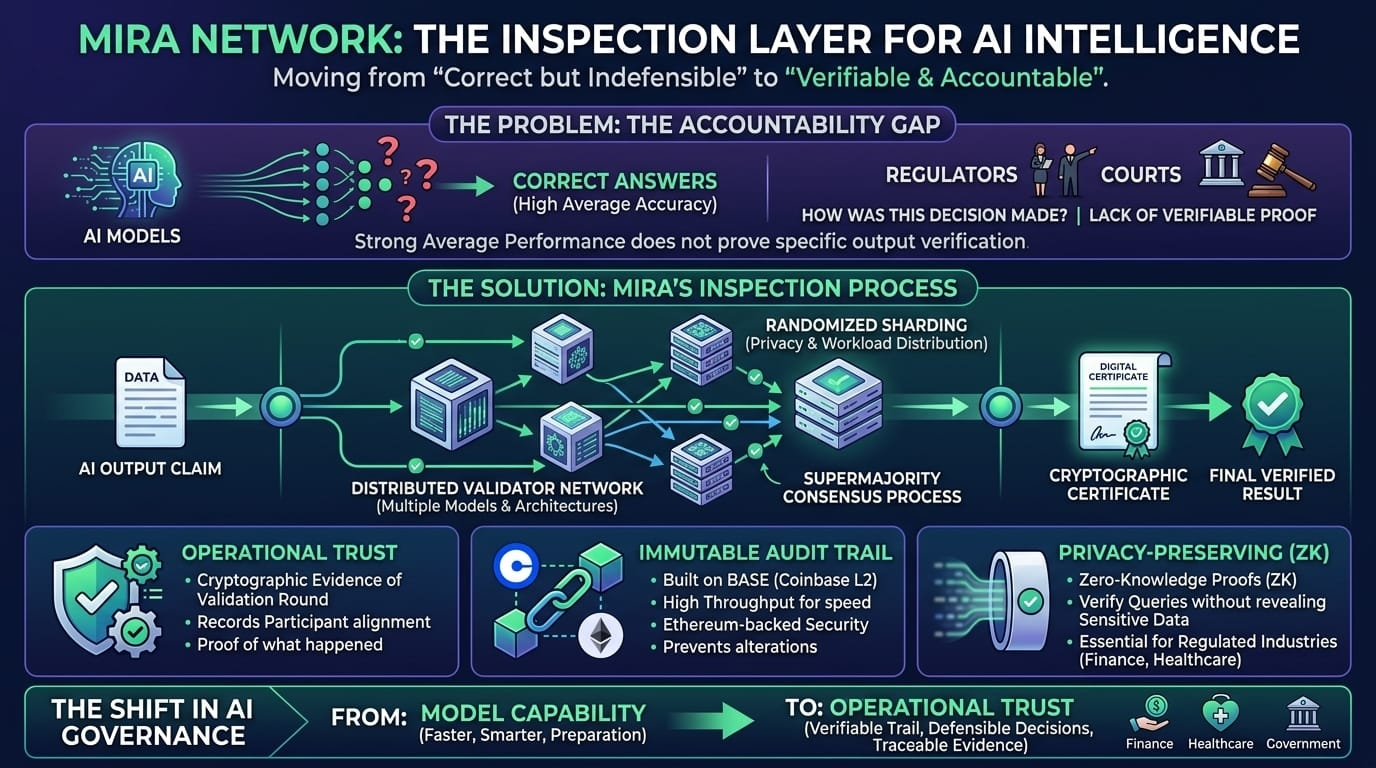

At first glance, Mira Network looks like another system designed to improve AI reliability. Instead of trusting the judgment of a single model, it routes outputs through a distributed network of validators. Multiple models, often built on different architectures and trained on different datasets, examine the same claim before a result is finalized. The logic is straightforward: an error that slips past one model is less likely to survive several independent evaluations. The aim is to reduce hallucinations and push reliability beyond what a single model can deliver on its own.

But accuracy is only the surface-level story.

The deeper idea behind Mira is not simply about making AI answers better. It is about turning every AI output into something closer to an inspection record.

To understand why that matters, it helps to look at how other industries handle trust. In manufacturing, a company does not defend product quality by saying its machines are usually calibrated correctly. Instead, each item leaving the production line can be traced through a documented inspection process. If a defect appears later, investigators can examine the record and reconstruct exactly what happened.

Artificial intelligence systems rarely work this way today. When an AI model generates an output, most organizations can only point to general evidence that the model performs well on average. They may have evaluation reports, model cards, or compliance documentation showing that the system was tested before deployment. These documents prove preparation, but they do not prove that a specific output was verified before someone acted on it.

That difference is becoming increasingly important.

Regulators around the world are beginning to demand more granular accountability for automated decision-making. Courts are also starting to ask how organizations verify AI outputs before they influence real-world outcomes. In many cases, companies that believed strong average performance metrics would satisfy oversight requirements are discovering that regulators want something much more concrete.

They want proof tied to individual decisions.

Mira Network attempts to provide that proof by transforming AI verification into a cryptographic process. Every output that moves through the network can produce a certificate that records what happened during the validation round. The record shows which validators participated, how their responses aligned, and which result ultimately reached consensus. Instead of relying on statistical claims about model performance, the system generates a verifiable artifact tied to a specific moment in time.
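To make the idea of a per-output certificate concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Mira's actual certificate format, which the description above does not specify: the class name `VerificationCertificate`, its fields, and the choice of SHA-256 over canonical JSON are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class VerificationCertificate:
    """Hypothetical record of one validation round (field names are assumptions)."""
    claim: str        # the AI output that was checked
    votes: dict       # validator id -> verdict, e.g. {"v1": "valid"}
    consensus: str    # the verdict that reached consensus
    timestamp: int    # Unix time of the validation round

    def digest(self) -> str:
        # Serialize with sorted keys so identical records always hash identically.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


cert = VerificationCertificate(
    claim="Invoice 1042 total matches ledger entry",
    votes={"v1": "valid", "v2": "valid", "v3": "valid"},
    consensus="valid",
    timestamp=1_700_000_000,
)
print(cert.digest())  # a 64-character hex string that could be anchored on-chain
```

The point of the digest is that any later change to the record, such as a flipped vote or a shifted timestamp, produces a different hash, so a stored hash can be checked against the record an auditor is shown.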

The architectural choices behind Mira reflect this focus on operational trust. The network is built on Base, Coinbase’s Ethereum Layer-2 infrastructure. This decision is less about branding and more about practicality. Verification systems need to operate fast enough to support real-world applications while still anchoring their records in a secure environment. Base provides the throughput required for rapid verification cycles, while Ethereum’s security model ensures that the resulting certificates cannot easily be altered after they are recorded.

A verification record stored on a fragile chain would defeat the entire purpose. If the underlying ledger can be reorganized or rewritten, the record becomes little more than a temporary note rather than a permanent audit trail.

Beyond the blockchain layer, Mira introduces mechanisms designed to preserve both reliability and privacy. Requests entering the system are standardized before reaching validators so that small contextual differences do not distort the evaluation process. Tasks are then distributed across nodes using randomized sharding, which prevents any single participant from seeing the entire picture while also spreading workload across the network.
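The standardize-then-shard step can be sketched roughly as follows. This is a simplified illustration, not Mira's implementation: the functions `normalize` and `shard_claims`, the whitespace-only normalization, and the idea of giving each claim to a fixed-size random subset of validators are all assumptions made for the example.

```python
import random


def normalize(text: str) -> str:
    # Stand-in for standardization: collapse runs of whitespace and trim the ends.
    return " ".join(text.split())


def shard_claims(claims, validators, per_claim, seed=None):
    """Assign each normalized claim to a random subset of validators.

    Hypothetical helper: `validators` is a list of ids, `per_claim` is the
    subset size, and `seed` makes the assignment reproducible for testing.
    """
    rng = random.Random(seed)
    return {normalize(c): rng.sample(validators, per_claim) for c in claims}


assignment = shard_claims(
    claims=["The  invoice total is 400 USD ", "The payee is ACME Ltd"],
    validators=["v1", "v2", "v3", "v4", "v5"],
    per_claim=3,
    seed=7,
)
for claim, assigned in assignment.items():
    print(claim, "->", assigned)
```

Note that plain random sampling only makes it unlikely, not impossible, for one validator to receive every claim from a request; a production system would need an explicit constraint to guarantee that property.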

When validators submit their assessments, the system aggregates the responses using a supermajority consensus process. The final certificate represents agreement across the network rather than a narrow vote. In effect, the network functions like a distributed inspection team examining each AI-generated claim.
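A supermajority rule of this kind can be sketched in a few lines. The two-thirds threshold and the function name `supermajority` are assumptions for illustration; the text above does not state what threshold the network actually uses.

```python
from collections import Counter
from fractions import Fraction


def supermajority(votes, threshold=Fraction(2, 3)):
    """Return the verdict backed by at least `threshold` of validators, else None.

    `votes` maps validator id -> verdict. The 2/3 default is an assumed value.
    """
    if not votes:
        return None
    verdict, count = Counter(votes.values()).most_common(1)[0]
    # Exact fractions avoid floating-point surprises right at the threshold.
    return verdict if Fraction(count, len(votes)) >= threshold else None


print(supermajority({"v1": "valid", "v2": "valid", "v3": "invalid"}))  # valid
print(supermajority({"v1": "valid", "v2": "invalid"}))                 # None
```

Returning `None` when no verdict clears the threshold keeps a split vote from being recorded as agreement, which matches the idea that a certificate should reflect broad consensus rather than a narrow vote.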

Another piece of the system quietly pushes Mira closer to enterprise infrastructure. The network includes a zero-knowledge coprocessor designed to verify database queries without revealing the underlying data. This capability matters far more to institutions than it does to casual developers. Organizations operating under privacy laws or strict confidentiality rules cannot expose sensitive datasets simply to prove that an AI-generated answer was correct. Zero-knowledge verification allows them to demonstrate accuracy while keeping the original information hidden.

For sectors such as finance, healthcare, and government administration, that difference can determine whether an AI system is merely an experiment or something that can be deployed at scale.

Still, Mira Network does not remove every challenge surrounding AI governance. Verification adds a step to the decision process, and that inevitably introduces some latency. In environments where milliseconds matter, any system requiring distributed consensus must balance speed with reliability. There are also unresolved legal questions. If a network of validators approves an output that later causes harm, the question of liability does not disappear simply because the verification process was decentralized.

Technology can enforce transparency, but it cannot replace legal frameworks.

Even with those limitations, the direction Mira represents reflects a broader shift in how institutions are beginning to approach artificial intelligence. The early era of AI adoption focused heavily on model capability. Organizations wanted systems that were smarter, faster, and more accurate than previous generations.

The next phase is about something different.

As AI systems become more powerful, the scrutiny surrounding their decisions increases. Institutions that want to rely on automated intelligence must be able to explain not just what their systems do, but how every important output was verified before it influenced an action.

In that environment, the winners will not necessarily be the companies with the most confident models. They will be the ones capable of producing a clear trail of evidence showing what was checked, when it was checked, and how the final decision emerged.

Accuracy may begin the conversation about artificial intelligence.

But accountability is what ultimately determines whether anyone is willing to trust it.