Over the past few years, AI has made incredible progress. Models can write essays, generate code, and even create images or videos. But the more I explore how these systems work, the more I realize that intelligence without reliability is a fragile foundation. Like a building, AI needs a solid base to stand on.

While studying the ideas presented in the Mira Whitepaper, one theme kept coming back to me: AI does not fail because it lacks knowledge; it fails because it lacks verification.

Large models generate responses based on probabilities learned from their training data. That means they can produce answers that sound convincing even when they are wrong. This shows up as hallucination, where the model invents information, or as bias, where the output reflects patterns in the training data rather than objective truth. No matter how large the model becomes, these issues never completely disappear.

For me, this highlights a core problem: we have spent years improving AI generation, but we have not invested enough in AI verification. If we want AI to be reliable, verification needs the same attention.

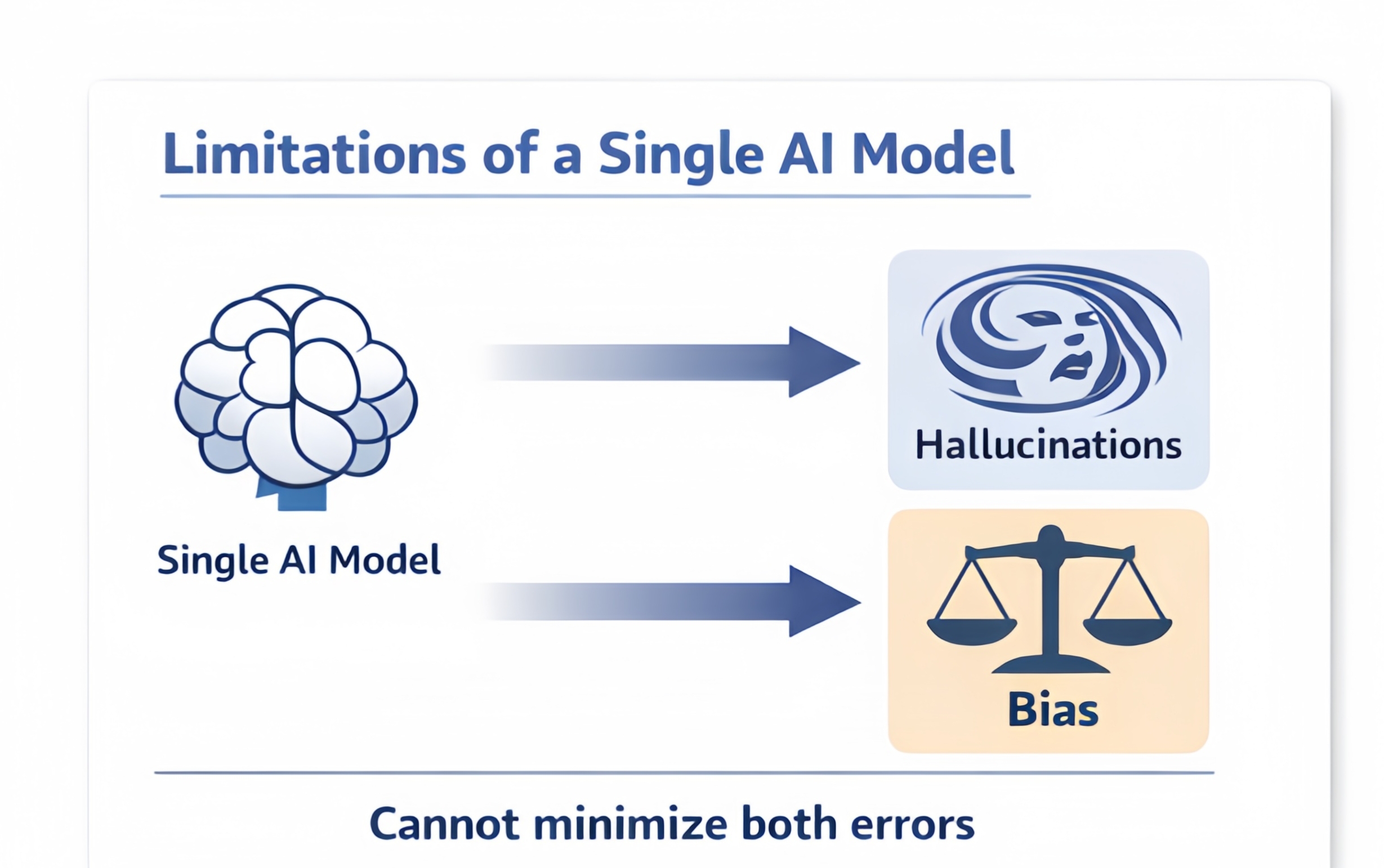

The Problem With Relying on a Single Model

* One of the insights I found most interesting is that any single AI system has an error boundary: a floor below which its error rate cannot go.

* Even the most advanced model cannot completely eliminate both hallucination and bias at the same time.

If developers try to reduce hallucinations by narrowing the training data, they often introduce bias. If they broaden the data to reduce bias, inconsistencies start to appear in the model's answers. It becomes a balancing act with no perfect solution.

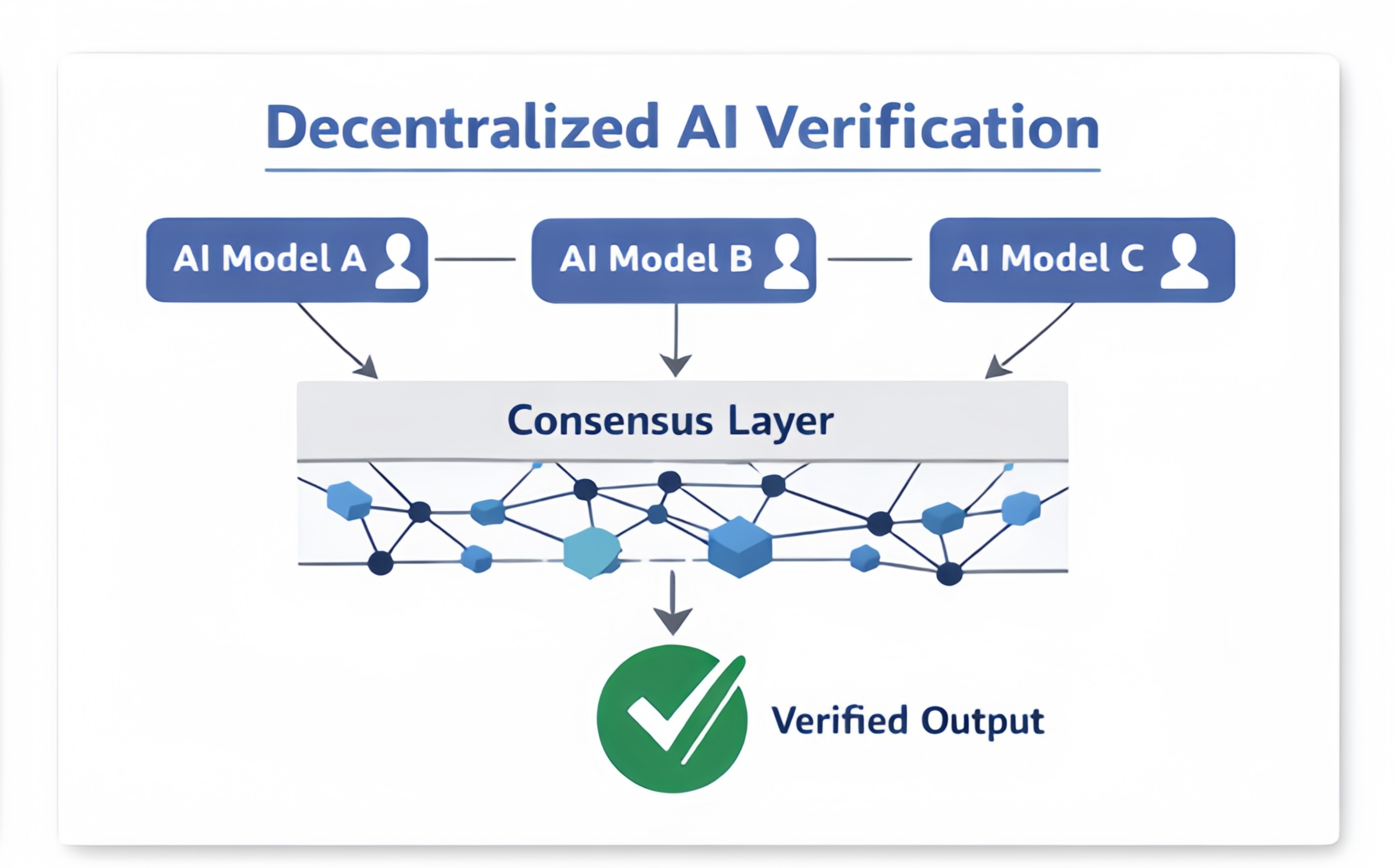

This is why I think collective validation is so important. Instead of trusting one model to decide whether something is correct, Mira introduces a system where multiple independent AI models evaluate the same claim. The final outcome comes from consensus rather than authority.

That shift feels similar to how distributed systems improved trust in finance and data infrastructure. No single party has the final say; it's like a group of people verifying information together.
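To make the consensus idea concrete, here is a minimal Python sketch of how verdicts from several verifiers could be combined. It is not Mira's implementation: the `consensus` function, the string verdicts, and the `threshold` parameter are hypothetical, and the rule is simply "accept the leading verdict only if it holds more than a given share of the votes."

```python
from collections import Counter
from typing import Optional


def consensus(verdicts: list[str], threshold: float = 0.5) -> Optional[str]:
    """Return the most common verdict if its share exceeds `threshold`, else None.

    Hypothetical rule for illustration; `threshold` is an assumed parameter.
    """
    if not verdicts:
        return None
    verdict, count = Counter(verdicts).most_common(1)[0]
    # The leading verdict must hold strictly more than `threshold` of all votes.
    return verdict if count / len(verdicts) > threshold else None


# Three hypothetical verifier models judge the same claim.
print(consensus(["true", "true", "false"]))                 # true
print(consensus(["true", "false"]))                         # None (a tie is not consensus)
print(consensus(["true", "true", "false"], threshold=2/3))  # None (2 of 3 is not more than 2/3)
```

Raising `threshold` trades coverage for confidence: more claims end up without a verdict, but the ones that pass have broader agreement behind them.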

Breaking Information Into Pieces

Another concept that caught my attention is how the system transforms content before verification happens.

* Rather than asking models to judge an entire article or paragraph, the network breaks information into small, clear claims.

* Each claim becomes a question that verifier models can evaluate independently.

This solves a subtle technical problem: different models interpret the same text differently. By converting content into standardized claims, the network ensures that every verifier is analyzing exactly the same statement.

To me, this design shows that reliability in AI is not only about better models; it's also about better problem framing.
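As a toy illustration of claim decomposition, the sketch below splits text at sentence boundaries and wraps each piece in the same yes/no question, so every verifier receives an identical prompt. This is an assumption-laden stand-in: `to_claims` and `to_question` are hypothetical names, and a real transformation step would have to handle compound sentences, pronouns, and context that a regex cannot.

```python
import re


def to_claims(text: str) -> list[str]:
    """Naively split text into sentence-level claims (toy stand-in for model-based extraction)."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    # Drop empty pieces and trailing punctuation so each claim is a bare statement.
    return [s.rstrip(".!?") for s in sentences if s]


def to_question(claim: str) -> str:
    """Frame a claim as the same yes/no question every verifier receives."""
    return f"Is the following statement true? {claim}."


text = "The Earth orbits the Sun. The Moon orbits the Earth."
for claim in to_claims(text):
    print(to_question(claim))
# Is the following statement true? The Earth orbits the Sun.
# Is the following statement true? The Moon orbits the Earth.
```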

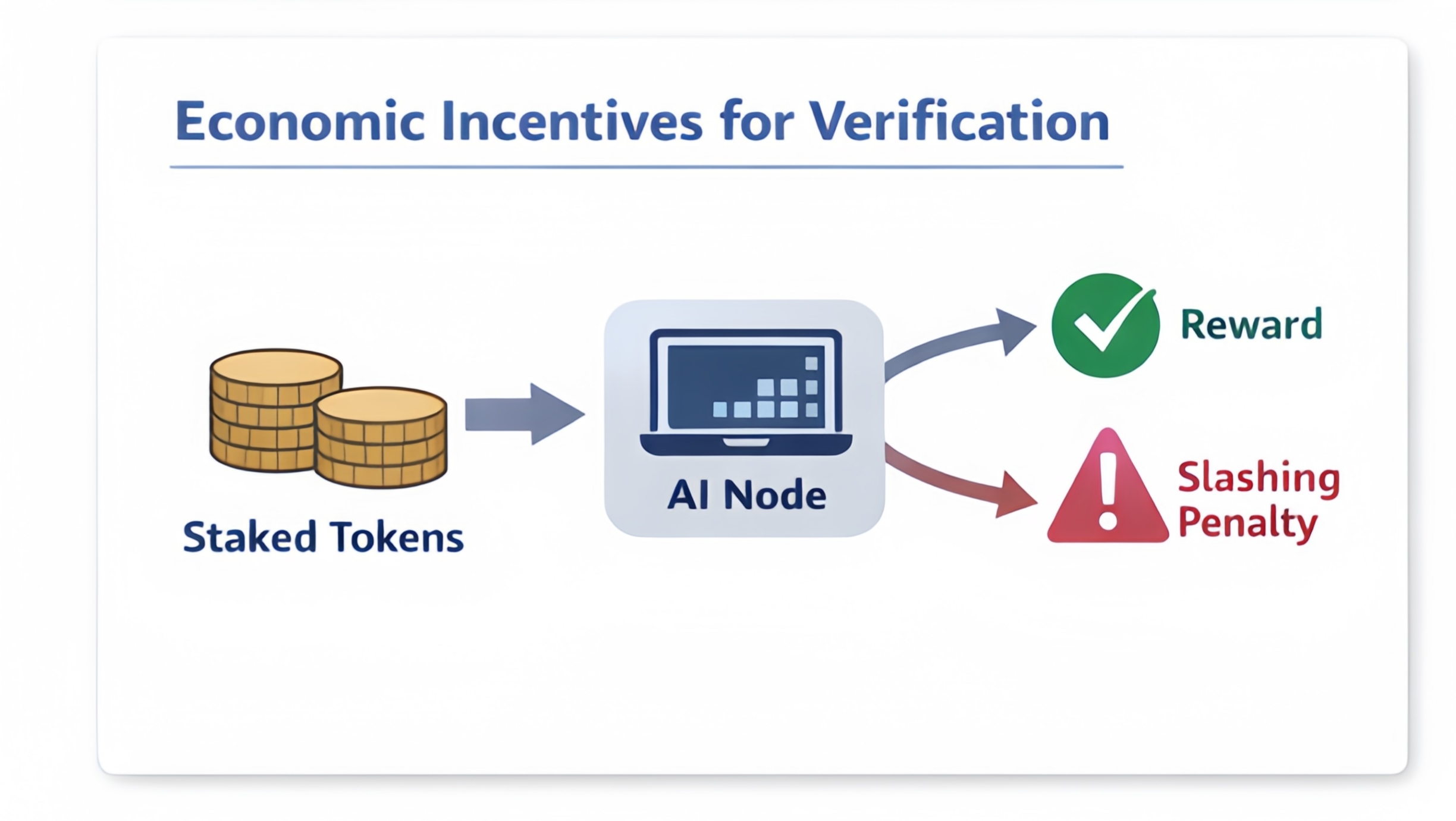

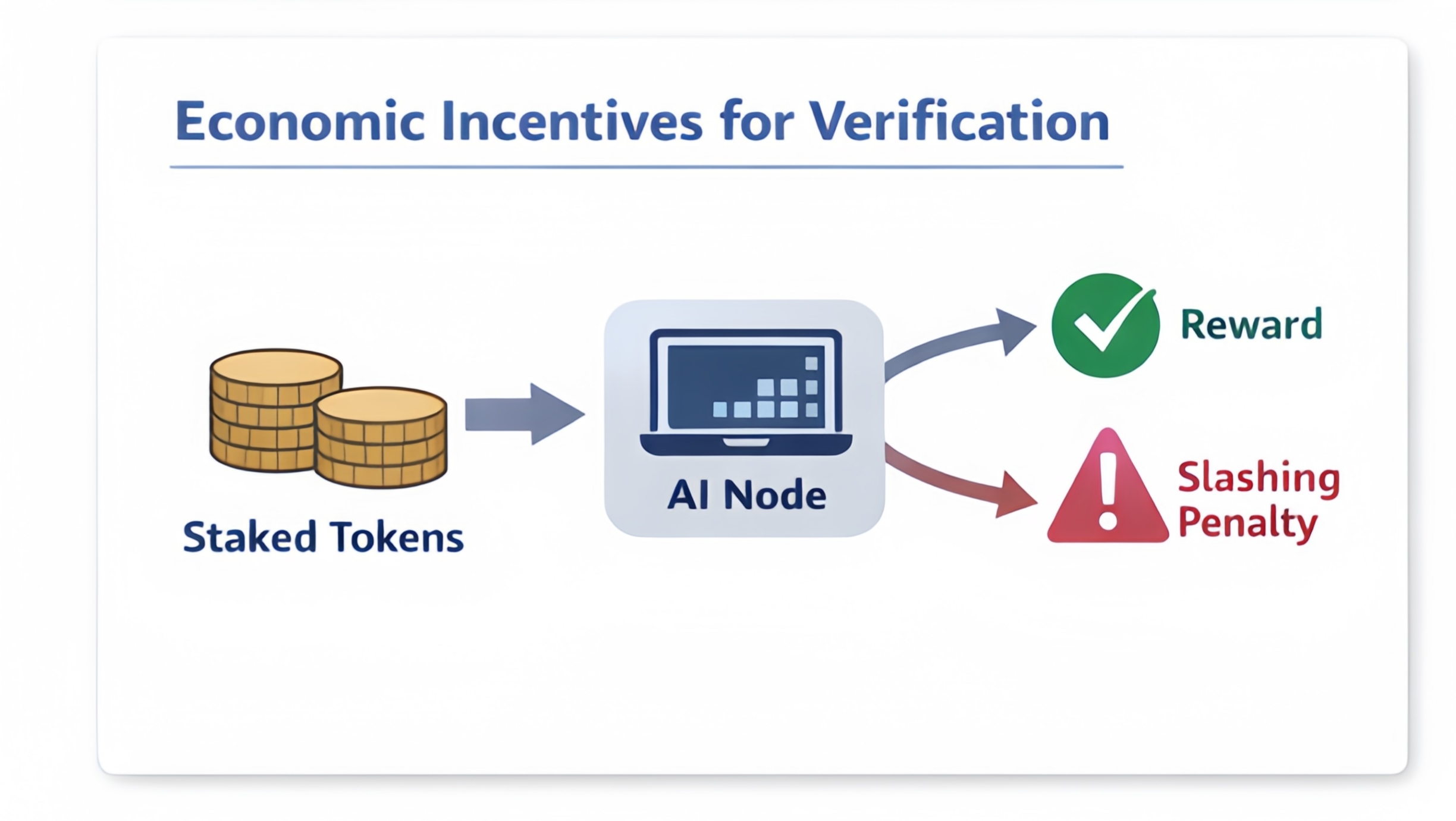

Incentives That Encourage Honest Behavior

What also makes this system interesting is the economic layer behind it. Nodes that verify claims must stake value before participating. If their answers consistently deviate from consensus or show signs of manipulation, they risk losing their stake. This creates a strong incentive for operators to perform real verification instead of guessing.

Traditional Proof-of-Work systems reward effort, even if the computation itself has no practical meaning. In contrast, this network requires meaningful computation: AI inference used to validate information.
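To picture the stake-and-slash incentive, here is a minimal sketch under assumed rules. The `Node` class, `settle_round`, the three-strikes `max_deviations` limit, and the 50% `slash_fraction` are all hypothetical choices for illustration, not values taken from the whitepaper.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float
    deviations: int = 0  # consecutive rounds in which this node disagreed with consensus


def settle_round(nodes: list[Node], answers: dict[str, str], consensus: str,
                 max_deviations: int = 3, slash_fraction: float = 0.5) -> None:
    """Update each node after one verification round (hypothetical slashing rule)."""
    for node in nodes:
        if answers[node.name] == consensus:
            node.deviations = 0  # agreement resets the streak
        else:
            node.deviations += 1
            if node.deviations >= max_deviations:
                node.stake *= 1 - slash_fraction  # lose part of the stake
                node.deviations = 0


nodes = [Node("honest", 100.0), Node("guesser", 100.0)]
for _ in range(3):
    settle_round(nodes, {"honest": "true", "guesser": "false"}, consensus="true")
print([(n.name, n.stake) for n in nodes])  # [('honest', 100.0), ('guesser', 50.0)]
```

Resetting the counter on agreement means an occasional honest disagreement goes unpunished, while a node that keeps guessing loses stake again and again.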

The Role of Model Diversity

Another thing I appreciate is the emphasis on diversity. Different AI models are trained on different datasets, architectures, and optimization strategies. Individually, these differences can introduce bias. Combined, they can balance each other out.

A decentralized network naturally allows this diversity to emerge. Independent operators run verifier models, which reduces the risk of a single perspective dominating the verification process.
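Why diversity matters can be sketched with a simple probability calculation. Assume, idealistically, that each verifier is wrong with the same probability `p` and that their errors are independent. The chance that a strict majority of `n` verifiers is wrong is then a binomial tail, and it shrinks quickly as `n` grows. The function name `majority_error` is hypothetical.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n verifiers is wrong.

    Assumes each verifier errs independently with probability p
    (an idealized assumption; real models' errors are often correlated).
    """
    k_min = n // 2 + 1  # fewest wrong verifiers that still form a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


# Odd n avoids ties. With p = 0.2:
for n in (1, 3, 5, 9):
    print(n, round(majority_error(n, 0.2), 4))
# 1 0.2
# 3 0.104
# 5 0.0579
# 9 0.0196
```

The independence assumption does the heavy lifting here: models trained on overlapping data tend to make correlated mistakes, which erodes this benefit. That is why diverse, independently operated verifiers matter, not just more of them.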

A Step Toward Reliable Autonomous AI

What excites me most about this approach is its long-term implication. Today, AI systems still require human supervision because their outputs cannot always be trusted. If a robust verification layer becomes part of AI infrastructure, that dynamic could change.

Instead of humans constantly checking AI outputs, machines could verify each other through decentralized consensus.

My Final Reflection

After going through these ideas, my biggest takeaway is simple: the future of AI may depend as much on verification networks as it does on better models. Generation made AI powerful. Verification might be what makes AI trustworthy.

If that vision succeeds, we could eventually see AI systems that not only produce information quickly but also provide cryptographic proof that the information has been verified. In a world increasingly driven by machine-generated knowledge, that kind of trust infrastructure could become incredibly valuable.

#Mira @Mira - Trust Layer of AI $MIRA