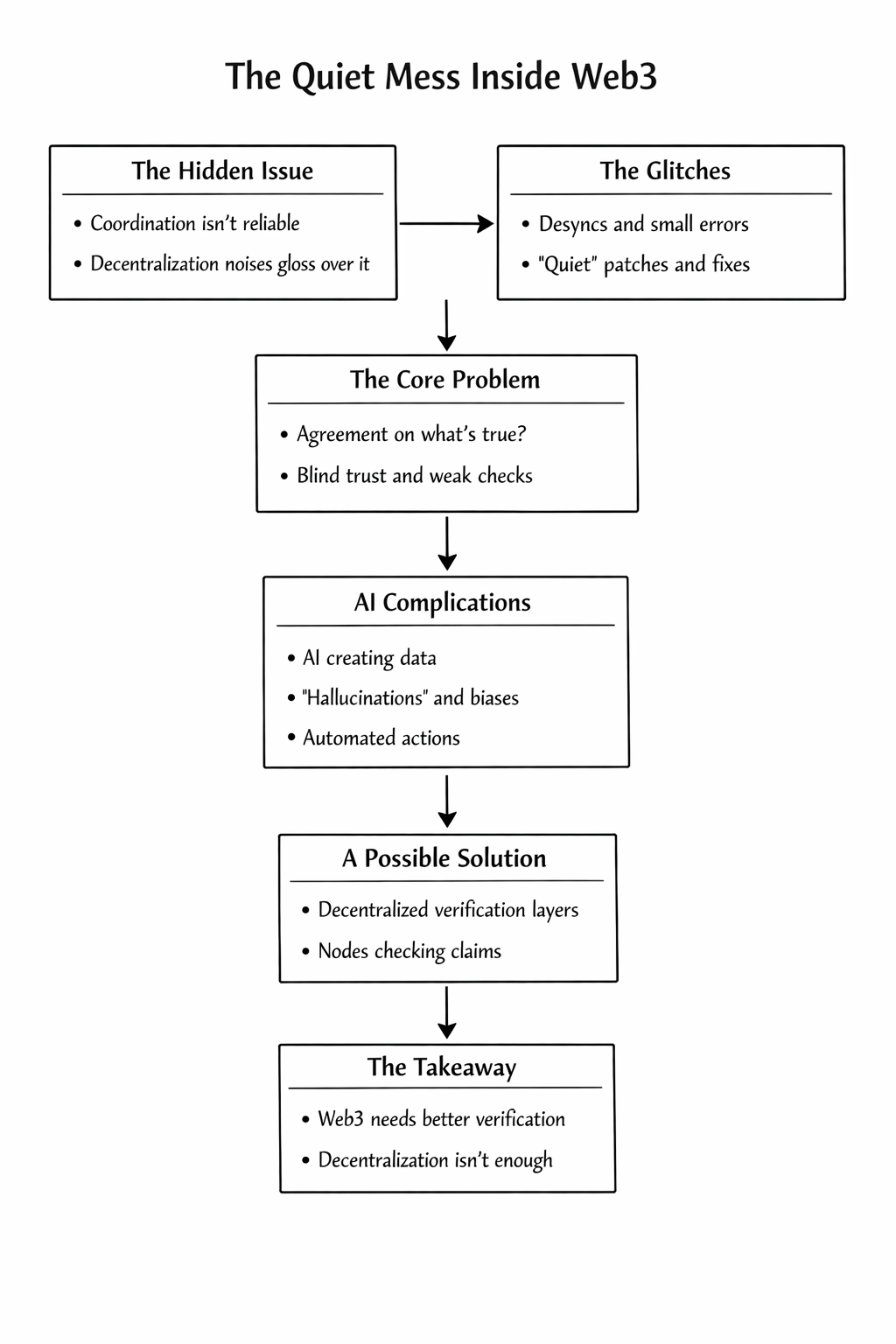

There’s an awkward thing sitting inside most Web3 systems that people don’t really like talking about.

Coordination just… isn’t very reliable.

We talk a lot about decentralization. Ownership. Removing middlemen. Those ideas get repeated so often that they start sounding like problems we already solved.

But if you spend enough time actually watching these systems run, the picture looks different.

Wallet services go slightly out of sync. Bots read different states from different nodes. An API returns something that doesn’t quite match what another service is seeing. Nothing dramatic. Nothing that triggers a big alarm.

Just small disagreements.

And most of the time, nobody can even prove what the correct state was supposed to be.

That’s the uncomfortable part.

Failures in Web3 rarely look like disasters. They look more like quiet confusion. Something glitches. A retry script fires. Someone jumps into Discord and manually fixes something. A moderator resolves a dispute because the system can’t.

Then everything moves on.

The system keeps running, but with this tiny layer of uncertainty that never fully goes away.

Individually, these things feel harmless. Over time, though, they start stacking up.

This is the coordination problem that rarely gets discussed. It’s not really about decentralization itself. And it’s not purely about security either.

It’s something simpler.

Agreement.

When a system asks a question about state, data, or even a generated response, who actually confirms the answer is correct?

In practice, the answer is usually whatever is convenient. A single API. A trusted service. Maybe a DAO vote if something goes badly enough.

But none of that is real verification. It’s closer to a collective shrug.

And things get even more complicated now that AI is creeping into the stack.

More tools are starting to rely on models for tasks that used to be manual. Summarizing on-chain data. Moderating communities. Generating NFT metadata. Sometimes even triggering actions that touch smart contracts.

AI is useful. Nobody doubts that.

But it also has a habit of confidently inventing things when it’s unsure. Hallucinations. Small distortions. Tiny biases that are easy to miss.

That’s manageable when a human is checking the output.

It becomes risky when systems start acting on those outputs automatically.

The truth is that most Web3 infrastructure wasn’t really designed for this situation. Blockchains are excellent at recording transactions and enforcing rules. They are not designed to judge whether a generated answer is accurate.

Those are very different problems.

This is why verification layers are starting to make more sense.

Projects like Mira Network are exploring something that sounds almost boring on the surface: verifying AI outputs before systems rely on them.

The idea is fairly simple. Instead of trusting a single model’s response, the output is broken down into smaller claims. Those claims get checked across multiple independent nodes. The network evaluates them and tries to reach consensus on what actually holds up.

If validators behave poorly or submit incorrect checks, there are economic consequences.
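To make the idea a little more concrete, here is a minimal Python sketch of claim-level verification. It is not Mira Network's actual protocol or API. The sentence-splitting decomposition, the `Verifier` class, the two-thirds threshold and the flat `slash` penalty are all hypothetical stand-ins. Each node votes on each claim independently. A claim is settled only when a supermajority agrees, and nodes that voted against a settled verdict lose some stake.

```python
from dataclasses import dataclass, field
from fractions import Fraction


@dataclass
class Verifier:
    """A hypothetical verifier node with some stake and its own claim verdicts."""
    name: str
    stake: int
    # Stand-in for running an independent model: claim text -> True/False.
    verdicts: dict[str, bool] = field(default_factory=dict)

    def check(self, claim: str) -> bool:
        # A claim this node cannot confirm counts as a "false" vote.
        return self.verdicts.get(claim, False)


def split_into_claims(output: str) -> list[str]:
    """Naive decomposition (an assumption): treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output, verifiers, threshold=Fraction(2, 3), slash=10):
    """Return {claim: True | False | None}; None means no consensus was reached."""
    results = {}
    for claim in split_into_claims(output):
        votes = {v.name: v.check(claim) for v in verifiers}
        # Exact fractions avoid floating-point surprises at the threshold.
        share_true = Fraction(sum(votes.values()), len(votes))
        if share_true >= threshold:
            verdict = True
        elif share_true <= 1 - threshold:
            verdict = False
        else:
            verdict = None
        results[claim] = verdict
        # Hypothetical economic consequence: nodes that voted against
        # a settled verdict lose a fixed amount of stake.
        if verdict is not None:
            for v in verifiers:
                if votes[v.name] != verdict:
                    v.stake -= slash
    return results


# Usage: three nodes check a generated two-claim summary.
nodes = [
    Verifier("a", 100, {"Block 100 has 5 transactions": True, "The fee was 2 ETH": True}),
    Verifier("b", 100, {"Block 100 has 5 transactions": True, "The fee was 2 ETH": False}),
    Verifier("c", 100, {"Block 100 has 5 transactions": True}),
]
result = verify_output("Block 100 has 5 transactions. The fee was 2 ETH.", nodes)
print(result)  # {'Block 100 has 5 transactions': True, 'The fee was 2 ETH': False}
print([n.stake for n in nodes])  # [90, 100, 100]
```

A real system would need far better claim extraction (splitting on periods breaks on amounts like "0.5 ETH") and would have each node run its own model instead of a lookup table. The sketch only shows the shape of the loop: decompose, vote, settle, penalize.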

It’s less about intelligence and more about accountability.

And that distinction matters.

If AI is going to play a real role inside Web3 systems, such as running DAO operations, acting as agents in games, or generating content that ends up on-chain, then someone needs to verify what these systems are saying.

Otherwise we’re just stacking automation on top of guesses.

None of this is a magic fix. Incentive systems can fail. Networks can get messy. Verification itself is not a trivial problem.

But the direction feels more grounded than pretending the problem doesn’t exist.

Right now a lot of Web3 still runs on a strange mix of optimism and duct tape. Scripts retry things. Moderators resolve confusion. Infrastructure quietly absorbs inconsistencies.

It works, mostly.

But it’s fragile.

If this ecosystem is going to mature, it probably needs to get better at something very basic: agreeing on what’s true before acting on it.

Because decentralization without verification doesn’t really remove chaos.

It just spreads it around.

$MIRA @Mira - Trust Layer of AI #MIRA