Recently, I have been examining Mira Network and the $MIRA token from a technological and infrastructure perspective rather than focusing only on its market price. What interests me most is how the network is designed, how its internal systems function, and what role the token plays within the broader ecosystem.

Artificial intelligence is evolving at an incredible pace. AI systems today can generate impressive insights, automate tasks, and support complex decision-making processes. However, alongside these advances, a serious issue persists: reliability.

AI systems can sometimes produce hallucinations, biased outputs, or inconsistent results. In casual or entertainment applications, this may not cause major harm, but in environments where decisions have real consequences, the risks become significant. Financial services, healthcare, legal analysis, and policy decisions all require a much higher level of certainty than current AI systems can consistently provide.

This challenge is part of the reason Mira Network was developed. The project focuses on transforming AI outputs into verifiable information rather than simply accepting the result of a single model.

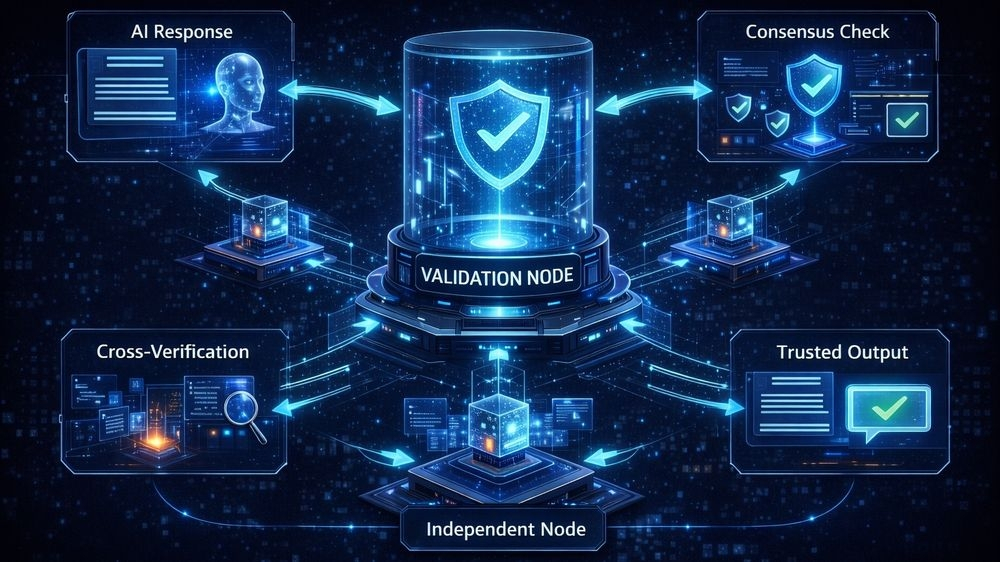

The concept behind Mira is relatively straightforward but powerful. Instead of relying on one AI model to generate and validate an answer, Mira breaks down complex AI outputs into individual verifiable claims. These claims are then distributed across a network where multiple AI systems participate in verifying the accuracy of the information.

Through this process, the system introduces an additional layer of verification that is often missing in traditional AI architectures. Rather than trusting a single system, the network creates a collaborative validation mechanism.
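Here is a minimal Python sketch of claim-level verification that makes the idea concrete. It is not Mira's implementation or API. The function names (`split_into_claims`, `verify_output`), the one-sentence-per-claim split, and the two-thirds agreement threshold are assumptions for illustration. Each "verifier" is just a Python function standing in for an independent AI model.

```python
import re
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical stand-in for an independent AI model: returns True if it judges the claim accurate.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    # Naive assumption: one sentence = one claim. Real claim extraction is much harder.
    parts = re.split(r"(?<=[.!?])\s+", output.strip())
    return [p for p in parts if p]


def verify_output(output: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> List[ClaimResult]:
    """Send every claim to every verifier; a claim passes if the share of
    approving verifiers reaches the (hypothetical) threshold."""
    if not verifiers:
        raise ValueError("need at least one verifier")
    results = []
    for claim in split_into_claims(output):
        votes_for = sum(1 for verify in verifiers if verify(claim))
        verified = votes_for / len(verifiers) >= threshold
        results.append(ClaimResult(claim, votes_for, len(verifiers), verified))
    return results


if __name__ == "__main__":
    known = {"Paris is the capital of France."}
    verifiers = [
        lambda c: c in known,  # a "strict" model
        lambda c: True,        # a model that approves everything
        lambda c: c in known,  # a second strict model
    ]
    text = "Paris is the capital of France. The Moon is made of cheese."
    for r in verify_output(text, verifiers):
        print(f"{r.verified} ({r.votes_for}/{r.votes_total}): {r.claim}")
    # True (3/3): Paris is the capital of France.
    # False (1/3): The Moon is made of cheese.
```

The permissive second verifier shows why one model's approval is not enough on its own: the false claim collects one vote out of three and fails the threshold.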

One of the key advantages of this approach is transparency. The results of the verification process can be recorded on a blockchain, creating a traceable record of how a conclusion was reached. Developers and organizations can review these records to understand the verification path behind an AI-generated result.

This level of transparency is especially important in sectors where accountability and auditability are essential.
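The auditability a blockchain offers comes largely from one property: each record commits to the record before it, so history cannot be quietly rewritten. The sketch below illustrates only that property with a hash-chained log built on Python's standard `hashlib` and `json` modules. It is hypothetical (the `VerificationLog` class and the record fields are invented here), has no consensus, networking, or real chain, and does not describe how Mira actually stores its records.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: Dict[str, Any], prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class VerificationLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, record: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        stored = dict(record)  # shallow copy so later changes to the caller's dict don't alter the log
        h = record_hash(stored, prev)
        self.entries.append({"record": stored, "prev": prev, "hash": h})
        return h

    def is_intact(self) -> bool:
        # Recompute every hash from the start; any edited record or broken link fails.
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev or record_hash(entry["record"], prev) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = VerificationLog()
    log.append({"claim": "Paris is the capital of France.", "votes_for": 3, "votes_total": 3})
    log.append({"claim": "The Moon is made of cheese.", "votes_for": 1, "votes_total": 3})
    print(log.is_intact())  # True
    log.entries[1]["record"]["votes_for"] = 3  # rewrite history
    print(log.is_intact())  # False
```

An auditor only needs the entries themselves to re-run `is_intact`. A real blockchain adds what this sketch lacks: many independent parties holding copies, so no single operator can rewrite the whole chain and its hashes at once.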

Another interesting aspect of Mira Network is its neutral design. The system is not built around a single AI provider or model. Instead, it is designed to work with multiple AI systems from different developers. By allowing various models to evaluate and verify each other's outputs, the network aims to reduce dependence on any single source of information.

In theory, this structure could significantly improve the reliability of AI-generated insights.
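One way to read "reduce dependence on any single source" is to count agreement per provider rather than per model, so that a provider running many models cannot outvote everyone else. The following sketch assumes that design. `provider_consensus`, the provider labels, and the `min_providers` and `threshold` defaults are hypothetical and do not describe documented Mira behavior.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def provider_consensus(
    votes: List[Tuple[str, bool]],
    min_providers: int = 3,
    threshold: float = 2 / 3,
) -> bool:
    """votes: (provider_name, verdict) pairs from individual models.
    Each provider casts one vote (the majority of its own models), and the
    claim passes only if enough distinct providers took part and agreed."""
    by_provider: Dict[str, List[bool]] = defaultdict(list)
    for provider, verdict in votes:
        by_provider[provider].append(verdict)
    if len(by_provider) < min_providers:
        return False  # too few independent sources to decide
    # A tie inside one provider counts as a rejection.
    provider_votes = [sum(vs) * 2 > len(vs) for vs in by_provider.values()]
    return sum(provider_votes) / len(provider_votes) >= threshold
```

With five models from provider A approving a claim and single models from B and C rejecting it, a flat model count gives 5/7 approval, but `provider_consensus` sees only one of three providers in favor and rejects the claim.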

However, as with any emerging infrastructure, several important questions remain. Verification networks need strong incentive mechanisms to keep validators participating honestly. Without proper incentives, the system could struggle to maintain reliable participation.
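Incentive design is where the details matter most. The settlement round below is a rough, hypothetical sketch, not Mira's actual token mechanics. It assumes validators lock a stake, the stake-weighted majority decides a claim, validators on the winning side share a reward pool in proportion to stake, and those on the losing side are slashed by a fixed fraction. `Validator`, `settle_round`, `reward_pool`, and `slash_rate` are illustrative names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Validator:
    stake: float  # tokens the validator has locked as collateral (hypothetical)


def settle_round(
    validators: Dict[str, Validator],
    votes: Dict[str, bool],
    reward_pool: float,
    slash_rate: float = 0.1,
) -> bool:
    """Settle one claim: the stake-weighted majority decides the outcome,
    winners split reward_pool pro rata by stake, losers lose slash_rate of stake."""
    yes_stake = sum(validators[v].stake for v, vote in votes.items() if vote)
    no_stake = sum(validators[v].stake for v, vote in votes.items() if not vote)
    outcome = yes_stake > no_stake  # ties resolve to "not verified"
    winning_stake = yes_stake if outcome else no_stake

    for v, vote in votes.items():
        val = validators[v]
        if vote == outcome:
            if winning_stake > 0:
                val.stake += reward_pool * val.stake / winning_stake
        else:
            val.stake -= val.stake * slash_rate
    return outcome


if __name__ == "__main__":
    validators = {"a": Validator(100.0), "b": Validator(100.0), "c": Validator(50.0)}
    outcome = settle_round(validators, {"a": True, "b": True, "c": False}, reward_pool=10.0)
    print(outcome, {name: v.stake for name, v in validators.items()})
    # True {'a': 105.0, 'b': 105.0, 'c': 45.0}
```

Rewarding agreement with the majority is only a proxy for honesty: a coordinated majority would be paid while honest dissenters are slashed, which is one form of the collusion risk discussed in the next paragraph.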

There are also challenges related to scalability and governance. As the network grows, it must maintain efficiency while preventing risks such as validator collusion or manipulation. Governance frameworks will play an important role in determining how the system evolves and adapts over time.

Despite these challenges, Mira Network represents an interesting shift in the conversation around artificial intelligence. Much of the current AI discussion focuses on capability: how powerful models are becoming.

Mira introduces a different perspective: verification.

If verification layers become widely adopted, they could play a critical role in how AI systems are deployed in real-world environments. Reliable verification could become the missing infrastructure that allows AI to move from experimental tools to trusted decision-support systems.

In that context, projects like Mira Network and the $MIRA ecosystem are exploring an important question: not just what AI can do, but how we can trust what it produces.