Artificial intelligence is getting better at giving answers. It still struggles with something much more important: knowing when it might be wrong.

Anyone who works with language models has seen this happen. An AI produces a perfectly structured explanation, written in a tone that sounds authoritative. At first glance, everything appears correct.

Then someone checks the details.

* A statistic doesn't exist.

* A source was never published.

* A logical step was invented by the model.

This is what researchers call hallucination. It remains one of the most persistent limitations of modern AI systems.

The problem becomes more serious as AI moves beyond chat interfaces and into decision-making environments.

When an AI helps summarize an article, a mistake is usually harmless.

When AI is used inside tools, autonomous agents, or on-chain systems, a mistake can trigger real consequences.

This is the gap that Mira Network is trying to address.

Not by building another AI model. By building a verification layer for AI outputs.

The Core Idea Behind $MIRA

Most AI systems today follow a pipeline:

1. A user asks a question.

2. The model generates a response.

3. The user decides whether to trust it.

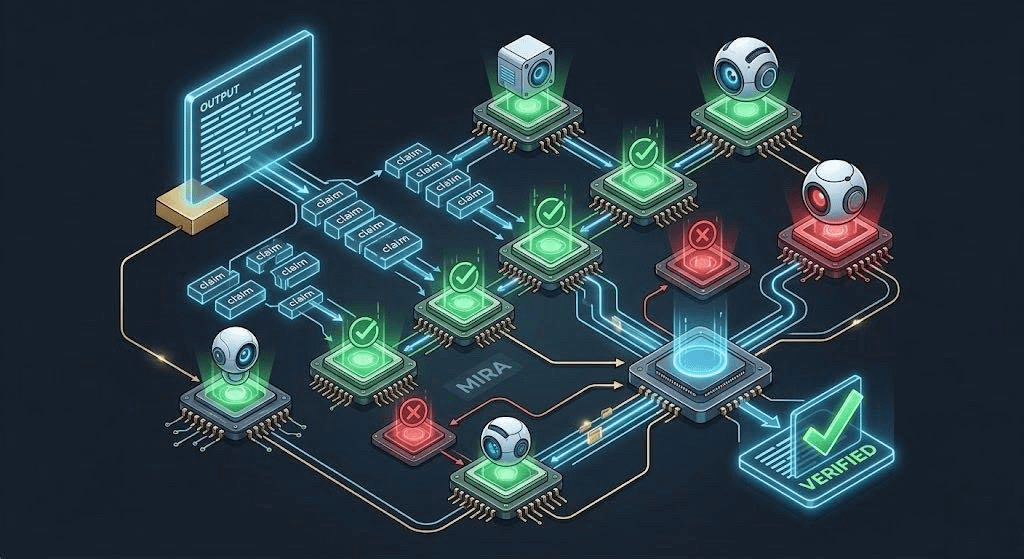

#Mira introduces a different architecture.

Instead of trusting a single output, the system breaks that output into smaller claims. These claims are then distributed across a network of validators, which may include other AI models or specialized verification agents.

Each validator checks the claim separately.

If a majority agrees that the claim is valid, the result can be considered verified. If not, the system rejects the output.
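
The claim-splitting and majority-vote flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's actual protocol: splitting on periods stands in for real claim extraction, each validator is a hypothetical function that returns `True` or `False` for a claim, and a claim counts as verified only when a strict majority approves it. The whole output is rejected if any claim fails.

```python
from typing import Callable, List

# Hypothetical validator type: takes a claim, returns True if it judges the claim valid.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive claim extraction (an assumption): treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def claim_verified(claim: str, validators: List[Validator]) -> bool:
    """A claim is verified when a strict majority of validators approve it."""
    approvals = sum(1 for validate in validators if validate(claim))
    return approvals * 2 > len(validators)


def verify_output(output: str, validators: List[Validator]) -> bool:
    """Accept the output only if every extracted claim reaches majority approval."""
    claims = split_into_claims(output)
    return bool(claims) and all(claim_verified(c, validators) for c in claims)


# Toy validators standing in for independent models (purely illustrative).
known_facts = {"Water boils at 100 C at sea level", "Paris is in France"}
validators: List[Validator] = [
    lambda c: c in known_facts,
    lambda c: c in known_facts,
    lambda c: True,  # an unreliable validator that approves everything
]

print(verify_output("Paris is in France. Water boils at 100 C at sea level.", validators))  # True
print(verify_output("Paris is in France. The Moon is made of cheese.", validators))  # False
```

The third validator approves everything, yet the false claim is still rejected because the other two outvote it. No single participant decides the result.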

In other words, Mira separates generation from verification.

AI can still produce answers quickly, but those answers are no longer accepted blindly. They pass through a decentralized validation process before being considered reliable.

This approach borrows a principle that already exists in blockchain systems: don't trust a single participant; rely on consensus.

Why AI Verification Is Becoming Necessary

The idea of verifying AI outputs may sound excessive today. After all, millions of people already use AI tools every day without a verification layer.

The role of AI is changing quickly.

AI is no longer limited to answering questions. It is increasingly being integrated into:

* Trading strategies

* DeFi analytics systems

* Research assistants for investors

* AI agents interacting with smart contracts

* Decision-support tools for institutions

In these environments, AI outputs can trigger automated actions.

Once that happens, the cost of hallucinations becomes much higher.

If an AI agent misinterprets market data and executes a trade, the loss is immediate.

If an AI-powered research tool misidentifies a source, it can distort investment decisions.

As automation increases, the system needs something stronger than “the model probably got it right.”

That's the gap Mira is trying to fill.
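
One way to picture that "something stronger" is a gate that refuses to trigger an automated action unless verification succeeds. The sketch below is illustrative only: `TradeSignal`, `gated_execute`, the `verify` callback (which could be a majority vote over validators), and `fake_execute` are hypothetical names, not a Mira API or a real exchange interface.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class TradeSignal:
    """Hypothetical output of an AI trading agent."""
    asset: str
    action: str     # e.g. "buy" or "sell"
    rationale: str  # the model's explanation, which is what gets verified


def gated_execute(
    signal: TradeSignal,
    verify: Callable[[str], bool],
    execute_trade: Callable[[TradeSignal], str],
) -> str:
    """Trigger the action only if the model's rationale passes verification."""
    if not verify(signal.rationale):
        # Fail closed: an unverified output never reaches the execution step.
        return f"blocked: {signal.action} {signal.asset} failed verification"
    return execute_trade(signal)


executed: List[TradeSignal] = []


def fake_execute(s: TradeSignal) -> str:
    """Stand-in for a real order API: records the trade instead of placing it."""
    executed.append(s)
    return f"executed: {s.action} {s.asset}"


signal = TradeSignal("ETH", "buy", "example rationale produced by the model")
print(gated_execute(signal, verify=lambda r: False, execute_trade=fake_execute))
# blocked: buy ETH failed verification
print(gated_execute(signal, verify=lambda r: True, execute_trade=fake_execute))
# executed: buy ETH
```

Failing closed means a verifier outage blocks actions rather than letting unverified ones through; whether that trade-off is acceptable depends on the application.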

Decentralized Verification vs Centralized Control

One way to solve AI reliability would be for AI companies to build internal verification systems.

Centralized verification has limitations.

If a single company both generates and verifies the output, the system ultimately still relies on one authority, and users have little transparency into how the verification actually works.

Mira takes a different approach.

Because the verification process is distributed across validators, the final result emerges from network consensus rather than institutional control.

This design mirrors the philosophy that originally shaped blockchain technology.

Instead of trusting a single source of truth, the system relies on multiple independent participants who are incentivized to verify information accurately.

Over time, this could create a form of trustless AI validation, where reliability comes from processes rather than brand reputation.

The Economic Layer

Verification also introduces an economic model.

Within @Mira - Trust Layer of AI, validators are rewarded for accurate verification and penalized for incorrect evaluations. This incentive structure encourages participants to prioritize precision and consistency rather than speed alone.
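
The reward-and-penalty idea can be sketched as a settlement step after each verification round. Everything here is an assumption for illustration, not Mira's published mechanism: the names `REWARD` and `SLASH_FRACTION`, their values, and the rule that a validator counts as "correct" when its vote matches the majority outcome.

```python
from typing import Dict

# Illustrative parameters (assumptions, not Mira's real values).
REWARD = 1.0          # tokens paid to each validator whose vote matches the consensus
SLASH_FRACTION = 0.1  # share of stake removed from each validator whose vote does not


def settle_round(stakes: Dict[str, float], votes: Dict[str, bool]) -> Dict[str, float]:
    """Reward validators who match the majority outcome and slash those who dissent.

    Treating the majority outcome as "correct" is an assumption of this sketch.
    Returns a new stakes dict; the input is left unchanged.
    """
    approvals = sum(1 for v in votes.values() if v)
    consensus = approvals * 2 > len(votes)
    updated = dict(stakes)
    for validator, vote in votes.items():
        if vote == consensus:
            updated[validator] += REWARD
        else:
            updated[validator] -= updated[validator] * SLASH_FRACTION
    return updated


stakes = {"a": 100.0, "b": 100.0, "c": 100.0}
votes = {"a": True, "b": True, "c": False}
print(settle_round(stakes, votes))  # {'a': 101.0, 'b': 101.0, 'c': 90.0}
```

In this sketch the reward is a flat amount while the penalty scales with stake, so a validator with more at stake loses more from a careless vote. Using majority agreement as the yardstick is only a stand-in for a real accuracy measure, since it rewards following the crowd as much as independent checking.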

The network’s token plays a role in coordinating this system by:

* Rewarding validators

* Supporting staking mechanisms

* Funding verification operations

* Aligning incentives across participants

This matters because verification requires resources. Models must analyze claims, compare evidence, and produce conclusions.

Without incentives, a decentralized verification network would struggle to sustain participation.

The Hard Part: Adoption

While the idea behind Mira is compelling, the real challenge lies in adoption.

Developers will only integrate verification layers if the benefits clearly outweigh the costs.

Verification introduces computation, potential latency and architectural complexity.
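
To make the latency cost concrete: if validators are queried in parallel, the added delay is roughly that of the slowest validator the system waits for, rather than the sum of all of them. The sketch below uses Python's standard `asyncio` with simulated delays; `ask_validator`, `verify_in_parallel`, the delays, and the timeout value are hypothetical. A deadline keeps one slow validator from stalling the whole check.

```python
import asyncio
import time
from typing import List, Tuple


async def ask_validator(delay_s: float, verdict: bool) -> bool:
    """Hypothetical validator call; the sleep simulates network and inference time."""
    await asyncio.sleep(delay_s)
    return verdict


async def verify_in_parallel(specs: List[Tuple[float, bool]], timeout_s: float) -> bool:
    """Query all validators concurrently; validators that miss the deadline count as non-approvals."""
    tasks = [asyncio.create_task(ask_validator(d, v)) for d, v in specs]
    done, pending = await asyncio.wait(tasks, timeout=timeout_s)
    for task in pending:
        task.cancel()  # stop waiting on validators that missed the deadline
    approvals = sum(1 for task in done if task.result())
    return approvals * 2 > len(specs)


start = time.perf_counter()
result = asyncio.run(verify_in_parallel([(0.1, True), (0.2, True), (2.0, True)], timeout_s=0.5))
elapsed = time.perf_counter() - start
print(result)  # True
print(f"{elapsed:.2f}s")
```

Here the two fast validators answer within 0.2 seconds, the slow one is cut off at the 0.5 second deadline, and the claim still passes with two of three approvals. Counting a timeout as a non-approval is a design choice of this sketch: it keeps latency bounded, but slow validators can push borderline claims toward rejection.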