@Mira - Trust Layer of AI #Mira $MIRA

The verification pool dropped before the answer finished verifying.

I noticed it in the usage ledger, not the console.

The response itself looked harmless. Short answer. One statistic. A single sentence wrapped around a claim that barely weighed anything semantically. The kind of line that normally slides through claim decomposition on Mira's decentralized network without anyone paying attention.

The decomposition layer didn't care how small it felt.

Fragment IDs minted. Mira's validator evidence hashes attached. Units routed through the verification node network like always.

Three fragments.

Normal.

What wasn't normal showed up in the verification meter.

token_usage: climbing

I refreshed the panel thinking the UI lagged.

Didn’t.

Independent model validators had already started attaching weight. Each verification cycle burned a little more $MIRA from the request pool. The token-powered verification usage meter ticked upward with every model pass.

Small claim.

The verification surface didn’t care.

Cheap sentence.

Expensive proof.

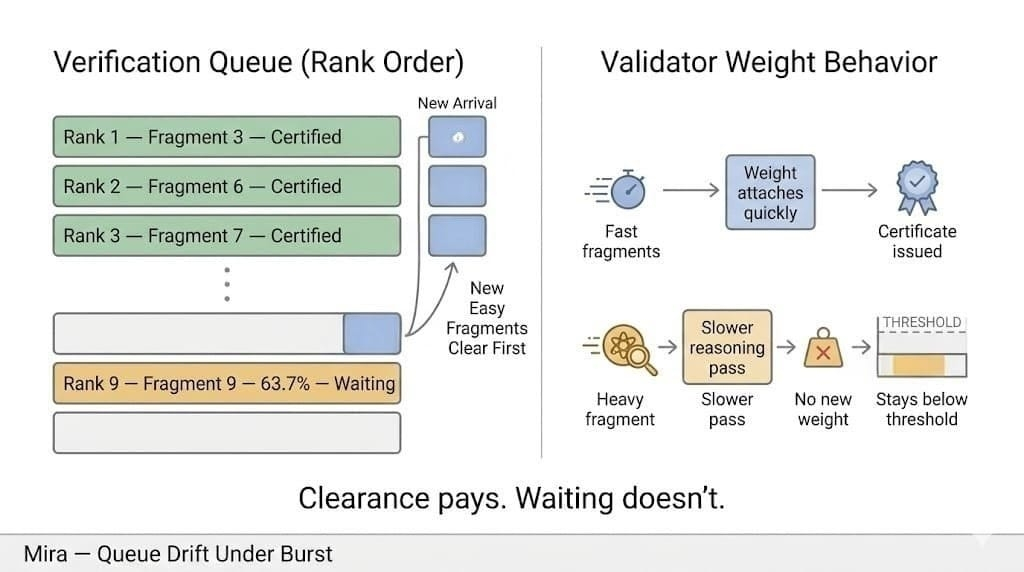

Fragment 1 sealed quickly.

Clear citation. Cheap validation path. Two validators in Mira's trustless consensus committed stake weight almost immediately. The reward distribution panel barely flickered when the first certificate formed.

Fragment 2 cost more.

Evidence still straightforward.

Validators took a longer path anyway.

Verification loop ticked twice before quorum.

Still normal.

Fragment 3 didn’t behave.

Not wrong.

Just… expensive.

In plain language the claim fragment looked simple: a conditional clause sitting quietly at the end of the sentence. Mira’s verification mesh treated it differently. The evidence path branched wider than the other fragments' had. Multiple candidate references. Two competing contexts.

The validator models started exploring both.

token_usage: climbing again

Cursor blinked.

More model passes.

The token meter moved again.

The validator queue length jumped from 2 to 7.

Stopping early would save tokens.

Nobody stops early.

If I cap the search, I keep the pool.

If I don’t, I keep the proof.

Fragment 1 already had its cryptographic verification certificate. Fragment 2 followed a few seconds later. Both fragments now exportable, signed, ready to be reused downstream.

Fragment 3 stayed open.

The sentence was technically “verified enough” for most integrations already pulling certified fragments from the network.

But Mira wasn’t finished spending.

The usage panel ticked again.

The verification pool balance dropped another notch.

I opened the verification trace expecting to see a dispute marker. Something that justified the extra cycles.

Nothing dramatic.

Just two validators taking longer evidence paths through the reference graph.

Mira's node network doesn’t guess when citations fork.

It walks the fork.

token_usage: climbing again

Generation finished in milliseconds.

Verification didn’t.

Fragment 3 finally crossed quorum after another pass. Validators attached the final weight. Consensus validity checks triggered. The certificate tuple recomputed across the fragment set.

Round closed.

Total verification usage printed in the ledger.

I stared at the number longer than I expected to.

The token burn was higher than the generation cost of the original response.

Not by a little.

By a multiple.

Validator reward weights were recalculated and paid out of the verification pool.

From the network’s perspective everything worked exactly as designed.

Accurate claim.

Verified fragments.

Certificates issued.

The economic trace left a strange footprint.

The most expensive part of the sentence was the smallest fragment inside it.

I’m watching the next request enter the Mira verification queue now.

Mira's claim decomposition has already finished.

New fragment IDs forming.

The usage meter hasn’t moved yet.

It will. #Mira

It always waits for the smallest fragment.