AI is everywhere now: finance, healthcare, government, you name it. And honestly, there’s still one big thing we haven’t cracked: trust. AI doesn’t promise us the truth; it just spits out what’s most likely, and sometimes that means it gets things wrong or lets bias slip in. High-stakes decisions can’t run on guesswork. That’s where Mira Network steps in with a bold pitch: what if we could actually pay people to keep AI honest?

At the center of Mira’s approach is the $MIRA token economy. This isn’t just another crypto hype machine; it’s a new way to turn checking AI’s accuracy into a living, breathing marketplace.

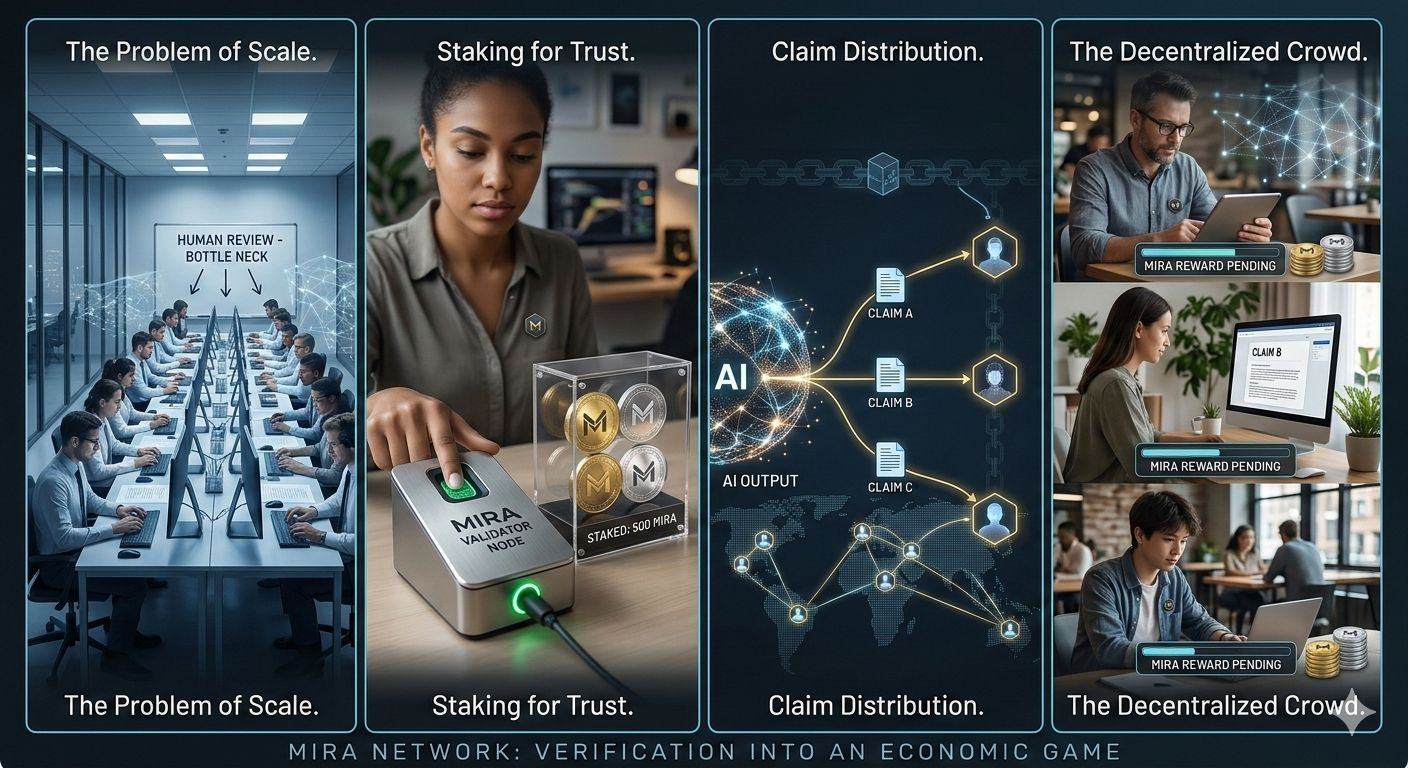

Turning Verification into an Economic Game

Normally, companies try to keep their AI in check with centralized moderation or endless rounds of human review. It’s slow, expensive, and doesn’t really scale. Mira flips the script. Instead of a handful of people controlling everything, you’ve got a decentralized crowd of validators checking AI claims. No blind trust, just cold, hard incentives.

Here’s how it works: validators have to stake $MIRA tokens to join in. When an AI generates an output, it gets broken into smaller, bite-sized claims, which are sent out to different validators. Each validator checks the claim it receives and reports back.

If most validators agree and your verdict matches theirs, you earn rewards. Try to game the system or just toss out random answers? You lose staked tokens, simple as that.

This setup means honesty isn’t just a virtue; it’s the smart way to play.
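The post doesn’t spell out Mira’s actual consensus rules, so here is a minimal Python sketch of one verification round under stated assumptions: a naive sentence split stands in for claim decomposition, a simple majority decides each claim (ties count as not verified), and validators on the winning side earn a flat reward while dissenters lose a fixed fraction of their stake. `Validator`, `split_into_claims`, `settle_claim`, and the `reward`/`slash_rate` parameters are hypothetical names for illustration, not Mira’s API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # tokens currently locked by this validator


def split_into_claims(output: str) -> list[str]:
    # Hypothetical decomposition: treat each sentence as one checkable claim.
    return [s.strip() for s in output.split(".") if s.strip()]


def settle_claim(votes: dict[str, bool], validators: dict[str, Validator],
                 reward: float, slash_rate: float) -> bool:
    """Majority-vote one claim, reward the majority, slash the minority.

    Assumed rules (not Mira's documented ones): a tie means "not verified",
    agreeing validators get a flat `reward`, and dissenters lose
    `slash_rate` times their current stake.
    """
    tally = Counter(votes.values())
    verdict = tally[True] > tally[False]
    for name, vote in votes.items():
        v = validators[name]
        if vote == verdict:
            v.stake += reward
        else:
            v.stake -= v.stake * slash_rate
    return verdict
```

For example, if validators `a` and `b` mark a claim true and `c` marks it false, each starting with 100 tokens, a call with `reward=1.0` and `slash_rate=0.1` verifies the claim, leaves `a` and `b` with 101 tokens each, and cuts `c` to 90.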

Staking: Skin in the Game

Staking is the backbone of Mira’s security. Validators have to put their own $MIRA tokens on the line before they can even start. The more tokens you stake, the bigger the potential rewards, but the risks grow, too: if you mess around or try to cheat, you’ll lose more.

So, everyone’s motivated to get it right. Financial risk and reward are directly tied to whether your verifications are accurate.
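To see why honesty pays, here is a back-of-the-envelope sketch. It assumes a validator gains `reward_rate × stake` when it sides with consensus and loses `slash_rate × stake` when it doesn’t. The 5% and 20% defaults are placeholders, not Mira parameters, and the function names are hypothetical.

```python
def round_payoff(stake: float, agreed: bool,
                 reward_rate: float = 0.05, slash_rate: float = 0.20) -> float:
    """Token change for one round; both reward and penalty scale with stake.

    The default rates are illustrative placeholders, not Mira's values.
    """
    return stake * reward_rate if agreed else -stake * slash_rate


def expected_payoff(stake: float, p_agree: float,
                    reward_rate: float = 0.05, slash_rate: float = 0.20) -> float:
    """Expected token change when a validator matches consensus with probability p_agree."""
    return stake * (p_agree * reward_rate - (1 - p_agree) * slash_rate)


def break_even_accuracy(reward_rate: float = 0.05, slash_rate: float = 0.20) -> float:
    """Agreement rate at which the expected payoff is exactly zero."""
    return slash_rate / (reward_rate + slash_rate)
```

With these placeholder rates, a validator answering yes/no claims at random (`p_agree = 0.5`, assuming an honest majority) loses about 75 tokens per 1,000 staked per round, while one that agrees 95% of the time gains about 37.5. The break-even agreement rate is 0.8. Because every term is multiplied by `stake`, a bigger stake magnifies the outcome in either direction.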

Slashing: The Cost of Dishonesty

No economic system works without real consequences. Mira’s answer is slashing: validators who make mistakes, try to manipulate results, or keep disagreeing with everyone else lose staked tokens.

Slashing keeps people honest and discourages groups from teaming up to cheat the system. If you’re dishonest, you’re just burning money. The idea is borrowed from proof-of-stake blockchains, which already slash validators for misbehavior, but here it’s tuned specifically for checking what AI produces.
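The post says validators who keep disagreeing with everyone else get slashed, but it doesn’t define the threshold. One simple way to express such a rule is a rolling window of recent votes plus a maximum tolerated disagreement rate. `DisagreementTracker` and its window size, threshold, and slash fraction are all hypothetical values chosen for illustration.

```python
from collections import deque


class DisagreementTracker:
    """Hypothetical rule: slash a validator whose recent votes
    disagree with consensus more often than a set threshold."""

    def __init__(self, window: int = 20, max_disagree_rate: float = 0.3,
                 slash_rate: float = 0.1) -> None:
        self.history: deque[bool] = deque(maxlen=window)  # True = agreed with consensus
        self.max_disagree_rate = max_disagree_rate
        self.slash_rate = slash_rate

    def record(self, agreed_with_consensus: bool) -> None:
        # The deque drops the oldest vote once the window is full.
        self.history.append(agreed_with_consensus)

    def penalty(self, stake: float) -> float:
        """Tokens to slash from `stake` right now (0.0 if the rule isn't triggered)."""
        if len(self.history) < self.history.maxlen:
            return 0.0  # not enough evidence yet
        disagree_rate = self.history.count(False) / len(self.history)
        if disagree_rate > self.max_disagree_rate:
            return stake * self.slash_rate
        return 0.0
```

Waiting for a full window before judging is one design choice that avoids slashing a newcomer for a single unlucky vote. With a 10-vote window, 4 disagreements (40%) would trigger a 10-token penalty on a 100-token stake, while 2 disagreements would not.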

Reward Distribution & Sustainable Incentives

Rewards in the MIRA system aren’t random. They go to validators based on how accurate, active, and committed they are, plus how much the network is being used.

As AI use grows, there’s more output to verify and more rewards up for grabs. The whole thing starts to feed on itself: more AI activity attracts more validators, which makes the network safer and more trustworthy. The $MIRA token isn’t just a way to pay people; it’s what keeps the whole thing running.
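The post names accuracy, activity, commitment, and network usage as reward inputs without giving a formula. The sketch below assumes one: an epoch’s pool equals request volume times a per-request fee, and each validator’s share is proportional to accuracy × verifications × stake. `ValidatorStats`, `distribute_rewards`, and the multiplicative weighting are illustrative choices, not Mira’s documented scheme.

```python
from dataclasses import dataclass


@dataclass
class ValidatorStats:
    name: str
    accuracy: float     # fraction of votes matching consensus, 0..1
    verifications: int  # claims checked this epoch (activity)
    stake: float        # tokens locked (commitment)


def distribute_rewards(stats: list[ValidatorStats], requests: int,
                       fee_per_request: float) -> dict[str, float]:
    """Split an epoch's reward pool pro rata by a hypothetical score."""
    pool = requests * fee_per_request  # assumed: pool grows with network usage
    scores = {s.name: s.accuracy * s.verifications * s.stake for s in stats}
    total = sum(scores.values())
    if total == 0:
        return {name: 0.0 for name in scores}  # nobody earned a share
    return {name: pool * score / total for name, score in scores.items()}
```

For example, two validators with equal stake, one at 90% accuracy over 100 checks and one at 60% over 50, would split a 100-token pool 75/25. A multiplicative score means a validator that is idle or always wrong earns nothing, however large its stake.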

Aligning AI With Market Forces

Here’s the real twist: Mira doesn’t just hope AI gets smarter over time. It creates a marketplace where accuracy gets priced, checked, and rewarded every step of the way.

Truth isn’t just a philosophical idea here; it’s something you can measure and get paid to deliver. Validators aren’t bystanders; they’re active players with skin in the game. AI outputs go from vague probabilities to claims that are financially backed and collectively agreed upon.

Why This Matters for Autonomous Systems

Think about it: robots, DeFi bots, autonomous agents. They don’t get second chances. They need certainty before they move money, sign contracts, or make decisions that could affect real people.

The MIRA token economy gives us a way to bake trust right into machine outputs, at scale, without a central authority peering over everyone’s shoulders.

As AI and blockchain keep merging, Mira’s model could be the start of something new: a decentralized trust layer for the age of intelligent machines.

There’s more information out there than ever, but verifiable truth is rare. Mira is betting that if you make truth valuable, something people can earn by proving it, you’ll get more of it. Maybe that’s how we finally get AI we can trust.

#mira || #Mira || @Mira - Trust Layer of AI