I’ve seen this movie before, but the crypto version is sharper because agents and onchain automation turn certificates into permission. In every serious automation system I’ve worked on or near, the last mile isn’t intelligence. It’s authorization. The tool doesn’t fail because it can’t decide. It fails because it isn’t allowed to act. Someone flips a switch from “suggest” to “execute,” and the system stops being judged by how clever it sounds and starts being judged by what it can justify.

That’s why I don’t treat Mira Network as “a verification layer” in the comforting sense. If Mira succeeds, its certificates won’t sit there as optional paperwork. They will become the gate that decides whether an agent can proceed and whether an automated path is even permitted. Once that happens, verifiability stops being a safety feature and becomes a boundary around what the system is allowed to do.

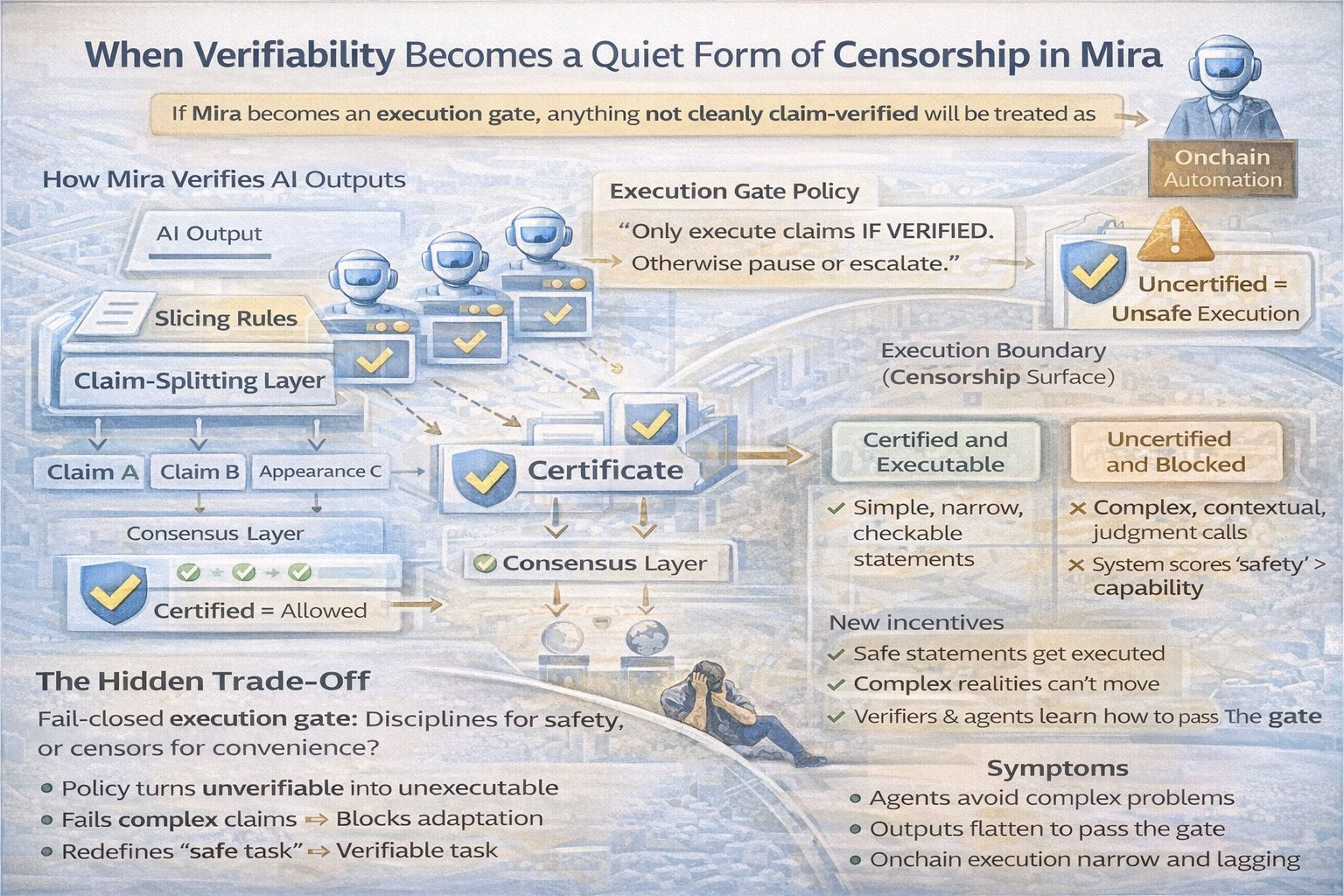

The mechanism is straightforward. Mira takes an AI output, breaks it into claims, pushes those claims through independent verification, and produces a certificate based on consensus. In an automated workflow, that certificate becomes policy input. Only execute if the certificate clears a threshold. If consensus is split, fail closed and block the action. If the claim cannot be cleanly verified, route it to escalation or require a stricter verification setting. The certificate stops being descriptive and becomes a control signal.
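To make that policy concrete, here is a minimal Python sketch of such a gate. It assumes a hypothetical certificate that records, per claim, how many verifiers agreed, disagreed, or could not evaluate the claim at all. `ClaimResult`, `gate`, and the 0.8 threshold are my own illustrations, not Mira’s actual certificate format or API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    ESCALATE = "escalate"  # human review or a stricter verification setting
    BLOCK = "block"        # fail closed


@dataclass(frozen=True)
class ClaimResult:
    """Hypothetical per-claim verdict; field names are illustrative, not Mira's format."""
    claim: str
    votes_for: int
    votes_against: int
    abstentions: int  # verifiers that could not evaluate the claim at all


def claim_decision(r: ClaimResult, threshold: float) -> Decision:
    decided = r.votes_for + r.votes_against
    # Most verifiers could not evaluate it: the claim is not cleanly verifiable.
    if decided == 0 or r.abstentions > decided:
        return Decision.ESCALATE
    if r.votes_for / decided >= threshold:
        return Decision.EXECUTE
    # Split or negative consensus: fail closed.
    return Decision.BLOCK


def gate(results: list[ClaimResult], threshold: float = 0.8) -> Decision:
    """Combine per-claim decisions: any BLOCK wins, then any ESCALATE, else EXECUTE."""
    if not results:
        return Decision.BLOCK  # nothing certified, so nothing executes
    decisions = {claim_decision(r, threshold) for r in results}
    if Decision.BLOCK in decisions:
        return Decision.BLOCK
    if Decision.ESCALATE in decisions:
        return Decision.ESCALATE
    return Decision.EXECUTE
```

The ordering is a deliberate choice in this sketch: one blocked claim blocks the whole action, and an empty certificate also blocks, so the gate fails closed rather than open.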

Now look at what that implies. Anything that cannot be expressed as a clear claim, evaluated by verifiers, and resolved into a certificate state becomes unsafe by default under an execution policy. It doesn’t matter if the statement is true in a human sense. It matters whether it fits the format the gate can process. The system doesn’t “miss” the truth. It simply refuses to carry what it cannot certify.

That refusal has a predictable shape. Cleanly verifiable claims tend to be discrete, bounded, and testable without interpretive burden. The hard parts of reality tend to be contextual, probabilistic, and dependent on incomplete information. Humans make decisions in that messy zone every day. An execution gate built on claim verification will treat that zone as suspect, not because it is false, but because it is difficult to formalize into something multiple verifiers can agree on.

When a certificate becomes the key to execution, everyone upstream adapts to the gate. The generator learns what passes. Verifiers learn what is rewarded. Users learn what gets through without delay. Over time, the pipeline bends toward statements that are easiest to certify, because certified statements are the ones that can move. That is the quiet constraint: the system starts optimizing not for truth in the broad sense, but for truth in the machine-checkable sense.

In practice, this is how the action space shrinks. If a policy says an agent can only execute on verified claims, then unverifiable claims don’t just get flagged. They get excluded from the set of permissible actions. The system begins behaving as if “unverifiable” equals “unexecutable.” That can be a reasonable safety posture early on. It also quietly changes what kinds of tasks the system can attempt without human rescue.
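The narrowing is easy to see in code. In this sketch, again using hypothetical names (`Status`, `Action`, `permissible`) rather than anything from Mira, each candidate action lists the claims it depends on, and a strict policy keeps only the actions whose claims are all verified.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    VERIFIED = "verified"
    DISPUTED = "disputed"
    UNVERIFIABLE = "unverifiable"


@dataclass(frozen=True)
class Action:
    name: str
    depends_on: tuple[str, ...]  # claims the action relies on


def permissible(actions: list[Action], status: dict[str, Status]) -> list[Action]:
    """Strict policy: keep an action only if every claim it depends on is VERIFIED.

    A claim missing from `status` is treated as UNVERIFIABLE, so the filter fails closed.
    An action with no dependencies passes vacuously; a stricter policy might reject it.
    """
    return [
        a for a in actions
        if all(status.get(c, Status.UNVERIFIABLE) is Status.VERIFIED for c in a.depends_on)
    ]


# Illustrative inputs only.
status = {
    "invoice total matches PO": Status.VERIFIED,
    "vendor relationship is in good standing": Status.UNVERIFIABLE,
}
actions = [
    Action("pay invoice", ("invoice total matches PO",)),
    Action("extend vendor credit",
           ("invoice total matches PO", "vendor relationship is in good standing")),
]
allowed = [a.name for a in permissible(actions, status)]
# allowed == ["pay invoice"]
```

Nothing in the filter says the vendor relationship is bad. “Extend vendor credit” drops out only because one of its supporting claims has no certificate state the policy accepts.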

I’m not using the word censorship in a political way. I mean it as a systems property. A gate filters what can be acted upon. In the same way a compiler rejects code that doesn’t match its grammar, an execution policy rejects decisions that don’t match its claim grammar. The agent isn’t punished for being wrong. It’s blocked for being unverifiable. And unverifiable often means complex, contextual, or new.

That last part is where the risk becomes strategic. New information is rarely easy to certify. Early signals are noisy. Emerging threats have weak consensus. Novel fraud patterns look like anomalies until they become obvious. If execution is conditioned on clean verification, the system will tend to lag reality. It will wait until claims become stable enough to certify, which often means waiting until they become conventional enough to agree on.
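A toy model shows why the certified lane tends to lag. Suppose verifier agreement on an early fraud signal rises round by round as evidence accumulates. The agreement series below is invented for illustration, and `first_executable_round` is a hypothetical helper, not part of any Mira interface.

```python
from typing import Optional


def first_executable_round(agreement_by_round: list[float], threshold: float) -> Optional[int]:
    """Return the first round whose verifier agreement clears the threshold, or None."""
    for round_index, agreement in enumerate(agreement_by_round):
        if agreement >= threshold:
            return round_index
    return None


# Invented numbers: share of verifiers agreeing that a novel pattern is fraud,
# measured once per round as evidence accumulates.
agreement = [0.30, 0.45, 0.60, 0.75, 0.90]

for threshold in (0.67, 0.85, 0.95):
    print(threshold, first_executable_round(agreement, threshold))
# 0.67 3
# 0.85 4
# 0.95 None
```

The stricter the threshold, the later the system is allowed to act, and at 0.95 it never acts on this signal at all. The gate waits for agreement, not for truth.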

Onchain automation makes this sharper because smart contracts and automated strategies run on conditions, not nuance. If certificates become conditions, then certificate fields like quorum outcomes, threshold status, and “verified versus disputed” states can decide whether capital moves. At that point, “what can be verified” becomes a definition of “what can be executed.” A certificate doesn’t just describe what the system believes. It becomes part of the machinery that translates belief into action.

There is a real trade-off hiding inside this. Execution gates reduce catastrophic error by narrowing the action space. That’s the promise. But narrowing the action space also reduces capability, sometimes exactly where capability is valuable. You can build a system that is safe because it refuses to do anything uncertain. Many institutions already do this. They call it governance. The result is a machine that is correct in a narrow corridor and ineffective outside it.

Mira, if it becomes a standard, risks recreating that corridor in protocol form. Not because anyone is malicious, but because certificate-driven policies are easy to justify. Only execute if verified. Only execute if quorum is strong. Only execute if the certificate clears the strict threshold. Those rules sound responsible. They also systematically push agents away from tasks that require judgment and toward tasks that reduce to checklists.

Once this logic is installed, a second-order effect follows. People begin designing work around the gate. Teams rewrite procedures so outputs can be decomposed cleanly into claims. They simplify context so verifiers can converge. They flatten decisions into smaller statements because smaller statements are easier to certify. Over time, the system doesn’t just block actions. It reshapes workflows into whatever the certificate can express.

Every gate does this. When you require forms, people write work to satisfy forms. When you require audit trails, people write work to satisfy audit trails. When you require verification certificates, people learn to write truth in the shape the certificate can carry. The system becomes more legible and more constrained at the same time.

The uncomfortable question is what happens to the parts of reality that refuse to become legible. They don’t disappear. They get pushed outside the execution boundary. Humans still handle them, often under time pressure, often without the same safeguards. A certificate-driven world can quietly create two lanes: certified execution and uncertified judgment. The certified lane looks safe. The uncertified lane absorbs the mess.

This isn’t an argument against Mira. It’s an argument about what Mira becomes if it succeeds. If certificates are trusted as execution gates, then verifiability becomes a boundary condition for action. The real question is whether Mira’s claim-verification format can avoid becoming the grammar everything must obey, because once the grammar is installed, the system will treat the unverifiable as unexecutable.

That is the most durable constraint a technical protocol can introduce. It doesn’t silence ideas. It makes some ideas impossible to act on.

@Mira - Trust Layer of AI $MIRA #mira