The AI boom is moving so fast that most people are focused on capability: bigger models, smarter systems, faster outputs. But the deeper I look at where this industry is heading, the more I realize the real bottleneck isn’t intelligence.

It’s trust.

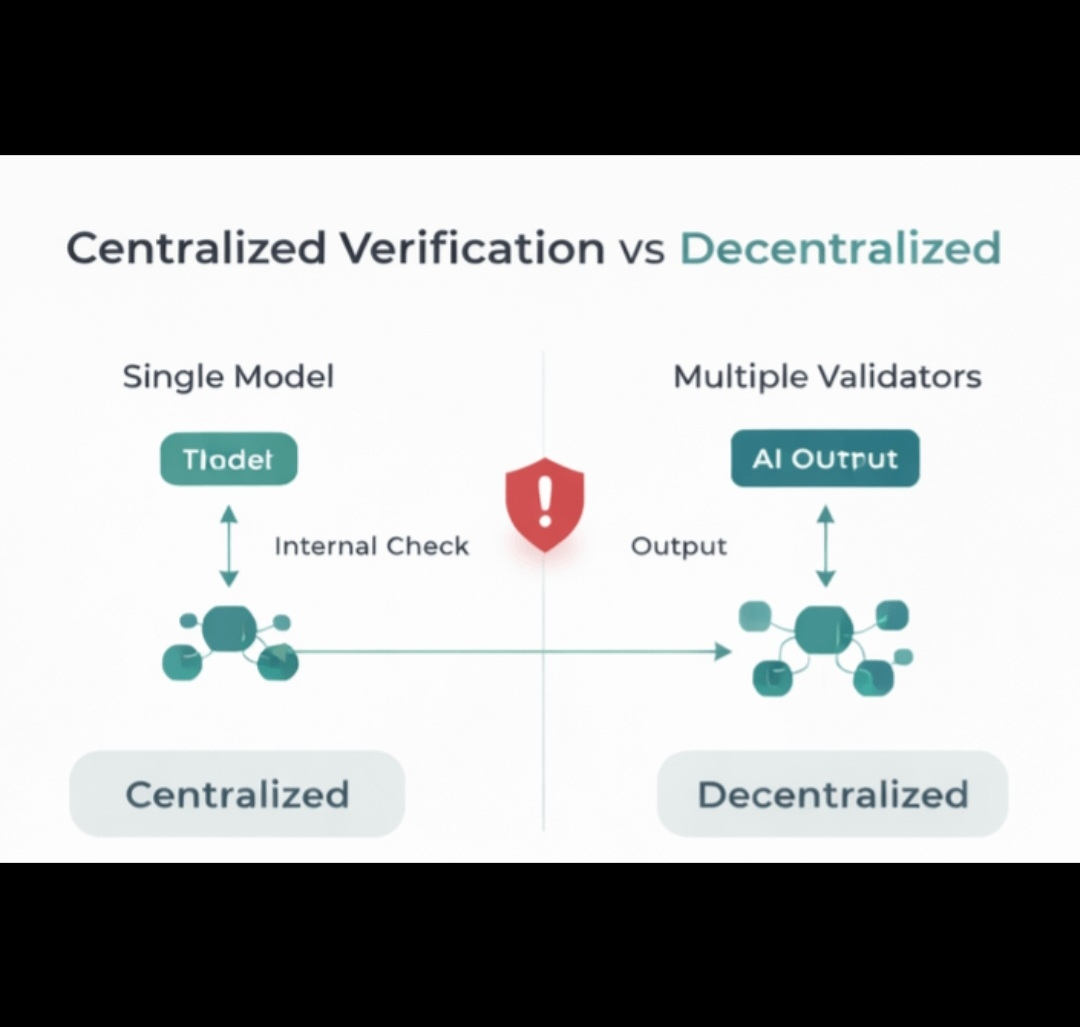

Right now, AI systems generate text, images, code, research, and analysis at massive scale. But there’s a fundamental problem that gets far too little attention: how do we verify any of it?

When AI can generate millions of outputs every minute, the internet starts filling with synthetic content faster than humans can verify it. Data becomes questionable. Information becomes harder to trust. And suddenly the most important resource in the AI economy isn’t intelligence — it’s verification.

That’s exactly where MIRA starts to stand out.

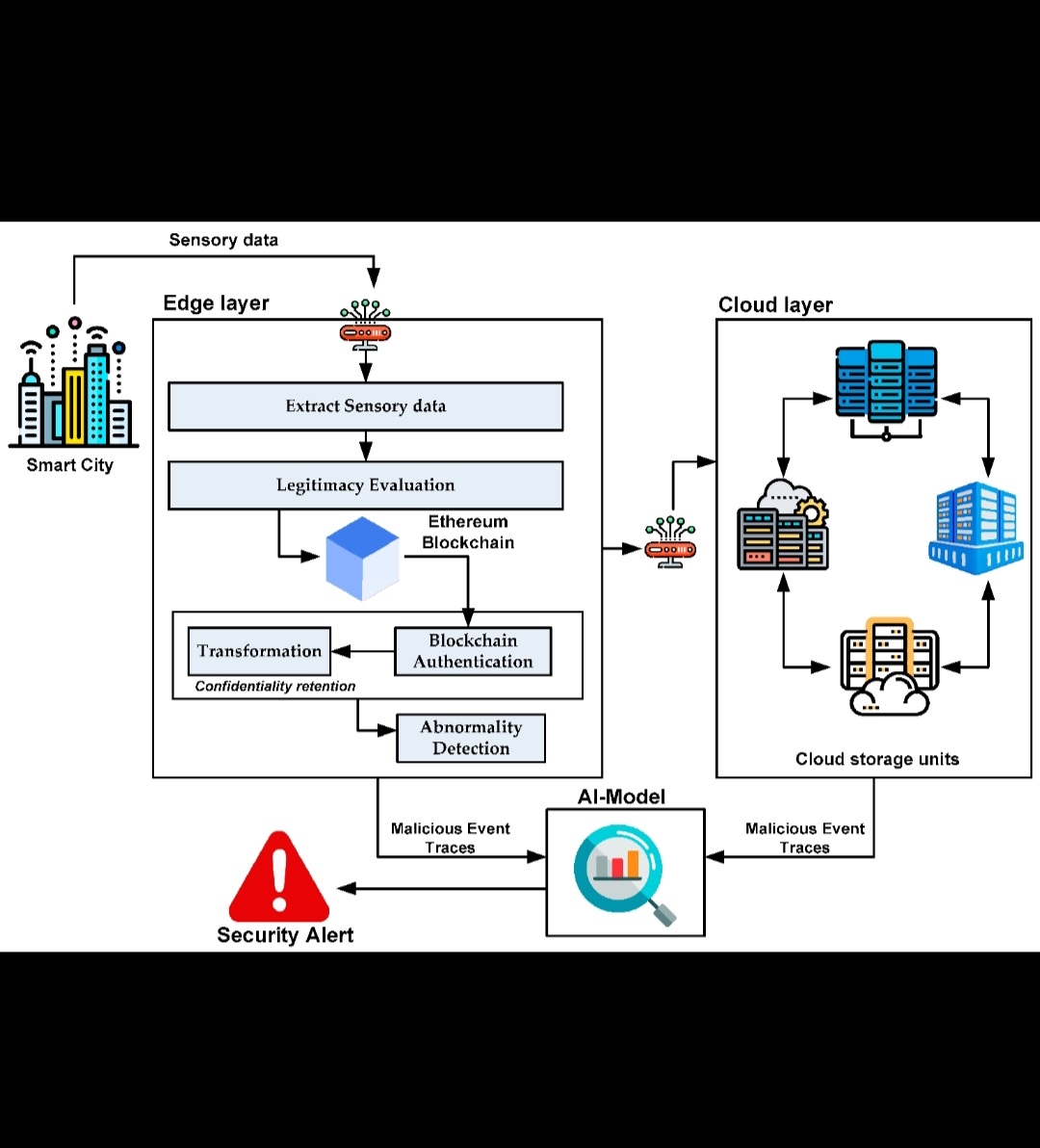

Instead of trying to build another AI model or competing in the endless race for larger datasets, Mira is approaching the problem from a completely different angle. The project is focused on building a verification layer for artificial intelligence — infrastructure that helps determine whether AI outputs can actually be trusted.

And in my opinion, that problem is far bigger than most people realize.

Think about where the world is heading.

AI agents are starting to perform research. They generate reports. They write code. They analyze markets. Some systems are even beginning to make automated decisions that influence real-world outcomes.

Now imagine millions of these agents operating simultaneously.

Without a verification layer, the entire system becomes fragile. False outputs, manipulated models, biased data, and hallucinated information could spread at enormous scale. The problem isn’t just technical — it becomes economic and societal.

If AI becomes a core part of decision-making, then verifiable intelligence becomes one of the most valuable resources on the internet.

That’s the thesis that makes MIRA interesting.

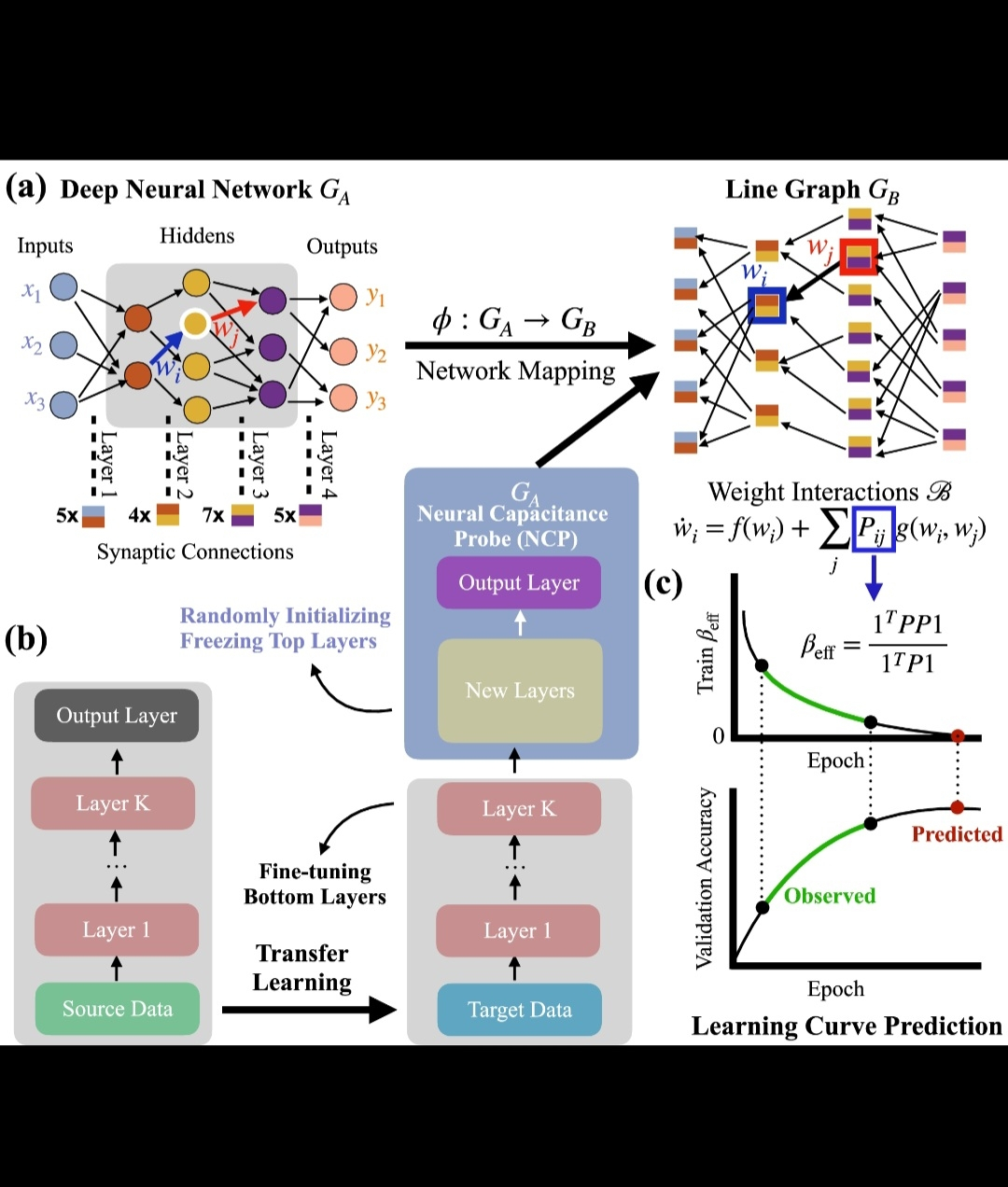

Instead of focusing purely on AI capability, Mira is positioning itself around the idea that the future AI ecosystem will require trust infrastructure: networks capable of validating outputs, evaluating models, and ensuring that the information produced by machines meets a certain level of reliability.

When you frame the problem this way, the project starts looking less like a niche experiment and more like a foundational layer.

What fascinates me is how early this conversation still is.

The tech world is obsessed with building smarter AI. But very few projects are asking the harder question: how will we trust the outputs these systems generate?

Because intelligence without verification quickly turns into noise.

If the internet becomes flooded with machine-generated content, then markets, research, media, and even governance systems will eventually demand mechanisms that separate reliable outputs from unreliable ones.

That’s exactly the gap Mira is attempting to address.

And if AI truly becomes the dominant technological force of the coming decade, then the infrastructure that ensures its reliability may become just as important as the models themselves.

That’s why MIRA keeps showing up on my radar.

Not because of hype or short-term narratives, but because it is exploring a problem that will only become more urgent as AI continues to scale across every industry.

In a world increasingly shaped by artificial intelligence, the most valuable layer might not be the one that generates answers.

It might be the one that proves those answers can be trusted.