I integrated the Mira SDK into an existing workflow last week. This was not a greenfield build or an experimental prototype. The pipeline was already stable and doing its job. It extracted contract clauses and passed them into a downstream classification layer. Accuracy was solid. Latency was acceptable. From a purely technical standpoint, nothing was broken.

But there was one persistent friction point: approval.

Every extracted clause still had to be reviewed by a human before it could move forward. Not because the model performed poorly. Not because we lacked benchmarks. The requirement existed because compliance does not operate on confidence scores. It operates on proof. Internal policy still required a “human validated” tag before anything could be relied upon.

That line in the policy did not move, even as model metrics improved.

So I added Mira.

The integration was straightforward. Install the SDK. Point the endpoint to apis.mira.network. Add the key. Within minutes, the first responses were coming back. On the surface, nothing seemed dramatically different. The outputs resembled what the model had been producing before.

The real difference showed up in the logs.

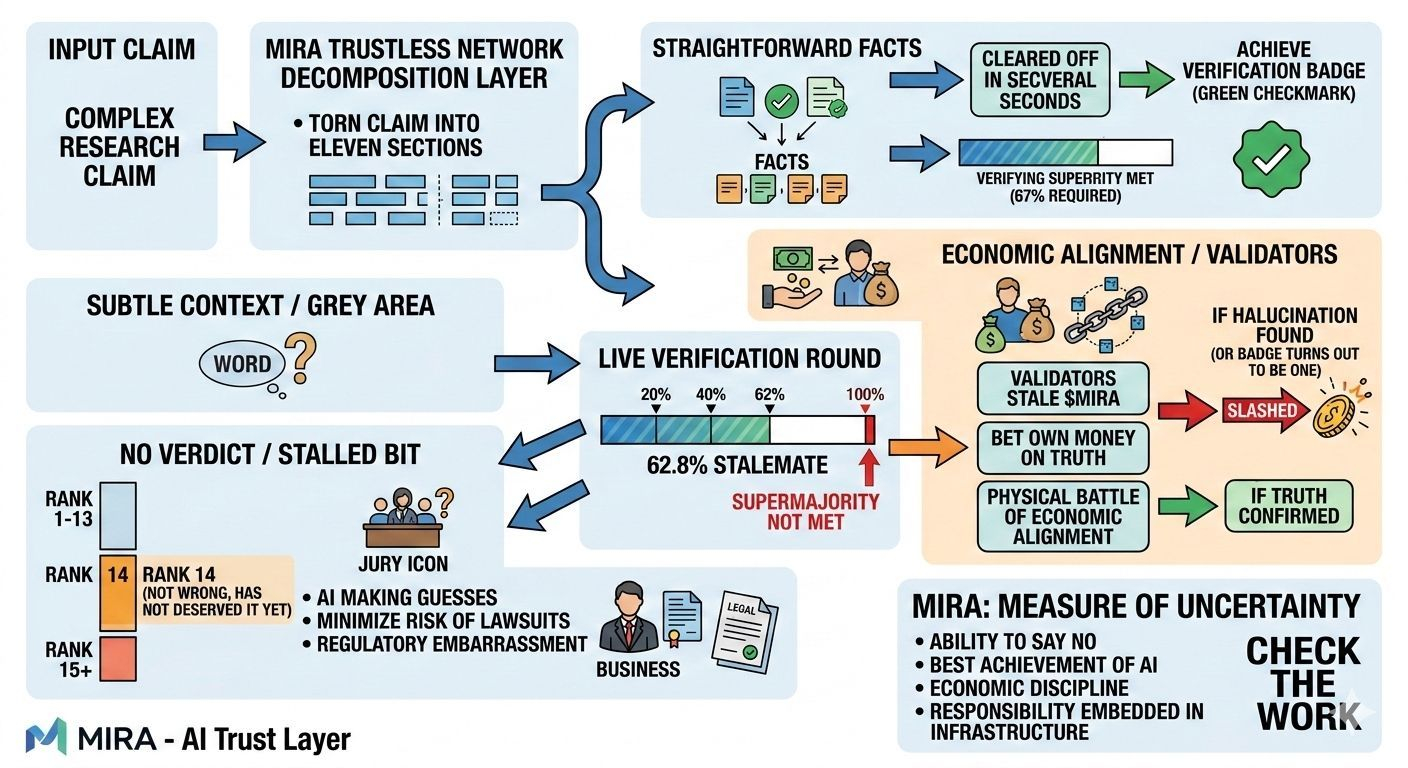

The first API call was simple. A straightforward clause referencing a date and jurisdiction. Standard boilerplate language. Validators engaged almost immediately. A quorum formed quickly. Stake was committed. A certificate was issued and the output hash was anchored.

Clean. Fast. Predictable.

The second request looked routine at first glance. It was another clause from the same contract set. But this one contained an indemnification carve-out with conditional phrasing. Its interpretation could vary depending on jurisdiction and contextual framing.

This time the process unfolded differently.

Independent validators began evaluating the claim. These were distinct models, trained separately, each bringing its own assumptions and priors. As their confidence vectors formed, the variation became visible. Some leaned toward one interpretation. Others toward another.

The quorum weight began to rise, then slowed. It paused briefly. Then resumed.

Eventually consensus crossed the threshold and a certificate was issued. The claim passed verification.

But one metric stood out: dissent weight.

Even though the claim cleared quorum, disagreement among validators remained higher than in the earlier example. That number stayed in the logs. It did not disappear once the certificate was issued.
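To make those two numbers concrete, here is a minimal sketch of how a stake-weighted quorum and a dissent weight could be derived from a set of validator votes. It is not Mira's actual algorithm and does not use the Mira SDK. The `Vote` type, the `tally` function, the 0.67 threshold, and the stake values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    validator: str  # hypothetical validator identifier
    stake: float    # stake committed behind this vote (illustrative units)
    accepts: bool   # True if this validator accepts the claim


def tally(votes, threshold=0.67):
    """Return (passed, quorum_weight, dissent_weight) for stake-weighted votes.

    quorum_weight is the share of stake accepting the claim;
    dissent_weight is the remaining share. The threshold is an assumption.
    """
    total = sum(v.stake for v in votes)
    if total <= 0:
        raise ValueError("no stake committed")
    quorum_weight = sum(v.stake for v in votes if v.accepts) / total
    dissent_weight = 1.0 - quorum_weight
    return quorum_weight >= threshold, quorum_weight, dissent_weight


# A boilerplate clause: every validator agrees.
boilerplate = [Vote("a", 40, True), Vote("b", 35, True), Vote("c", 25, True)]

# A contested clause: it still clears the threshold, but one validator dissents.
carve_out = [Vote("a", 40, True), Vote("b", 35, True), Vote("c", 25, False)]

print(tally(boilerplate))
print(tally(carve_out))
```

Under these assumptions both claims pass, but only the second carries a non-zero dissent weight, which is the signal a single confidence score would have hidden.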

In the previous system, none of this nuance would have surfaced. The model would have returned an answer with a confident tone. There would have been no signal that reasonable alternative interpretations existed. Every output appeared equally certain.

With Mira, the claim still passed. The certificate still verified it. But the system also exposed how aligned the independent validators actually were.

I continued running more clauses through the pipeline.

A pattern emerged.

Factual, unambiguous clauses cleared rapidly. Quorum formed quickly. Stake committed without hesitation. Dissent weight stayed low.

Interpretive clauses behaved differently. Validators took longer to align. Confidence vectors shifted before stabilizing. Sometimes the dissent weight remained noticeably elevated even after consensus was reached.

Those contested-but-passing clauses were the ones worth a closer look.

No one had specifically requested this additional signal. The original mandate was simpler: replace the “human validated” label with something cryptographically defensible.

But once dissent weight was visible, the review process changed organically.

Reviewers began opening the clauses with higher dissent first. Not because verification had failed, but because the system highlighted where interpretation was less clean. Clauses that cleared with tight consensus stopped requiring routine inspection. The review queue started shrinking.
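The triage that emerged can be sketched in a few lines. This is an illustration of the idea only, not how our reviewers' tooling or the Mira SDK is implemented. The `VerifiedClause` type, the `build_review_queue` function, the 0.10 cutoff, and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerifiedClause:
    clause_id: str         # hypothetical clause identifier
    verified: bool         # True if the claim cleared quorum
    dissent_weight: float  # share of validator weight that disagreed


def build_review_queue(clauses, dissent_cutoff=0.10):
    """Return the clauses a human should open, most contested first.

    Clauses that verified with dissent at or below the cutoff are skipped.
    Anything that failed verification is always queued, ahead of the rest.
    """
    needs_review = [
        c for c in clauses
        if not c.verified or c.dissent_weight > dissent_cutoff
    ]
    # Failed verifications sort first (False < True), then highest dissent.
    return sorted(needs_review, key=lambda c: (c.verified, -c.dissent_weight))


clauses = [
    VerifiedClause("governing-law", True, 0.02),
    VerifiedClause("indemnification", True, 0.31),
    VerifiedClause("termination", True, 0.14),
    VerifiedClause("notice", False, 0.05),
]

queue = build_review_queue(clauses)
print([c.clause_id for c in queue])
```

The cutoff is a policy decision rather than a technical one. A stricter compliance team would lower it and accept a longer queue.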

The improvement did not come from making the base model smarter. It came from revealing where uncertainty lived.

Previously, the pipeline flattened all outputs into the same presentation layer. Everything looked equally confident. That illusion forced humans to treat every clause as potentially risky.

Mira preserved disagreement in the record. It did not hide it behind a single probability score. The certificate verified the output, but it also reflected how smooth or contested the agreement was among independent evaluators.

That distinction turned out to matter more than marginal accuracy gains.

Compliance teams are not only concerned with whether a claim passes. They care about how robust that conclusion is under scrutiny. By surfacing validator alignment, the system provided something closer to audit-grade evidence.

The result was subtle but meaningful. Human review shifted from blanket oversight to targeted triage. Clean consensus moved through untouched. Ambiguous language received focused attention.

The model did not fundamentally change.

What changed was visibility.

And in a compliance environment, visibility into uncertainty is often more valuable than another decimal point of performance.
