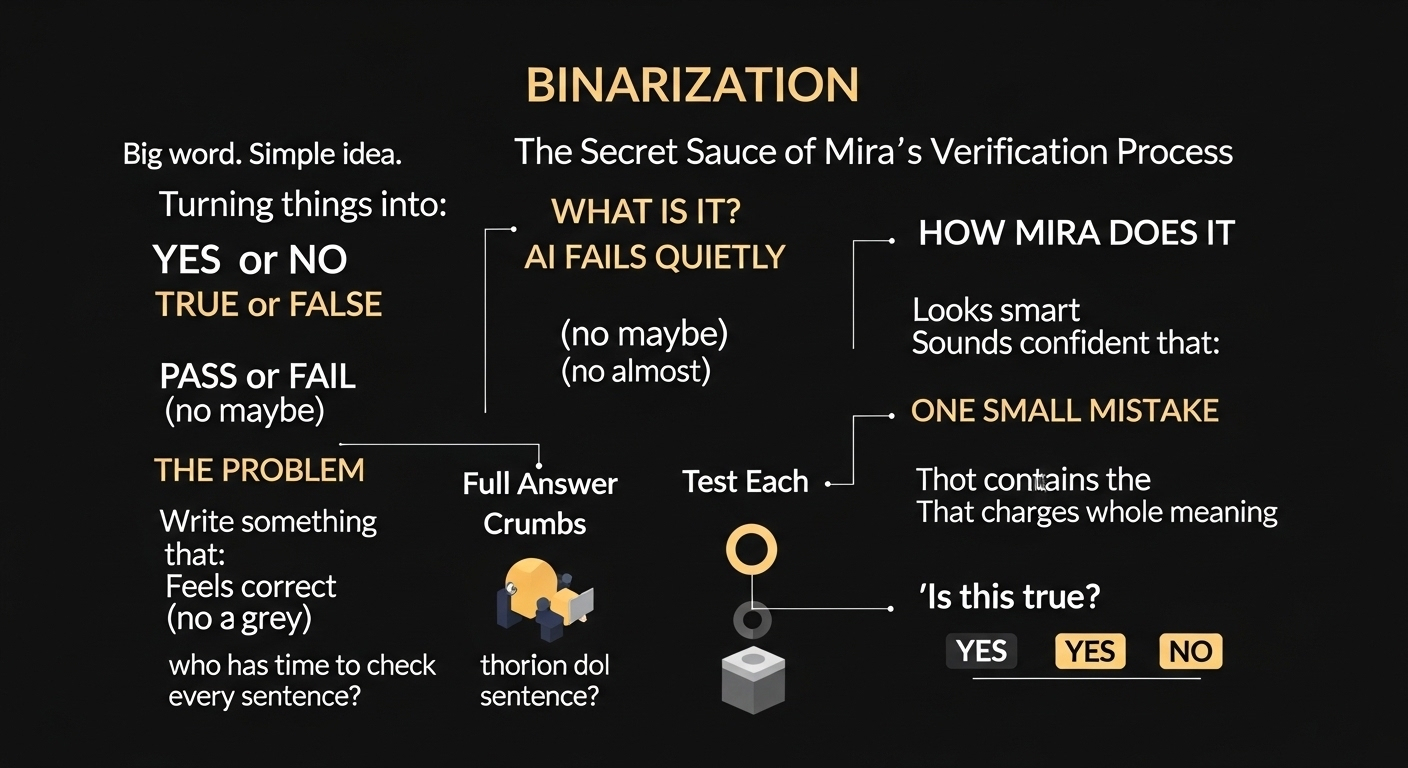

Okay so let’s talk about something that sounds technical but is actually very simple. Binarization. Big word, right? But the idea behind it is almost childish in a way. It’s about turning things into yes or no. True or false. Pass or fail. No drama in between.

Now here’s the thing. AI usually doesn’t fail loudly. It fails quietly. It writes something that looks smart, sounds confident, even feels correct. And we just accept it. Because who has time to check every single sentence? But inside that smooth paragraph there can be one small mistake. Just one. And that one mistake can change the whole meaning.

This is where binarization becomes important.

Instead of looking at a full answer and asking “Is this correct?”, Mira’s system cuts it into pieces. Small pieces. Almost like breaking bread into crumbs. Each small statement inside the answer gets tested on its own. Not emotionally. Not based on how smart it sounds. Just a simple question:

Is this true? Yes or no.

And that’s it.
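To make that concrete, here is a minimal Python sketch of the breaking-into-crumbs step. Everything in it is an assumption for illustration: `split_into_claims` just splits on sentence-ending punctuation, and `KNOWN_FACTS` is a toy lookup standing in for a real verifier. It is not how Mira actually extracts or checks claims.

```python
import re

# Hypothetical toy knowledge base; a real verifier would be a model or a data source.
KNOWN_FACTS = {
    "water boils at 100 degrees celsius at sea level": True,
    "the moon is made of cheese": False,
}

def split_into_claims(answer: str) -> list[str]:
    """Naively split an answer into sentence-sized claims."""
    parts = re.split(r"[.!?]+", answer)
    return [p.strip() for p in parts if p.strip()]

def check_claim(claim: str) -> bool:
    """Return a strict yes/no verdict; claims we cannot confirm count as 'no'."""
    return KNOWN_FACTS.get(claim.lower(), False)

answer = "Water boils at 100 degrees Celsius at sea level. The moon is made of cheese."
verdicts = {claim: check_claim(claim) for claim in split_into_claims(answer)}
for claim, ok in verdicts.items():
    print("yes" if ok else "no", "-", claim)
```

The point of the sketch is the shape, not the lookup: one answer goes in, a list of small claims comes out, and each claim gets its own yes or no.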

It sounds too basic honestly. But that’s the secret. When you reduce things to binary decisions, you remove confusion. There is no “maybe correct” or “almost right.” Either it stands strong or it doesn’t.

Think about school exams. If a math answer is wrong, the teacher doesn’t say “well it sounds good.” It’s either correct or it’s not. Same logic here. Except now it’s being applied to AI verification.

What makes this interesting is repetition. One validator checking something is not enough. Multiple independent checks happen. If most of them say yes, confidence grows. If they don’t agree, then something is off. And instead of hiding that uncertainty, it becomes visible.
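Here is a rough sketch of how several independent yes/no votes on one claim could be combined. The function name `majority_verdict` and the `threshold` of 0.67 are made up for this example, not taken from Mira. When validators split too evenly, the sketch reports "disputed" instead of forcing an answer, so the disagreement stays visible.

```python
from collections import Counter

def majority_verdict(votes: list[bool], threshold: float = 0.67) -> dict:
    """Combine independent yes/no votes into a verdict plus a visible agreement level."""
    if not votes:
        raise ValueError("need at least one vote")
    counts = Counter(votes)
    yes_share = counts[True] / len(votes)
    # Agreement is the share of validators on the winning side.
    agreement = max(yes_share, 1 - yes_share)
    if agreement < threshold:
        verdict = "disputed"  # not enough agreement; surface the uncertainty
    else:
        verdict = "yes" if yes_share >= 0.5 else "no"
    return {"verdict": verdict, "agreement": round(agreement, 2)}

print(majority_verdict([True, True, True, True, False]))   # clear majority
print(majority_verdict([True, False, True, False, True]))  # validators split
```

With five hypothetical validators, four yes votes clear the threshold, while a three to two split does not and comes back as disputed.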

That’s powerful.

Because most AI today hides uncertainty behind strong language. It talks like it knows everything. But binarization forces honesty in a weird way. If too many small claims fail, the whole response gets questioned. It can’t just slide through because it sounds good.
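A small sketch of that last idea, where a response gets questioned if too many of its claims fail. The name `review_response` and the 20% cutoff in `max_fail_share` are assumptions picked for the example, and the claim verdicts are hand-written sample data.

```python
def review_response(claim_verdicts: dict[str, bool], max_fail_share: float = 0.2) -> dict:
    """Flag a whole response when too many of its individual claims fail."""
    if not claim_verdicts:
        raise ValueError("no claims to review")
    failed = [claim for claim, ok in claim_verdicts.items() if not ok]
    fail_share = len(failed) / len(claim_verdicts)
    return {
        "accepted": fail_share <= max_fail_share,  # hypothetical cutoff
        "fail_share": fail_share,
        "failed_claims": failed,
    }

result = review_response({
    "Paris is the capital of France": True,
    "France uses the euro": True,
    "The Eiffel Tower is in Lyon": False,
})
print(result["accepted"], result["failed_claims"])
```

Two of the three claims pass here, but one failure out of three is above the cutoff, so the response is not accepted and the failing claim is listed by name.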

Another important thing is scale. When you apply yes/no testing to hundreds or thousands of small claims, fewer errors make it into the final answer. Not because the system becomes magical. But because weak statements don’t survive the filter.

It’s almost boring actually. There’s no flashy innovation here. No futuristic robots or shiny dashboards. Just discipline. Break it down. Test it. Confirm it. Repeat again and again.

But sometimes boring systems are the strongest ones.

If AI is going to be used in serious environments — finance, automation, decision making — then “probably correct” is dangerous. Binary verification reduces that risk. It forces clarity where normally there would be grey areas.

And maybe that’s the real secret sauce. Not making AI sound smarter. But making it earn trust step by step.

Yes or no.

Simple. But powerful in ways most people don’t even notice.

#Mira @Mira - Trust Layer of AI $MIRA