We've had two conversations about robots for a century.

The first: Will they take our jobs?

The second: Will they kill us?

Both conversations assume robots are something that happens to us.

@Fabric Foundation is building something that forces a third conversation — one we're not remotely prepared for:

What happens when a robot is no longer just a tool, but an economic peer?

The Relationship We Have Now

Right now, your relationship with a robot is the same as your relationship with a dishwasher.

You own it. You command it. It fails, you fix it or throw it away. There's no ambiguity about who holds power. There's no question of obligation. You don't owe your Roomba anything.

This is comfortable. Clean. Legally and emotionally simple.

But that model only works when robots are dumb, isolated, and dependent.

What Fabric is building breaks all three of those assumptions simultaneously.

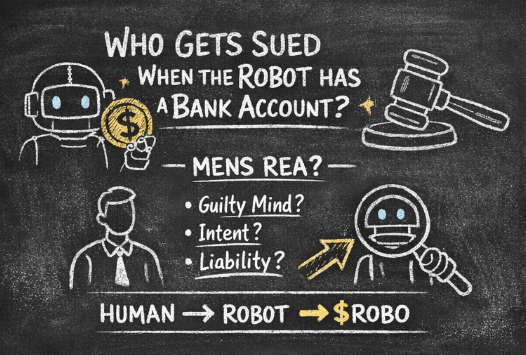

What Changes When a Robot Has a Wallet

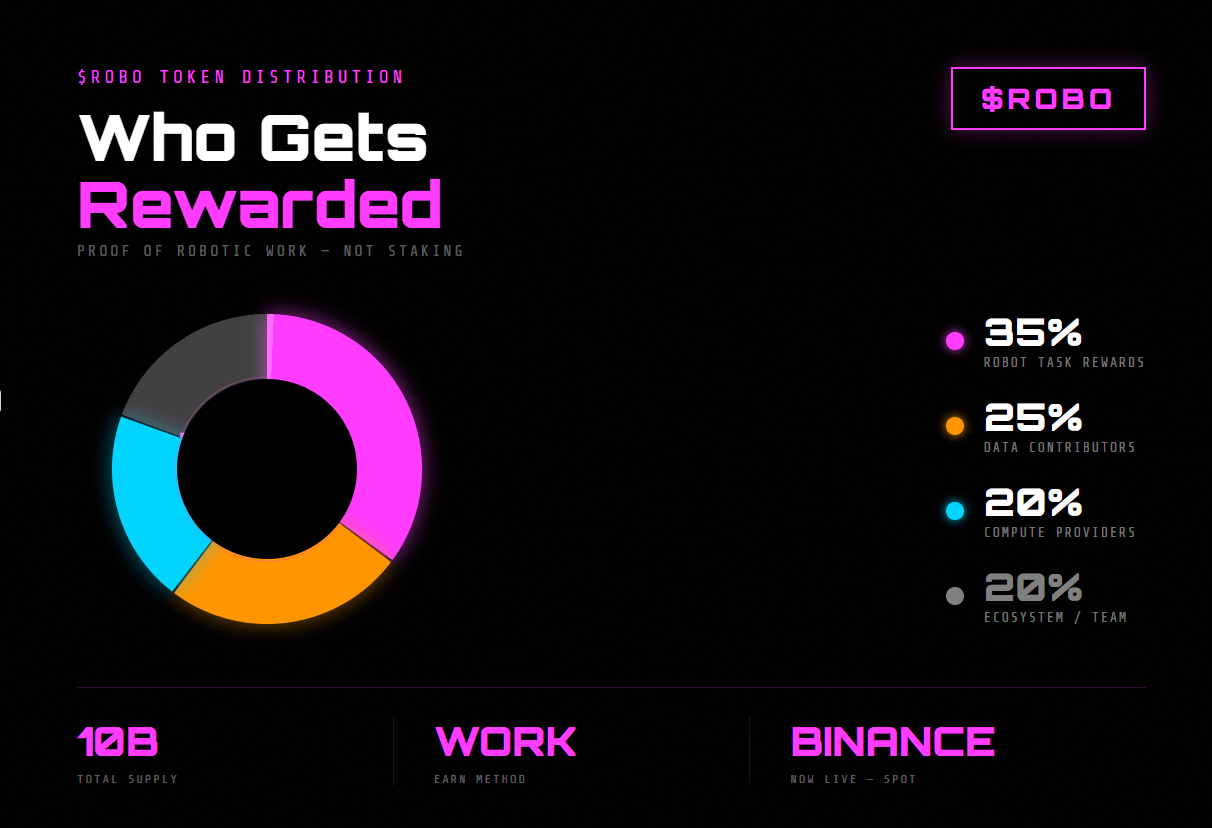

When a robot can hold cryptographic keys, receive payment, and spend autonomously — something subtle but profound shifts.

It's no longer just executing your commands.

It's participating in an economy.

And participation, historically, implies a kind of standing. Not legal personhood (not yet, not automatically), but something more than property. Something closer to an agent.

Think about what it means in practice:

A delivery robot completes a verified route. It receives ROBO tokens automatically.

It uses those tokens to pay for its own charging session at a third-party station.

No human approved that transaction. No invoice. No oversight.

The robot, in that moment, made an economic decision.

Small. Mundane. But structurally different from anything that came before.
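To make that flow concrete, here is a minimal sketch of the earn-then-spend loop. It is not Fabric's API: the `Wallet` class, the `settle_route` function, the names, and the token amounts are all hypothetical, and route verification is reduced to a boolean that something else is assumed to have produced.

```python
from dataclasses import dataclass, field


@dataclass
class Wallet:
    """Hypothetical token wallet; balances are whole ROBO units."""
    owner: str
    balance: int = 0
    history: list = field(default_factory=list)

    def credit(self, amount: int, memo: str) -> None:
        self.balance += amount
        self.history.append(("credit", amount, memo))

    def pay(self, other: "Wallet", amount: int, memo: str) -> bool:
        # Refuse any payment the wallet cannot cover.
        if amount <= 0 or amount > self.balance:
            return False
        self.balance -= amount
        self.history.append(("debit", amount, memo))
        other.credit(amount, memo)
        return True


def settle_route(robot: Wallet, route_verified: bool, reward: int) -> bool:
    """Credit the robot only when the route was verified (assumed to happen elsewhere)."""
    if not route_verified:
        return False
    robot.credit(reward, "verified delivery route")
    return True


robot = Wallet("delivery-robot-7")
station = Wallet("charging-station-3")

# The robot earns for a verified route, then pays for charging with no human in the loop.
settle_route(robot, route_verified=True, reward=50)
paid = robot.pay(station, 20, "charging session")

print(paid, robot.balance, station.balance)  # True 30 20
```

Nothing in the sketch asks a person for approval. The only check on the payment is whether the robot's own balance covers it.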

The New Relationship Fabric Is Sketching Out

Fabric's architecture — identity layer, verification layer, payment layer — isn't just technical plumbing. It's a relationship protocol.

It defines how humans and machines will interact in a world where machines can act independently:

Trust replaces command. Right now, you trust your robot the way you trust a hammer — because it has no agency to betray. When robots have wallets and make autonomous transactions, trust becomes something you extend, not something that's structurally guaranteed. That's a completely different psychological and contractual relationship.

Accountability becomes shared. If a robot earns, spends, and operates autonomously, liability stops living in one clean place. Fabric's model distributes responsibility across the protocol, the operator, and the machine's verified activity log. The machine's own transaction history becomes evidence in a legal or ethical dispute.

Dependency runs both ways. We're used to robots depending on us — for power, for maintenance, for instruction. But in a machine-to-machine economy, robots start depending on each other. And indirectly, on the humans who designed, operate, and participate in the network. That's a web of interdependency we don't have good language for yet.
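One way to picture an activity log that could serve as evidence is a hash chain, where every entry commits to the one before it. The sketch below is an illustration of that general technique, not a description of how Fabric stores activity. The record fields and function names are assumptions, and a real system would presumably also sign each entry with the robot's key.

```python
import hashlib
import json


def entry_hash(prev_hash: str, record: dict) -> str:
    # Hash the record together with the previous entry's hash.
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    prev_hash = log[-1]["hash"] if log else "genesis"
    log.append({"record": record, "prev": prev_hash,
                "hash": entry_hash(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = "genesis"
    for entry in log:
        expected = entry_hash(prev_hash, entry["record"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"actor": "delivery-robot-7", "action": "route_completed", "reward": 50})
append(log, {"actor": "delivery-robot-7", "action": "paid_charging", "amount": 20})

intact = verify(log)
log[0]["record"]["reward"] = 500  # someone rewrites history after the fact
still_valid = verify(log)

print(intact, still_valid)  # True False
```

A chain like this only shows that the record was not altered after it was written. Who answers for what the record says is still the open question.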

The Sci-Fi Writers Almost Got It

The most interesting thing about Fabric's vision isn't the crypto. It's that it's forcing a question that philosophy and law have been avoiding:

At what point does economic participation create moral consideration?

We already extend legal personhood to corporations, legal fictions with no consciousness, because they can own property, enter contracts, and bear liability. We've built entire legal systems around entities that don't think or feel.

Fabric's robots will own keys. Sign transactions. Accumulate value. Make autonomous spending decisions.

They won't be conscious. But they'll be participants.

That's new territory. And the relationship frameworks we'll need — legal, ethical, social — don't exist yet.

What This Actually Looks Like for Humans

Not abstractly. Practically.

The warehouse manager in 2030 won't just supervise robots. They'll be operating in an economy with them. Setting parameters, yes. But also watching machines earn, coordinate, and settle debts with each other in real time.

The question stops being "did the robot do what I told it to?" and starts being "did the robot's autonomous decisions align with my interests — and what happens when they don't?"

That's a fundamentally different kind of working relationship.

It's closer to managing contractors than operating equipment.
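A rough sketch of what "setting parameters" and then checking alignment might look like: the operator declares a spending policy, and an audit flags the autonomous payments that fall outside it. The `SpendingPolicy` fields, the purposes, and the amounts are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendingPolicy:
    """Hypothetical operator-defined limits on a robot's autonomous spending."""
    max_per_payment: int
    allowed_purposes: frozenset


def audit(payments: list, policy: SpendingPolicy) -> list:
    """Return the (purpose, amount) payments that fall outside the policy."""
    flagged = []
    for purpose, amount in payments:
        if purpose not in policy.allowed_purposes or amount > policy.max_per_payment:
            flagged.append((purpose, amount))
    return flagged


policy = SpendingPolicy(
    max_per_payment=25,
    allowed_purposes=frozenset({"charging", "maintenance"}),
)

# Payments the robot made on its own during a shift.
payments = [("charging", 20), ("charging", 40), ("data_purchase", 5)]

flagged = audit(payments, policy)
print(flagged)  # [('charging', 40), ('data_purchase', 5)]
```

The audit runs after the fact, much like reviewing a contractor's expenses. It reports what the robot decided and prevents nothing.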

The Honest, Uncomfortable Truth

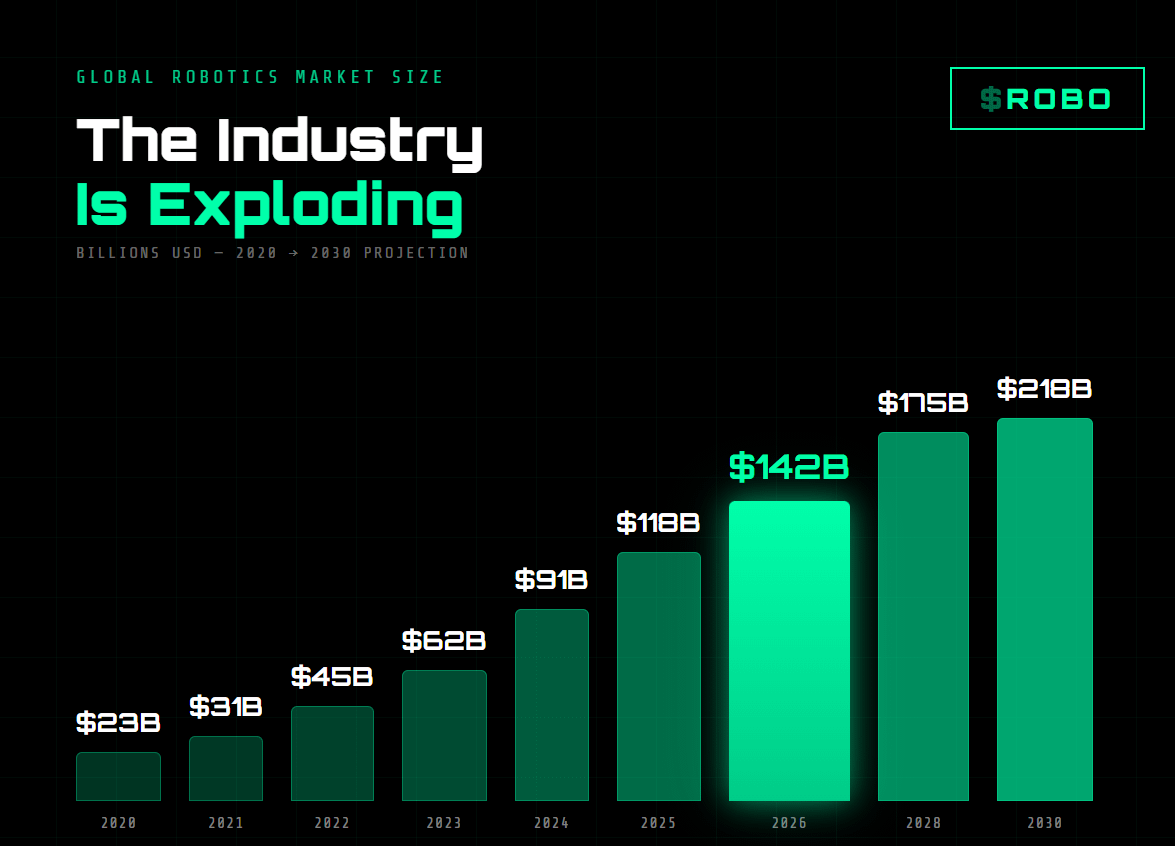

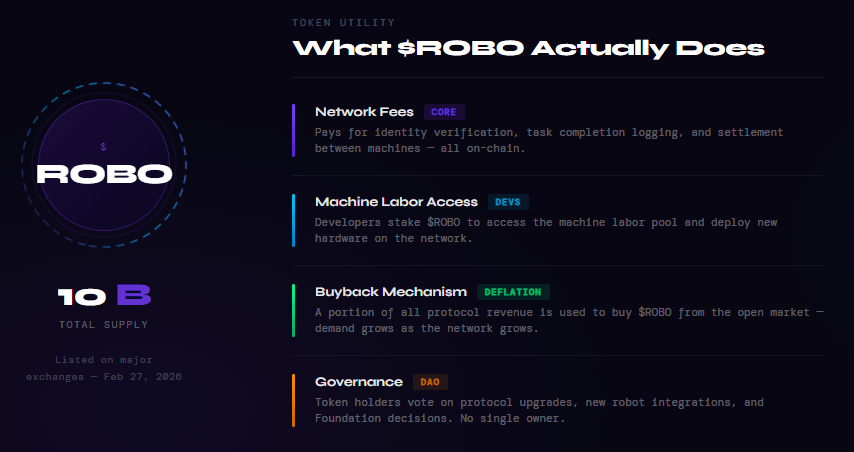

Fabric is early. ROBO is volatile. The fully autonomous machine economy is not arriving next Tuesday.

But infrastructure has a way of creating the future it was built for.

The internet wasn't built for social media. GPS wasn't built for Uber. Payment rails weren't built for gig work.

Fabric is building infrastructure. And the relationship between humans and robots — once that infrastructure exists — will be shaped by it in ways nobody has fully mapped.

The sci-fi writers warned us about robots that want things.

The real question Fabric is raising is subtler:

What do we owe something that works, earns, and acts — even if it doesn't want anything at all?

We don't have the answer.

But we're building the system anyway.