I keep hearing the word autonomous in agent discussions. Most of the time the teams using the label have quietly added a second confirmation step behind the scenes. Not because they distrust AI. Because nobody wants to ship a wrong action with confidence and no rollback.

@Mira - Trust Layer of AI is building the infrastructure to replace those hidden steps with protocol-level verification. The approach deserves a close look.

The Ladder Nobody Announces

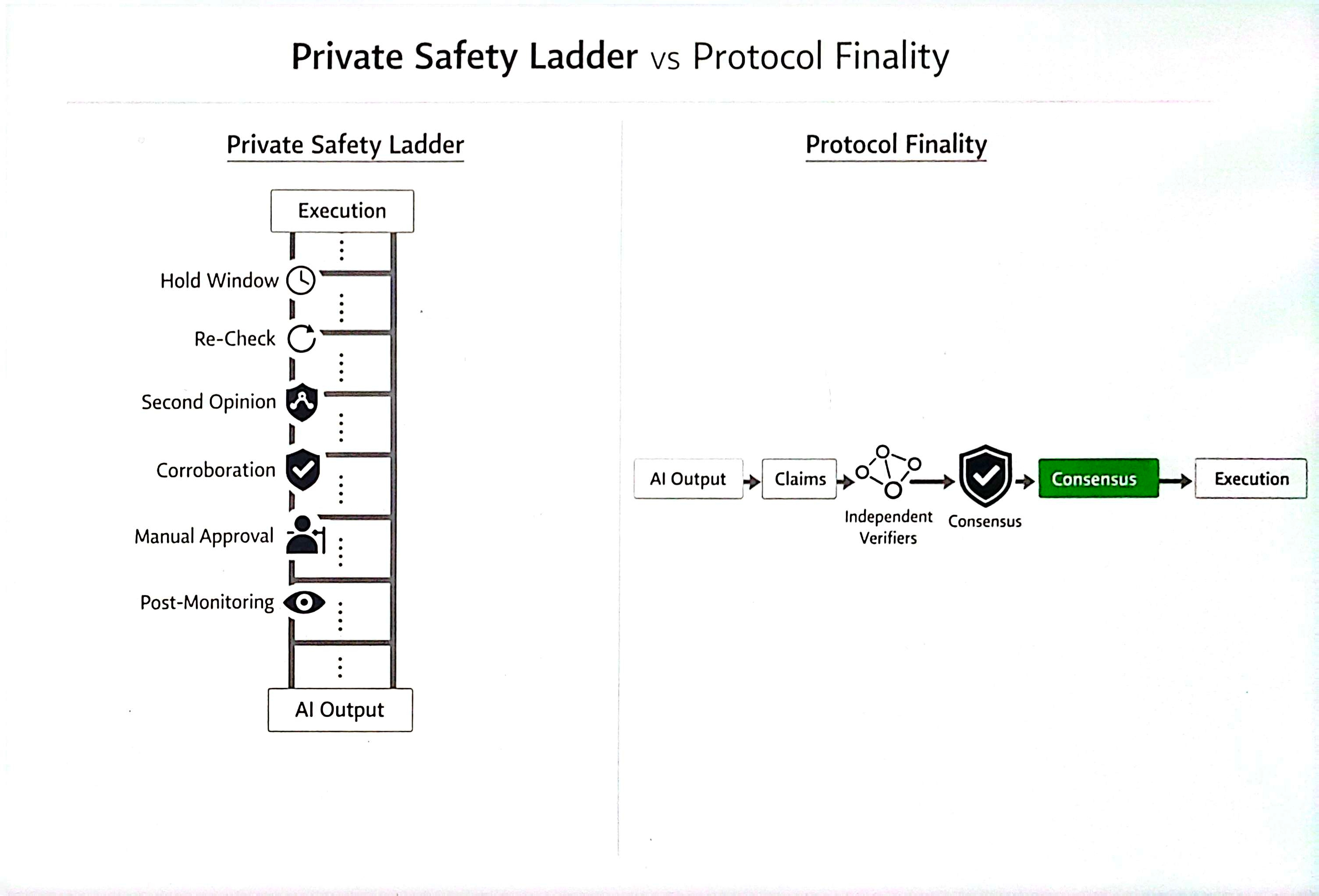

In production systems, "autonomous" often means the model generated the output, the UI felt complete, and the team inserted a re-check before execution. A hold window. A second model. A manual gate for anything involving money or permissions. None of this gets announced. Teams call this reliability work. What they are confessing: a verified output was still not trusted enough to execute, so supervision stayed.

This is the operational problem Mira targets. Not model quality. Coordination.
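To make the hidden ladder concrete, here is a minimal sketch of the kind of private gate a team might put in front of an agent's actions. Every name in it (`Action`, `SENSITIVE_KINDS`, `gate`) is hypothetical and chosen for illustration. It is not taken from any specific product or from Mira.

```python
from dataclasses import dataclass


@dataclass
class Action:
    """A hypothetical agent action waiting to be executed."""
    kind: str
    description: str = ""


# Assumption: the team treats money and permissions as always needing a human.
SENSITIVE_KINDS = {"payment", "permission_change"}


def gate(action: Action, second_model_agrees: bool) -> str:
    """Return the step a privately supervised pipeline would take.

    - "manual_review": sensitive actions always go to a person.
    - "hold": the second model disagreed, so the action waits.
    - "execute": nothing objected, so the action runs.
    """
    if action.kind in SENSITIVE_KINDS:
        return "manual_review"
    if not second_model_agrees:
        return "hold"
    return "execute"


decision = gate(Action(kind="payment", description="refund order"), second_model_agrees=True)
print(decision)  # manual_review
```

Each branch is a rung nobody announces. The question the rest of this post asks is whether a shared protocol can make these rungs unnecessary.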

How Mira Structures Finality Instead of Guessing

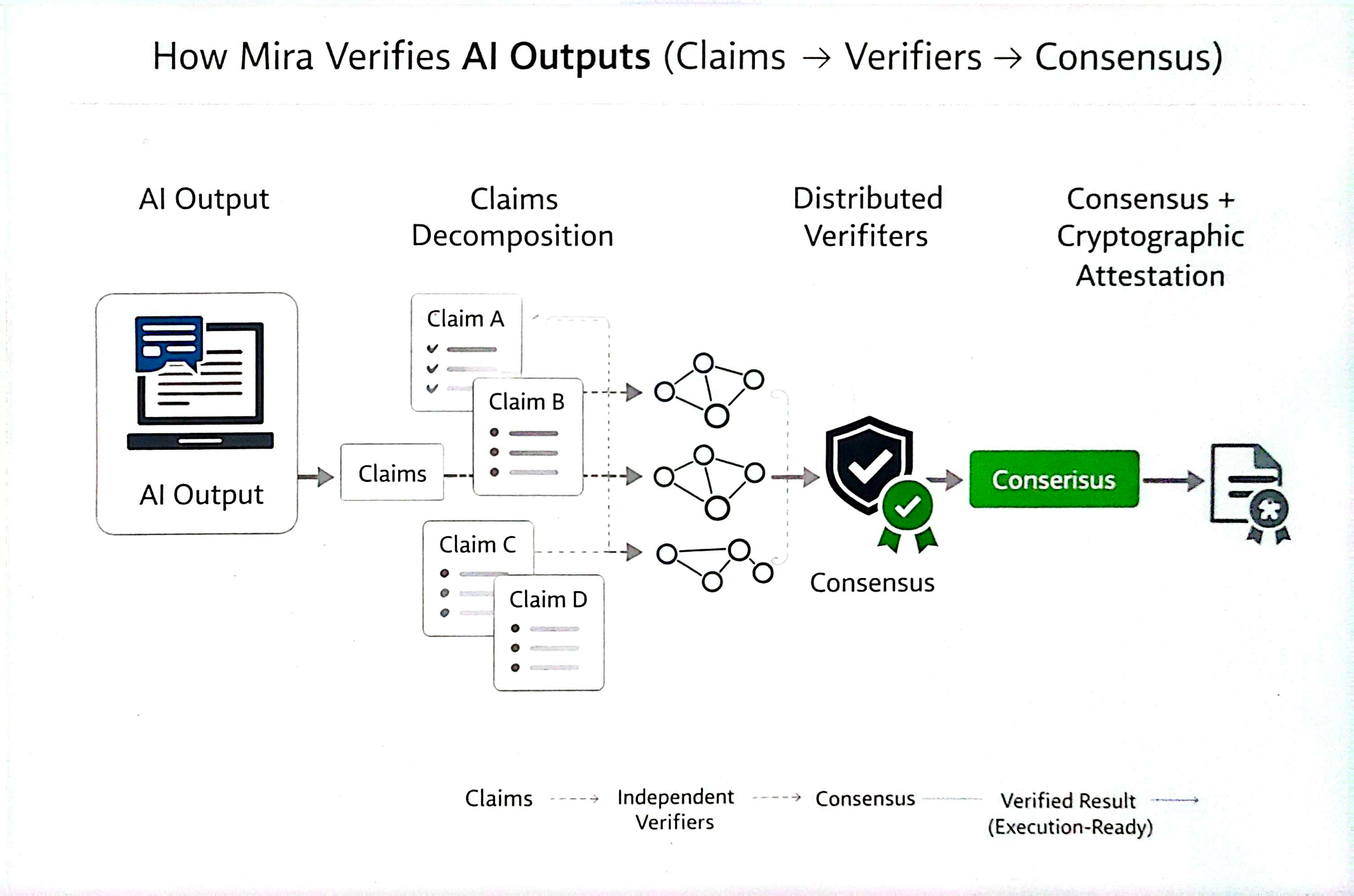

Mira's whitepaper describes a network where complex AI output gets broken into independently verifiable statements. Those statements travel to distributed verifier nodes running diverse models. Results aggregate through consensus and the network returns a cryptographic certificate. Users specify requirements including consensus thresholds like N-of-M agreement.

The claim here is structural: instead of asking one more model for a second opinion, Mira builds shared rules for when an answer becomes final. The default alternative most teams use is a private re-check ritual with no guarantees. Mira replaces the ritual with a protocol.
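The flow described in this section (split output into statements, send them to several verifiers, apply an N-of-M threshold, return a certificate) can be sketched in a few lines. This is a toy illustration under stated assumptions, not Mira's API. The verifiers are plain local functions rather than distributed nodes, the names `verify_claims` and `certificate_digest` are hypothetical, and the "certificate" is only a SHA-256 digest of the results. A real certificate would presumably involve signatures.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical verifier: takes one statement and returns True if it approves it.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    accepted: bool


def verify_claims(claims: List[str], verifiers: List[Verifier], n_required: int) -> List[ClaimResult]:
    """Apply an N-of-M rule: a claim is accepted when at least n_required verifiers approve."""
    if not 1 <= n_required <= len(verifiers):
        raise ValueError("n_required must be between 1 and the number of verifiers")
    results = []
    for claim in claims:
        approvals = sum(1 for verify in verifiers if verify(claim))
        results.append(ClaimResult(claim, approvals, len(verifiers), approvals >= n_required))
    return results


def certificate_digest(results: List[ClaimResult]) -> str:
    """Stand-in for a certificate: a deterministic hash over the per-claim results."""
    payload = json.dumps([[r.claim, r.approvals, r.total, r.accepted] for r in results])
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Toy verifiers standing in for diverse models (assumption for the demo only).
verifiers = [
    lambda claim: True,              # approves everything
    lambda claim: "sky" in claim,    # approves claims that mention the sky
    lambda claim: len(claim) < 20,   # approves short claims
]
claims = ["the sky is blue", "water flows uphill in all cases"]

results = verify_claims(claims, verifiers, n_required=2)
digest = certificate_digest(results)
for r in results:
    print(r.claim, r.approvals, "of", r.total, "accepted" if r.accepted else "rejected")
```

The point of the sketch is the shape of the rule: the threshold is stated up front and applied the same way to every claim, which is what separates a protocol from an ad hoc second opinion.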

Why the Economics Are the Red Line

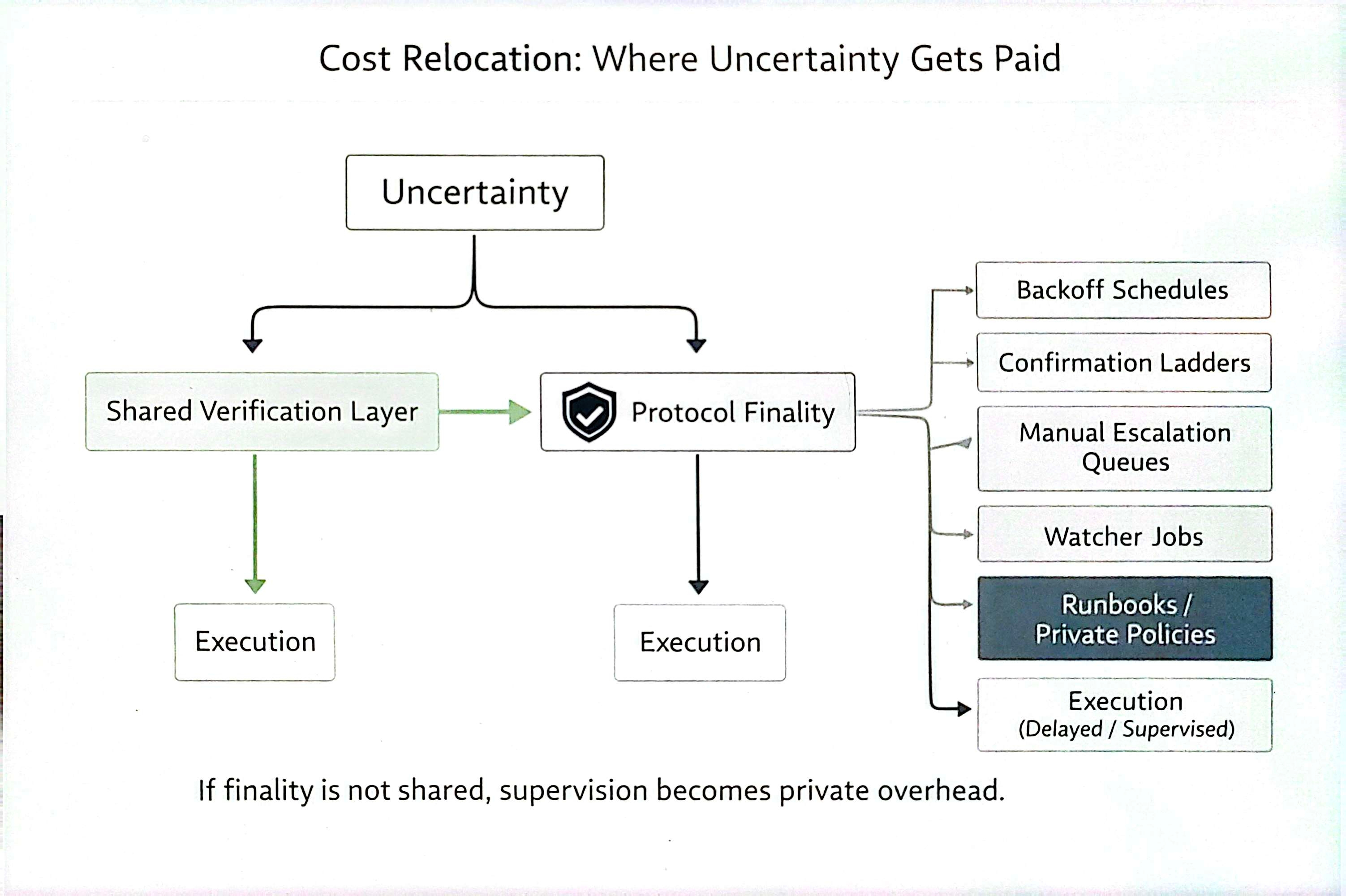

Verification capacity needs to exist when the network is busy. If verifiers lack incentive alignment, the cost leaks back into the application layer as private reviewer queues. The same ladder rebuilt off-chain.

Mira positions the $MIRA token as infrastructure for participation in verification, governance, and API access payments, with staking tied to node operator roles. The reward structure ties economic returns to honest behavior and slashing to dishonest output. The network needs enough funded verification capacity at all times, including peak load, to keep integrators from building private workarounds.
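A rough sketch of how staking, rewards, and slashing could fit together in a single verification round follows. The numbers and names (`Node`, `settle_round`, `slash_bps`) are hypothetical, and treating disagreement with consensus as a proxy for dishonest output is a simplifying assumption made here, not a description of Mira's actual rules.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    """A hypothetical verifier node with stake in integer token units."""
    name: str
    stake: int


def settle_round(nodes: List[Node], votes: Dict[str, bool], consensus: bool,
                 reward: int, slash_bps: int) -> None:
    """Reward nodes that matched consensus; slash a share of stake from the rest.

    slash_bps is the slashed share in basis points (1000 = 10%).
    """
    for node in nodes:
        if votes[node.name] == consensus:
            node.stake += reward
        else:
            node.stake -= node.stake * slash_bps // 10_000


nodes = [Node("a", 100), Node("b", 100)]
settle_round(nodes, votes={"a": True, "b": False}, consensus=True, reward=1, slash_bps=1000)
print([(n.name, n.stake) for n in nodes])  # [('a', 101), ('b', 90)]
```

Even in this toy form, the asymmetry is visible: a small steady reward for agreement and a larger loss for deviation. Whether that keeps enough capacity online under load is the open economic question.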

This is where most AI reliability wrappers fall short. They treat trust as a prompt engineering problem. Mira treats trust as a coordination problem with incentives. The difference is fundamental.

The Test for Autonomy Is Not Accuracy

The right question about #Mira is not whether the network produces a verification result. The right question is whether integrators stop writing private safety policies around the result. Do teams still require a second confirmation outside Mira? Do hold windows remain normal?

If yes, supervision is still winning. If no, Mira has removed the need for private safety ladders.

I want to learn what happens under real production load. The challenge is proving that the guarantees hold under adversarial conditions.

Why I Am Constructive on This Approach

Mira forces the industry to stop confusing fluency with finality. Verification-first systems add friction and demand structure. But in high-stakes automation, friction is the cost of turning "looks correct" into "safe enough to execute."

The competition in this space will not come down to who generates the cleanest answer, and attention will keep shifting between projects regardless. The winners will be whoever makes finality cheap enough to eliminate the hidden confirmation box every serious team builds. Each unit of trust needs to be auditable, not assumed. Mira is one of the few projects working to make verification a shared layer.

The conversation in crypto AI circles keeps returning to trust infrastructure. The day the private safety ladder disappears is when autonomy stops being a marketing claim and starts being operational reality. I think Mira is working on the right problem.