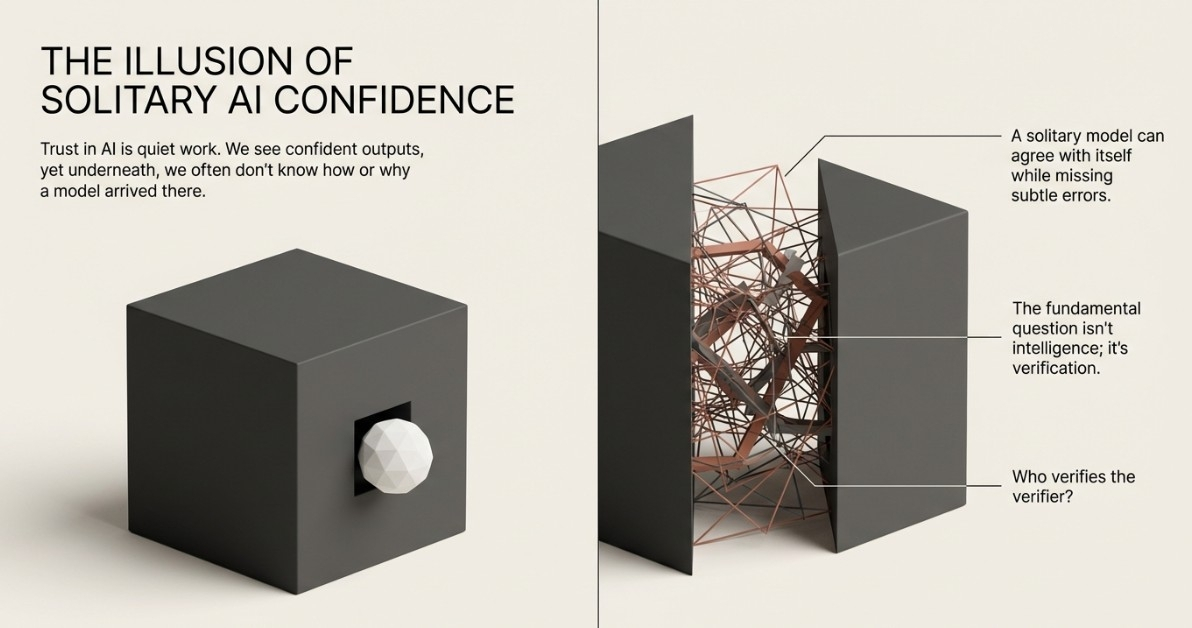

Trust in AI is quiet work. We see confident outputs, yet underneath, we often don’t know how or why a model arrived there. A single model can agree with itself while missing subtle errors. The real question isn’t intelligence; it’s verification. Who verifies the verifier?

Most AI today works alone. One model produces an answer, and users must accept it or challenge it. Mistakes can propagate quietly because there is no structured way to respond. Trust becomes reputation rather than something measurable.

Watching a verification network reveals subtle shifts. Participants hesitate before agreement. Bold claims are broken into smaller verifiable pieces. Language grows careful. Trust is earned slowly, through repeated cycles of verification, rather than declared.

Influence forms in small ways. Some participants gain weight because their judgment is consistent. Others adjust their behavior around those signals. No one announces leadership. The network organizes around steady reliability rather than position.
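One way to picture how consistent judgment could turn into weight is a reliability-weighted vote. The sketch below is purely illustrative and is not MIRA's actual mechanism: the function names, the smoothing rule, and the 0.5 threshold are all assumptions made for the example.

```python
def reliability(history: list[bool]) -> float:
    """Hypothetical score: share of past verdicts that matched the final outcome.

    Laplace smoothing (+1 / +2) keeps new participants at a neutral 0.5.
    """
    return (sum(history) + 1) / (len(history) + 2)


def weighted_verdict(votes: dict[str, bool], histories: dict[str, list[bool]]) -> bool:
    """Accept a claim when reliability-weighted support exceeds half the total weight."""
    if not votes:
        return False
    weights = {name: reliability(histories.get(name, [])) for name in votes}
    support = sum(w for name, w in weights.items() if votes[name])
    return support / sum(weights.values()) > 0.5


# Assumed example data: "a" has been consistently right, "b" and "c" have not.
histories = {"a": [True] * 8, "b": [False] * 8, "c": [False] * 8}
votes = {"a": True, "b": False, "c": False}
accepted = weighted_verdict(votes, histories)
```

In this toy setup the one consistent participant outweighs two unreliable ones, which mirrors the idea that influence follows steady reliability rather than position or headcount.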

There is tension in this process. Consensus reduces risk, but participants anticipate disagreement. They think about the cost of being wrong. Decisions are shaped by what others might observe. The texture of the network changes gradually under pressure.

Transparency is another quiet benefit. Every claim shows who supported it and who challenged it. The audit trail is clear, unlike a single model’s hidden confidence scores. Trust becomes visible rather than assumed.
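As a rough illustration of what such an audit trail might look like, the sketch below records supporters and challengers per claim. The `Claim` class, its fields, and the verifier names are hypothetical, chosen only to make the idea concrete; they do not describe MIRA's real data model.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """Hypothetical record of one claim and who weighed in on it."""
    text: str
    supporters: list[str] = field(default_factory=list)
    challengers: list[str] = field(default_factory=list)

    def record(self, verifier: str, agrees: bool) -> None:
        # Every verdict is stored under the verifier's name, so nothing is hidden.
        side = self.supporters if agrees else self.challengers
        side.append(verifier)

    def summary(self) -> str:
        return (f"{self.text!r}: supported by {self.supporters}, "
                f"challenged by {self.challengers}")


# Assumed example data.
claim = Claim("The report was published in 2021")
claim.record("verifier-a", agrees=True)
claim.record("verifier-b", agrees=True)
claim.record("verifier-c", agrees=False)
print(claim.summary())
```

The point of the structure is that disagreement is kept alongside agreement, so a reader can see who took which side instead of a single opaque score.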

Errors still happen. Distributed consensus does not remove uncertainty. What it does is create a structure where disagreement has a place. Mistakes are less likely to linger unnoticed because the network itself can contest them.

In the end, MIRA is exploring a different foundation for AI trust. Truth is not imposed.