@Mira - Trust Layer of AI #Mira

For years the conversation around artificial intelligence has focused almost entirely on capability: bigger models, faster inference, more data, and increasingly impressive outputs that appear, at least on the surface, to approximate human reasoning. Yet beneath this rapid progress lies a quieter and more difficult question that the industry has only recently begun to confront seriously: how do we determine when an AI system is actually trustworthy? Not simply convincing, not merely confident, but reliable in a way that institutions, markets, and critical infrastructure can depend on without hesitation.

The challenge exists because modern AI systems do not produce knowledge in the traditional sense; they generate probabilities shaped by patterns in their training data. A model may sound authoritative while quietly fabricating a citation, misreading a regulatory clause, or combining fragments of information into something that appears logical but rests on unstable foundations. These failures rarely appear dramatic. Instead, they manifest as subtle distortions that pass unnoticed until their consequences surface in financial reports, research summaries, or automated decisions that rely on the model’s output as if it were verified fact.

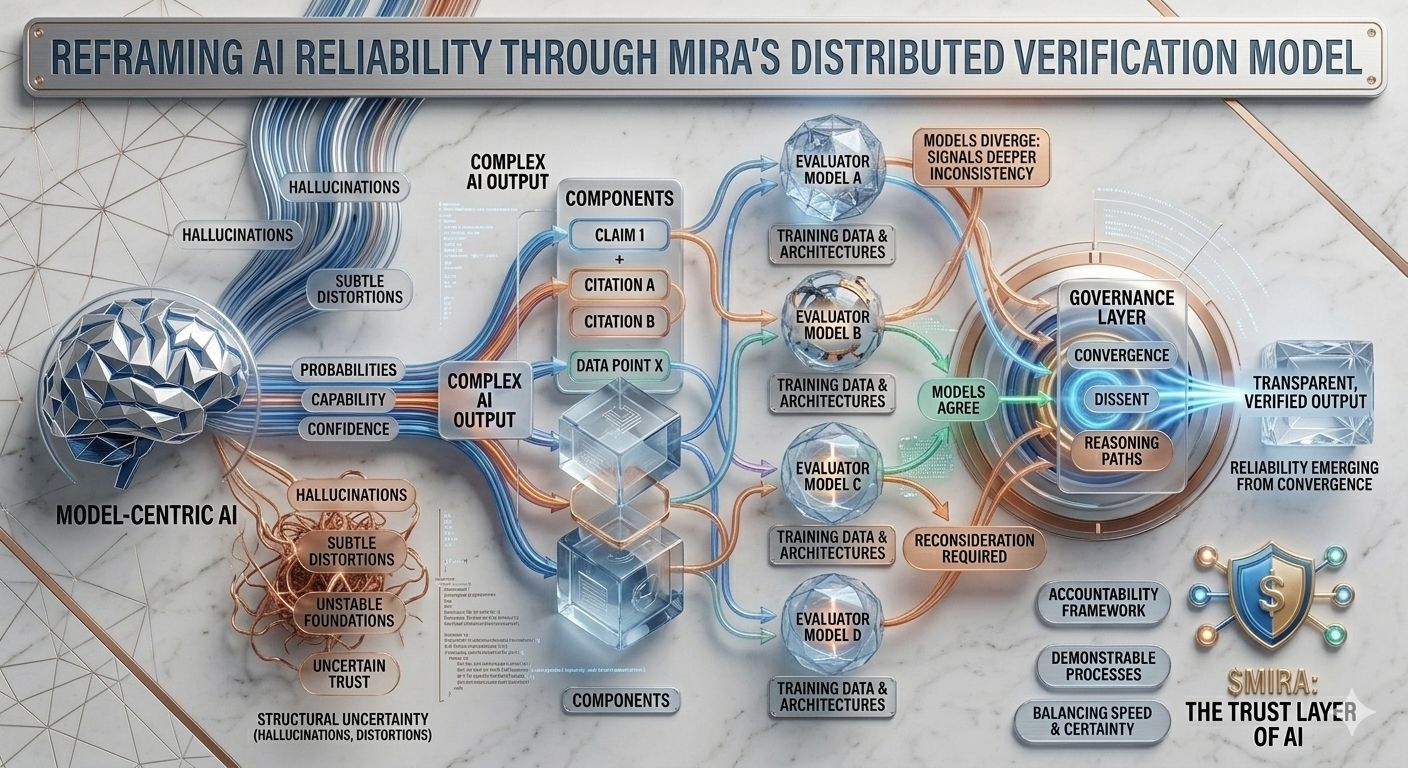

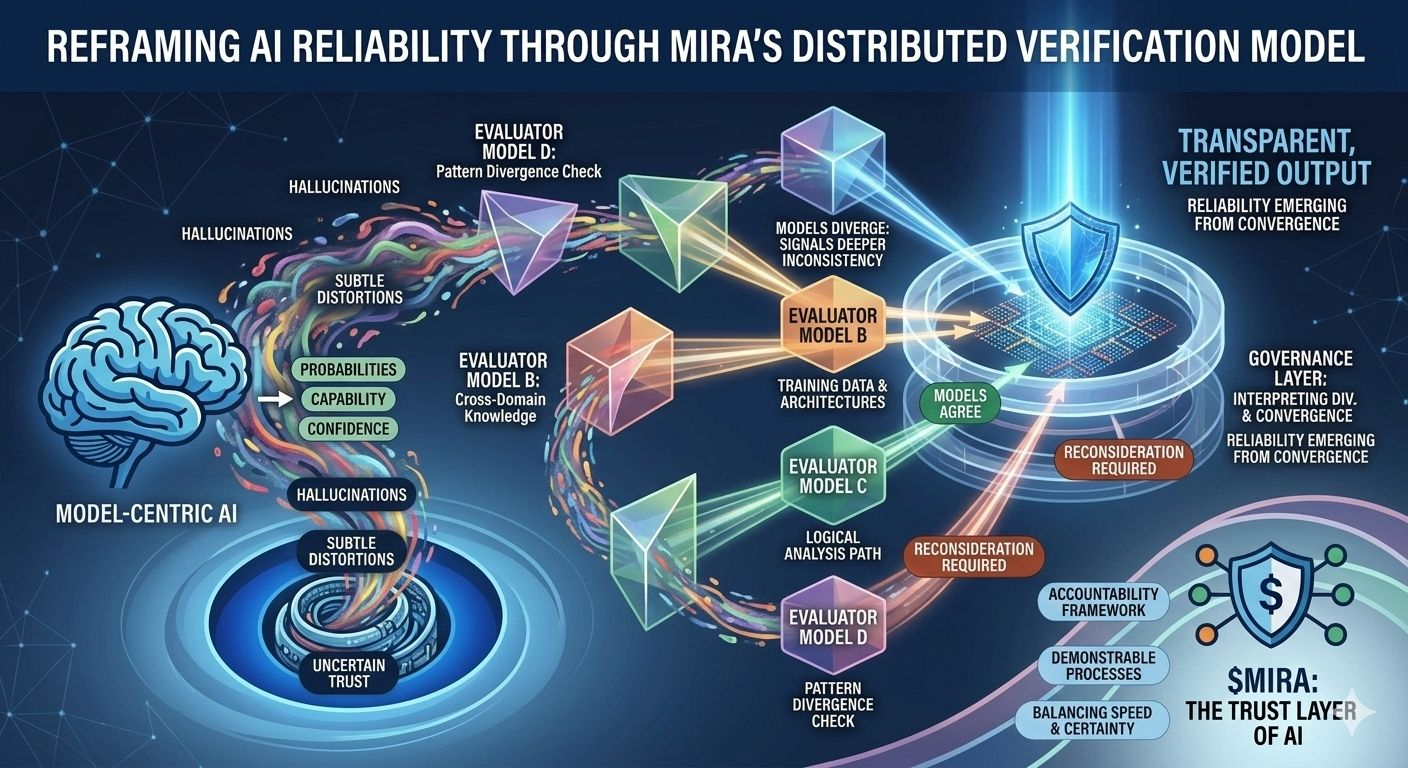

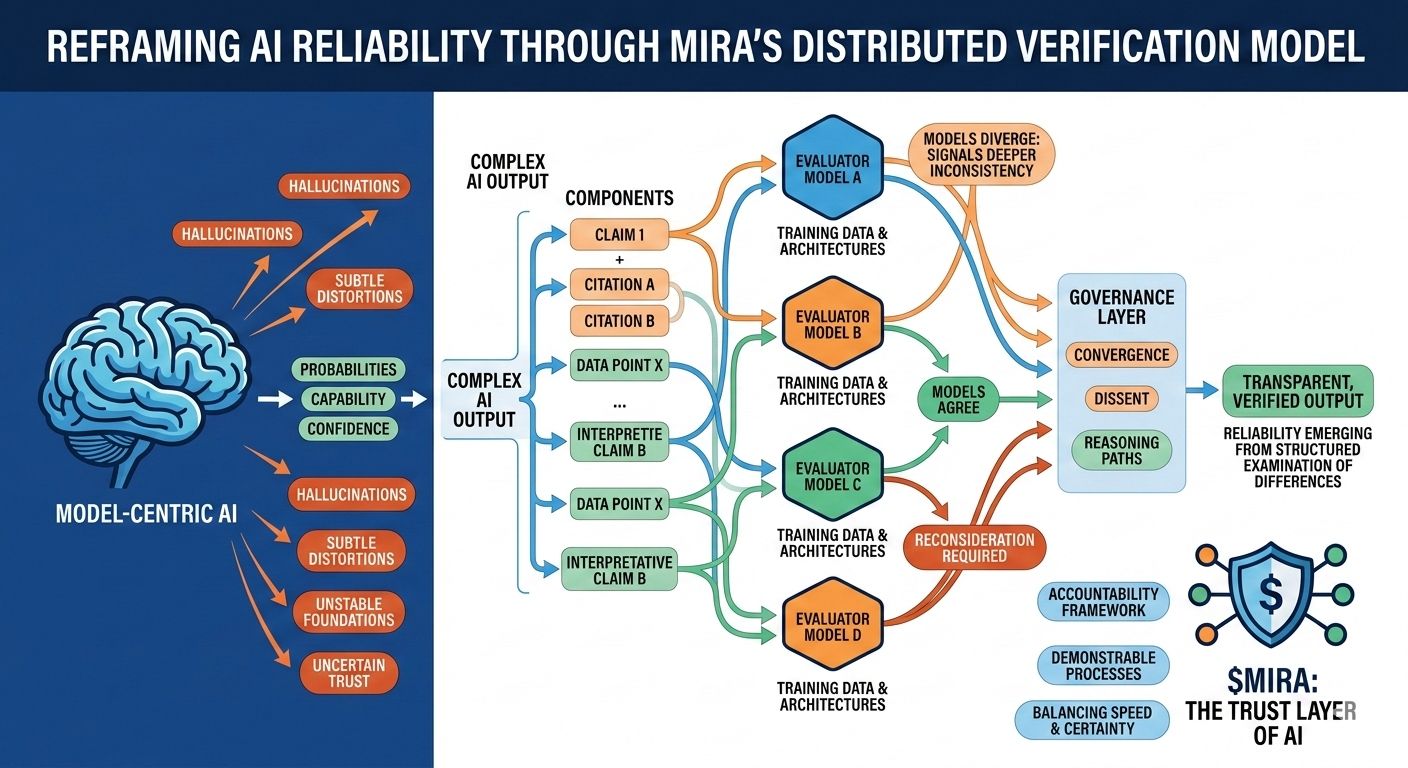

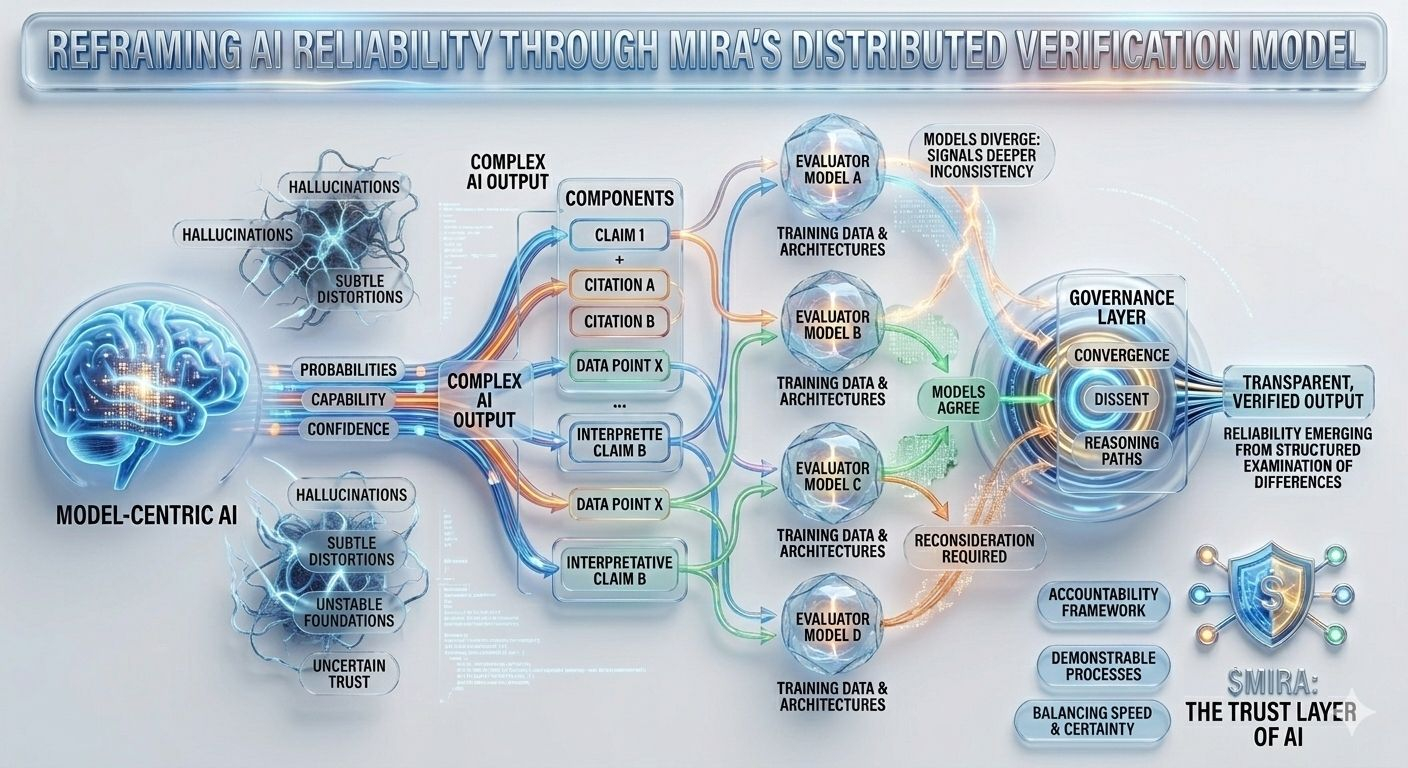

This structural uncertainty is precisely the problem that Mira attempts to address, not by demanding perfection from a single model but by rethinking the entire process through which AI answers are produced and validated. In Mira’s architecture, an AI output is treated less like a finished conclusion and more like a hypothesis entering a verification pipeline. Instead of trusting the reasoning path of one model, the system distributes evaluation across multiple independent models that examine the same claim from different perspectives, each shaped by distinct training corpora, architectures, and internal biases.

What makes this approach particularly interesting is that the objective is not blind agreement between models. Simple majority voting would offer only superficial reassurance, since models trained on overlapping data often inherit similar assumptions and blind spots. Mira’s governance framework instead focuses on interpreting how models agree, where they diverge, and whether disagreement signals a deeper inconsistency within the claim itself. In other words, reliability emerges not from uniform answers but from the structured examination of differences in reasoning.
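The difference between counting votes and interpreting agreement can be sketched in a few lines of Python. This is an illustration only, not Mira’s actual mechanism: the `Verdict` type, the `family` label (a stand-in for shared training data or architecture), and the rule of one vote per family are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    model: str      # hypothetical verifier name
    family: str     # hypothetical lineage label (shared data or architecture)
    supports: bool  # True if this verifier judged the claim to hold


def summarize(verdicts: list[Verdict]) -> dict:
    """Collapse verdicts to one vote per family, then describe the agreement."""
    if not verdicts:
        raise ValueError("at least one verdict is required")

    by_family: dict[str, list[bool]] = defaultdict(list)
    for v in verdicts:
        by_family[v.family].append(v.supports)

    # Assumed rule: a family supports the claim only if all its members do.
    family_votes = {fam: all(votes) for fam, votes in by_family.items()}

    return {
        "raw_support": sum(v.supports for v in verdicts) / len(verdicts),
        "independent_support": sum(family_votes.values()) / len(family_votes),
        "dissenting_families": sorted(
            fam for fam, ok in family_votes.items() if not ok
        ),
    }


verdicts = [
    Verdict("model-a1", "family-a", True),
    Verdict("model-a2", "family-a", True),
    Verdict("model-a3", "family-a", True),
    Verdict("model-b1", "family-b", False),
]
summary = summarize(verdicts)
print(summary)
```

In this toy case a plain majority vote reports 75% support, while counting one vote per family shows an even split and names the dissenting family. That is the kind of signal a simple tally would hide.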

To make this possible, complex AI outputs must first be broken into smaller, verifiable components. A generated research summary becomes a series of traceable statements; a legal explanation turns into a sequence of interpretive claims; a financial analysis separates into quantifiable assertions that can be cross-checked independently. Each of these fragments can then be evaluated by separate models, allowing the system to map not just whether the overall response appears correct but which specific elements withstand scrutiny and which require reconsideration.
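A rough sketch of that decomposition step, under loud assumptions: claims are approximated by splitting on sentence boundaries, and the "verifiers" are toy functions standing in for calls to separate models. None of the names below come from Mira; they are hypothetical and exist only to show the shape of a per-claim report.

```python
import re
from typing import Callable

# A verifier is any function that takes a claim and returns True if it holds up.
Verifier = Callable[[str], bool]


def split_into_claims(text: str) -> list[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def verify_claims(text: str, verifiers: dict[str, Verifier]) -> list[dict]:
    """Evaluate every claim with every verifier and record the results."""
    report = []
    for claim in split_into_claims(text):
        results = {name: check(claim) for name, check in verifiers.items()}
        report.append({
            "claim": claim,
            "results": results,
            # A claim is contested when the verifiers do not all agree.
            "contested": len(set(results.values())) > 1,
        })
    return report


# Toy verifiers for illustration only; real ones would query separate models.
verifiers = {
    "toy-numeric": lambda claim: "120%" not in claim,
    "toy-permissive": lambda claim: True,
}

summary_text = "Revenue grew in 2023. Margins improved by 120% in one quarter."
report = verify_claims(summary_text, verifiers)
for row in report:
    print(row["contested"], "-", row["claim"])
```

The point of the structure is the per-claim record: the first sentence passes both checks, while the second is flagged as contested, so only that fragment needs reconsideration rather than the whole summary.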

This shift may seem subtle, yet it represents a profound change in where trust resides within an AI system. Traditional pipelines concentrate authority within the model itself: if the model performs well, the system performs well; if it fails, the entire process collapses. Mira distributes that responsibility across a governance layer that evaluates claims before they solidify into outputs. In this environment, credibility does not originate from a model’s confidence score but from the convergence of independently assessed reasoning paths.

Of course, distributing verification does not eliminate every form of error. Models trained on similar datasets can still reproduce outdated information, and sophisticated adversarial prompts may exploit systemic weaknesses shared across architectures. Multi-model consensus reduces the likelihood of random hallucination, but it cannot fully prevent coordinated error that emerges from shared assumptions embedded in the broader AI ecosystem. For that reason, transparency becomes as essential as verification itself. Users must understand whether the verifying models truly represent independent perspectives or merely variations of the same underlying system.

Another dimension of this design lies in its economic implications. Verification is not free: each additional model call introduces computational cost, latency, and infrastructure complexity. As AI systems increasingly integrate verification layers, developers must make deliberate choices about when deep validation is necessary and when rapid responses are sufficient. Applications built on verified AI therefore evolve into reliability managers, constantly balancing speed, cost, and certainty while determining which outputs require deeper scrutiny or human oversight.
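One way to picture that balancing act is a small routing policy that picks a verification depth from a risk label and a call budget. The risk levels, the depths (0, 3, and 5 calls), and the escalation rule are invented for illustration; an actual system would derive them from measured costs and error rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationPlan:
    verifier_calls: int  # how many independent verifier calls to make
    human_review: bool   # whether to escalate to a person


def plan_verification(risk: str, max_calls: int) -> VerificationPlan:
    """Pick a verification depth from a coarse risk label and a call budget."""
    # Hypothetical depths, chosen only to make the trade-off visible.
    desired = {"low": 0, "medium": 3, "high": 5}
    if risk not in desired:
        raise ValueError(f"unknown risk level: {risk!r}")

    calls = min(desired[risk], max_calls)
    # Escalate when a high-risk output cannot get its full verification depth.
    needs_human = risk == "high" and calls < desired["high"]
    return VerificationPlan(verifier_calls=calls, human_review=needs_human)


print(plan_verification("low", max_calls=5))
print(plan_verification("high", max_calls=3))
```

A low-risk request skips verification entirely and returns quickly, while a high-risk request that exceeds the budget is verified as far as the budget allows and then handed to a human.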

These trade-offs will likely reshape how AI platforms compete in the coming years. Capability alone will no longer define the strongest systems. Instead, the ability to demonstrate transparent verification processes, clearly communicate uncertainty, and gracefully expose disagreement between models may become the defining characteristics of trustworthy AI infrastructure. Systems that acknowledge their limitations while systematically containing errors will ultimately prove more valuable than those that simply project confidence.

Seen from this perspective, Mira’s model is less about building smarter individual models and more about constructing an accountability framework around machine intelligence itself. AI responses become proposals rather than declarations: statements that must pass through a network of independent evaluators before being accepted as credible outputs. In such a system, mistakes remain inevitable, but their impact is contained through verification mechanisms that identify weaknesses before they propagate into decisions, financial systems, or public discourse.

Ultimately, the future of reliable AI may depend less on achieving perfect agreement between models and more on defining how that agreement is interpreted, how dissenting signals are analyzed, and what safeguards activate when consensus begins to fracture. The true measure of trust will not be whether machines always produce the right answer, but whether the systems surrounding them are designed to question, test, and validate those answers before the world relies on them.

$MIRA