I didn’t really question AI answers much when I first started using these tools.

Like most people, I was mostly impressed. You type something and within seconds you get a structured response that looks clean and confident. It explains things clearly, sometimes even better than articles written by humans.

But after using AI long enough, something small starts to bother you.

Not because it’s always wrong.

Because it sounds equally confident when it is wrong.

Sometimes the information is correct. Sometimes it’s slightly inaccurate. Sometimes the model simply invents something that sounds believable. And the strange part is that the tone rarely changes. The answer still looks polished even when the details aren’t reliable.

That’s the part that made me start paying more attention to Mira Network.

At first I assumed it was just another AI project trying to build a better model. But the more I looked into it, the clearer it became that Mira isn’t trying to compete with model builders at all.

It’s trying to solve a different problem.

Verification.

Most AI systems today operate on probability: the model predicts the most likely sequence of words based on patterns it learned from training data. That’s why the answers often look convincing, but it also means the system doesn’t truly know whether the information is correct.

Mira approaches this problem from a completely different angle.

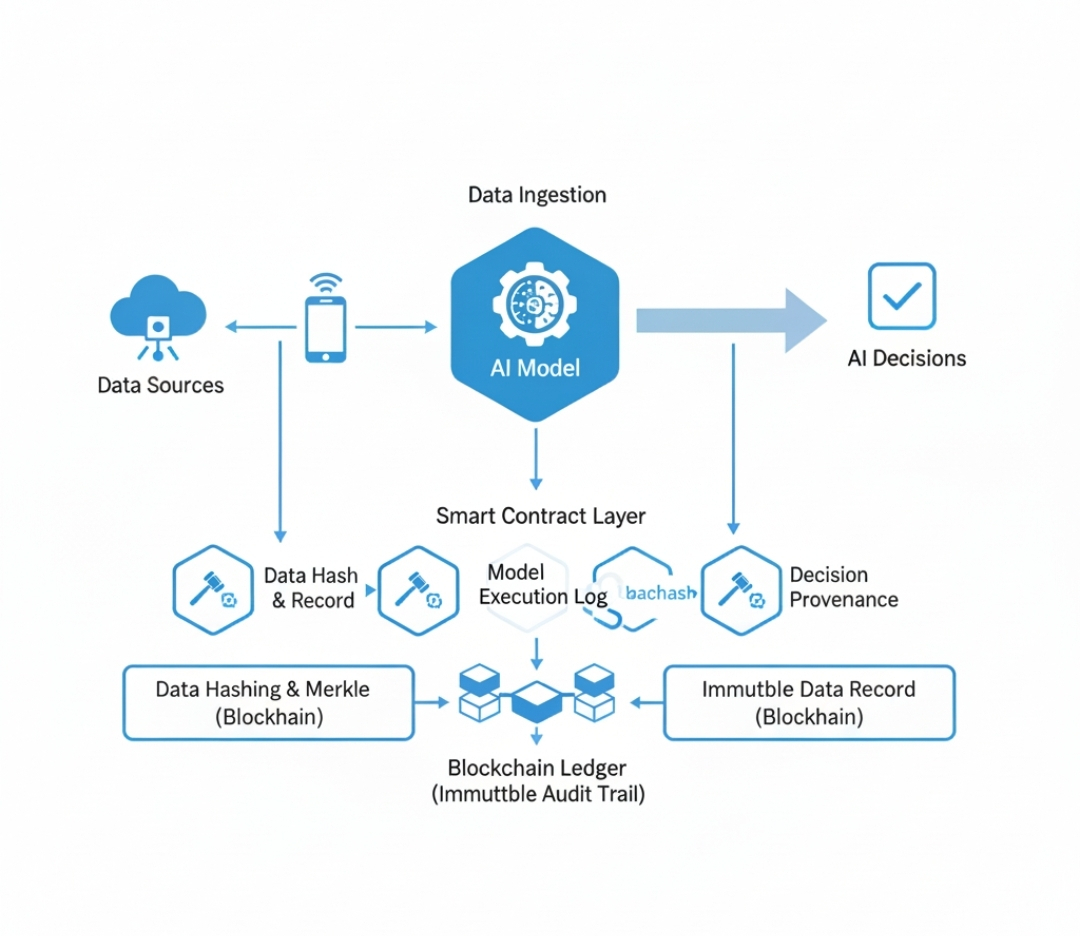

Instead of trusting a single AI output, the protocol breaks that output into smaller claims. Each claim can then be evaluated independently by a network of validators.

These validators can be different AI models or verification systems analyzing the same information from multiple perspectives. Once those validators examine the claim, their evaluations are coordinated through blockchain consensus.

So rather than trusting one model’s confidence, the network looks for agreement across independent validators.
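To make the idea concrete, here is a minimal sketch of "split into claims, then look for agreement." It is not Mira’s implementation. I’m assuming each sentence counts as one claim, that a validator is just a function returning accept or reject, and that a claim passes when at least two thirds of validators accept it; a plain vote tally stands in for blockchain consensus. The names `split_into_claims`, `verify_claim`, `verify_output`, and the toy `model_*` validators are all hypothetical.

```python
import re
from typing import Callable, Dict, List

# Hypothetical: a validator is any function that says whether it accepts a claim.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Treat each sentence as one claim. Real claim extraction is much harder."""
    parts = re.split(r"(?<=[.!?])\s+", output.strip())
    return [p for p in parts if p]


def verify_claim(claim: str, validators: List[Validator], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the validators accept it."""
    if not validators:
        raise ValueError("need at least one validator")
    approvals = sum(1 for v in validators if v(claim))
    return approvals / len(validators) >= threshold


def verify_output(output: str, validators: List[Validator], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Split an AI answer into claims and return a verdict for each one."""
    return {claim: verify_claim(claim, validators, threshold) for claim in split_into_claims(output)}


# Toy validators standing in for independent models (purely illustrative).
def model_a(claim: str) -> bool:
    return claim != "The Moon is made of cheese."


def model_b(claim: str) -> bool:
    return "cheese" not in claim


def model_c(claim: str) -> bool:
    return True  # an overconfident validator that approves everything


answer = "Paris is the capital of France. The Moon is made of cheese."
print(verify_output(answer, [model_a, model_b, model_c]))
# {'Paris is the capital of France.': True, 'The Moon is made of cheese.': False}
```

Notice that `model_c` approves the false claim with the same confidence as the true one, but it gets outvoted. That is the whole point of checking claims individually instead of trusting a single output.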

That idea seems simple at first, but it changes the trust model significantly.

Right now when you receive an AI answer, you either trust the model or you manually verify it yourself. Mira introduces a third option where verification becomes part of the system itself.

Claims are checked by multiple participants.

The results are recorded transparently.

Consensus determines whether the information is reliable.
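The "recorded transparently" step is what the blockchain side contributes. I can’t speak to Mira’s actual on-chain format, so this is only a sketch of the property that matters, assuming a simple hash chain rather than a real blockchain: each verification record commits to the hash of the one before it, so quietly rewriting an old verdict breaks every later link. `VerificationLog` and its fields are hypothetical names.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: Dict) -> str:
    """Hash a record deterministically (sorted keys) with SHA-256."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class VerificationLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, claim: str, votes: Dict[str, bool], accepted: bool) -> Dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"claim": claim, "votes": votes, "accepted": accepted, "prev_hash": prev}
        entry = dict(body, hash=record_hash(body))
        self.entries.append(entry)
        return entry

    def is_intact(self) -> bool:
        """Recompute every hash and link; any edited entry makes this False."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or record_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = VerificationLog()
log.append("Paris is the capital of France.", {"model_a": True, "model_b": True}, True)
log.append("The Moon is made of cheese.", {"model_a": False, "model_b": False}, False)
print(log.is_intact())  # True
log.entries[0]["accepted"] = False  # tamper with an old verdict
print(log.is_intact())  # False
```

A hash chain alone only makes tampering detectable; deciding which chain everyone accepts is the job of the consensus mechanism, which this sketch leaves out.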

Another layer that makes the system work is incentives.

Validators inside the network are rewarded for accurate verification and penalized for approving incorrect claims. That creates an economic reason to evaluate information carefully instead of blindly confirming outputs.
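Here is a rough sketch of how that incentive could work, under assumptions I’m making for illustration: "accurate" is approximated as "agreed with the network’s consensus," each validator holds a stake, matching consensus earns a fixed reward, and approving a claim the network rejected costs a fraction of the stake. The reward size, the 25% slash, and the names `ValidatorAccount` and `settle_round` are hypothetical, not Mira’s actual parameters.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ValidatorAccount:
    stake: float


def settle_round(
    accounts: Dict[str, ValidatorAccount],
    votes: Dict[str, bool],
    consensus: bool,
    reward: float = 1.0,
    slash_fraction: float = 0.25,
) -> None:
    """Pay validators that matched consensus; slash those that approved a rejected claim."""
    for name, vote in votes.items():
        account = accounts[name]
        if vote == consensus:
            account.stake += reward
        elif vote:
            # Approved a claim the network rejected: lose part of the stake.
            account.stake -= account.stake * slash_fraction
        # Rejecting a claim the network accepted earns nothing here (a design choice).


accounts = {name: ValidatorAccount(stake=100.0) for name in ("a", "b", "c")}
settle_round(accounts, {"a": False, "b": False, "c": True}, consensus=False)
print({name: acct.stake for name, acct in accounts.items()})
# {'a': 101.0, 'b': 101.0, 'c': 75.0}
```

Making the penalty proportional to stake means a validator that rubber-stamps outputs loses more the more it has at risk, which is exactly the pressure against "blindly confirming."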

When I started thinking about where AI is heading, this approach began to feel more important.

Right now AI mostly assists humans. You still read the output before doing anything serious with it. But the direction of the technology is clearly moving toward autonomous agents that can execute tasks, interact with financial systems, or manage workflows automatically.

In those environments, unreliable information becomes a bigger problem.

If an AI agent is executing actions based on incorrect data, the consequences can spread quickly. A mistake that might have been harmless in a conversation becomes much more significant when it affects financial transactions, governance systems, or automated decision making.

That’s where verification infrastructure starts to matter.

What I find interesting about Mira is that it doesn’t assume AI hallucinations will disappear completely. Many discussions about AI improvement focus on training larger models or improving datasets as if those changes will eliminate errors entirely.

Mira takes a more pragmatic view.

It assumes probabilistic systems will always carry some uncertainty and builds a network designed to verify outputs after they are generated.

In other words, intelligence and reliability are treated as two separate layers.

AI models generate answers.

The network verifies them.
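That separation is easy to express in code. The sketch below assumes an agent that should only act once every claim in a generated answer has been verified; the generator and verifier are toy stand-ins, and `run_agent_step`, `toy_generate`, `approve_all`, and `reject_all` are hypothetical names. The point is only that generation and verification are separate, swappable pieces.

```python
from typing import Callable, Dict, List

# Hypothetical interfaces: one layer generates, a separate layer verifies.
Generate = Callable[[str], str]
Verify = Callable[[str], Dict[str, bool]]


def run_agent_step(prompt: str, generate: Generate, verify: Verify, act: Callable[[str], None]) -> bool:
    """Act on a generated answer only if every extracted claim was verified."""
    answer = generate(prompt)
    verdicts = verify(answer)
    # An answer with no checkable claims is treated as unverified.
    if verdicts and all(verdicts.values()):
        act(answer)
        return True
    return False


# Toy stand-ins for a model and a verification network.
def toy_generate(prompt: str) -> str:
    return "Send reminder for invoice 7."


def approve_all(answer: str) -> Dict[str, bool]:
    return {answer: True}


def reject_all(answer: str) -> Dict[str, bool]:
    return {answer: False}


actions: List[str] = []
print(run_agent_step("follow up on invoice 7", toy_generate, approve_all, actions.append))  # True
print(run_agent_step("follow up on invoice 7", toy_generate, reject_all, actions.append))  # False
print(actions)  # ['Send reminder for invoice 7.']
```

Because the model never decides on its own whether its output is trustworthy, you could swap in a better model without touching the verification layer, or the other way around.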

Of course there are still challenges. Breaking complex outputs into verifiable claims is not trivial. The validator network needs enough diversity to avoid shared biases. And verification must remain efficient enough that applications can still operate quickly.

But the direction feels increasingly relevant as AI systems move toward more autonomous roles.

Because the more capable AI becomes, the more one question matters.

Not just how intelligent the system is.

But whether the information it produces can actually be trusted.