Here’s something that’s been getting a lot of attention lately: Nvidia is reportedly shifting its production priorities toward **Vera Rubin chips**. If you follow tech or AI hardware at all, you know this isn’t just another product announcement. It signals something bigger about how the industry is gearing up for rapidly growing demand, especially for AI workloads.

Let’s break it down in a way that actually makes sense without all the corporate jargon.

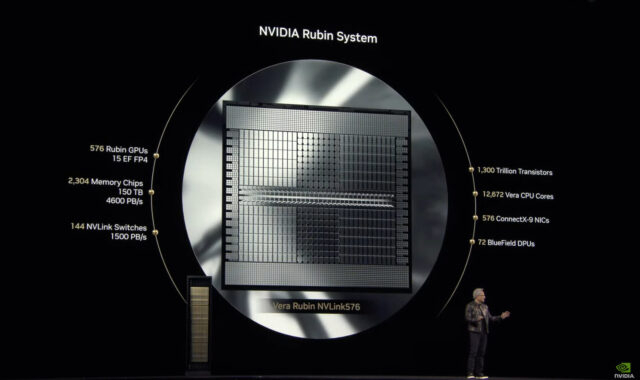

For years, Nvidia has been the go-to name in GPUs, especially for AI training and inference. But as AI models become more complex and datasets grow larger, the need for more efficient silicon built around those workloads is becoming obvious. General-purpose GPUs are great, but they aren’t always the most power-efficient or cost-effective solution for every kind of AI task. That’s where Vera Rubin chips come in: Vera Rubin is Nvidia’s next-generation platform, which pairs Rubin GPUs with a Vera CPU.

These chips are designed with specific workloads in mind, especially large-scale AI model processing that earlier GPUs might struggle with or handle less efficiently. The fact that Nvidia is reportedly seeing rising demand for Vera Rubin suggests that customers aren’t just buying AI compute because it’s trendy. They actually need hardware that can keep up with real-world usage and intensive workloads.

Think of it this way: if you’re building something that’s going to run 24/7 with huge data throughput and constant reasoning operations (like large AI models or autonomous systems), you want hardware that’s optimized for that job, not general-purpose hardware that burns more power for diminishing returns.
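To put rough numbers on that 24/7 argument, here’s a minimal Python sketch comparing the yearly energy bill of two accelerators. Everything in it is an assumption for illustration: the `Accelerator` class, the power draws, the throughput figures, and the electricity price are made-up placeholders, not specs or benchmarks for Vera Rubin or any other real product.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365  # continuous operation, ignoring leap years


@dataclass
class Accelerator:
    name: str
    power_kw: float    # average power draw in kilowatts (hypothetical)
    throughput: float  # work units completed per hour (hypothetical)


def annual_energy_kwh(acc: Accelerator) -> float:
    """Energy used in one year of nonstop operation."""
    return acc.power_kw * HOURS_PER_YEAR


def annual_energy_cost(acc: Accelerator, price_per_kwh: float) -> float:
    """Yearly electricity cost at a flat price per kWh."""
    return annual_energy_kwh(acc) * price_per_kwh


def energy_per_unit_kwh(acc: Accelerator) -> float:
    """Energy spent per unit of work; lower means more efficient."""
    return acc.power_kw / acc.throughput


# Placeholder values chosen only to illustrate the comparison.
general = Accelerator("general-purpose", power_kw=1.0, throughput=100.0)
optimized = Accelerator("optimized", power_kw=0.8, throughput=160.0)

for acc in (general, optimized):
    cost = annual_energy_cost(acc, price_per_kwh=0.10)
    per_unit = energy_per_unit_kwh(acc)
    print(f"{acc.name}: {cost:.2f} per year, {per_unit:.4f} kWh per work unit")
```

The yearly bill is only part of the picture. Energy per unit of work is the number that compounds when the hardware never switches off, which is why a chip that draws a little less power while doing more work can matter so much at data-center scale.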

Shifting production focus means Nvidia is reading the market signals clearly. They see where demand is heading, and they’re adjusting their manufacturing pipeline accordingly. That has a few implications:

1. **AI adoption isn’t slowing down:** Companies aren’t just experimenting; they’re deploying at scale.

2. **Demand for efficient AI hardware is rising:** Vera Rubin chips are carving out a niche where both performance and power efficiency matter.

3. **The industry is maturing:** We’re moving past the phase of “just throw GPUs at everything” to “use the right tools for the job.”

This also suggests future hardware innovation won’t be one-size-fits-all. We’re entering an era where specialized processors, tailored for specific tasks, become the norm. That has ripple effects across cloud providers, data centers, robotics, autonomous vehicles, and basically anywhere AI compute is essential.

At the end of the day, Nvidia shifting focus isn’t just about a new chip. It’s about the market evolving, customers demanding more efficiency, and the tech stack adapting accordingly. It’s a small headline with a big underlying story — AI workloads are growing up, and the hardware world is trying to keep pace.

Nothing here is hype — just the direction the tech is moving.