In the past few years, AI systems have advanced significantly. They can now produce content, analyze data, and even help make decisions. However, one theme keeps recurring: the need to verify whether the output is actually correct.

Anyone who has used AI systems extensively will likely recognize this situation. The output looks correct, but confirming that it is correct takes extra effort.

While this might not be a significant issue for casual use, accuracy matters far more in high-stakes fields.

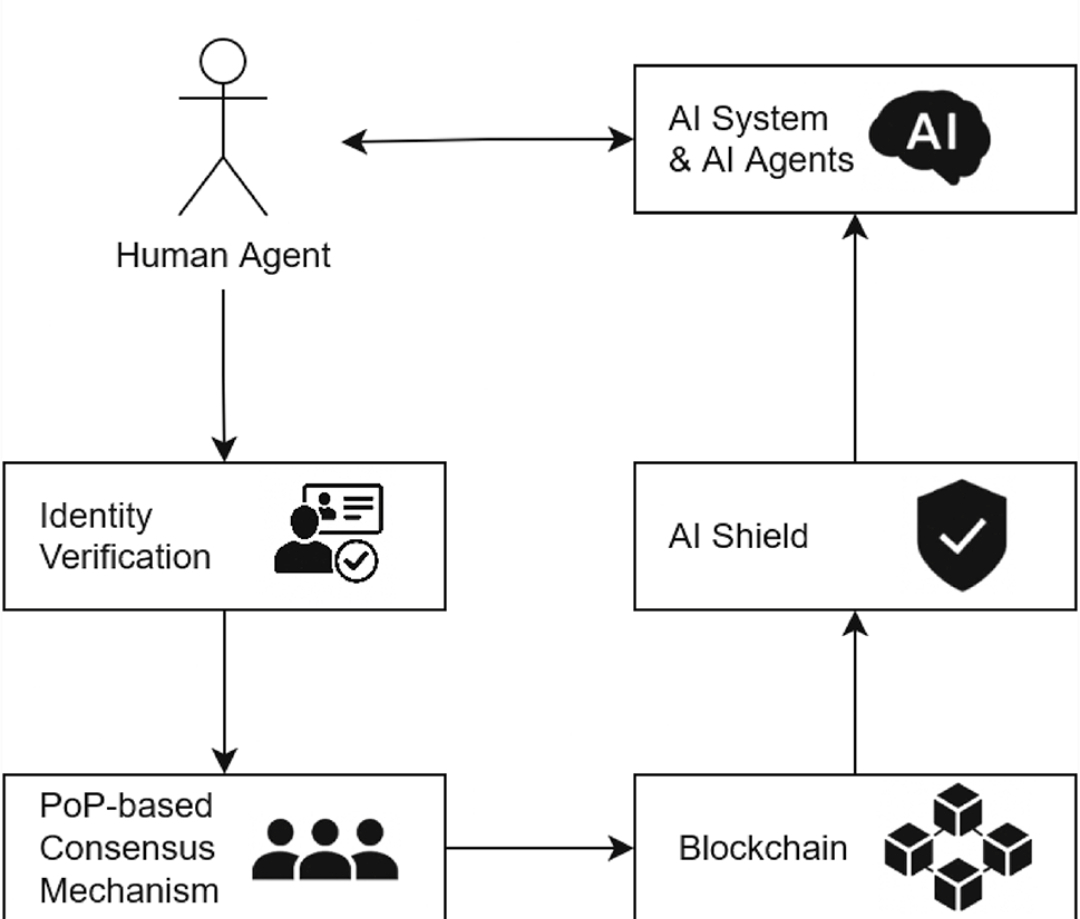

This is where the premise behind MiraNetwork becomes interesting.

While other projects focus on building new AI systems, @Mira - Trust Layer of AI is working on a different question: how AI output might be verified, rather than simply trusted.

This is where $MIRA might contribute to the overall AI ecosystem: a verification layer that could make AI systems more accurate, especially where accuracy is important. #Mira