There is a category of artificial intelligence failure that rarely appears in benchmark reports.

The model performs as expected. The output is factually correct. Validators confirm the result. Every visible component works according to specification. And yet the institution that relied on that output still finds itself facing regulatory scrutiny.

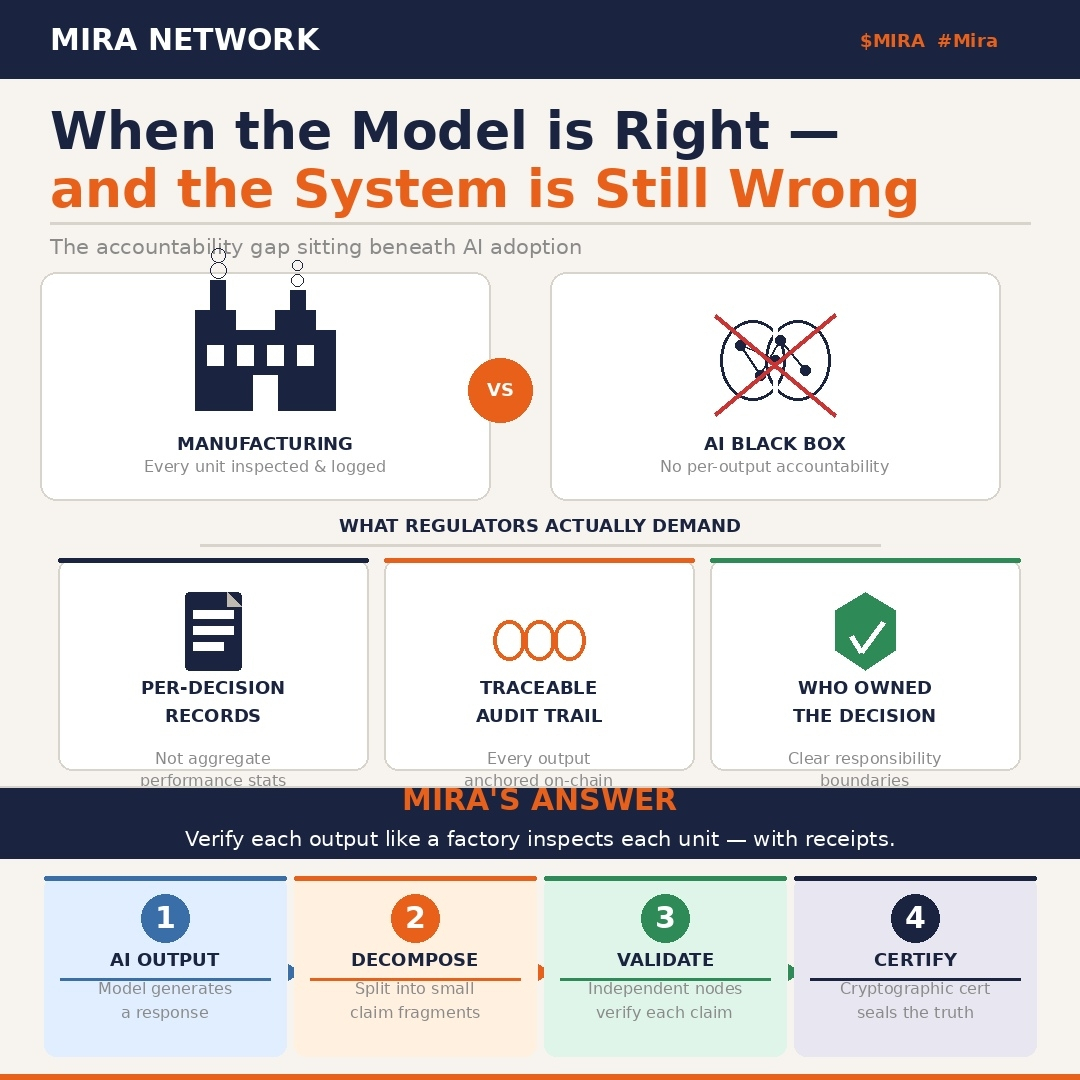

The reason is simple but uncomfortable. An accurate answer that moved through a system is not automatically a defensible decision.

That distinction sits underneath most conversations about AI reliability. It is also the gap Mira Network is attempting to close.

Many people describe Mira as a protocol that improves accuracy by routing AI outputs through distributed validators rather than relying on a single model. That description is valid. Running claims across models with different architectures and training distributions can materially increase reliability. Hallucinations that pass through one system often fail when examined by several independent ones.

But the deeper shift is architectural, not statistical.

Infrastructure Begins With Chain Selection

Mira Network is built on Base, the Ethereum Layer 2 network developed by Coinbase. That decision reflects a design philosophy about verification systems.

Verification must be fast enough to function in operational environments. It must also anchor records to a security model that provides credible finality. A certificate attached to a chain vulnerable to reorganization is not durable evidence. It is provisional memory.

By combining Layer 2 throughput with Ethereum-anchored security, the protocol attempts to balance responsiveness with permanence.

Layered Architecture and Operational Discipline

On top of this foundation sits a three-layer structure designed around workflow clarity.

At the input stage, standardization mechanisms reduce contextual drift before claims ever reach validators. Structured inputs prevent ambiguous interpretation from spreading downstream.
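As an illustration only, input standardization can be pictured as canonicalizing a claim before anyone sees it. The sketch below is not Mira's mechanism. The function name `standardize_claim`, the record fields, and the choice of Unicode NFKC plus sorted-key JSON are all assumptions made for the example.

```python
import json
import unicodedata


def standardize_claim(text: str, context: dict) -> str:
    """Return a canonical string for a claim (hypothetical format)."""
    # Normalize Unicode and collapse runs of whitespace so that
    # visually identical claims produce identical text.
    normalized = " ".join(unicodedata.normalize("NFKC", text).split())
    record = {"claim": normalized, "context": context}
    # Sorted keys and fixed separators make the serialization
    # independent of how the caller ordered the context fields.
    return json.dumps(record, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False)


a = standardize_claim("  Rates   rose\n in 2023 ", {"source": "q3", "lang": "en"})
b = standardize_claim("Rates rose in 2023", {"lang": "en", "source": "q3"})
print(a == b)
```

Two submissions that differ only in spacing or field order reduce to the same string, so every validator receives exactly the same input.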

At the distribution stage, randomized sharding allocates claims across independent nodes. This protects sensitive information while balancing computational load across the validator network.
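A minimal sketch of randomized assignment, assuming each claim goes to a fixed number of distinct nodes. The function name `assign_claims`, the `replicas` parameter, and the node identifiers are hypothetical. The protocol's actual sharding scheme is not described here.

```python
import random


def assign_claims(claims, nodes, replicas=3, seed=None):
    """Map each claim to `replicas` distinct, randomly chosen nodes."""
    if replicas > len(nodes):
        raise ValueError("replicas cannot exceed the number of nodes")
    # random.Random keeps the example reproducible. A real system
    # would need an unpredictable randomness source instead.
    rng = random.Random(seed)
    return {claim: rng.sample(nodes, replicas) for claim in claims}


nodes = ["node-a", "node-b", "node-c", "node-d", "node-e"]
assignment = assign_claims(["claim-1", "claim-2", "claim-3"], nodes, seed=7)
for claim, chosen in assignment.items():
    print(claim, chosen)
```

Because no node sees every claim, no single operator can reconstruct the full input, and the load spreads evenly in expectation rather than by guarantee.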

At the aggregation stage, supermajority consensus determines whether a certificate is issued. It is not a simple majority vote. It is weighted agreement designed to resist single-point bias.
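The difference between a head count and weighted agreement can be sketched as follows. This assumes weight means stake and uses a two-thirds threshold. Both are illustrative choices, as is the function name `reaches_supermajority`. The protocol's real threshold and weighting rule are not stated in this article.

```python
from fractions import Fraction


def reaches_supermajority(votes, stakes, threshold=Fraction(2, 3)):
    """Return True if approving stake is at least `threshold` of total stake.

    `votes` maps validator id to True/False; `stakes` maps validator id
    to an integer stake. Validators that did not vote count as not approving.
    """
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    approving = sum(stakes[v] for v, approved in votes.items() if approved)
    # Fractions avoid floating-point rounding right at the threshold.
    return Fraction(approving, total) >= threshold


stakes = {"a": 10, "b": 10, "c": 80}
votes = {"a": True, "b": True, "c": False}
print(reaches_supermajority(votes, stakes))
```

Two of three validators approve in this example, yet they hold only 20 percent of the stake, so no certificate would be issued under these assumptions.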

The addition of a zero-knowledge coprocessor for SQL queries extends this architecture into institutional territory. Being able to verify that a database query returned correct results without exposing the query itself or the underlying dataset is not an experimental feature. For organizations operating under data residency rules, confidentiality agreements, and audit obligations, it becomes essential.

Proving correctness without revealing inputs moves AI verification from demonstration to procurement-grade infrastructure.

Accountability Beyond Process Documentation

None of this automatically solves the accountability question. And accountability is what ultimately determines adoption.

Organizations have already learned that governance documentation does not equal operational proof. A model card shows that evaluation occurred prior to deployment. An explainability interface demonstrates visualization capability. A compliance review confirms that a procedure was followed.

None of these prove that a specific output was verified before it influenced a real decision.

Regulators increasingly request that evidence. Courts are beginning to expect it. Aggregate accuracy metrics do not satisfy those requirements.

What Mira Network proposes is a structural analogy closer to manufacturing quality control. Instead of claiming that systems are reliable on average, it treats each output as a unit requiring inspection.

Not that the production line is calibrated.

Not that procedures exist.

But that this particular unit was examined, these checks were applied, these validators participated, and this was the result.

The cryptographic certificate generated through consensus functions as that inspection artifact. It binds to a specific output at a specific moment. It records participating validators, stake weight, consensus threshold, and the sealed output hash.
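To make the fields concrete, here is a minimal sketch of such a record. It assumes SHA-256 for the sealed output hash and omits validator signatures entirely. The class name `Certificate`, its field names, and the helper functions are hypothetical and do not reflect Mira's actual certificate format.

```python
import hashlib
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Certificate:
    output_hash: str                                # sealed hash of the output
    issued_at: int                                  # Unix time of issuance
    validator_stakes: Tuple[Tuple[str, int], ...]   # (validator id, stake)
    consensus_threshold: str                        # e.g. "2/3"


def seal_output(output: str) -> str:
    """Hash the exact output text (SHA-256 is an assumption)."""
    return hashlib.sha256(output.encode("utf-8")).hexdigest()


def certificate_covers(cert: Certificate, output: str) -> bool:
    """Check that the certificate was issued for this exact output."""
    return cert.output_hash == seal_output(output)


cert = Certificate(
    output_hash=seal_output("Loan application 1182: approve"),
    issued_at=1_700_000_000,
    validator_stakes=(("node-a", 50), ("node-b", 30)),
    consensus_threshold="2/3",
)
print(certificate_covers(cert, "Loan application 1182: approve"))
print(certificate_covers(cert, "Loan application 1182: deny"))
```

The frozen dataclass mirrors the idea that the record is fixed once issued, and any change to the output, however small, breaks the match.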

When reconstruction is required, not in theory but in a specific case, that certificate provides traceable evidence.

Incentives as Structural Enforcement

The economic layer reinforces this model.

Validators stake capital. Accurate participation aligned with consensus earns rewards. Negligence or strategic deviation incurs penalties. Accountability is not expressed as policy language. It is encoded as a financial mechanism.
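A toy settlement rule shows how that encoding might look. The reward and penalty rates, the function name `settle`, and the rule that deviation means voting against the final outcome are all assumptions made for this sketch, not the protocol's published parameters.

```python
def settle(stakes, votes, outcome, reward_bps=100, slash_bps=500):
    """Return updated stakes after one verification round.

    Rates are in basis points (1 bps = 0.01 percent) and integer math
    is used so that results are exact. Both rates are hypothetical.
    """
    updated = {}
    for validator, stake in stakes.items():
        if validator not in votes:
            # Non-participation is left untouched in this sketch.
            updated[validator] = stake
        elif votes[validator] == outcome:
            updated[validator] = stake + stake * reward_bps // 10_000
        else:
            updated[validator] = stake - stake * slash_bps // 10_000
    return updated


stakes = {"a": 1000, "b": 1000, "c": 1000}
votes = {"a": True, "b": False}
print(settle(stakes, votes, outcome=True))
# {'a': 1010, 'b': 950, 'c': 1000}
```

Making the penalty larger than the reward, as here, is one way to make careless or strategic voting unprofitable over repeated rounds.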

This transforms responsibility from aspiration into system behavior.

Cross-chain compatibility further broadens reach. Applications built across different ecosystems can integrate the verification layer without migrating their primary infrastructure. The layer operates above individual chain preferences, functioning as a reliability overlay rather than a replacement base layer.

Constraints and Realities

Verification introduces latency. Workflows that must release output instantly may not be able to wait for distributed consensus to finalize.

Liability remains a separate dimension. If validators approve an output that later causes harm, governance and legal frameworks must still define responsibility. Cryptographic assurance does not replace jurisprudence.

Yet the trajectory of institutional AI adoption suggests a clear direction. As systems grow more capable, oversight expectations intensify proportionally.

The organizations that will scale AI responsibly are not those with the most confident models. They are the ones capable of demonstrating, with specificity, what was checked, when it was checked, what consensus formed, and who bore responsibility.

That is not a benchmark statistic.

It is infrastructure.