I’ve been looking at Mira Network, and I’ve spent quite a bit of time reading through its ideas and structure. At first, I wasn’t sure what to make of it. Projects that call themselves “infrastructure” usually take time to understand. They don’t jump out with obvious features or flashy promises. You have to sit with the idea for a while. Read slowly. Connect the pieces.

Artificial intelligence has learned how to sound right. The harder challenge is proving it is right. As AI systems move into areas where decisions carry real weight, confidence is no longer enough. What matters is whether outputs can be tested, verified, and economically defended. Mira Network is built around that exact pressure point.

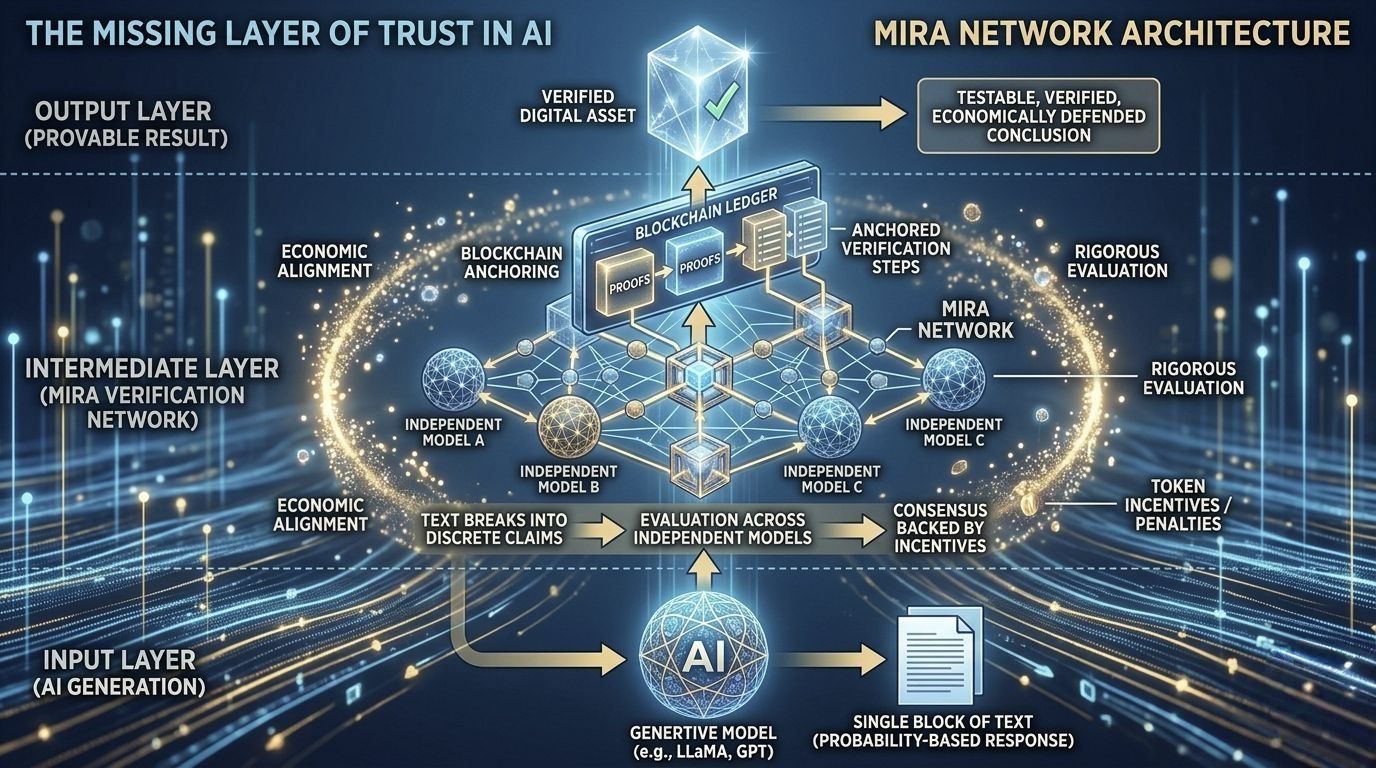

Most AI models operate like fast thinkers. They generate responses based on probability patterns learned from massive datasets. The problem is that probability does not equal truth. Hallucinations, subtle bias, and overconfident errors are not rare edge cases. They are structural side effects of how these systems work. If AI is going to handle sensitive data, financial logic, research synthesis, or automated workflows, it needs a way to separate fluent answers from provable ones.

Mira introduces a verification layer that treats every AI output as something that must earn its credibility. Instead of accepting a model’s response as a single block of text, the system breaks it into discrete claims. Those claims are then evaluated across a distributed network of independent models. Validation is not based on trusting one authority. It is based on consensus backed by incentives.
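To make that flow concrete for myself, I wrote a toy version of it in Python. This is only a sketch under my own assumptions, not Mira’s actual code or API: the sentence-based claim splitter, the `Verifier` type, and the two-thirds threshold are all hypothetical stand-ins I picked for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical verifier: takes one claim and returns True if it judges the claim valid.
# In a real network each verifier would be an independent model, not a local function.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive claim splitter (assumption): treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for verifier in verifiers if verifier(claim))
    return approvals / len(verifiers) >= threshold


def verify_output(output: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict[str, bool]:
    """Break an output into claims and record the consensus verdict for each one."""
    return {
        claim: verify_claim(claim, verifiers, threshold)
        for claim in split_into_claims(output)
    }


if __name__ == "__main__":
    # Three stand-in verifiers with deliberately different behavior.
    verifiers = [
        lambda claim: "Paris" in claim,
        lambda claim: "France" in claim,
        lambda claim: True,  # a careless verifier that approves everything
    ]
    text = "Paris is the capital of France. Berlin is the capital of Spain."
    for claim, accepted in verify_output(text, verifiers).items():
        print(f"{accepted!s:5}  {claim}")
```

The point of the sketch is the shape, not the details: the response is never judged as one block, and the careless verifier that approves everything cannot push a bad claim through on its own.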

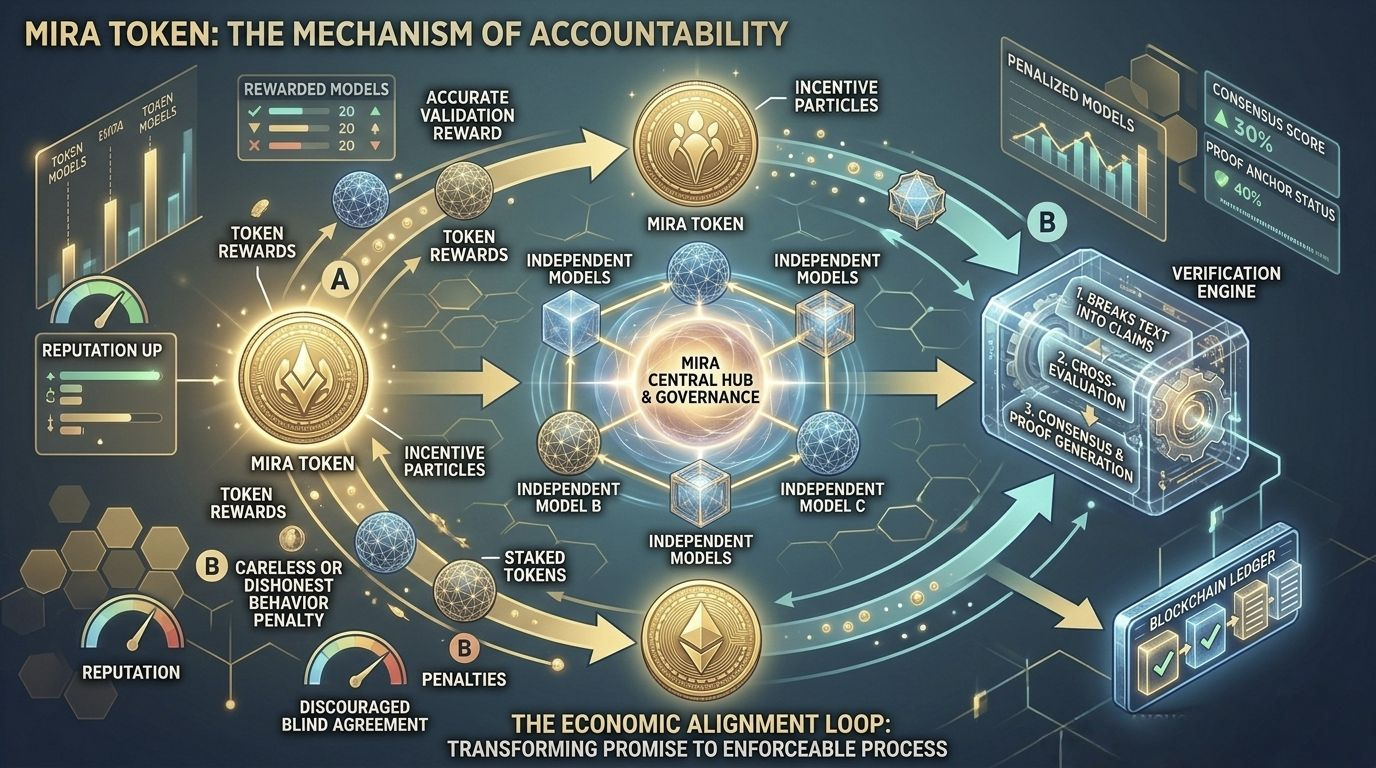

This is where the architecture becomes important. Mira uses blockchain infrastructure not to store opinions, but to anchor proofs. Each verification step is recorded in a transparent and tamper-resistant environment. Participants in the network are rewarded for accurate validation and penalized for careless or dishonest behavior. Accuracy becomes economically aligned, not just technically desirable.
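Here is how I picture those two ideas, anchored records and aligned incentives, in a few lines of Python. Again, this is a sketch under my own assumptions and not a description of Mira’s real protocol: the `Ledger` class, the simple-majority rule, and the reward and penalty amounts are hypothetical, and an in-memory hash chain stands in for an actual blockchain.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """Deterministic SHA-256 over a record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class Ledger:
    """Toy stand-in for an on-chain log plus validator stakes (hypothetical)."""
    stakes: Dict[str, float]
    records: List[dict] = field(default_factory=list)

    def settle(self, claim: str, votes: Dict[str, bool],
               reward: float = 1.0, penalty: float = 2.0) -> bool:
        """Decide a claim by simple majority, adjust stakes, and append a chained record."""
        approvals = sum(votes.values())
        outcome = approvals * 2 > len(votes)  # a tie counts as rejection
        for validator, vote in votes.items():
            # Validators who matched the consensus gain stake; the rest lose some.
            self.stakes[validator] += reward if vote == outcome else -penalty
        prev = self.records[-1]["hash"] if self.records else GENESIS
        body = {"claim": claim, "votes": votes, "outcome": outcome, "prev": prev}
        self.records.append({**body, "hash": _digest(body)})
        return outcome

    def is_intact(self) -> bool:
        """Recompute every hash; any edit to an earlier record breaks the chain."""
        prev = GENESIS
        for record in self.records:
            body = {key: record[key] for key in ("claim", "votes", "outcome", "prev")}
            if record["prev"] != prev or _digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True


if __name__ == "__main__":
    ledger = Ledger(stakes={"a": 10.0, "b": 10.0, "c": 10.0})
    ledger.settle("Paris is the capital of France", {"a": True, "b": True, "c": False})
    print(ledger.stakes, ledger.is_intact())
```

The penalty is set larger than the reward on purpose, so that voting carelessly costs more than it earns. Whether Mira’s real parameters look anything like this is not something the material I read spells out.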

The token plays a central role in this design. It is not decorative. It powers incentives, secures participation, and coordinates validation activity across the network. When verification carries financial weight, the system discourages blind agreement and encourages rigorous evaluation. The token becomes the mechanism that transforms verification from a promise into an enforceable process.

What makes this approach different is the shift in focus. Instead of asking how to make a single AI model smarter, Mira asks how to make AI outputs accountable. It builds infrastructure around intelligence rather than assuming intelligence alone will solve reliability. In practical terms, that means turning AI responses into verifiable digital assets rather than unchecked text.

As automation expands, the value of AI will increasingly depend on whether its conclusions can withstand scrutiny. Mira’s core insight is simple but powerful: scalable intelligence only becomes useful infrastructure when it can prove itself under independent review.