A few nights ago I was using an AI tool to summarize a long research thread. The answer looked clean. Confident. Almost too confident.

Then I checked the original source and realized part of it was slightly off. Not completely wrong, just… tilted. That moment reminded me of something people rarely discuss about AI: we trust the output way too easily.

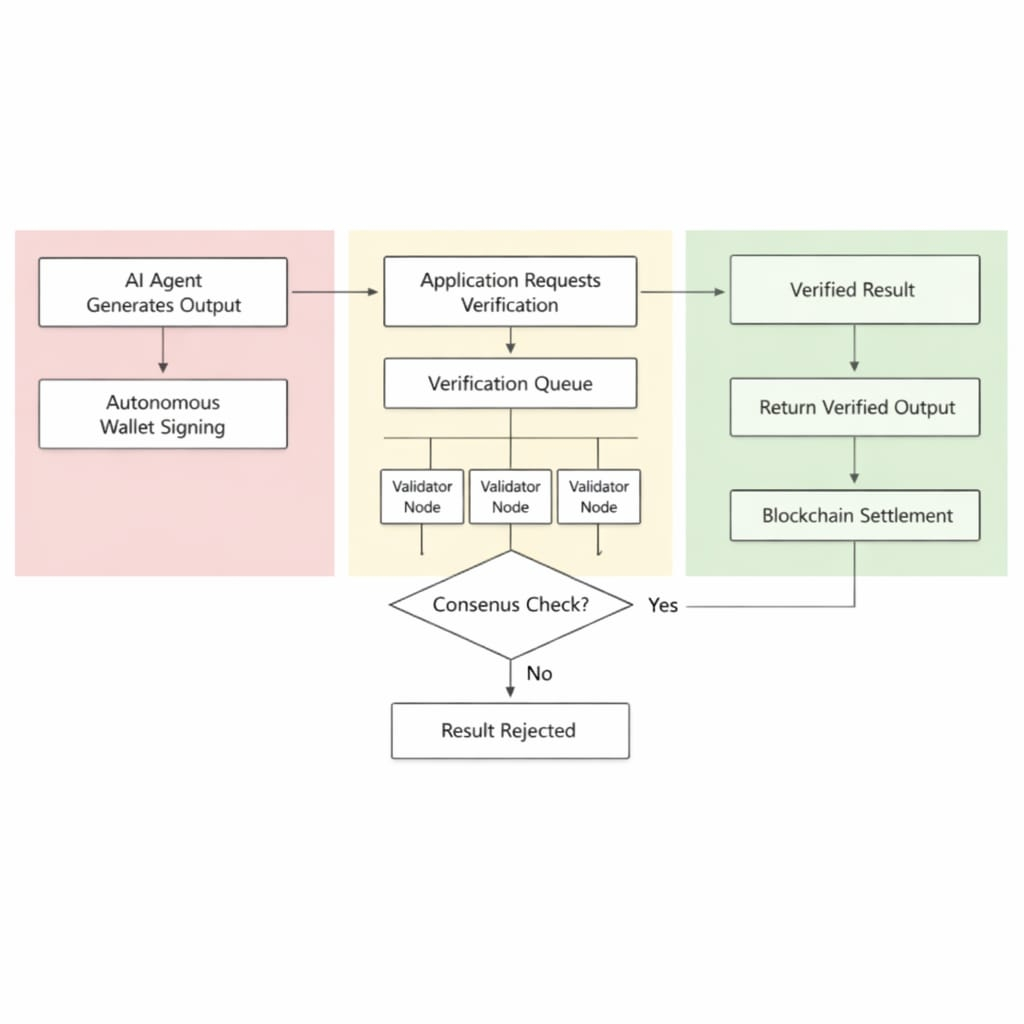

That’s why @Mira - Trust Layer of AI caught my attention recently. Most projects in the AI space focus on building bigger models or faster responses. Mira is looking at a different problem. What happens after the answer appears? Instead of assuming the response is correct, the network allows applications to request independent verification. Multiple participants can check the output, validate it, or challenge it. It turns AI responses into something closer to a system of checks rather than a single voice pretending to know everything.
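To make the idea concrete for myself, I wrote a tiny sketch of what "multiple participants check the output" could look like. This is not Mira's actual API or protocol. The names `verify_output`, `Verifier`, the three vote labels, and the two-thirds threshold are all my own assumptions, and the toy verifiers are just stand-ins for independent checkers.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier: takes a claim and returns one of
# "valid", "invalid", or "challenge". Not a real Mira interface.
Verifier = Callable[[str], str]


def verify_output(claim: str, verifiers: List[Verifier],
                  threshold: float = 2 / 3) -> Dict[str, object]:
    """Collect independent votes and accept the claim only if the
    share of "valid" votes meets the (assumed) threshold."""
    votes = Counter(v(claim) for v in verifiers)
    total = sum(votes.values())
    accepted = total > 0 and votes["valid"] / total >= threshold
    return {"claim": claim, "votes": dict(votes), "accepted": accepted}


# Toy verifiers for illustration only. Real ones would be separate
# models or participants, each checking against the source material.
def strict(claim: str) -> str:
    return "invalid" if "always" in claim else "valid"


def lenient(claim: str) -> str:
    return "valid"


def skeptic(claim: str) -> str:
    return "challenge" if "always" in claim else "valid"


if __name__ == "__main__":
    panel = [strict, lenient, skeptic]
    print(verify_output("The effect appears in some cases", panel))
    print(verify_output("The effect always appears", panel))
```

The point of the sketch is the shape, not the details: no single verifier decides, and a confident-sounding answer that draws an "invalid" or "challenge" vote from enough of the panel does not get through.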

And honestly, the more I think about it, the more this feels like a missing layer. AI is moving into coding tools, research assistants, even financial workflows. If those systems start making decisions, verification stops being optional. It becomes infrastructure. I’m not saying this gets solved overnight, but watching the idea behind #Mira develop makes me think the real AI race might not be about generating answers.

It might be about proving those answers can be trusted.