When an AI generates a long paragraph, it is easy for a piece of misinformation or an outright lie to hide inside a bunch of beautiful sentences. If you try to verify the whole paragraph at once, it is a mess: you might agree with half of it and disagree with the other half, so a single yes or no tells you nothing. This is where @Mira - Trust Layer of AI uses a trick called Binarization.

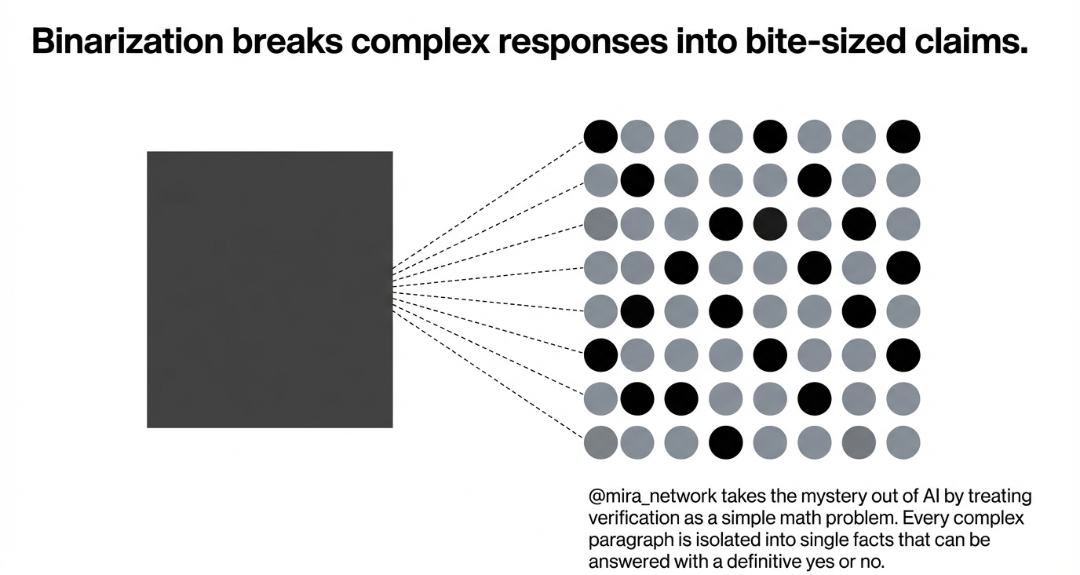

Now, what the hell is Binarization? Okay, think of it like this. If someone tells you a long and complicated story, you don't just say yes or no to the whole thing. You break it down. You ask: did this part happen? Did that person actually say that?

Mira does the same with AI models. It takes a complex response and breaks it into small, bite-sized claims. Each claim is a single statement that can be answered with a yes or a no. Simple.

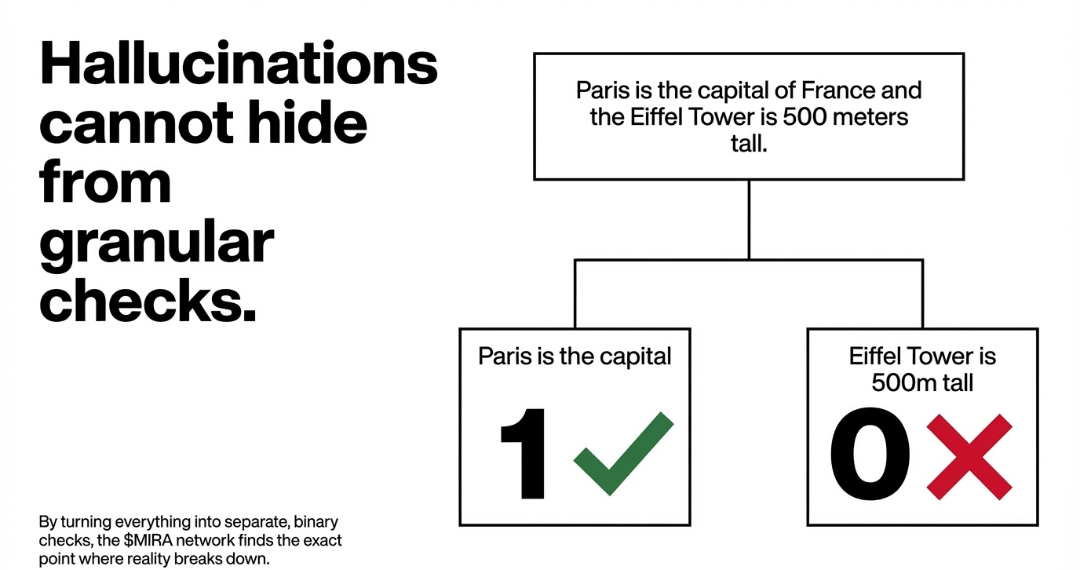

For example, if an AI says "Paris is the capital of France and the Eiffel Tower is 500 meters tall", Mira splits that into two separate checks. The network might say yes to the first claim but a hard no to the second, because the tower is actually only about 330 meters tall.
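To make the split-then-check idea concrete, here is a tiny Python sketch of that exact example. Everything in it is made up for illustration: `binarize`, `toy_verifier`, `gullible_verifier`, and the simple majority vote are my own assumptions, not Mira's actual API or consensus rule. A real system would presumably use a model to extract claims rather than splitting on the word "and".

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical lookup table standing in for a verifier model's knowledge.
KNOWN_FACTS = {
    "paris is the capital of france": True,
    "the eiffel tower is 500 meters tall": False,  # it is roughly 330 m
}


@dataclass
class Verdict:
    claim: str
    yes_votes: int
    total_votes: int

    @property
    def accepted(self) -> bool:
        # Assumption: a claim passes on a strict majority of yes votes.
        return self.yes_votes * 2 > self.total_votes


def binarize(response: str) -> List[str]:
    """Naive splitter: assumes independent claims are joined by ' and '."""
    parts = response.strip().rstrip(".").split(" and ")
    return [p.strip() for p in parts if p.strip()]


def toy_verifier(claim: str) -> bool:
    """Answers yes only for claims it knows to be true."""
    return KNOWN_FACTS.get(claim.lower(), False)


def gullible_verifier(claim: str) -> bool:
    """A faulty verifier that says yes to everything."""
    return True


def verify(response: str, verifiers: List[Callable[[str], bool]]) -> List[Verdict]:
    """Split the response into claims and collect one yes/no vote per verifier."""
    verdicts = []
    for claim in binarize(response):
        votes = [verifier(claim) for verifier in verifiers]
        verdicts.append(Verdict(claim, sum(votes), len(votes)))
    return verdicts


response = "Paris is the capital of France and the Eiffel Tower is 500 meters tall."
for verdict in verify(response, [toy_verifier, toy_verifier, gullible_verifier]):
    label = "yes" if verdict.accepted else "no"
    print(f"{label}: {verdict.claim} ({verdict.yes_votes}/{verdict.total_votes})")
```

Because each claim gets its own vote, the one faulty verifier cannot rescue the false height claim, and the true claim about Paris is not dragged down with it.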

By turning everything into these binary checks, the Mira network makes it much harder for hallucinations or misinformation to hide in the middle of a paragraph. That helps surface the accurate information and improves the overall reliability of AI output.

My 2 cents on Binarization:

Most people focus on making AI bigger, but Mira is focused on making it more accurate. Binarization is a great method for achieving that. To me, it looks like the most practical way to scale verification without things getting chaotic.