I spent two years at a computer vision startup watching the same problem repeat itself in different forms. you need labeled data to train the model. you need a working model to attract the people who provide labeled data. the loop only starts when someone absorbs the cost of breaking into it. and whoever absorbs that cost usually does it badly because the incentive to do it well doesn't exist yet.

that's the lens i brought to Fabric's Phase 1.

the vision is a self-sustaining robot economy where quality data flows freely, operators get rewarded for genuine contribution, and the network improves continuously through accumulated verified ground truth. clean loop. coherent design. but that loop requires something to exist before any of it starts working.

it requires quality data. right now. before the incentives that reward quality data are even active.

here's the specific problem buried in the roadmap.

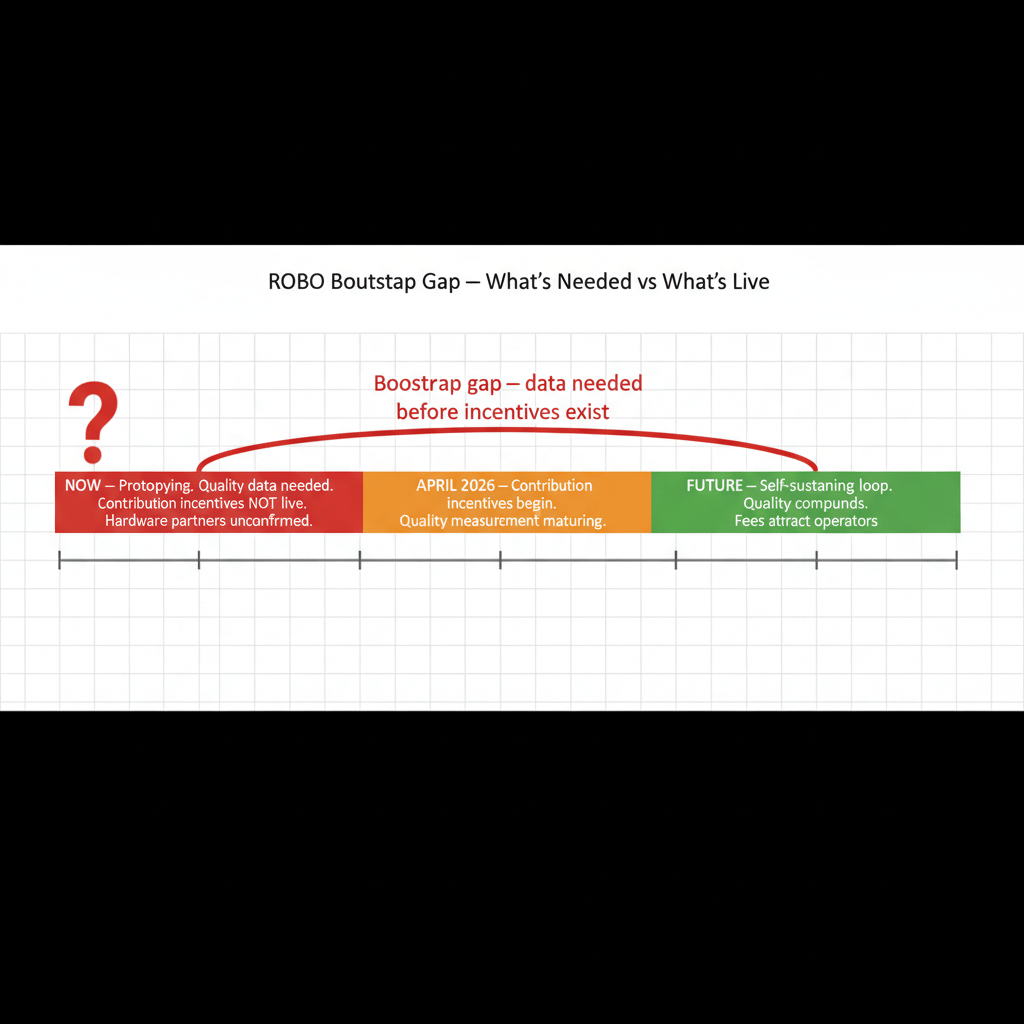

contribution incentives, the mechanism that pays operators for providing genuine value to the network, don't start until Q2 2026. that's April at the earliest. Fabric is in Phase 1 prototyping right now, which means the window between today and Q2 is a period where operators are expected to contribute data and participation to the network without the reward system that justifies doing it well.

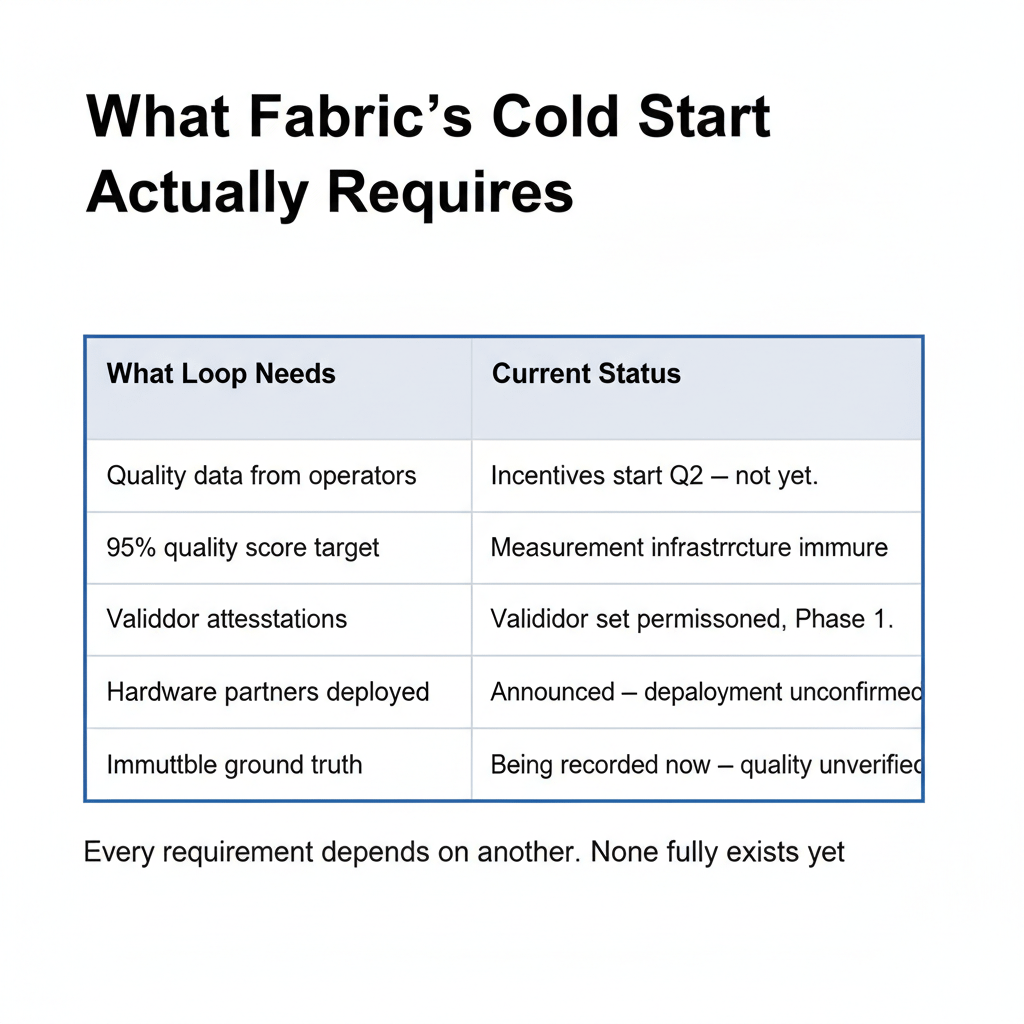

the whitepaper sets a quality score target of 95% for mature network operation. quality scores are calculated from validator attestations and user feedback — a system that requires validators to exist, users to provide feedback, and sufficient history to establish meaningful baselines. in Phase 1, none of those conditions fully exist. the quality measurement infrastructure is being built at the same time as the quality data it's supposed to measure.
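to make the "sufficient history" point concrete, here's a minimal sketch. it assumes a quality score can be treated as the fraction of positive attestations out of all attestations, which is my simplification and not the whitepaper's formula. the function name and the sample counts are hypothetical. it uses the standard Wilson score interval to show how little a 95% score proves when only a handful of attestations stand behind it.

```python
import math


def wilson_lower_bound(positive: int, total: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a binomial proportion.

    Assumption: a quality score is modeled as positive / total attestations.
    This is an illustration, not Fabric's actual scoring method.
    z = 1.96 corresponds to roughly 95% confidence.
    """
    if total == 0:
        return 0.0  # no history, nothing can be claimed
    p = positive / total
    denom = 1 + z * z / total
    centre = p + z * z / (2 * total)
    margin = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total))
    return (centre - margin) / denom


# same observed 95% rate, very different amounts of history (made-up counts)
early = wilson_lower_bound(19, 20)       # Phase 1 style: 20 attestations
mature = wilson_lower_bound(1900, 2000)  # mature network: 2000 attestations

print(f"early lower bound:  {early:.2f}")
print(f"mature lower bound: {mature:.2f}")
```

with 20 attestations the defensible lower bound sits well under the 95% headline, in the mid 0.7s. with 2,000 it tightens to about 0.94. the score on paper is identical in both cases, but only one of them is a baseline you can build on.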

what worries me is the immutability problem.

Fabric's ledger is designed to be tamper-resistant. actions recorded on-chain cannot easily be altered. that's the feature. but in Phase 1, when quality measurement is immature and incentives for honest contribution are minimal, the data being recorded isn't necessarily reliable. and unlike a traditional database where bad data can be corrected quietly, Fabric's immutable ledger preserves whatever gets written. flawed ground truth recorded in Phase 1 becomes part of the permanent foundation the mature network builds on.

what the protocol gets right here is the long-term design. once contribution incentives are live and quality measurement is mature, the feedback loop is genuinely self-reinforcing. higher quality scores attract better tasks. better tasks generate more fees. more fees attract more operators. the whitepaper's analysis of how quality compounds over time is architecturally sound.

but the transition from where Fabric is today to where that loop operates cleanly requires crossing a gap that the current incentive structure doesn't bridge.

hardware partners — BTech, Agi, and others listed in project materials — are supposed to provide early robot workloads that seed the network with genuine operational data. but confirmed deployment dates for those partnerships haven't been published. announced partnerships and operational deployments are different things. one is a press release. the other is a robot doing verified work on the Fabric network and generating the ground truth data the bootstrap phase depends on.

the cold start problem in robotics networks is harder than in pure software networks because the data isn't synthetic. you can't generate fake robot operational data that teaches the network anything real. you need actual hardware, actually deployed, actually completing actual tasks. that hardware has to exist before the incentives that justify deploying it are live.

chicken needs the egg. egg needs the chicken. Phase 1 is the moment before either exists at scale.

#ROBO @Fabric Foundation $ROBO