I’ve noticed that conversations about AI often focus on capability—faster models, better reasoning, more impressive outputs. What gets less attention is the quiet tension building around trust. As AI systems move deeper into finance, infrastructure, and decision-making processes, the question isn’t just whether they can perform tasks. It’s whether their actions can be verified in a way that other systems and institutions are willing to rely on. That’s the context in which Mira Network begins to make sense to me.

Calling it a “trust crisis” may sound dramatic, but the underlying issue is fairly practical. AI systems increasingly operate behind opaque interfaces. A model produces an output, an agent triggers a workflow, and downstream systems respond. When everything works, nobody asks many questions. But when something breaks—an unexpected trade, a misclassified transaction, a flawed automated decision—the demand for accountability appears immediately.

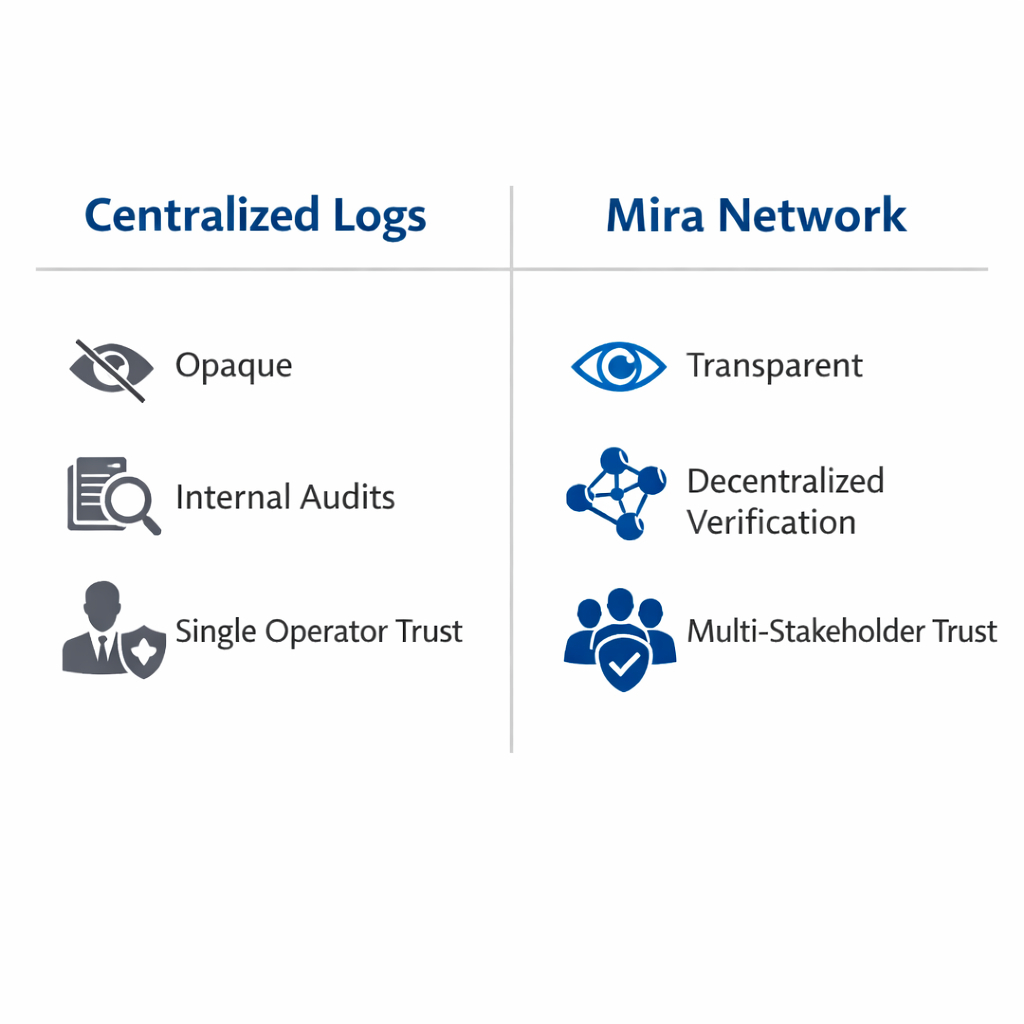

Traditionally, that accountability comes from centralized logs and internal audits. The operator of the AI system records what happened and explains it after the fact. That approach works as long as everyone involved trusts the operator. The moment that trust weakens—because of incentives, regulation, or conflicting stakeholders—the limits of centralized verification become visible.

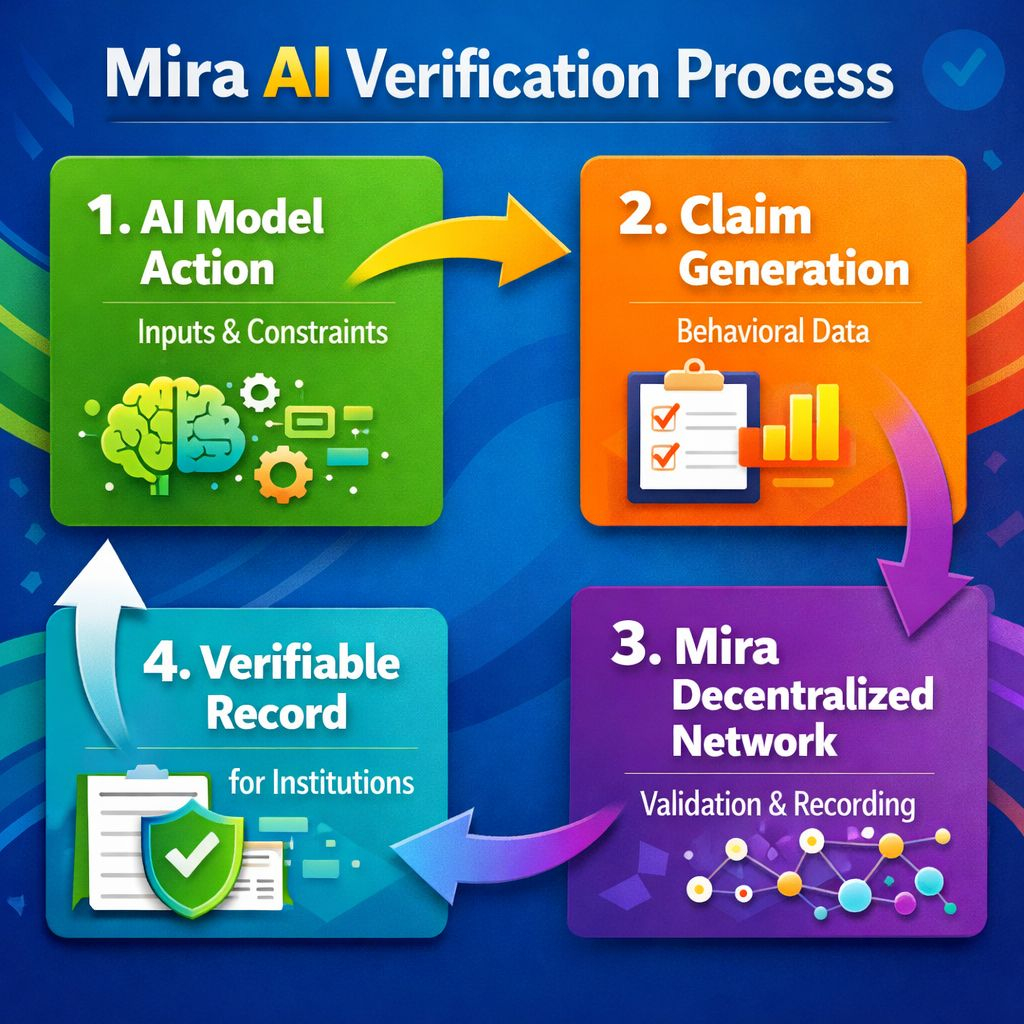

What interests me about Mira is that it does not attempt to compete with AI systems themselves. It doesn’t try to build better models or improve predictive accuracy. Instead, it focuses on a narrower layer: verifying claims about AI behavior. Inputs, constraints, execution context, and outputs can be recorded and validated through a decentralized network. In other words, the model still acts, but the verification of its behavior no longer depends entirely on the entity running it.
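To make that separation concrete, here is a minimal Python sketch of what recording and independently validating such a claim could look like. It is not Mira's actual protocol or API, which I haven't seen specified at this level. The record fields, the function names (`claim_digest`, `validator_attests`, `quorum_reached`), and the two-thirds quorum are all assumptions chosen for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from fractions import Fraction


@dataclass(frozen=True)
class ExecutionClaim:
    """Hypothetical record of one AI action; field names are illustrative."""
    inputs: str
    constraints: str
    context: str
    output: str


def claim_digest(claim: ExecutionClaim) -> str:
    """Canonical SHA-256 digest, so every party hashes identical bytes."""
    payload = json.dumps(asdict(claim), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def validator_attests(observed: ExecutionClaim, published_digest: str) -> bool:
    """A validator recomputes the digest from the record it observed itself."""
    return claim_digest(observed) == published_digest


def quorum_reached(attestations: list[bool],
                   threshold: Fraction = Fraction(2, 3)) -> bool:
    """Accept only if at least `threshold` of validators attest (assumed rule)."""
    if not attestations:
        return False
    return Fraction(sum(attestations), len(attestations)) >= threshold


# The operator publishes a digest of what it says happened.
claim = ExecutionClaim(
    inputs="price feed snapshot",
    constraints="max position size 100",
    context="model v1, production",
    output="BUY 40",
)
published = claim_digest(claim)

# Two validators observed the same record; a third saw a different output.
altered = ExecutionClaim(claim.inputs, claim.constraints, claim.context, "BUY 400")
votes = [
    validator_attests(claim, published),
    validator_attests(claim, published),
    validator_attests(altered, published),
]
print("claim accepted:", quorum_reached(votes))
```

The point of the sketch is only that acceptance comes from several parties recomputing the same digest, not from the operator's own log. A real network would also need signatures, validator selection, and incentives, none of which are modeled here.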

From an infrastructure perspective, that separation feels deliberate.

Most blockchain projects that intersect with AI emphasize marketplaces, compute networks, or model incentives. Mira’s emphasis on verification places it in a different category. Rather than trying to decentralize intelligence itself, it attempts to decentralize the trust layer around it. That distinction may not generate excitement, but it addresses a structural problem that grows more visible as AI systems become operational rather than experimental.

Still, I’m careful not to assume that specialized infrastructure automatically solves the issue.

Verification layers introduce friction. They require integration into existing systems, coordination among validators, and economic incentives that keep validators honest and their attestations reliable. If the verification process becomes too complex or too expensive, operators may simply bypass it. Centralized logs may be imperfect, but they are simple and fast. Any decentralized alternative must justify its existence through practical reliability, not philosophical appeal.

Another challenge is scope. AI systems operate in wildly different environments—from financial trading platforms to healthcare diagnostics to autonomous robotics. A verification network designed for one domain may struggle to generalize across others. Mira’s specialization suggests an awareness of that complexity, but specialization can also limit reach if the infrastructure cannot adapt.

What I find noteworthy, however, is Mira’s restraint. It doesn’t claim to resolve every problem associated with AI trust. Instead, it narrows the problem to something enforceable: verifying that AI systems operated within declared boundaries. Those boundaries might involve approved data sources, predefined rules, or execution conditions. The network does not judge whether a decision was wise or ethical. It verifies only whether the system followed the stated framework.
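A boundary check of this kind is easy to picture in code. The Python sketch below is my own illustration, not something taken from Mira: `DeclaredBoundary`, `ExecutionRecord`, `boundary_violations`, and the specific rule (a maximum order value) are hypothetical stand-ins for approved data sources, predefined rules, and execution conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeclaredBoundary:
    """Hypothetical framework an operator commits to before execution."""
    approved_sources: frozenset[str]
    max_order_value: float                 # example of a predefined rule
    allowed_environments: frozenset[str]   # example of an execution condition


@dataclass(frozen=True)
class ExecutionRecord:
    """Hypothetical record of what the system actually did."""
    sources_used: frozenset[str]
    order_value: float
    environment: str


def boundary_violations(record: ExecutionRecord,
                        boundary: DeclaredBoundary) -> list[str]:
    """List every way the record falls outside the declared boundary.

    An empty list means the action followed the stated framework.
    It says nothing about whether the action was wise or ethical.
    """
    violations = []
    unapproved = record.sources_used - boundary.approved_sources
    if unapproved:
        violations.append(f"unapproved data sources: {sorted(unapproved)}")
    if record.order_value > boundary.max_order_value:
        violations.append(
            f"order value {record.order_value} exceeds {boundary.max_order_value}"
        )
    if record.environment not in boundary.allowed_environments:
        violations.append(f"environment not allowed: {record.environment}")
    return violations


boundary = DeclaredBoundary(
    approved_sources=frozenset({"exchange_feed", "internal_risk_db"}),
    max_order_value=10_000.0,
    allowed_environments=frozenset({"production"}),
)
record = ExecutionRecord(
    sources_used=frozenset({"exchange_feed", "social_media_scrape"}),
    order_value=2_500.0,
    environment="production",
)
for problem in boundary_violations(record, boundary):
    print(problem)
```

In this example the order is within its limit and may even have been a good trade, yet the record still fails because it drew on a source that was never declared. That is the narrow question a verification layer of this kind would answer.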

That may sound modest, but modest infrastructure often proves more durable than ambitious promises.

As AI systems continue expanding into areas where decisions carry financial or regulatory consequences, the demand for verifiable records will likely increase. Institutions rarely rely on explanations alone when accountability is required. They rely on systems that produce evidence.

Whether Mira becomes a foundational layer for that evidence is still uncertain. Decentralized verification networks must prove themselves under operational pressure, not just in theoretical arguments. They must remain credible, affordable, and usable even when the systems they verify are moving quickly.

For now, Mira feels less like a solution to the AI trust crisis and more like an attempt to define the infrastructure around it. If that infrastructure proves reliable, it may quietly shape how AI systems are audited and understood.

And if it doesn’t, the search for a trustworthy verification layer will continue, likely taking new forms as the technology evolves.