I remember the first time “verified” AI cost me money in a way that wasn’t dramatic, just annoying. I was running a tight little routine: scrape headlines, let a model classify what mattered, then I’d decide whether to size up or sit out. One morning it flagged a regulatory headline as “non-material,” and I treated it like noise. Two hours later liquidity thinned, the market repriced the risk anyway, and I got clipped for being lazy. The worst part wasn’t the loss. It was realizing I couldn’t prove why the model made that call, or whether it even checked the right thing. It was just vibes with a confident tone.

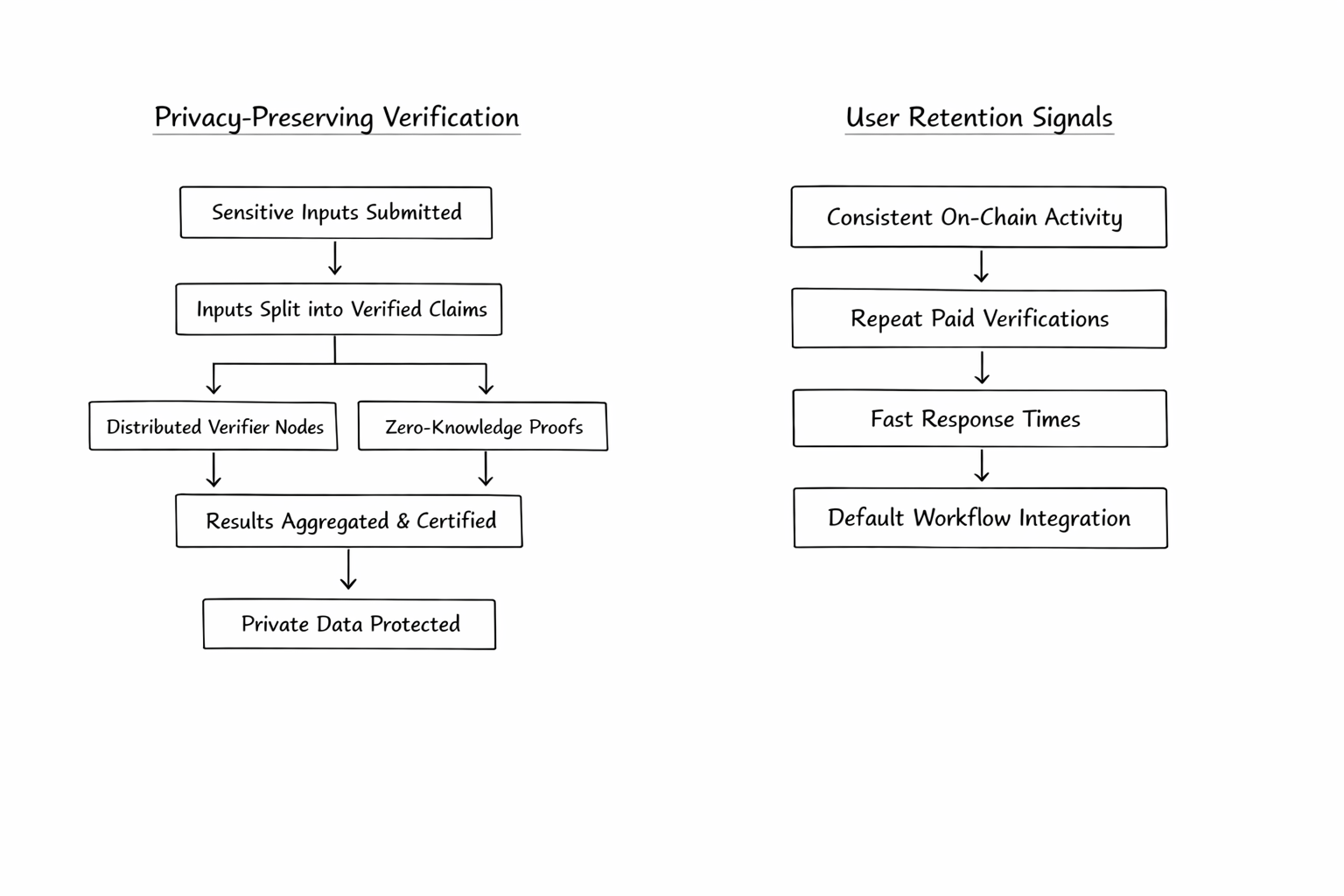

That’s why Mira has my attention, but not in a fanboy way. I’m interested in the friction it’s willing to introduce. Mira’s whitepaper describes a workflow where output gets broken into smaller, independently checkable claims, which are then sent through distributed consensus across different verifier models, and you get a cryptographic certificate back that records what cleared and what didn’t. Think of it like taking a messy trader’s narrative and forcing it into trade logs: entries, exits, assumptions, timestamps. Less stylish, more defensible.

But here’s the thing I didn’t appreciate until I actually tried to reason about how this would get used in real flows: verification and privacy are natural enemies. The more you show verifiers, the easier it is to check. The less you show, the more the system starts verifying shadows instead of substance. That tradeoff is the entire game if you’re talking about finance, legal, customer support, or anything that touches user data. Mira itself frames the ambition as verifying everything from simple statements to complex content like documentation and code. That scope is exactly where privacy stops being a footnote and becomes the product risk.

So as a trader, I don’t start with “does this reduce hallucinations?” I start with: where does the sensitive stuff go, and what’s left on-chain? On-chain permanence cuts both ways. If certificates are truly useful, they become receipts you can audit later. That’s the upside. The downside is that you’ve created a breadcrumb trail of what someone asked, when they asked it, and what the system judged as true. Even if the raw text isn’t stored, metadata has teeth. Anyone who has watched wallets get clustered knows how “anonymous” turns into “obvious” once patterns emerge.

When I look at Mira’s footprint, I try to separate price chatter from actual usage signals. The token market is small enough that narrative can push it around, so I treat the tape as a mood ring.
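To make the claim-by-claim workflow concrete, here’s a toy Python sketch of the idea as I read it from the whitepaper’s description: split an output into claims, have several verifiers vote on each claim, and hand back a record of what cleared. Treat everything here as an assumption for illustration. The sentence-splitting “decomposition,” the lambda “verifiers,” the two-thirds quorum, and the SHA-256 digest standing in for a real certificate are all hypothetical. None of it is Mira’s actual API or consensus rules.

```python
import hashlib
import json
from collections import Counter
from typing import Callable

# Hypothetical verifier: takes one claim, returns True if it judges the claim valid.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> list[str]:
    """Naive stand-in for claim decomposition: one claim per sentence."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify(output: str, verifiers: list[Verifier], quorum: float = 2 / 3) -> dict:
    """Send every claim to every verifier; a claim clears if at least `quorum` of them accept it."""
    results = []
    for claim in split_into_claims(output):
        votes = Counter(v(claim) for v in verifiers)
        results.append({
            "claim": claim,
            "accept": votes[True],
            "reject": votes[False],
            "cleared": votes[True] / len(verifiers) >= quorum,
        })
    # A SHA-256 digest of the results stands in for a real signed certificate.
    digest = hashlib.sha256(json.dumps(results, sort_keys=True).encode()).hexdigest()
    return {"results": results, "digest": digest}


# Toy verifiers: one rubber-stamps everything, two reject anything labeled "non-material".
verifiers: list[Verifier] = [
    lambda claim: True,
    lambda claim: "non-material" not in claim,
    lambda claim: "non-material" not in claim,
]

cert = verify("The filing was published today. The ruling is non-material.", verifiers)
for r in cert["results"]:
    print(r["cleared"], "|", r["claim"])
# True | The filing was published today
# False | The ruling is non-material
```

Notice that every verifier receives every claim in plaintext. That’s the privacy tension in miniature: the sketch verifies well precisely because it shows everything to everyone.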
CoinMarketCap currently shows Mira at roughly a $22M market cap with about 244.9M tokens circulating (max supply 1B). That’s not a guarantee of anything, but it tells you you’re not trading a ghost either. More interesting to me is that the token contract on Base shows a meaningful amount of transactional activity: BaseScan lists “Latest 25 from a total of 23,077 transactions” on the contract page I checked. Not all transactions are “verification demand,” obviously. Transfers are transfers. But numbers like that at least tell you something is happening at the contract level beyond a handful of early holders.

Still, here’s my frustration with most “trust layer” tokens: retention. The retention problem kills these ideas more often than the tech does. Traders love a clean story. Real users don’t care about your story; they care about whether it makes their workflow easier next week. Verification is inherently an extra step, and extra steps get cut the second incentives fade or latency becomes a pain.

This is where privacy and retention collide in a nasty way. If the only way to verify well is to ship chunks of user context to a network of third-party verifiers, a lot of serious teams will simply say “no,” or they’ll restrict usage to non-sensitive queries. That shrinks your addressable demand to low-stakes content, and low-stakes content rarely pays enough in fees to sustain a verification network long term. You can try to subsidize it, but subsidies are rented attention, and traders know how that movie ends.

Mira seems aware that participation needs to be economically real. The whitepaper leans on staking and slashing to make guessing irrational and to align verifiers with honest inference. And the delegator program page literally says the contribution pool hit its cap, which hints at demand for yield, participation, or both. That’s encouraging, but it’s not the same thing as retention on the customer side. Delegators showing up is supply.
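The staking-and-slashing argument is, at bottom, expected-value arithmetic. Here’s a rough Python sketch with made-up numbers. The whitepaper’s real reward, slash, and cost parameters aren’t quoted here, so `Params`, `ev_guess`, and `ev_honest` are hypothetical, and “matches consensus” is treated as the only thing that gets paid or punished. The `options` parameter reflects a simple point: the more answer choices a claim has, the worse a random guesser’s hit rate.

```python
from dataclasses import dataclass


@dataclass
class Params:
    reward: float        # paid when a verifier's answer matches consensus (made-up units)
    slash: float         # stake lost when the answer deviates from consensus
    compute_cost: float  # cost of actually running inference on a claim


def ev_guess(p: Params, options: int = 2) -> float:
    """Expected value per claim of answering uniformly at random among `options` choices (no compute spent)."""
    hit = 1 / options
    return hit * p.reward - (1 - hit) * p.slash


def ev_honest(p: Params, accuracy: float) -> float:
    """Expected value per claim of running inference that matches consensus with probability `accuracy`."""
    return accuracy * p.reward - (1 - accuracy) * p.slash - p.compute_cost


for label, p in [("no slashing", Params(1.0, 0.0, 0.5)), ("with slashing", Params(1.0, 5.0, 0.5))]:
    print(f"{label}: guess={ev_guess(p):.2f} honest={ev_honest(p, accuracy=0.95):.2f}")
# no slashing: guess=0.50 honest=0.45
# with slashing: guess=-2.00 honest=0.20
```

With no slashing and expensive inference, random guessing pays better than honest work; add a slash and the ordering flips. This only prices the verifier side, though. It says nothing about whether customers keep paying.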
What I want to see is repeat, paid verification volume that doesn’t crater when incentives cool.

So what am I watching that would actually change my mind, bullish or bearish? First, I want evidence that privacy-preserving verification isn’t just promised but operational. Not “we’ll do private data later,” but a clear path where sensitive inputs can be verified without being exposed to every verifier node. The whitepaper gestures at expanding to private data over time. Until that’s real, the market risk is simple: usage tops out in the shallow end of the pool.

Second, I want retention signals that feel like workflow gravity. If you’re eyeing this as a trade, don’t get hypnotized by a nice headline about “trust.” Track whether the network becomes a default receipt layer that teams keep using because the alternative is worse. Watch for on-chain patterns consistent with repeated activity rather than one-off bursts. Watch whether the product is fast enough that people don’t bypass it under deadline pressure. Watch whether privacy constraints force the use case into irrelevance.

If you take one action after reading this, make it practical: pull up the on-chain contract activity, compare it against the kind of usage that would need verification in the first place, and ask yourself whether that demand can persist without bribery. If Mira can solve privacy without watering down verification, retention gets easier and the token has a reason to exist beyond narrative. If it can’t, “trust” stays a slogan, and slogans don’t compound.
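For that practical step, one crude way to separate repeat activity from one-off bursts is to count how many distinct days each address shows up, and how much of the total activity lands on the single busiest day. The Python sketch below assumes you’ve already copied contract activity into hypothetical `(address, day)` pairs yourself; I’m not assuming any particular BaseScan export format or API, and the three-day threshold is an arbitrary choice. Keep in mind that transfers aren’t verification demand, so at best this measures holder behavior.

```python
from collections import defaultdict
from datetime import date


def activity_profile(events: list[tuple[str, date]], min_days: int = 3) -> dict:
    """Summarize (address, day) activity pairs into crude repeat-vs-burst signals."""
    days_by_addr: dict[str, set[date]] = defaultdict(set)
    events_per_day: dict[date, int] = defaultdict(int)
    for addr, day in events:
        days_by_addr[addr].add(day)
        events_per_day[day] += 1

    # Addresses seen on at least `min_days` distinct days count as "repeaters".
    repeaters = sum(1 for days in days_by_addr.values() if len(days) >= min_days)
    return {
        "addresses": len(days_by_addr),
        "repeat_share": repeaters / len(days_by_addr) if days_by_addr else 0.0,
        # Share of all events that landed on the single busiest day: high means bursty.
        "peak_day_share": max(events_per_day.values()) / len(events) if events else 0.0,
    }


# Toy data (made up): ten addresses that show up once on the same day,
# plus one address that comes back on three different days.
toy = [(f"0x{i:02x}", date(2024, 1, 1)) for i in range(10)]
toy += [("0xaa", date(2024, 1, d)) for d in (1, 2, 3)]
profile = activity_profile(toy)
print(profile)
```

In the toy data, only one of eleven addresses is a repeater and most of the activity sits on one day, which is what a burst looks like. Steady workflow gravity would push `repeat_share` up and `peak_day_share` down over time.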