Imagine you have access to a super-smart artificial intelligence, but every time it gives you an answer, you can’t be sure whether it’s truly correct or just making things up in a confident tone. That’s the classic problem with modern AI systems right now: they’re impressive, but they can also hallucinate and make serious mistakes, which matters most in high-stakes areas like healthcare or financial planning.

Mira Network was created to tackle that problem. It’s a decentralized verification network that aims to make AI outputs trustworthy and verifiable using blockchain technology. Instead of relying on one central authority to judge AI responses, Mira spreads the work across many independent participants and then uses consensus to decide what’s “true” and what isn’t. In other words, the network doesn’t trust any single AI model or server; it trusts the agreement of many.

That vision is compelling, and it’s part of why Binance announced MIRA in its HODLer Airdrops program and why KuCoin listed MIRA for trading against USDT on September 26, 2025, at noon UTC. Deposits opened earlier, as is usual for exchange listings, so people could transfer tokens in advance.

If you’re new to this world, it’s perfectly normal to pause and say, “I’m curious but skeptical.” That’s a good mindset: it keeps you thoughtful about both opportunity and risk.

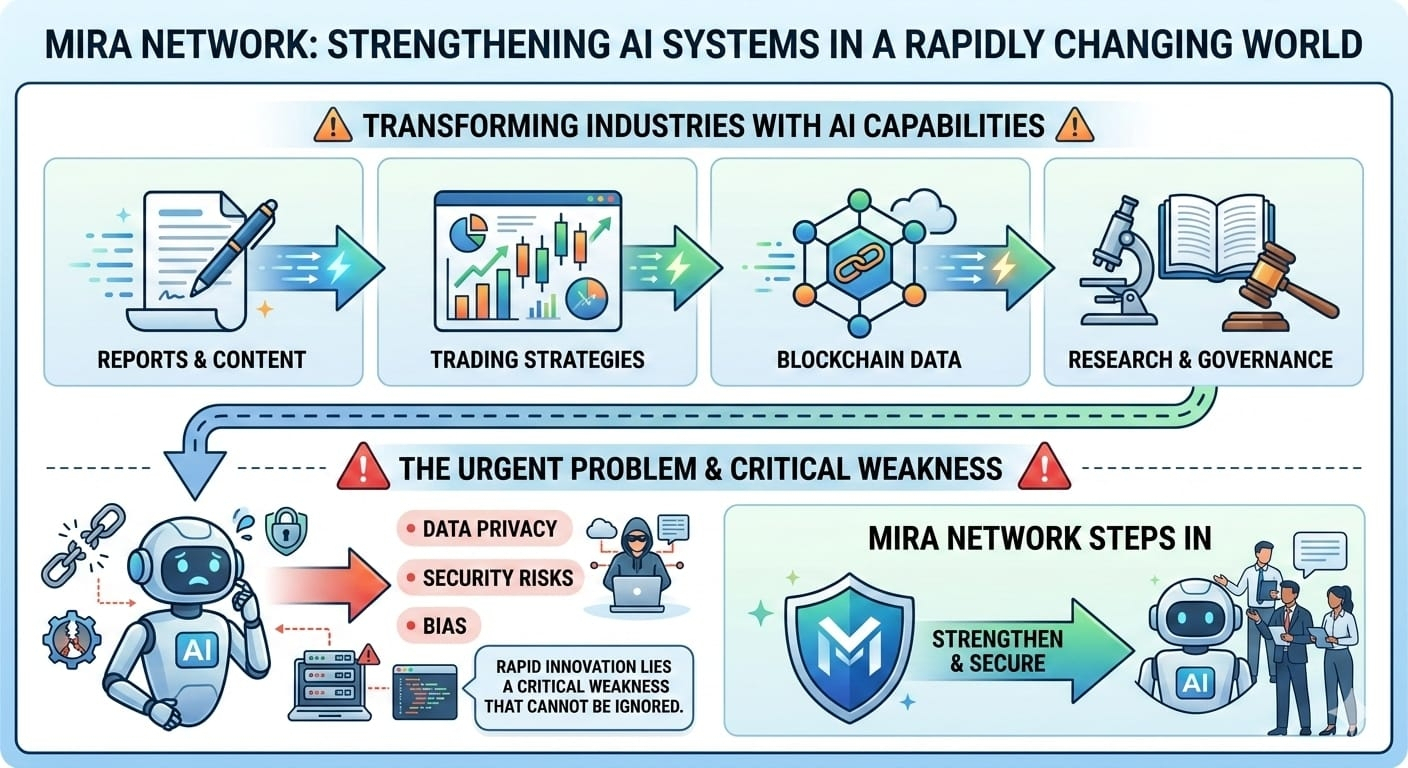

The Core Vision: Fixing AI’s “Black Box”

AI systems today are often described as “black boxes”: they take in prompts and return answers, but you can’t easily check whether those answers are correct or how the model arrived at them. They might be wrong or biased, and sometimes the mistakes are subtle but serious. Mira’s vision is to turn that black box into something you can inspect and verify.

Here’s how that works at a fundamental level:

Mira breaks AI outputs down into small pieces, called claims, and then has each claim verified independently by many nodes in a decentralized network. Those nodes don’t rely on a single AI model or one company’s secret methods: different participants independently confirm the same piece of output before it is accepted as “true.” The resulting consensus is recorded on a blockchain, so anyone can verify the outcome after the fact, and the record is tamper-resistant.

In simple terms: it’s like having many trusted teachers check an essay line by line, and you count only the parts they all agree on instead of trusting one teacher who might be tired or biased. This is the heart of Mira’s decentralized verification approach.
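The “many teachers” idea can be sketched in a few lines of Python. This is a toy under loud assumptions: each sentence is treated as one claim, each verifier is a plain function returning True or False, and a claim survives only if every verifier agrees. The helpers `split_into_claims` and `accept_unanimous` are hypothetical names, not part of any Mira API, and real claim extraction and verification are far more involved.

```python
import re
from typing import Callable

# A verifier takes one claim and returns True (agrees) or False (disagrees).
Verifier = Callable[[str], bool]


def split_into_claims(answer: str) -> list[str]:
    """Hypothetical helper: naively treat each sentence as one claim."""
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [s for s in sentences if s]


def accept_unanimous(answer: str, verifiers: list[Verifier]) -> list[str]:
    """Hypothetical helper: keep only the claims that every verifier agrees with."""
    return [
        claim
        for claim in split_into_claims(answer)
        if all(verify(claim) for verify in verifiers)
    ]


# Toy verifiers standing in for independent checkers (illustrative only).
known_facts = {"Paris is the capital of France.", "Water boils at 100 C at sea level."}
verifiers: list[Verifier] = [
    lambda claim: claim in known_facts,   # checks against a small fact list
    lambda claim: "cheese" not in claim,  # a crude rule-based check
    lambda claim: len(claim) < 80,        # rejects overly long claims
]

answer = (
    "Paris is the capital of France. "
    "The Moon is made of cheese. "
    "Water boils at 100 C at sea level."
)
print(accept_unanimous(answer, verifiers))
# ['Paris is the capital of France.', 'Water boils at 100 C at sea level.']
```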

The team also provides building blocks, such as APIs and development tools, so developers can embed this verification system in real applications. Those apps can then offer AI outputs that have been verified, which matters if you’re building something people rely on for money or health advice.

Why did the team choose this design? Because they believe that for AI to be truly “autonomous” — meaning it can operate without constant human oversight — it needs a trust layer that’s transparent and decentralized.

How It Works Step by Step

Let’s break down this unusual vision into steps you can easily follow.

1. AI Output Comes In

A user or program asks an AI system a question, for example: “What are the safest heart monitoring practices for seniors?”

2. Break Into Claims

Mira splits the AI’s answer into small declarative statements (these are the “claims”). This makes each idea easy to verify.

3. Distributed Verification

These claims are sent to a network of validators. Each validator independently checks the claims it receives against other models or criteria, without seeing the full set of claims, which helps protect privacy.

4. Consensus Agreement

If enough validators agree on a claim, it becomes part of the verified answer; if they don’t, the claim is flagged or rechecked.

5. Blockchain Record

The final verified claims are written into a blockchain ledger, making them tamper-proof and auditable by anyone later on.

6. Use in Real Apps

Developers build apps or services on top of this network, such as verified education tools, AI chat apps, and financial decision aids, that use only verified outputs instead of raw, possibly untrustworthy answers.
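Steps 2 to 4 can be sketched as below. Everything specific here is an assumption for illustration, not Mira’s documented protocol: claims go to randomly chosen validators, each claim is reviewed by a fixed number of them, and a two-thirds supermajority decides whether it is verified, rejected, or flagged for a recheck. The function names `assign_claims` and `verdict` are hypothetical.

```python
import random
from fractions import Fraction


def assign_claims(claims: list[str], validators: list[str],
                  per_claim: int, seed: int = 0) -> dict[str, list[str]]:
    """Hypothetical: give each claim to `per_claim` distinct, randomly chosen validators.

    A validator only receives the claims it is assigned, so it typically
    sees just part of the answer.
    """
    rng = random.Random(seed)
    return {claim: rng.sample(validators, per_claim) for claim in claims}


def verdict(votes: list[bool], threshold: Fraction = Fraction(2, 3)) -> str:
    """Hypothetical: turn one claim's votes into 'verified', 'rejected', or 'flagged'."""
    if not votes:
        return "flagged"  # nobody checked it, so it cannot be accepted
    n = len(votes)
    agree = sum(votes)  # True counts as 1, False as 0
    if agree >= threshold * n:
        return "verified"
    if n - agree >= threshold * n:
        return "rejected"
    return "flagged"  # no supermajority either way: recheck


validators = [f"node-{i}" for i in range(6)]
claims = ["claim A", "claim B", "claim C"]
for claim, nodes in assign_claims(claims, validators, per_claim=3).items():
    print(claim, "->", nodes)

print(verdict([True, True, True]))          # verified
print(verdict([True, True, False]))         # verified (2 of 3 meets 2/3)
print(verdict([True, False, False, True]))  # flagged
```

A 2/3 threshold is a common choice in Byzantine fault-tolerant systems, but Mira’s real threshold and assignment rules may differ.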

That’s the basic architecture in a nutshell, without getting lost in jargon. Because the blockchain record is public, anyone can check the history of every verification that has happened. That transparency is what gives Mira’s system its strength.
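The tamper-resistance in step 5 comes from chaining: each record stores the hash of the record before it, so editing any past entry breaks every later link. The sketch below shows only that idea, using Python’s standard `hashlib` and `json` modules; the ledger, its fields, and the helper names are hypothetical, and it is a single-machine list, not a blockchain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(ledger: list[dict], record: dict) -> None:
    """Add a record that commits to the hash of the entry before it."""
    prev = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"prev": prev, "record": record, "hash": entry_hash(prev, record)})


def audit(ledger: list[dict]) -> bool:
    """Recompute every link; any edited record or broken link fails the audit."""
    prev = GENESIS
    for entry in ledger:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


ledger: list[dict] = []
append(ledger, {"claim": "Paris is the capital of France.", "verdict": "verified"})
append(ledger, {"claim": "The Moon is made of cheese.", "verdict": "rejected"})
print(audit(ledger))  # True

ledger[1]["record"]["verdict"] = "verified"  # quietly rewrite history
print(audit(ledger))  # False
```

A hash chain on its own only makes tampering detectable: someone holding the only copy could recompute every hash. Public blockchains close that gap by replicating the chain across many independent nodes that must agree on each new entry.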

Why This Design Makes Sense

There are several big ideas behind this design choice that are worth understanding:

First, centralized AI verification still leaves risks. If only one company or single machine decides what’s “trustworthy,” you are trusting that company’s hidden models, data, and policies. That could be biased or manipulated. Decentralizing that function reduces single points of failure.

Second, many real problems like medical diagnosis or legal advice need high confidence before decisions are made. Mira tries to provide assurances that those decisions have been checked by many parties, not just one.

Third, developers can use this verification layer as a building block. Instead of building their own trust systems from scratch, they can plug into Mira’s APIs and smart modules.

All of this is designed to help AI move from a tool you supervise to a system that can make trusted decisions by itself when high accuracy is needed. That’s a huge leap if it works reliably.
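Why should checks by many parties beat a single checker? Under a strong simplifying assumption, namely that each verifier is right with the same probability p and makes errors independently of the others, the chance that a majority is right can be computed directly from the binomial distribution (the classic Condorcet jury setup). The function name and numbers are illustrative only and do not describe Mira’s real verifiers.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """P(a strict majority of n independent verifiers is correct), each correct with prob. p."""
    if n < 1 or n % 2 == 0:
        raise ValueError("use an odd, positive number of verifiers to avoid ties")
    k_min = n // 2 + 1  # smallest strict majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


for n in (1, 3, 5):
    print(n, round(majority_correct(n, 0.9), 4))
# 1 0.9
# 3 0.972
# 5 0.9914
```

The caveat matters as much as the math: if verifiers share training data or blind spots, their errors are correlated and the gain shrinks, and if p is below 0.5, adding voters makes the majority worse, not better.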

The MIRA Token: What It Does in the Network

To make all of this work, Mira uses a native digital token called MIRA. Tokens are common in blockchain projects because they serve as incentives, access keys, and governance levers.

Here’s how MIRA functions within the ecosystem:

MIRA is used to pay for access to network services, like running verification tasks or using the API for developers. That means the token is essentially the fuel of the system.

You can stake MIRA to help secure the network: validators lock up tokens as collateral, putting their reputation and resources on the line, and honest verifiers earn rewards. If they try to cheat, they can lose part of their stake. This economic penalty, commonly called slashing, is what discourages dishonest behavior.
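The staking incentive can be sketched as a simple settlement round. The numbers and rules are hypothetical, not Mira’s actual parameters: honest validators split a reward pool (which might, for example, be funded by the fees users pay for verification) in proportion to their stake, and validators caught cheating lose a fixed percentage of theirs. How cheating is detected is out of scope; the caller passes in the set of cheaters.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # hypothetical: tokens locked as collateral, in whole units


def settle_round(validators: list[Validator], cheaters: set[str],
                 reward_pool: int, slash_percent: int = 10) -> int:
    """Hypothetical settlement: pay honest validators pro rata, slash cheaters.

    Returns the total amount slashed. Integer division means any rounding
    remainder of the reward pool is left unpaid.
    """
    honest = [v for v in validators if v.name not in cheaters]
    honest_stake = sum(v.stake for v in honest)
    if honest_stake > 0:
        for v in honest:
            v.stake += reward_pool * v.stake // honest_stake
    slashed = 0
    for v in validators:
        if v.name in cheaters:
            penalty = v.stake * slash_percent // 100
            v.stake -= penalty  # slashed tokens simply leave the validator's balance here
            slashed += penalty
    return slashed


nodes = [Validator("alice", 1000), Validator("bob", 3000), Validator("mallory", 2000)]
slashed = settle_round(nodes, cheaters={"mallory"}, reward_pool=400)
print([(v.name, v.stake) for v in nodes], slashed)
# [('alice', 1100), ('bob', 3300), ('mallory', 1800)] 200
```

Because the penalty comes out of the cheater’s own collateral, cheating only pays if the expected gain exceeds the expected loss, which is the economic argument behind staking.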

MIRA can also be used for governance, meaning token holders can vote on decisions like upgrades or fee changes in the network. This is meant to keep the system community-driven instead of controlled by a central corporation.

One design choice here is that governance and staking create economic alignment: people who hold tokens are naturally motivated to keep the network healthy and reliable.
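Token-weighted voting, one common way governance tokens are used, can be sketched like this. The quorum rule, the option names, and the balances are hypothetical, and Mira’s real governance process may work differently; the point is how voting weight follows token holdings.

```python
from collections import defaultdict


def tally(votes: dict[str, str], balances: dict[str, int], quorum: int) -> str:
    """Hypothetical token-weighted vote.

    Each holder's chosen option is weighted by their token balance. Returns the
    option with the most weight, or "no quorum" if too few tokens took part.
    Ties go to the option that received its first vote earliest.
    """
    weights: defaultdict[str, int] = defaultdict(int)
    for holder, option in votes.items():
        weights[option] += balances.get(holder, 0)
    if not weights or sum(weights.values()) < quorum:
        return "no quorum"
    return max(weights, key=weights.get)


balances = {"ana": 500, "ben": 300, "chen": 250, "whale": 1200}
votes = {"ana": "raise fee", "ben": "keep fee", "chen": "keep fee"}
print(tally(votes, balances, quorum=1000))  # keep fee (550 vs 500)

votes["whale"] = "raise fee"
print(tally(votes, balances, quorum=1000))  # raise fee (1700 vs 550)
```

The second vote shows the flip side of economic alignment: a single large holder can outweigh several smaller ones, which is why token distribution matters for governance as well as for markets.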

Real Metrics That Matter for MIRA’s Health

When we’re judging any crypto project, especially one as ambitious as this, we need to focus on real metrics rather than hype.

1. Network Usage: How many verification tasks are being processed? A system with real utility will show consistent growth in activity.

2. Validator Participation and Decentralization: Are many independent participants running nodes, or is it dominated by a few? True decentralization matters.

3. Token Distribution and Circulating Supply: If too many tokens are held by insiders, markets can be manipulated.

4. Partnerships and Integrations: Real usage in real apps is better than fancy slogans.

5. Development Progress: Are milestones met on time? Delays without transparency are a risk.
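Metrics 2 and 3 can be turned into numbers. A widely used decentralization measure is the Nakamoto coefficient: the smallest number of entities that together control more than some threshold of stake or tokens. The sketch assumes a 1/3 threshold, the usual fault-tolerance bound in Byzantine fault-tolerant consensus (use 1/2 for majority-based systems), and uses made-up stake figures; it is not based on Mira’s real distribution.

```python
from fractions import Fraction


def nakamoto_coefficient(stakes: list[int], threshold: Fraction = Fraction(1, 3)) -> int:
    """Smallest number of holders whose combined share exceeds `threshold`."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("need a positive total stake")
    running = 0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if Fraction(running, total) > threshold:
            return count
    return len(stakes)  # only reached if threshold >= 1


def top_share(balances: list[int], n: int) -> Fraction:
    """Fraction of the total held by the n largest holders."""
    return Fraction(sum(sorted(balances, reverse=True)[:n]), sum(balances))


concentrated = [4000, 3000, 1000, 1000, 500, 500]  # hypothetical stakes
spread_out = [1000] * 10                            # hypothetical stakes

print(nakamoto_coefficient(concentrated))  # 1
print(nakamoto_coefficient(spread_out))    # 4
print(float(top_share(concentrated, 2)))   # 0.7
```

A higher coefficient means more independent parties would have to cooperate to control the network, so tracking it over time is a concrete way to check whether decentralization claims hold up.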

Some sources say Mira was serving millions of users and processing billions of tokens daily during its testnet and early launch period. That’s a promising sign, if it can be independently verified.

Main Risks and Weaknesses

No project is perfect, and Mira has several risks that are worth understanding without fear:

First, complexity is high. Combining blockchain with AI verification is not simple, and complexity often leads to bugs, security holes, or scaling problems.

Second, consensus verification needs honest participation from many independent actors. If many validators collude or misbehave, the whole trust premise falls apart.

Third, token economics and distribution could be skewed: if too much power is held by early insiders, markets may be unfair to later participants.

Fourth, many claimed improvements in AI accuracy are hard to verify independently, so figures such as “96 percent accuracy” should be treated as optimistic projections that could change over time.

Finally, because validator rewards are paid in the token, a sharp drop in its market price could make validating less attractive; validators might leave, weakening the network.

These are serious risks, but they’re also typical of cutting-edge decentralized tech.
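The collusion risk can be estimated with a standard probability calculation. Assume, hypothetically, that each claim is checked by a committee drawn uniformly at random from all validators, and that a fixed number of validators collude. The chance that colluders win a majority on one committee is then a hypergeometric tail probability. This ignores stake weighting, Sybil identities, and adaptive attacks, and none of the figures come from Mira.

```python
from math import comb


def capture_probability(total: int, malicious: int, committee: int, needed: int) -> float:
    """P(at least `needed` colluders land on a randomly drawn committee).

    Committee members are drawn uniformly without replacement from `total`
    validators, `malicious` of whom collude (a hypergeometric tail).
    """
    hits = sum(
        comb(malicious, k) * comb(total - malicious, committee - k)
        for k in range(needed, committee + 1)
    )
    return hits / comb(total, committee)


# Hypothetical network: 100 validators, 20 of them colluding.
for size in (3, 9, 21):
    majority = size // 2 + 1
    print(size, f"{capture_probability(100, 20, size, majority):.6f}")
```

With 20 colluders out of 100, the printed probability falls as committees grow. Push `malicious` toward half of `total` and it climbs back to roughly a coin flip, which is why the second risk above is so serious.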

What the Future Could Look Like

If Mira delivers on its promises, we could see a world where AI does not need human oversight to verify its accuracy for big decisions: doctors, lawyers, and financial analysts could rely on autonomous systems whose outputs have been checked by many verifiers, not just one.

I’m not saying this will happen overnight. The current reality is that these systems are still early and experimental; they’re more promise than mature product. But projects like this are pushing a boundary: they’re designing the trust layer for future AI systems.

We’re seeing growing interest from developers and users who want verifiable intelligence instead of blind trust, and that’s meaningful in itself.

Closing: Calm Hope and Thoughtful Reflection

If you’re curious about crypto and AI and feel inspired by the idea of decentralizing trust, Mira Network is a project worth learning about deeply. It tackles one of the hardest problems in technology today: can we decide what’s true in a world where machines think for us?

That’s a lofty question, but asking big questions is how progress happens. Not every experiment succeeds, not every design survives scrutiny, and that’s okay. What matters is thinking critically, evaluating real metrics, and continuing to learn.

In the end, what keeps this space interesting is not blind optimism or fear. It’s informed curiosity: a calm willingness to explore new ideas with openness and caution. If you feel motivated to dig deeper, ask questions, and watch how this project evolves, that’s a sign you’re learning in the right way.