The distance between a functional AI prototype and a production-grade AI application is, in 2026, measured less in engineering complexity and more in trust. Any developer with access to a modern large language model API can assemble a working prototype in hours. The harder problem — the one that separates experimental demos from applications trusted with financial decisions, patient data, or autonomous execution — is verification. How does a production system guarantee that what an AI model asserts is what is actually true? Mira Network's answer to this question is not a philosophical framework but an operational one: a Unified SDK, a Verified Generate API, and a decentralized verification network that together transform probabilistic AI outputs into cryptoeconomically attested facts.

Understanding why this matters requires first understanding the scale of the hallucination problem in real deployments. From fabricated legal cases used in courtrooms to flawed medical diagnoses and manipulated political content, AI hallucinations have already caused measurable damage, creating economic losses, social instability, and operational disruptions in healthcare, finance, and public policy (CryptoRank.io). These are not edge cases or theoretical risks. They are documented failures in production environments where developers shipped AI applications without a verification layer, because until recently no credible verification layer existed at infrastructure scale.

Mira Network changes the calculus. The protocol deconstructs an AI model's complex response into individual, checkable claims and distributes them to a network of independent verifier nodes, each running a different AI model. The nodes evaluate the claims independently and use a consensus mechanism, analogous to blockchain validation, to determine whether each claim is accurate (Mira). For a developer, the practical implication is significant: rather than shipping an application that trusts a single model's output unconditionally, they can ship an application where every output carries a verification certificate derived from multi-model consensus.
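
The decompose-then-vote idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the sentence-splitting decomposition, the toy verifier functions, and the two-thirds threshold are hypothetical stand-ins, not Mira's actual claim extraction, models, or consensus rule.

```python
from dataclasses import dataclass
from typing import Callable, List

# A verifier is any callable that judges a single claim (hypothetical stand-in
# for a node running its own model).
Verifier = Callable[[str], bool]


@dataclass
class ClaimVerdict:
    claim: str
    votes_true: int
    votes_total: int
    accepted: bool


def split_into_claims(response: str) -> List[str]:
    """Naive decomposition: treat each sentence as one claim (illustrative only)."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 2 / 3) -> List[ClaimVerdict]:
    """Ask every verifier about every claim and accept claims that reach the threshold."""
    verdicts = []
    for claim in split_into_claims(response):
        votes = [verifier(claim) for verifier in verifiers]
        yes = sum(votes)
        verdicts.append(ClaimVerdict(claim, yes, len(votes), yes / len(votes) >= threshold))
    return verdicts


# Toy verifiers with different behaviour, standing in for different models.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient(claim: str) -> bool:
    return claim in KNOWN_FACTS or "capital" in claim


verdicts = verify_response(
    "Paris is the capital of France. Berlin is the capital of Spain",
    [strict, lenient, strict],
)
for v in verdicts:
    print(v.claim, v.votes_true, v.votes_total, v.accepted)
```

In this toy run the first claim is accepted by all three verifiers, while the second receives one vote out of three and is rejected, so the response as a whole carries one accepted and one rejected claim rather than a single pass or fail.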

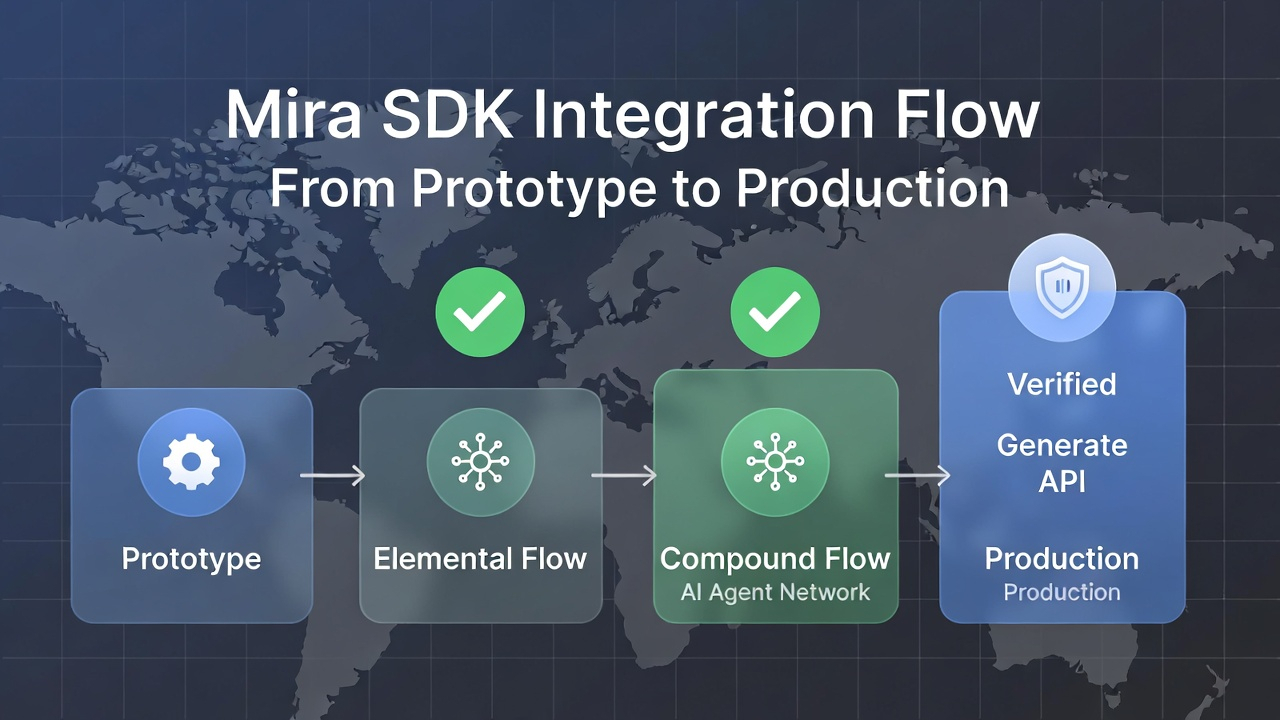

The Developer Entry Point: Unified SDK and Flow Architecture

Mira's developer-facing architecture is organized around two complementary layers. The first is the Unified SDK, which abstracts the complexity of the underlying verification network into a standard interface compatible with existing AI development workflows. The network launched its Voyager testnet in January 2025 with over 250,000 users and institutional adoption of its Dynamic Validator Network. That network enables partners including Kernel, Aethir, IONET, exaBITS, Hyperbolic, and Spheron to operate on-chain verification nodes, cross-validate AI outputs in real time, and earn staking rewards via Proof-of-Stake-Authority consensus (Binance). The mainnet transition in September 2025 matured this infrastructure into a production-grade system accessible through the SDK.

The second layer is Mira Flow, the structured orchestration framework through which developers compose AI logic into verifiable pipelines. Flows exist at two levels of complexity. Elemental flows handle single-purpose inference tasks — a query, a classification, a data extraction — where verification applies to a discrete, bounded output. Compound flows chain multiple elemental flows together, enabling complex multi-step reasoning pipelines where each stage's output is independently verified before it becomes the next stage's input. This architecture is particularly relevant for DeFi analytics agents, automated research pipelines, and autonomous governance tools where a chain of reasoning must be auditable at every link, not merely at its conclusion.

A practical developer pro-tip emerges from this compound flow architecture: when building agents that perform multi-step financial analysis, it is more effective to explicitly define the boundaries between elemental flows rather than submitting a single compound prompt and relying on Mira's automatic decomposition. Explicit flow segmentation gives the verification layer more precise claim boundaries, which increases the specificity of the consensus signal and reduces ambiguity in edge cases where a partially correct claim might otherwise pass or fail as an atomic unit.
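
A rough sketch of explicitly segmented flows follows. The `ElementalFlow` class, the `run_compound_flow` helper, and the local `verify` callables are hypothetical names invented for this example; they are not the Mira Flow API, and the local checks merely stand in for network verification.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ElementalFlow:
    name: str
    run: Callable[[str], str]      # produces this stage's output from its input
    verify: Callable[[str], bool]  # hypothetical stand-in for network verification


@dataclass
class StageResult:
    name: str
    output: str
    verified: bool


def run_compound_flow(stages: List[ElementalFlow], initial_input: str) -> List[StageResult]:
    """Run stages in order; an output feeds the next stage only if it verified."""
    trail = []
    current = initial_input
    for stage in stages:
        output = stage.run(current)
        verified = stage.verify(output)
        trail.append(StageResult(stage.name, output, verified))
        if not verified:
            break  # stop the chain: unverified output never becomes an input
        current = output
    return trail


def is_number(text: str) -> bool:
    try:
        float(text)
        return True
    except ValueError:
        return False


extract_price = ElementalFlow(
    "extract_price",
    run=lambda text: text.split("=")[1].strip(),
    verify=is_number,
)
size_position = ElementalFlow(
    "size_position",
    run=lambda price: str(round(1000 / float(price), 2)),  # units bought with 1000 USD
    verify=lambda out: float(out) > 0,
)

ok_trail = run_compound_flow([extract_price, size_position], "ETH/USD = 2500.0")
bad_trail = run_compound_flow([extract_price, size_position], "ETH/USD = n/a")
print([(r.name, r.output, r.verified) for r in ok_trail])
print([(r.name, r.output, r.verified) for r in bad_trail])
```

Because each stage has its own boundary, the second run stops at `extract_price` and the sizing stage never sees the bad value. The returned trail doubles as a per-link audit record.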

The Verified Generate API: Replacing Trust with Attestation

The Verified Generate API is Mira's core integration point for developers replacing unverified completion calls with verified ones. From an implementation perspective, the API maintains compatibility with standard LLM completion request formats, meaning the integration overhead for an existing application is minimal: the endpoint changes; the verification layer activates. What the developer receives is not merely a text response but a structured output that includes the consensus verdict for each binarized claim within the response.
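
To make the idea of a per-claim structured output concrete, here is a sketch that consumes such a response. The JSON shape, the field names (`text`, `claims`, `verdict`), and the verdict strings are assumptions chosen for illustration; the actual response schema should be taken from Mira's documentation.

```python
import json
from typing import List

# Hypothetical response body: the real schema may differ.
SAMPLE_RESPONSE = """
{
  "text": "ETH closed at 2500 USD. Gas fees fell 10 percent.",
  "claims": [
    {"claim": "ETH closed at 2500 USD", "verdict": "verified"},
    {"claim": "Gas fees fell 10 percent", "verdict": "rejected"}
  ]
}
"""


def unverified_claims(payload: dict) -> List[str]:
    """Return the claims whose verdict is anything other than 'verified'."""
    return [c["claim"] for c in payload["claims"] if c["verdict"] != "verified"]


def safe_to_display(payload: dict) -> bool:
    """Treat the response as usable only when every claim verified."""
    return not unverified_claims(payload)


payload = json.loads(SAMPLE_RESPONSE)
print(unverified_claims(payload))
print(safe_to_display(payload))
```

Gating on the per-claim verdicts, rather than on the text alone, is what lets an application decide whether to show, flag, or discard a response.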

The mainnet processes up to 300 million tokens of data per day with 96% verification accuracy and serves 4.5 million users, establishing Mira as a trust layer on which AI applications can depend for outputs that are not only verifiable but also reliable (Bitget). For a developer calibrating their application's risk tolerance, this throughput figure is operationally significant: the Verified Generate API is not a low-volume research tool but a production system designed for the latency and concurrency demands of live applications.
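
For capacity planning, the daily figure can be converted into an average rate. This is back-of-the-envelope arithmetic on the quoted number only; the 3 million tokens per day used for the example application is a made-up value, and the result says nothing about peak rates or latency.

```python
SECONDS_PER_DAY = 24 * 60 * 60
DAILY_TOKEN_CEILING = 300_000_000  # "up to 300 million tokens" per day, as quoted

# Average rate implied by the daily ceiling (not a peak or guaranteed rate).
average_tokens_per_second = DAILY_TOKEN_CEILING / SECONDS_PER_DAY


def share_of_daily_ceiling(app_tokens_per_day: int) -> float:
    """Fraction of the quoted daily ceiling that one application would consume."""
    return app_tokens_per_day / DAILY_TOKEN_CEILING


print(round(average_tokens_per_second))            # 3472
print(f"{share_of_daily_ceiling(3_000_000):.1%}")  # 1.0%
```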

Smart Model Routing and Load Balancing underpin this performance. Smart Model Routing ensures that each binarized claim is directed to verifier nodes operating the model configurations best suited to evaluate that specific claim type — financial claims to finance-specialized nodes, factual claims about world events to general-knowledge nodes. Load Balancing prevents verification bottlenecks from forming as query volume fluctuates, maintaining consistent response times even under peak load. Together, these systems ensure that the verification layer operates as a performance-transparent component of the application stack rather than a latency-introducing overhead.
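
The two mechanisms can be sketched together: classify a claim, pick the matching pool of nodes, then choose the least-loaded node in that pool. The keyword classifier, pool names, and node identifiers are hypothetical, and least-loaded selection is only one plausible balancing strategy; the source does not describe how Mira implements either step.

```python
from collections import defaultdict
from typing import Dict, List


class ClaimRouter:
    """Toy router: keyword-based claim typing plus least-loaded node selection."""

    FINANCIAL_TERMS = ("price", "yield", "usd", "token")

    def __init__(self, pools: Dict[str, List[str]], default_pool: str):
        self.pools = pools
        self.default_pool = default_pool
        self.load = defaultdict(int)  # claims assigned so far, per node

    def classify(self, claim: str) -> str:
        text = claim.lower()
        if any(term in text for term in self.FINANCIAL_TERMS):
            return "finance"
        return self.default_pool

    def route(self, claim: str) -> str:
        pool = self.pools.get(self.classify(claim), self.pools[self.default_pool])
        node = min(pool, key=lambda n: self.load[n])  # least-loaded node in the pool
        self.load[node] += 1
        return node


router = ClaimRouter(
    pools={"finance": ["fin-1", "fin-2"], "general": ["gen-1"]},
    default_pool="general",
)
assignments = [
    router.route("ETH price is 2500 USD"),
    router.route("The staking yield is 5 percent"),
    router.route("The Eiffel Tower is in Paris"),
]
print(assignments)
```

The two financial claims land on different finance nodes because the second call sees that `fin-1` already carries one claim, while the general-knowledge claim goes to the only general node.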

Low-Stakes vs. High-Stakes: Calibrating Verification Depth

Not all applications require identical verification depth, and Mira's SDK architecture accommodates this through configurable verification parameters. Consider the contrast between two deployment scenarios:

• Low-stakes deployment — A content summarization tool for internal team use, where occasional inaccuracies are tolerable and correctability is high. In this context, a developer might configure lightweight elemental flows with single-pass verification, optimizing for speed and throughput over maximum consensus certainty.

• High-stakes deployment — An autonomous DeFi agent authorized to execute token swaps based on on-chain data analysis, where a single hallucinated price datum could trigger a loss event. Here, compound flows with multi-layer verification and explicit claim sensitivity thresholds are appropriate, accepting higher verification latency in exchange for cryptoeconomic assurance on every output before execution.

This calibration flexibility means Mira is not a one-size-fits-all overhead layer but a configurable trust infrastructure whose depth scales with the consequences of failure.
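
One way to picture the two scenarios is as two verification profiles applied to the same decision function. The parameter names (`passes`, `min_agreement`, `block_on_failure`) and their values are assumptions for illustration, not documented SDK settings.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class VerificationProfile:
    passes: int             # how many verification passes are required
    min_agreement: float    # minimum share of agreeing verifiers per pass
    block_on_failure: bool  # whether a failed check stops execution


# Hypothetical profiles matching the two scenarios above.
LOW_STAKES = VerificationProfile(passes=1, min_agreement=0.5, block_on_failure=False)
HIGH_STAKES = VerificationProfile(passes=3, min_agreement=0.9, block_on_failure=True)


def decide(profile: VerificationProfile, agreements: List[float]) -> str:
    """Map per-pass agreement scores to an action under the given profile."""
    checked = agreements[: profile.passes]
    passed = len(checked) == profile.passes and all(
        score >= profile.min_agreement for score in checked
    )
    if passed:
        return "execute"
    return "block" if profile.block_on_failure else "execute_with_warning"


print(decide(LOW_STAKES, [0.4]))                # execute_with_warning
print(decide(HIGH_STAKES, [0.95, 0.92, 0.85]))  # block
print(decide(HIGH_STAKES, [0.95, 0.92, 0.91]))  # execute
```

The summarization tool tolerates a weak result and simply flags it, while the trading agent refuses to act when any of its three passes falls below the threshold.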

MIRA as the Economic Foundation of Verification Quality

The verification network's reliability is not an engineering property alone; it is an economic one. Node rewards, representing 16% of the fixed 1 billion token supply, are programmatically released to verifiers performing honest reasoning, while the Ecosystem Reserve of 26% funds developer grants, partnerships, and growth incentives (CoinMarketCap). Node operators who stake MIRA to participate in verification face slashing penalties for systematically inaccurate outputs, transforming verification honesty from a cooperative assumption into a rational economic equilibrium.
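
The allocation percentages translate directly into token counts, and the slashing argument can be expressed as a simple expected-value comparison. The allocation arithmetic uses only the figures quoted above; the payoff model and every number in it (reward, compute cost, stake, detection probability, slash fraction) are hypothetical and are not Mira's actual parameters.

```python
TOTAL_SUPPLY = 1_000_000_000  # fixed supply, as quoted

node_rewards = TOTAL_SUPPLY * 16 // 100       # 160,000,000 tokens
ecosystem_reserve = TOTAL_SUPPLY * 26 // 100  # 260,000,000 tokens


def honest_payoff(reward: float, compute_cost: float) -> float:
    """Per-round payoff for a node that actually runs the verification."""
    return reward - compute_cost


def lazy_payoff(reward: float, stake: float, detection_prob: float,
                slash_fraction: float) -> float:
    """Expected per-round payoff for a node that guesses instead of verifying.

    Toy model: if caught, the node earns nothing and loses part of its stake.
    """
    return (1 - detection_prob) * reward - detection_prob * slash_fraction * stake


# Hypothetical numbers chosen only to show the shape of the incentive.
honest = honest_payoff(reward=10, compute_cost=2)
lazy = lazy_payoff(reward=10, stake=1000, detection_prob=0.2, slash_fraction=0.1)

print(node_rewards, ecosystem_reserve)
print(honest > lazy)
```

Under these assumed numbers the honest node earns a positive payoff each round while the guessing node's expected payoff is negative, which is the sense in which slashing makes honesty the rational strategy.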

The mainnet enables staking for node operators who are economically incentivized to be honest, and allows developers to access verifiable AI services, creating real-world demand for the network's core function of building trust in AI (CoinMarketCap). For developers, this means the verification quality they receive through the SDK is backed not by a service-level agreement from a centralized provider but by the staked capital of a distributed validator set, a fundamentally different and more robust trust guarantee.

Mira's 2026 roadmap includes the conclusion of its Kaito Season 2 community campaign with a $600,000 prize pool, and an Irys partnership for permanent, immutable storage of verification certificates (CoinMarketCap), ensuring that every attested output becomes a durable, queryable audit record rather than an ephemeral API response. For regulated industries where output auditability is a compliance requirement, this permanent verification record transforms Mira from a performance enhancement into a compliance infrastructure component.
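
What makes a stored certificate useful as an audit record is that anyone can later check it has not changed. A generic way to do that is to hash a canonical serialization of the record, sketched below. The certificate fields are invented for the example, and this is plain SHA-256 over JSON, not the Irys API or Mira's certificate format.

```python
import hashlib
import json


def certificate_digest(certificate: dict) -> str:
    """SHA-256 over a canonical JSON form, so key order does not affect the digest."""
    canonical = json.dumps(certificate, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def matches_record(certificate: dict, recorded_digest: str) -> bool:
    """Check a certificate against the digest stored when it was issued."""
    return certificate_digest(certificate) == recorded_digest


# Hypothetical certificate contents.
certificate = {
    "claim": "ETH closed at 2500 USD",
    "verdict": "verified",
    "votes_true": 3,
    "votes_total": 3,
}
recorded = certificate_digest(certificate)

tampered = dict(certificate, verdict="rejected")
print(matches_record(certificate, recorded))  # True
print(matches_record(tampered, recorded))     # False
```

An auditor who holds only the recorded digest can confirm that a certificate presented later is the one originally issued, which is the property a compliance review would rely on.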

The trajectory of AI development in 2026 is converging toward a single unavoidable question: at what point does an unverified AI output become an unacceptable operational risk? Mira Network's Unified SDK and Verified Generate API give developers a concrete, implementable answer — not as a theoretical proposition, but as a production infrastructure already processing hundreds of millions of tokens daily with measurable accuracy.

What internal risk thresholds should engineering teams establish before deploying AI agents with autonomous execution authority? As verification certificates become permanent on-chain records through the Irys integration, how will compliance frameworks in finance and healthcare adapt to treat cryptoeconomic attestation as a formal evidentiary standard?

Explore the SDK and Verified Generate API at mira.network. Stake MIRA to participate in the verification network as the ecosystem scales into 2026 and beyond.