Look, AI is getting scary good at a lot of things, but the one thing it still sucks at is knowing when it’s wrong. We’ve all laughed at ChatGPT confidently explaining why 2025 is the year of the flying car or inventing fake court cases with made-up judges. Funny when it’s memes. Not funny when some hospital system relies on it for diagnostics, or a trading bot uses it to move millions, or governments start feeding policy recommendations straight from a model.

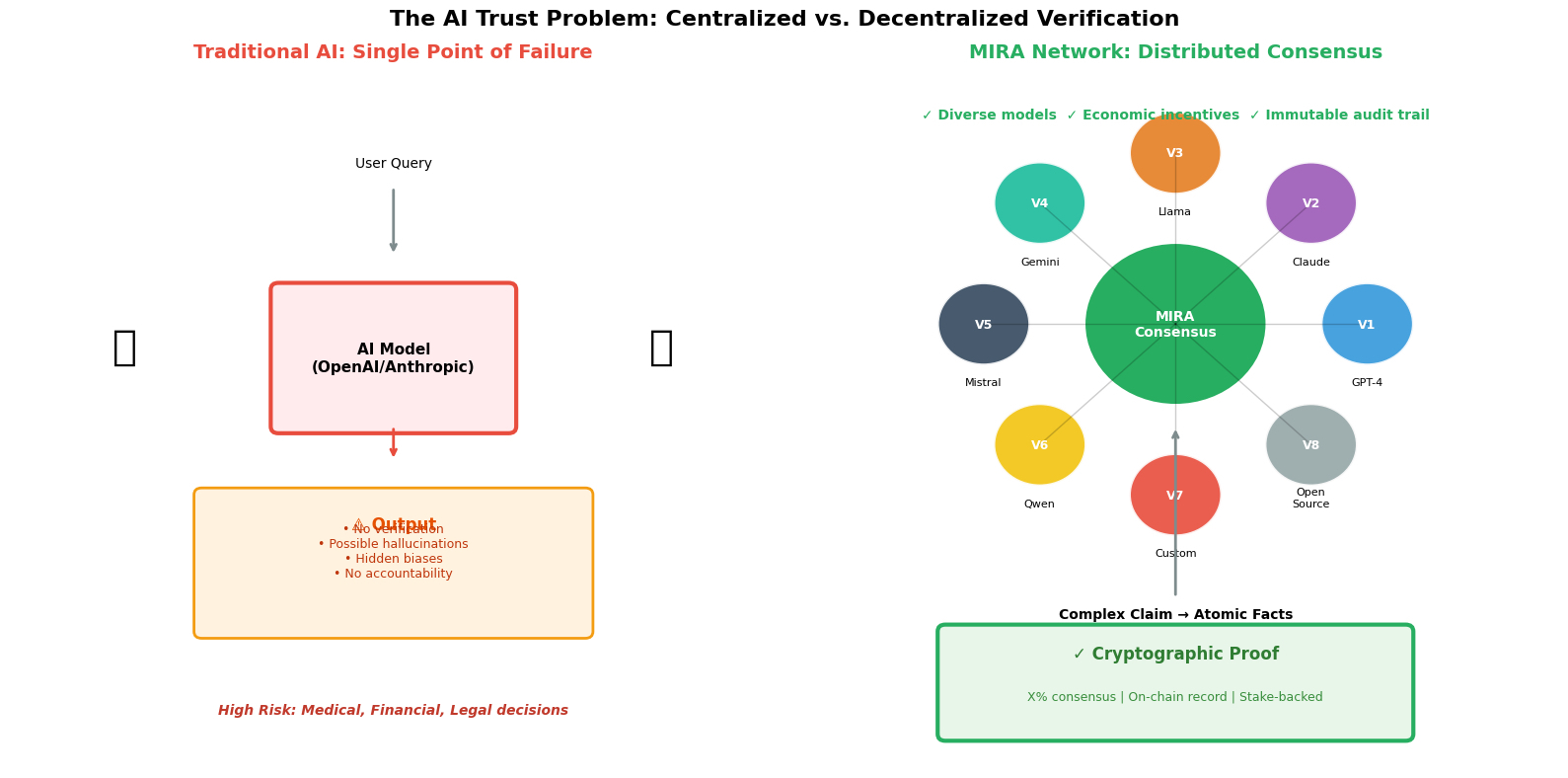

The core problem isn’t the intelligence anymore – it’s the lack of any real way to prove the output isn’t hallucinated garbage or quietly biased toward whatever data slop it was trained on. Prompt engineering and RAG help a bit, but they’re band-aids. You still have one model (or a handful controlled by the same company) deciding what’s true. That’s a massive single point of failure.

That’s why stuff like @Mira - Trust Layer of AI NETWORK actually feels different. They’re not trying to build a smarter model. They’re building a way to check models against each other in a decentralized, incentive-aligned way. You take a complex answer and break it into small, concrete claims. Each claim goes out to a bunch of independent AI verifiers – different models, different training runs, different providers if possible. Each one votes yes/no/maybe with reasoning. Then the network reaches consensus, using a blockchain to record who said what and stake-based rewards and penalties to discourage lazy or malicious voting.
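To make that flow concrete, here’s a rough Python sketch of the split-vote-tally idea. It is not Mira’s actual code or API: the function names, the sentence-based claim splitter, and the toy verifiers are all made up for illustration, and real verifiers would be separate models rather than keyword checks.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical verifier type: takes a claim, returns "yes", "no", or "maybe".
Verifier = Callable[[str], str]

def split_into_claims(answer: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [s.strip() for s in answer.split(".") if s.strip()]

def collect_votes(claim: str, verifiers: Dict[str, Verifier]) -> Dict[str, str]:
    """Ask every verifier about one claim and record who said what."""
    return {name: verify(claim) for name, verify in verifiers.items()}

def tally(votes: Dict[str, str]) -> Dict[str, float]:
    """Return the share of verifiers behind each verdict."""
    counts = Counter(votes.values())
    total = len(votes)
    return {v: counts.get(v, 0) / total for v in ("yes", "no", "maybe")}

# Toy verifiers standing in for independent models (assumption, not real models).
verifiers = {
    "model_a": lambda claim: "yes" if "Paris" in claim else "no",
    "model_b": lambda claim: "yes" if "France" in claim else "maybe",
    "model_c": lambda claim: "no" if "Mars" in claim else "yes",
}

answer = "Paris is the capital of France. Paris is located on Mars."
results = {
    claim: tally(collect_votes(claim, verifiers))
    for claim in split_into_claims(answer)
}
```

The first claim gets a unanimous yes, while the second one splits three ways, which is exactly the kind of disagreement you’d want surfaced before anyone acts on the answer.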

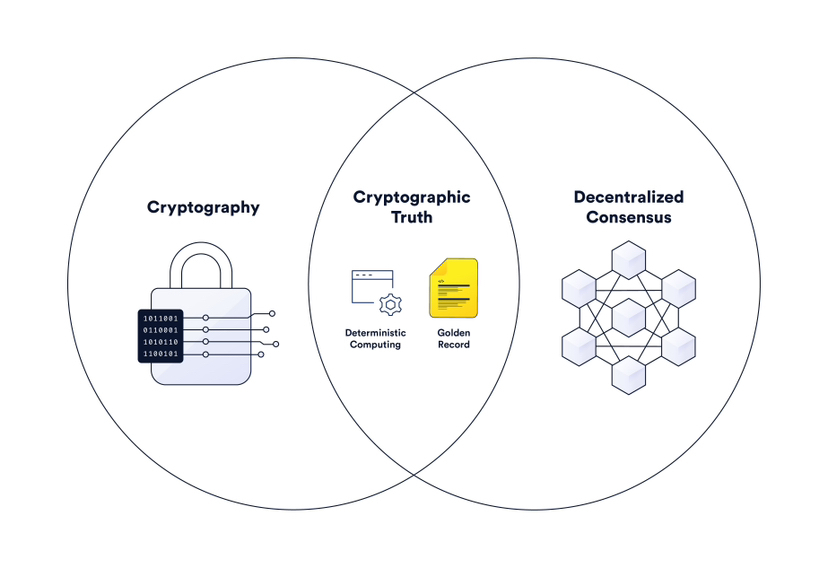

Once enough verifiers agree, you get a cryptographic proof that says “at least X% of diverse models think this specific claim holds up.” That proof lives on-chain forever. No central lab can fudge it later. Developers can set whatever threshold they want depending on how dangerous a wrong answer would be – 60% for casual content moderation, 95%+ for anything touching money or safety.
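Here’s a small sketch of how a per-use-case threshold could work, assuming a simple “share of yes votes” rule. The threshold names and the `record_digest` helper are hypothetical. Also note the SHA-256 digest is only a fingerprint of the vote record that could be anchored on-chain; it is not the actual cryptographic proof scheme, which the post doesn’t spell out.

```python
import hashlib
import json

# Hypothetical thresholds, using the 60% and 95% figures from the text.
THRESHOLDS = {"content_moderation": 0.60, "finance_or_safety": 0.95}

def passes(votes: dict, use_case: str) -> bool:
    """True if the share of 'yes' votes meets the threshold for this use case."""
    yes_share = sum(1 for v in votes.values() if v == "yes") / len(votes)
    return yes_share >= THRESHOLDS[use_case]

def record_digest(claim: str, votes: dict) -> str:
    """Deterministic SHA-256 fingerprint of a claim and its votes.

    Assumption: this is just a tamper-evident hash of the record,
    not a real consensus proof.
    """
    payload = json.dumps({"claim": claim, "votes": votes}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

votes = {"m1": "yes", "m2": "yes", "m3": "yes", "m4": "no", "m5": "yes"}

ok_for_moderation = passes(votes, "content_moderation")  # 80% yes vs 60% bar
ok_for_finance = passes(votes, "finance_or_safety")      # 80% yes vs 95% bar
digest = record_digest("The invoice total is 420 USD", votes)
```

Same votes, different outcome: 4 out of 5 is plenty for casual moderation and nowhere near enough for anything touching money.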

I like that it’s not pretending AI will suddenly become perfectly truthful. It’s accepting that every model has blind spots and instead turning that into a feature: collective scrutiny catches what individuals miss. Plus the economic layer actually matters – people stake real value to verify, so there’s skin in the game. Mess up too much and you lose money. Get it right consistently and you earn. Classic crypto mechanism design applied to a very non-crypto problem.
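The skin-in-the-game part can be sketched in a few lines too. The 5% reward and 10% slash rates below are numbers I made up, and `settle` is a hypothetical helper, so treat this as a toy model of the incentive rather than how $MIRA staking actually settles.

```python
def settle(stakes, votes, consensus, reward_rate=0.05, slash_rate=0.10):
    """Reward verifiers who matched consensus, slash those who did not.

    reward_rate and slash_rate are illustrative assumptions.
    """
    updated = {}
    for name, stake in stakes.items():
        if votes[name] == consensus:
            updated[name] = stake * (1 + reward_rate)
        else:
            updated[name] = stake * (1 - slash_rate)
    return updated

stakes = {"m1": 100.0, "m2": 100.0, "m3": 100.0}
votes = {"m1": "yes", "m2": "yes", "m3": "no"}

after = settle(stakes, votes, consensus="yes")
```

Run that over many rounds and a verifier that keeps voting against consensus bleeds stake, while consistent ones compound.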

Right now most serious AI use cases are still gated behind “human in the loop” because nobody wants to be the company that deployed the confidently wrong medical advice. Mira could shrink that loop or remove it entirely in a lot of places. Imagine autonomous agents that only execute after their plan gets multi-model sign-off. Or news aggregators that tag every AI-summarized article with a verifiable accuracy consensus score. Or even DAOs that use it to fact-check proposals before voting.
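For the autonomous agent case, the gate could look something like this. Everything here is hypothetical (`approved`, `run_plan`, the keyword-based verifiers); the point is only the shape: check every step against the verifiers first, and run nothing if any step falls short.

```python
from typing import Callable, List

def approved(step: str, verifiers: List[Callable[[str], bool]], threshold: float) -> bool:
    """True if the share of verifiers approving this step meets the threshold."""
    yes = sum(1 for verify in verifiers if verify(step))
    return yes / len(verifiers) >= threshold

def run_plan(plan: List[str], verifiers, threshold: float, execute) -> List[str]:
    """Execute the plan only if every step is approved; return rejected steps."""
    rejected = [step for step in plan if not approved(step, verifiers, threshold)]
    if rejected:
        return rejected  # nothing is executed
    for step in plan:
        execute(step)
    return []

# Toy verifiers standing in for independent models (assumption).
verifiers = [
    lambda step: "delete" not in step,
    lambda step: len(step) < 80,
    lambda step: not step.startswith("transfer all"),
]

executed: List[str] = []
risky = run_plan(["fetch price feed", "delete production database"],
                 verifiers, 0.95, executed.append)
executed_after_risky = list(executed)

safe = run_plan(["fetch price feed", "rebalance portfolio"],
                verifiers, 0.95, executed.append)
```

The risky plan comes back with the offending step and runs nothing; the safe plan executes both steps.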

$MIRA is the token that makes all the staking, rewards, fees and governance work. Nothing flashy, just functional. As models keep getting more capable, the gap between “can do it” and “can be trusted to do it” is only getting wider. Someone has to close that gap, and doing it in a decentralized, auditable way feels way more future-proof than hoping OpenAI or Anthropic suddenly figures out perfect truthfulness.

Curious what people here think. Would you trust an AI agent more if every major decision had on-chain consensus from dozens of models? Or are we still years away from that being practical? #Mira