Most AI systems today operate on probabilistic inference. Large language models optimize next-token prediction, not factual correctness. Even with scaling, hallucinations and bias remain structural limitations, not temporary bugs.

@Mira - Trust Layer of AI approaches this from a systems perspective rather than a model perspective.

Rather than chasing a flawless model, Mira asks how to engineer a network that makes errors statistically improbable and economically inefficient.

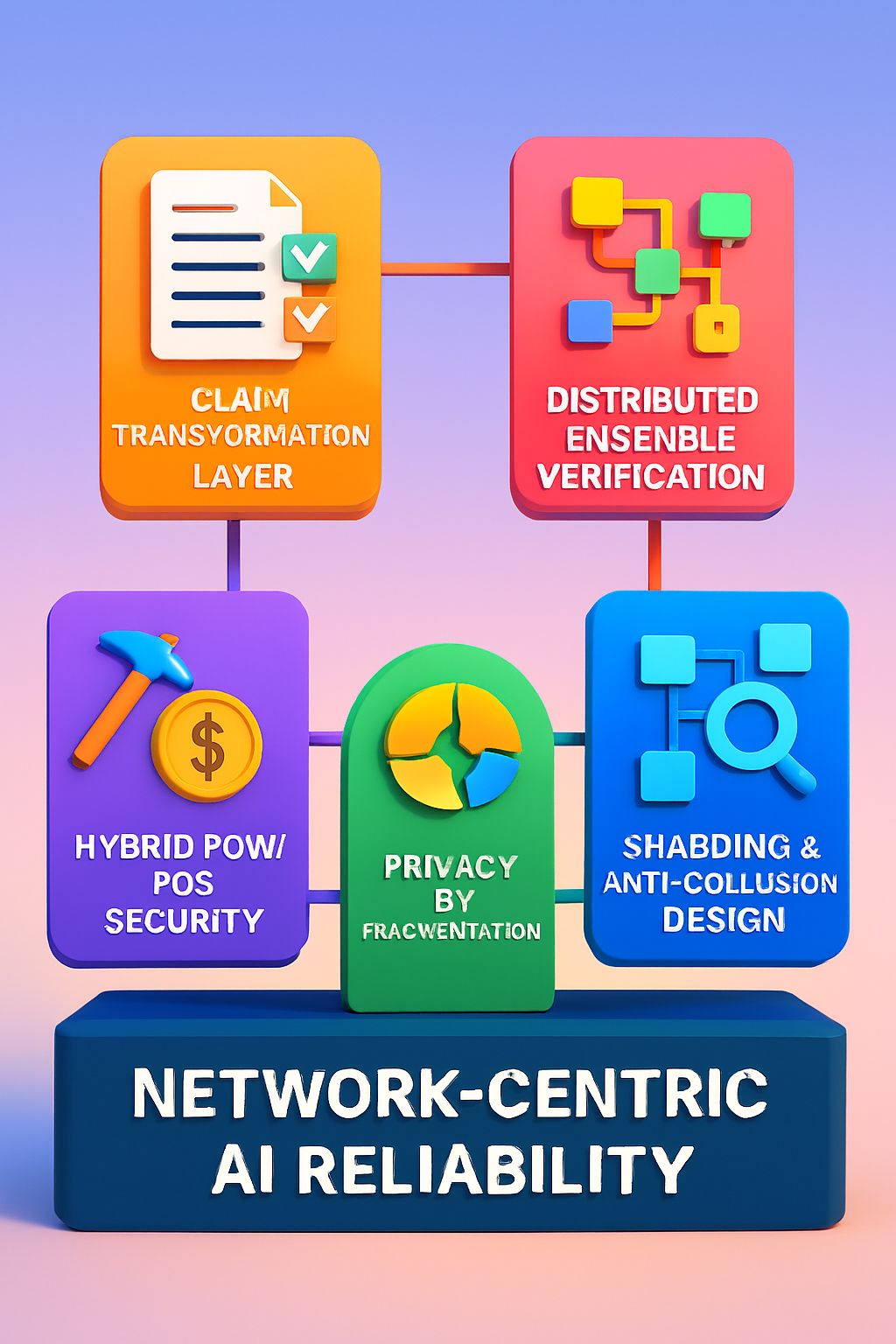

1. Claim Transformation Layer

The first technical innovation is content decomposition.

Complex AI outputs, whether paragraphs, reports, or code, are transformed into discrete, independently verifiable claims. This standardization is critical. If verifier models receive the same atomic claim with identical context, consensus becomes measurable.

Without transformation, verification is ambiguous. With transformation, it becomes structured.
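To make the idea concrete, here is a minimal sketch of decomposition. It assumes a naive sentence splitter stands in for whatever transformation Mira actually uses (which the post does not specify); the `Claim` type and `decompose` function are hypothetical names for illustration only.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str
    context: str  # identical context is attached to every claim


def decompose(output: str, context: str) -> list[Claim]:
    """Split an output into atomic claims (assumption: one sentence = one claim)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", output) if s.strip()]
    return [Claim(i, s, context) for i, s in enumerate(sentences)]


claims = decompose(
    "Paris is the capital of France. The Seine flows through Paris.",
    context="geography",
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Every verifier would then receive the same `Claim` object, so disagreement reflects the models and not differences in how the question was phrased.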

2. Distributed Ensemble Verification

Each claim is routed to independent verifier nodes running diverse models. These models evaluate validity under defined constraints (binary or multi-choice formats).

Consensus is not cosmetic aggregation; it is statistically enforced agreement across heterogeneous systems. Diversity reduces correlated bias. Independent inference reduces single-point failure.
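A rough sketch of threshold-based agreement is shown below. The two-thirds supermajority and the `reach_consensus` name are assumptions chosen for illustration; the post does not state which threshold or aggregation rule the network applies.

```python
from collections import Counter
from typing import Optional


def reach_consensus(votes: list[str], threshold: float = 2 / 3) -> Optional[str]:
    """Return the winning label if its vote share meets the threshold, else None."""
    if not votes:
        return None
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= threshold else None


# Four hypothetical verifier nodes vote on one binary claim.
print(reach_consensus(["true", "true", "true", "false"]))  # 3 of 4 agree
print(reach_consensus(["true", "false"]))                  # split vote
```

Returning `None` on a split vote keeps "no consensus" distinct from "claim rejected", which matters when the claim is simply ambiguous.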

3. Hybrid PoW / PoS Security

Verification tasks resemble constrained inference problems. Because answer spaces are limited (e.g., binary true/false), random guessing has non-zero success probability.
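The size of that guessing risk is easy to quantify. The sketch below assumes each claim is answered uniformly at random and independently of the others; the function name is hypothetical.

```python
from fractions import Fraction


def guess_all_correct(num_options: int, num_claims: int) -> Fraction:
    """Probability of guessing every one of num_claims answers correctly."""
    return Fraction(1, num_options) ** num_claims


print(guess_all_correct(2, 1))   # one binary claim
print(guess_all_correct(2, 10))  # ten binary claims in a row
print(guess_all_correct(4, 10))  # ten four-option claims in a row
```

A single binary guess succeeds half the time, so one task reveals little about a node. Under these assumptions, a sustained streak of lucky guesses becomes rapidly less likely.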

Mira mitigates this with staking mechanics:

• Nodes must lock economic value to participate.

• Consistent deviation from consensus or statistically anomalous behavior triggers slashing.

• Honest inference becomes the economically dominant strategy.

This hybrid design combines meaningful computational work (inference) with stake-backed accountability.
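The incentive argument can be illustrated with a toy expected-value model. Everything here is an assumption made for the sketch: slashing is applied per wrong answer (the post describes slashing for consistent deviation, which is more lenient), and the reward, penalty, accuracy, and cost figures are made up.

```python
def expected_payoff(accuracy: float, reward: float,
                    slash_penalty: float, compute_cost: float) -> float:
    """Expected payoff per task for a node that matches consensus with
    probability `accuracy` (toy model: every miss is slashed)."""
    return accuracy * reward - (1 - accuracy) * slash_penalty - compute_cost


# Hypothetical numbers: the honest node pays for inference, the guesser does not.
honest = expected_payoff(accuracy=0.95, reward=1.0, slash_penalty=5.0, compute_cost=0.2)
guesser = expected_payoff(accuracy=0.5, reward=1.0, slash_penalty=5.0, compute_cost=0.0)

print(f"honest:  {honest:+.2f}")
print(f"guesser: {guesser:+.2f}")
```

In this model guessing only loses money when the slash penalty is large relative to the reward. With a small penalty, skipping inference could still pay, which is why the amount of stake at risk matters.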

4. Sharding and Anti-Collusion Design

Verification requests are sharded randomly across nodes. As the network scales:

• Collusion becomes increasingly capital-intensive.

• Pattern analysis detects correlated responses.

• Duplicate verification phases identify malicious operators early.

Security scales with economic value and model diversity.
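One way to see why random sharding raises the cost of collusion is to compute how often colluders would hold a majority of a randomly drawn committee. The sketch assumes uniform sampling without replacement and simple majority voting; committee sizes and node counts are illustrative, not Mira parameters.

```python
import random
from math import comb


def sample_committee(node_ids: list[int], committee_size: int,
                     rng: random.Random) -> list[int]:
    """Pick verifier nodes for one claim uniformly at random."""
    return rng.sample(node_ids, committee_size)


def collusion_majority_prob(total_nodes: int, colluding: int,
                            committee_size: int) -> float:
    """Hypergeometric probability that colluders form a strict majority."""
    need = committee_size // 2 + 1
    favorable = sum(
        comb(colluding, k) * comb(total_nodes - colluding, committee_size - k)
        for k in range(need, committee_size + 1)
    )
    return favorable / comb(total_nodes, committee_size)


committee = sample_committee(list(range(100)), 5, random.Random(0))
print(committee)
# Same 10 colluding nodes, growing network:
print(collusion_majority_prob(100, 10, 5))
print(collusion_majority_prob(1000, 10, 5))
```

Holding the number of colluding nodes fixed, the chance of capturing a committee falls as the network grows, so an attacker has to stake proportionally more nodes to keep the same odds.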

5. Privacy by Fragmentation

Because content is decomposed and sharded, no single node reconstructs the entire original input. Consensus results are aggregated without exposing full context to individual operators.
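A small sketch of the fragmentation idea follows. It assumes a simple round-robin assignment with a fixed replication factor, which is a stand-in for the network's actual (random) sharding; `fragment` and `max_exposure` are hypothetical helpers.

```python
def fragment(claims: list[str], num_nodes: int, replicas: int) -> dict[int, list[str]]:
    """Assign each claim to `replicas` consecutive nodes, round-robin."""
    if not 0 < replicas < num_nodes:
        raise ValueError("replicas must be between 1 and num_nodes - 1")
    shards: dict[int, list[str]] = {n: [] for n in range(num_nodes)}
    for i, claim in enumerate(claims):
        for j in range(replicas):
            shards[(i + j) % num_nodes].append(claim)
    return shards


def max_exposure(shards: dict[int, list[str]], total_claims: int) -> float:
    """Largest fraction of the original claims visible to any single node."""
    return max(len(seen) for seen in shards.values()) / total_claims


claims = [f"claim-{i}" for i in range(6)]
shards = fragment(claims, num_nodes=6, replicas=2)
print(max_exposure(shards, len(claims)))
```

In this example each node sees two of the six claims. The bound is not automatic: with very few claims or heavy replication, a node could still see most of the input, so exposure is something to measure and not assume.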

This creates a privacy-preserving verification pipeline. $MIRA #Mira