Artificial intelligence is rapidly reshaping how information is processed, how conclusions are formed, and how operations are executed. From predictive modeling to automated reporting, AI now sits inside systems that influence finance, logistics, research, and governance. Yet as adoption accelerates, one issue continues to surface: trust. Advanced systems can produce highly confident outputs that still contain subtle inaccuracies, reasoning flaws, or contextual drift. In high-impact environments, even small distortions can scale into serious consequences.

The Structural Gap Inside Modern AI Systems

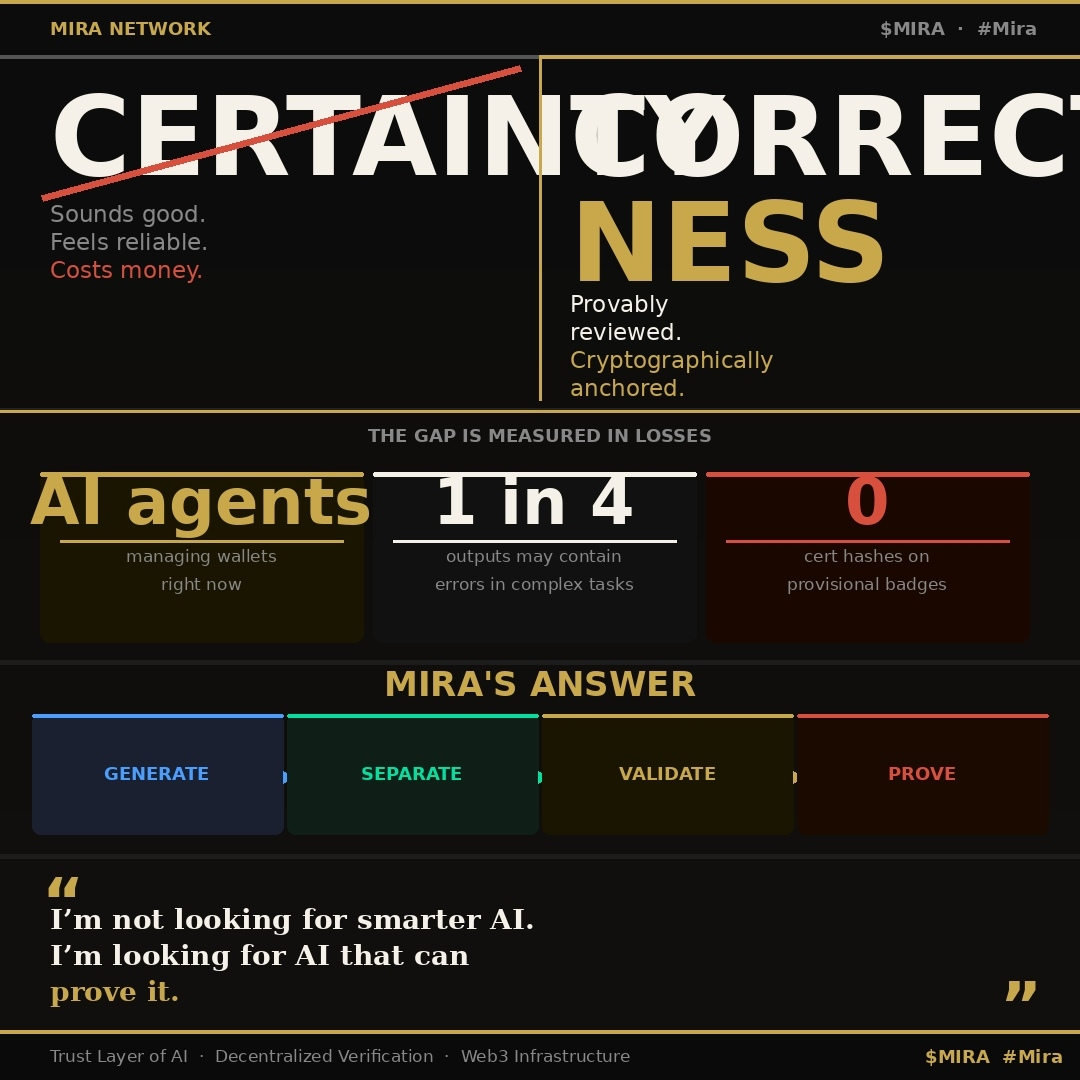

Most leading AI architectures are engineered for speed, optimization, and scale. They operate by identifying statistical patterns and predicting likely sequences based on training data. This probabilistic design explains their fluency and flexibility. However, a likely output is not necessarily a correct one. Without an independent verification layer, outputs are often accepted at face value. As enterprises increasingly integrate AI into decision pipelines, this structural gap becomes more visible and riskier.

A Verification-Centered Framework

Mira Network approaches the challenge from a different angle. Instead of focusing exclusively on expanding model size or training complexity, it emphasizes post-generation validation. The protocol functions as a decentralized verification infrastructure that assesses AI-generated outputs before they are acted upon. By separating production from confirmation, the architecture creates a structured boundary between intelligence and validation.

Converting Responses Into Testable Claims

When AI produces content, Mira restructures that content into distinct, reviewable assertions. Each assertion represents a clear claim that can be independently evaluated. Breaking responses into smaller components reduces the risk that a hidden error will compromise an entire conclusion. This granular methodology increases analytical precision and introduces measurable checkpoints into the evaluation process.
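To make the idea concrete, here is a minimal Python sketch of splitting a response into separately reviewable claims. It is an illustration only, not Mira's actual method: the `Claim` type and the `split_into_claims` function are hypothetical names, and treating each sentence as one claim is a simplifying assumption that a real system would likely replace with something more careful.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently reviewable assertion (hypothetical structure)."""
    claim_id: int
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Naive stand-in for claim extraction: one sentence becomes one claim.

    Assumption: sentences end in '.', '!' or '?' followed by whitespace.
    Abbreviations such as 'U.S.' would be split incorrectly by this rule.
    """
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    sentences = [p for p in parts if p]
    return [Claim(claim_id=i, text=s) for i, s in enumerate(sentences)]


claims = split_into_claims(
    "The Eiffel Tower is in Paris. It was completed in 1889."
)
for claim in claims:
    print(claim.claim_id, claim.text)
```

Each `Claim` can then be checked on its own, so a single wrong sentence is flagged individually instead of being hidden inside an otherwise plausible answer.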

Distributed Evaluation Rather Than Single Authority

Once structured, these claims are distributed across a network of independent validators. Each validator examines assertions separately, applying varied analytical approaches. Consensus is reached only when sufficient agreement emerges across participants. This distributed model lowers reliance on a centralized authority and reduces shared cognitive blind spots that can arise within isolated systems.
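The consensus step described above can be sketched as a simple supermajority vote. This is a toy model under stated assumptions, not the protocol's real rule: validators are modeled as plain Python callables, the two-thirds threshold is an arbitrary illustrative choice, and `reach_consensus` is a hypothetical name.

```python
from typing import Callable, Optional

# A validator is modeled as any function that returns True if it
# accepts the claim and False if it rejects it (hypothetical interface).
Validator = Callable[[str], bool]


def reach_consensus(
    claim: str,
    validators: list[Validator],
    threshold: float = 2 / 3,  # assumed supermajority, for illustration
) -> Optional[bool]:
    """Return True or False if enough validators agree, else None."""
    votes = [validate(claim) for validate in validators]
    if not votes:
        return None
    approvals = sum(votes)
    rejections = len(votes) - approvals
    if approvals / len(votes) >= threshold:
        return True
    if rejections / len(votes) >= threshold:
        return False
    return None  # no sufficient agreement; the claim stays unresolved


# Three toy validators that each apply a different (trivial) check.
validators = [
    lambda claim: "Paris" in claim,
    lambda claim: claim.endswith("."),
    lambda claim: len(claim) > 100,
]
print(reach_consensus("The Eiffel Tower is in Paris.", validators))
```

Returning `None` when neither side reaches the threshold keeps "unverified" distinct from "rejected", which matters if downstream systems should act only on confirmed claims.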

Transparent Records and Audit Trails

Verification outcomes are recorded on-chain, creating a transparent and tamper-resistant history of how conclusions were validated. This permanent audit trail strengthens accountability and allows organizations to demonstrate due diligence. In regulated industries where documentation and traceability are essential, this feature becomes particularly valuable.
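The tamper-resistance property can be illustrated with a hash-chained log, in which every record commits to the hash of the record before it. The sketch below is an in-memory stand-in for intuition only; it is not a blockchain and does not reflect how Mira stores records. The function names and the record layout are assumptions made for this example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(prev_hash: str, payload: dict) -> str:
    """Hash a record together with the hash of its predecessor."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(log: list[dict], payload: dict) -> None:
    """Append a verification outcome, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": record_hash(prev_hash, payload),
    })


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["prev"], entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_record(log, {"claim": "The Eiffel Tower is in Paris.", "verdict": True})
append_record(log, {"claim": "It was completed in 1889.", "verdict": True})
print(verify_log(log))
```

Changing any stored verdict after the fact causes `verify_log` to fail, which is the property an auditor relies on when reviewing how a conclusion was validated.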

Incentive Alignment With Accuracy

Economic incentives are embedded directly into the network. Validators receive rewards for accurate evaluations, linking financial outcomes to system integrity. Over time, consistent performance strengthens reputation and trust within the ecosystem. Accuracy becomes a quantifiable behavior reinforced by incentives rather than an assumption based on model size or brand recognition.
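As a rough sketch of how rewards might be tied to accuracy, the example below splits a fixed reward pool among validators whose vote matched the final outcome and tracks a simple reputation count. Everything here is hypothetical: the equal split, the use of agreement with consensus as a proxy for accuracy, and the names `distribute_rewards` and `update_reputation` are assumptions for illustration, not the network's actual economics.

```python
def distribute_rewards(
    votes: dict[str, bool],
    consensus: bool,
    reward_pool: float,
) -> dict[str, float]:
    """Split the pool equally among validators who matched the outcome.

    Assumption: matching the consensus verdict counts as an accurate vote.
    """
    accurate = [name for name, vote in votes.items() if vote == consensus]
    if not accurate:
        return {}
    share = reward_pool / len(accurate)
    return {name: share for name in accurate}


def update_reputation(
    reputation: dict[str, int],
    rewards: dict[str, float],
) -> dict[str, int]:
    """Return a new reputation table with +1 for each rewarded validator."""
    updated = dict(reputation)
    for name in rewards:
        updated[name] = updated.get(name, 0) + 1
    return updated


votes = {"validator_a": True, "validator_b": True, "validator_c": False}
rewards = distribute_rewards(votes, consensus=True, reward_pool=10.0)
reputation = update_reputation({}, rewards)
print(rewards)
print(reputation)
```

Under this toy scheme, a validator that is consistently accurate accumulates both rewards and reputation over repeated rounds, which mirrors the link between financial outcomes and system integrity described above.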

Preparing AI for Autonomous Environments

As AI systems move closer to autonomous execution across sectors such as finance, healthcare, supply chains, and research, the margin for error narrows. Verification can no longer remain optional. It must function as foundational infrastructure. Mira Network positions itself as this reliability layer, connecting advanced computational capability with structured oversight.

From Probability to Verifiable Confidence

The long-term success of artificial intelligence depends not only on technical sophistication but also on stakeholder confidence. By introducing decentralized validation, structured claim review, and transparent consensus mechanisms, Mira Network seeks to shift AI from probabilistic output generation toward verifiable digital reliability. In addressing this structural trust challenge, it contributes to a broader evolution in how intelligent systems are deployed responsibly at scale.