If $ROBO standardized an on-chain Energy Priority Auction for autonomous machines, would electricity markets start pricing robotic demand ahead of human consumption?

Last week I tried booking a late-night EV charging slot through an app I use regularly. The interface froze for a few seconds, then refreshed with a higher tariff. Nothing dramatic. Just a quiet repricing. What caught my attention wasn’t the extra rupees — it was the timing. Demand had ticked up in the background, and the system adjusted before I could confirm. Invisible logic, silent priority.

That small delay felt structurally loaded. Energy allocation today pretends to be neutral, but it’s increasingly predictive. Platforms anticipate consumption spikes and reroute supply in advance. Humans still think in terms of “first come, first served.” Infrastructure no longer does. It optimizes for aggregate efficiency, not individual fairness.

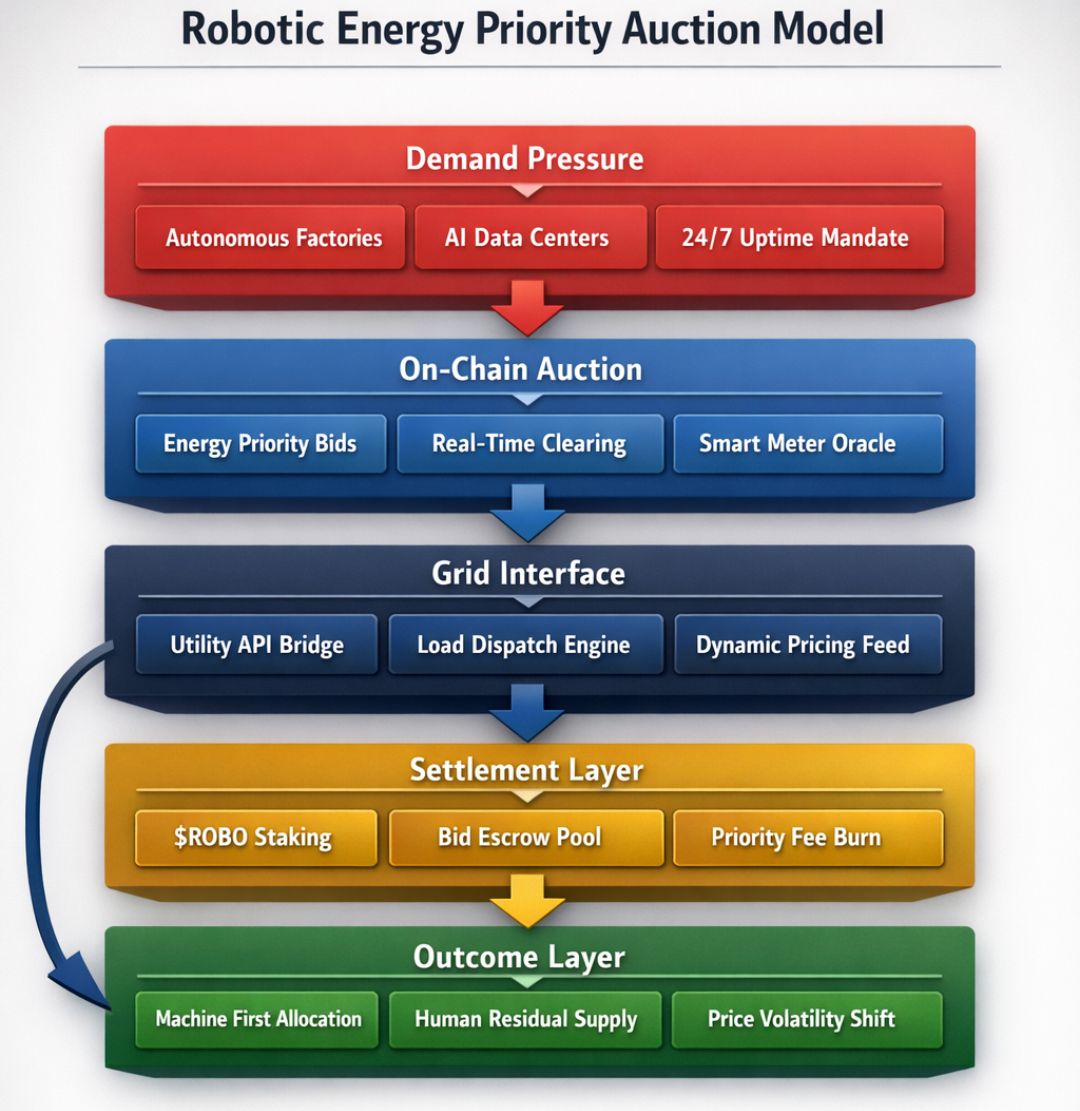

Now imagine that shift extended beyond cars and homes. Autonomous warehouses, delivery drones, and robotic manufacturing lines would all bid for electricity in real time. Machines don’t sleep. They don’t negotiate emotionally. They optimize for task completion windows. If robotic systems come to represent stable, predictable demand curves, grid operators would have a rational incentive to prioritize them over volatile human consumption.

The mental model that makes this clearer is airport runway allocation. When traffic is light, everyone departs more or less in order. As congestion increases, priority shifts to aircraft with strict schedules, connecting passengers, or fuel constraints. The runway doesn’t care about sentiment. It cares about throughput stability. Electricity markets may evolve similarly: allocating power based on systemic efficiency rather than chronological request.

Only after viewing it through that runway lens does an on-chain Energy Priority Auction start to make sense.

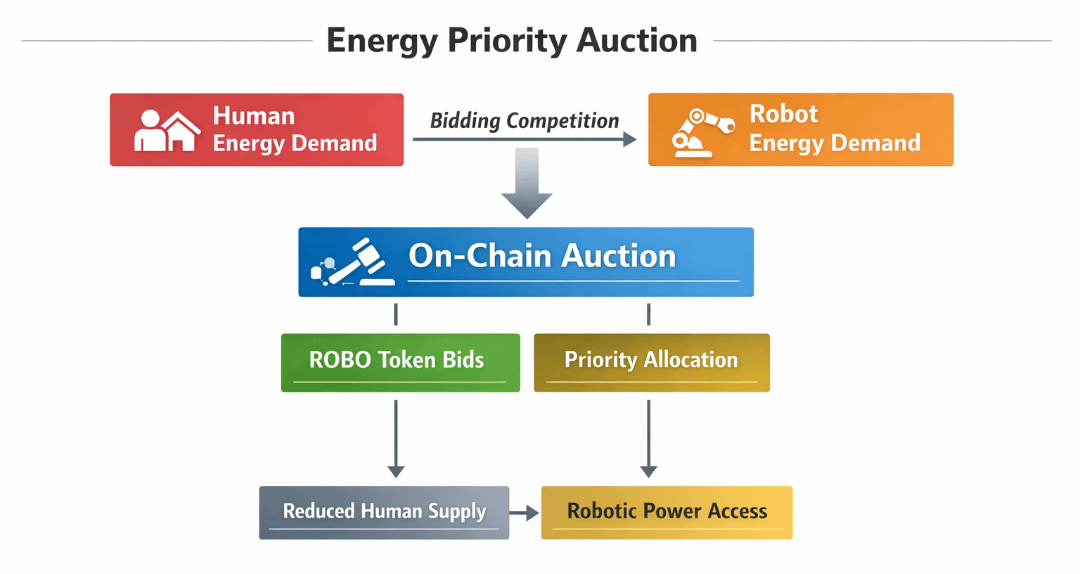

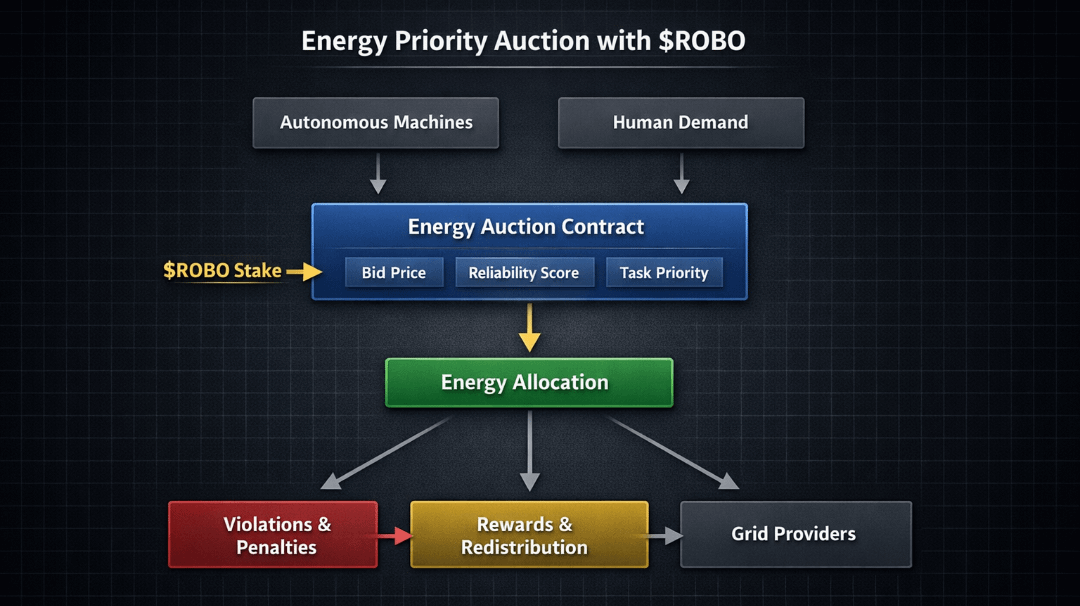

If ROBO standardized such a mechanism, the architecture would not simply be about bidding higher prices. It would involve programmable demand declarations. Autonomous machines would submit verifiable energy forecasts to a smart contract layer — specifying quantity, time window, and task criticality score. These declarations would be cryptographically signed by machine controllers and bonded with ROBO tokens.
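A demand declaration of this kind can be sketched as a small signed record. The field names, the 0 to 1 criticality scale, and the use of an HMAC as a stand-in for a controller's signature are all assumptions made for illustration; a real deployment would presumably use asymmetric signatures verified on-chain.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DemandDeclaration:
    """Hypothetical forecast record; every field name here is an assumption."""
    machine_id: str
    energy_kwh: float      # forecast quantity
    window_start: int      # start of the time window, Unix seconds
    window_end: int        # end of the time window, Unix seconds
    criticality: float     # task criticality score, assumed to lie in [0, 1]
    bond_robo: float       # ROBO tokens bonded behind the forecast

    def payload(self) -> bytes:
        # Canonical serialization so signer and verifier hash identical bytes.
        return json.dumps(asdict(self), sort_keys=True).encode()


def sign(decl: DemandDeclaration, key: bytes) -> str:
    # HMAC-SHA256 stands in for the machine controller's signature.
    return hmac.new(key, decl.payload(), hashlib.sha256).hexdigest()


def verify(decl: DemandDeclaration, key: bytes, signature: str) -> bool:
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(sign(decl, key), signature)
```

Changing any field after signing, such as the forecast quantity, makes `verify` return `False`, which is the property the bonded declaration relies on.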

The auction layer would clear energy slots based on three variables: bid price per kilowatt-hour, reliability score of the forecasting agent, and historical execution accuracy. Machines that consistently overstate urgency would see their reliability coefficient decay. Understating demand or failing to execute a declared task would similarly lower future allocation priority.
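One way the reliability decay could work is an exponential moving average over per-slot accuracy. The post does not specify a formula, so the update rule, the smoothing factor `alpha`, and the function name below are assumptions chosen only to make the idea concrete.

```python
def update_reliability(current: float, forecast_kwh: float,
                       actual_kwh: float, alpha: float = 0.2) -> float:
    """Blend the latest slot's accuracy into a running reliability score.

    Assumed rule: accuracy = 1 - relative forecast error, floored at 0,
    then mixed into the previous score with weight `alpha`.
    """
    if forecast_kwh <= 0:
        raise ValueError("forecast_kwh must be positive")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    # Over- and under-consumption are penalized symmetrically.
    relative_error = abs(actual_kwh - forecast_kwh) / forecast_kwh
    accuracy = max(0.0, 1.0 - relative_error)
    return (1.0 - alpha) * current + alpha * accuracy
```

Under this rule a machine that delivers exactly what it forecast keeps its score, while one that uses half of what it declared drifts downward a little with each missed slot rather than being cut off at once.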

Token utility in this system goes beyond access. $ROBO would function as staking collateral for forecast integrity. Suppose 10% of each energy bid must be bonded in ROBO. If actual consumption deviates beyond an allowed variance band — say ±3% — part of that stake is slashed and redistributed to grid-balancing participants. This creates a feedback loop where accurate energy modeling becomes economically rewarded.
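The bonding and slashing arithmetic can be written out as a short function. The 10% bond and ±3% band come from the example above; the share of the bond that is slashed (`slash_fraction`) is not stated in the post and is an assumed placeholder.

```python
def slash_amount(bid_value_robo: float, forecast_kwh: float, actual_kwh: float,
                 bond_ratio: float = 0.10, band: float = 0.03,
                 slash_fraction: float = 0.5) -> float:
    """Return how much ROBO is slashed for one settled energy slot.

    bond_ratio and band follow the post's example; slash_fraction is a
    hypothetical value for "part of that stake".
    """
    if forecast_kwh <= 0:
        raise ValueError("forecast_kwh must be positive")
    bond = bid_value_robo * bond_ratio
    deviation = abs(actual_kwh - forecast_kwh) / forecast_kwh
    if deviation <= band:
        return 0.0  # inside the allowed variance band, nothing is slashed
    return bond * slash_fraction
```

For a bid worth 1,000 ROBO with a 100 kWh forecast, consuming 102 kWh stays inside the band and costs nothing, while consuming 110 kWh forfeits half of the 100 ROBO bond under these assumed parameters.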

Picture a simple diagram embedded here: on the left, “Autonomous Machine Operators” submit forecast bids with ROBO stake. In the center, an “Energy Priority Auction Contract” ranks bids using price × reliability coefficient. On the right, “Grid Providers” receive allocation signals and deliver electricity. Beneath the diagram, arrows loop back from delivery outcomes to reliability scores, adjusting future priority weightings. The visual matters because it shows this is not just a price auction — it’s a credibility-weighted system.
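The ranking step in the center of that diagram might look like the sketch below. It uses the price × reliability score described above; the greedy fill against a fixed capacity, the `Bid` fields, and the function name are assumptions, since the post does not define the clearing rule in detail.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    """Hypothetical bid record for one energy slot."""
    machine_id: str
    price_per_kwh: float
    energy_kwh: float
    reliability: float  # reliability coefficient, assumed to lie in [0, 1]


def clear_auction(bids: list[Bid], capacity_kwh: float) -> dict[str, float]:
    """Allocate capacity to bids in descending order of price x reliability."""
    ranked = sorted(bids, key=lambda b: b.price_per_kwh * b.reliability,
                    reverse=True)
    allocations: dict[str, float] = {}
    remaining = capacity_kwh
    for bid in ranked:
        if remaining <= 0:
            break
        # The last bid served may be partially filled.
        granted = min(bid.energy_kwh, remaining)
        allocations[bid.machine_id] = granted
        remaining -= granted
    return allocations
```

With this scoring, a bidder offering 0.10 per kWh at reliability 0.9 outranks one offering 0.12 at reliability 0.5, which is the sense in which credibility can outweigh price.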

For context, networks like Ethereum have demonstrated how staking aligns validator honesty with economic risk. Solana has shown high-throughput coordination under strict timing constraints. Avalanche’s subnet architecture illustrates how specialized execution environments can isolate market logic. An Energy Priority Auction would borrow from all three patterns: staking for integrity, low-latency settlement, and domain-specific execution lanes.

The measurable constraint here is grid capacity. If peak supply in a region is 10 gigawatts and autonomous demand accounts for 3 gigawatts with 95% forecast accuracy, operators gain planning certainty. Human consumption, historically more erratic, might represent higher balancing costs. Over time, energy markets could assign discount multipliers to robotic demand because it reduces reserve margin requirements.
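To put rough numbers on that planning certainty, the sketch below reads "95% forecast accuracy" as meaning actual robotic load stays within 5% of its forecast. That reading is an assumption, as is the function name; the 10 GW and 3 GW figures are the ones used above.

```python
def forecast_uncertainty_gw(load_gw: float, accuracy: float) -> float:
    """Capacity that may need reserve cover, given a forecast accuracy.

    Assumes accuracy means the load deviates by at most (1 - accuracy)
    of its forecast value.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be in [0, 1]")
    return load_gw * (1.0 - accuracy)


peak_supply_gw = 10.0
robotic_load_gw = 3.0

# Roughly 0.15 GW of uncertainty on the robotic share of the load.
uncertainty_gw = forecast_uncertainty_gw(robotic_load_gw, 0.95)

# Expressed as a share of regional peak supply (about 1.5%).
share_of_peak = uncertainty_gw / peak_supply_gw
```

Under this reading, 3 GW of robotic load adds only about 0.15 GW of forecast uncertainty, which is the intuition behind a lower reserve margin for that segment.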

That shift changes behavior. Developers building autonomous fleets would invest heavily in predictive modeling because reliability directly lowers their energy costs. Hardware manufacturers would integrate telemetry systems capable of on-chain reporting. Even firmware updates could adjust energy forecasting algorithms based on past slashing events.

Human users would feel this indirectly. Residential tariffs might become more dynamic, with fewer guaranteed peak-hour slots. The assumption underpinning this model is that machines generate higher economic value per kilowatt-hour than average household usage. If that assumption holds, capital will follow efficiency.

However, this design carries structural risk. Prioritizing robotic demand could entrench inequality in energy access. If autonomous systems cluster in industrial hubs, rural or low-income communities may face systematically higher volatility in pricing. Additionally, oracle manipulation or collusion between machine operators could distort reliability scores unless audit mechanisms are robust. Governance must therefore include grid stakeholders, consumer representatives, and independent auditors — not only token holders.

There’s also a failure mode where over-optimization reduces resilience. If too much capacity is pre-allocated to robotic systems, unexpected human demand surges — heatwaves, emergencies — could expose inflexibility. The auction must therefore reserve a non-auctioned buffer capacity, perhaps 15–20%, explicitly ring-fenced for human-critical infrastructure.
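The ring-fenced buffer amounts to subtracting a fixed share of capacity before the auction runs. A minimal sketch, reusing the 10 GW regional figure from earlier in the post; the function name is hypothetical.

```python
def auctionable_capacity(total_gw: float, buffer_fraction: float) -> float:
    """Capacity left for the auction after reserving the human-critical buffer."""
    if not 0.0 <= buffer_fraction < 1.0:
        raise ValueError("buffer_fraction must be in [0, 1)")
    return total_gw * (1.0 - buffer_fraction)


# With a 15-20% buffer on 10 GW, roughly 8.0 to 8.5 GW would reach the auction.
low_buffer_case = auctionable_capacity(10.0, 0.15)
high_buffer_case = auctionable_capacity(10.0, 0.20)
```

The design point is that the buffer is carved out before bids are ranked, so no bid price or reliability score can reach into it.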

Governance within ROBO’s framework would need adaptive parameters: adjustable variance bands, dynamic slashing ratios, and transparent reliability scoring formulas. These could be updated through token-weighted proposals, but with multi-sig safeguards from grid operators to prevent purely speculative governance capture.
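A two-key update rule of that kind could be sketched as follows. The parameter names, the default values, and the idea of counting operator approvals against a threshold are assumptions for illustration, not a description of any existing ROBO contract.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AuctionParams:
    """Hypothetical governable parameters."""
    variance_band: float = 0.03  # allowed forecast deviation, from the post
    slash_ratio: float = 0.5     # share of bond slashed, an assumed default


def apply_proposal(params: AuctionParams, changes: dict[str, float],
                   token_vote_passed: bool, operator_approvals: int,
                   required_approvals: int) -> AuctionParams:
    """Apply changes only if both the token vote and the multi-sig pass."""
    if not token_vote_passed or operator_approvals < required_approvals:
        return params  # either key missing: parameters stay unchanged
    updated = replace(params, **changes)
    for name in ("variance_band", "slash_ratio"):
        if not 0.0 < getattr(updated, name) < 1.0:
            raise ValueError(f"{name} must be strictly between 0 and 1")
    return updated
```

A token vote that passes with only one of two required operator signatures leaves the parameters untouched, which is the safeguard against purely speculative capture.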

Economically, value accrues to ROBO through mandatory staking, slashing redistribution, and participation requirements for machine registration. If each registered autonomous unit must lock a minimum threshold — for example, 5,000 ROBO — and network growth scales into tens of thousands of machines, token demand becomes structurally linked to operational capacity rather than speculative narrative.
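The scale of that lock-up is simple multiplication. The 5,000 ROBO minimum is the example figure above; the fleet size of 20,000 is an assumed stand-in for "tens of thousands" of machines.

```python
def locked_robo(registered_machines: int, min_lock_robo: int = 5_000) -> int:
    """Total ROBO held in registration locks, assuming every unit locks the minimum."""
    if registered_machines < 0 or min_lock_robo < 0:
        raise ValueError("inputs must be non-negative")
    return registered_machines * min_lock_robo


# 20,000 machines at the 5,000 ROBO minimum would lock 100,000,000 ROBO.
example_locked = locked_robo(20_000)
```

This is a floor rather than a forecast: it ignores per-bid bonds and any units that lock more than the minimum.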

Over time, electricity markets might begin modeling robotic demand as the baseline load and treating human consumption as the variable overlay. Not because humans are less important, but because machines provide predictable execution. Markets reward predictability.

The runway doesn’t ask who deserves takeoff. It allocates based on systemic flow.

An on-chain Energy Priority Auction standardized by ROBO would formalize that logic at the grid level, converting forecast accuracy into economic priority. If that architecture takes hold, electricity would no longer simply follow demand; it would follow reliability.

$ROBO @Fabric Foundation #ROBO