You ask an AI for medical advice, investment guidance, or even code to run a critical system. It responds with polished confidence — but deep down, you hesitate. Is this fact, fiction, or a clever hallucination? Today, that doubt is the invisible ceiling holding back the AI revolution. We’ve built machines smarter than ever, yet we still need humans in the loop to babysit them. Enter Mira Network — not another flashy model chasing benchmarks, but the quiet, rigorous infrastructure making AI trustworthy by design.

At its heart, Mira solves what the team calls the “AI reliability gap.” Single models, no matter how large, are probabilistic by nature. They trade precision for creativity and accuracy for breadth. Hallucinations creep in when they invent plausible details; bias sneaks through when training data reflects human blind spots. Fine-tuning helps in narrow domains but crumbles on new information or edge cases. The result? AI remains a brilliant intern — impressive, but never left unsupervised in high-stakes rooms like hospitals, courtrooms, or trading floors.

Mira flips the script with decentralized collective intelligence. Here’s the elegant magic: any AI output (text, code, analysis) is transformed into bite-sized, independently verifiable claims. These claims are sharded across a global network of nodes, each running diverse verifier models with different architectures, training data, and perspectives. No single model decides truth. Instead, the verifiers reach consensus through transparent, cryptographically sealed votes. The outcome? A tamper-proof certificate you can audit on-chain, proving exactly which models agreed on what, and at what confidence level.

It’s like assembling a jury of brilliant, independent experts, each seeing the world slightly differently, and letting the blockchain be the impartial courtroom. Random guessing or collusion? Crushed by a hybrid Proof-of-Work/Proof-of-Stake model, in which the “work” is real inference rather than hash puzzles. Because verification questions have only a few possible answers, a lazy node could otherwise guess its way to rewards. So nodes stake MIRA to participate: honest inference earns rewards from user fees, while deviating from consensus triggers slashing. The economic design is ruthless yet fair: scale brings more diversity, lower costs, tighter consensus, and stronger security.
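To make the flow concrete, here is a minimal Python sketch of claim-level consensus with stake-based rewards and slashing. Everything in it is a hypothetical illustration, not Mira’s actual protocol or API: the names `Node`, `decompose`, `verify_claim`, `settle`, and `certificate`, the two-thirds supermajority threshold, the flat reward, and the 10% slash rate are all assumptions. Real verifiers would be distinct AI models, and a real certificate would be anchored on-chain rather than simply hashed locally.

```python
import hashlib
import json
from collections import Counter
from dataclasses import dataclass
from typing import Callable


@dataclass
class Node:
    """Hypothetical verifier node: an identifier, a stake, and a verifier."""
    node_id: str
    stake: float
    verify: Callable[[str], str]  # stand-in for a model; returns "true" or "false"


def decompose(output: str) -> list[str]:
    # Naive placeholder: treat each sentence as one claim.
    # Real claim extraction would itself be model-driven.
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, nodes: list[Node], threshold: float = 2 / 3) -> dict:
    # Every node votes independently; no single model decides the verdict.
    votes = {n.node_id: n.verify(claim) for n in nodes}
    verdict, count = Counter(votes.values()).most_common(1)[0]
    agreement = count / len(nodes)
    return {
        "claim": claim,
        "votes": votes,
        "verdict": verdict if agreement >= threshold else "no_consensus",
        "agreement": agreement,
    }


def settle(results: list[dict], nodes: list[Node],
           reward: float = 1.0, slash_rate: float = 0.1) -> None:
    # Reward nodes that matched the consensus verdict and slash a fraction
    # of the stake of nodes that deviated. Claims without consensus are skipped.
    by_id = {n.node_id: n for n in nodes}
    for r in results:
        if r["verdict"] == "no_consensus":
            continue
        for node_id, vote in r["votes"].items():
            node = by_id[node_id]
            if vote == r["verdict"]:
                node.stake += reward
            else:
                node.stake -= node.stake * slash_rate


def certificate(results: list[dict]) -> str:
    # Deterministic digest of every claim, vote, and verdict.
    # Publishing it on-chain is out of scope for this sketch.
    payload = json.dumps(results, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


# Usage: three honest verifiers and one lazy node that always answers "true".
FALSE_CLAIMS = {"The Moon is made of cheese"}


def honest(claim: str) -> str:
    return "false" if claim in FALSE_CLAIMS else "true"


def lazy(claim: str) -> str:
    return "true"


nodes = [Node("n1", 100.0, honest), Node("n2", 100.0, honest),
         Node("n3", 100.0, honest), Node("n4", 100.0, lazy)]

output = "Water boils at 100 C at sea level. The Moon is made of cheese."
results = [verify_claim(c, nodes) for c in decompose(output)]
settle(results, nodes)
cert = certificate(results)
print([r["verdict"] for r in results])  # ['true', 'false']
print({n.node_id: n.stake for n in nodes})
```

In this toy run the lazy node is rewarded on the true claim but loses part of its stake on the false one. That asymmetry is the point of the staking design: blindly agreeing stops paying once deviations cost collateral.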

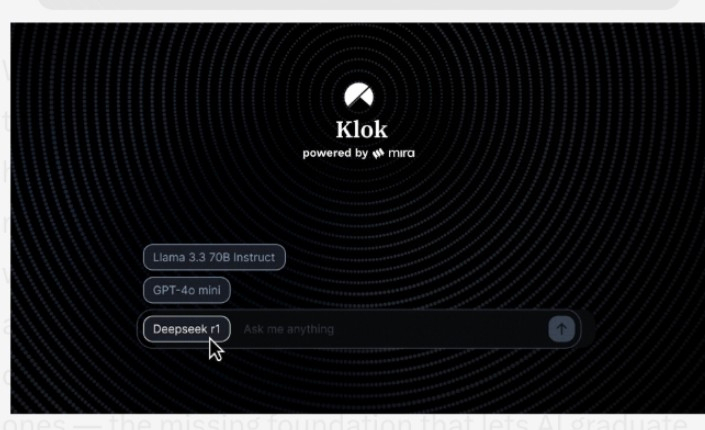

The flagship experience is already here. Klok, Mira’s decentralized AI chat app, delivers verified answers in real time. Early partnerships with Kernel on BNB Chain and Hyperbolic for GPU infrastructure, along with real-world pilots in education (Learnrite), crypto research (Delphi Oracle), and beyond, show the network isn’t theory. It’s shipping. Mainnet launched last year, and the MIRA token (1 billion fixed supply, roughly 24% circulating) powers staking, governance, API access, and value accrual. No endless unlocks, no insider-heavy vesting drama: just clean alignment between users, builders, and verifiers.

Why does this matter beyond crypto circles? Because trustworthy AI is the prerequisite for the next era of human progress. Imagine autonomous agents negotiating contracts, robots coordinating in factories with verifiable decisions, or scientific discovery accelerating without constant fact-checking. Mira doesn’t promise god-like models. It promises reliable ones — the missing foundation that lets AI graduate from helpful tool to true collaborator.

In a world drowning in generated content, Mira is building the watermark of truth. Not by centralizing power, but by distributing it intelligently. Not by shouting louder, but by verifying deeper.

If you’re building, investing, or simply dreaming about an AI-native future, Mira deserves your attention. The models will keep getting smarter. But only the ones we can trust will truly matter.

The verification layer is live. The age of autonomous intelligence is closer than it feels.

@Mira - Trust Layer of AI #Mira $MIRA