I remember sitting in a windowless server room back in 2010, feeling like I was babysitting a temperamental toddler while trying to scale legacy databases. Fast forward to today, and we are basically doing the same thing with AI models that hallucinate with the confidence of a venture capitalist on his third espresso. We have massive foundation models that can write poetry but cannot reliably tell you whether a legal citation is real or a fever dream. The industry is stuck in an awkward phase where we treat AI like a magic black box and then act surprised when it breaks. The reality is that human oversight is the ultimate bottleneck, and if we do not find a way to make verification intrinsic to the generation process, we are just building very expensive toys. Mira is essentially my bet that we can stop babysitting these models by moving toward a world where truth is not an afterthought but a baked-in feature of the system itself.

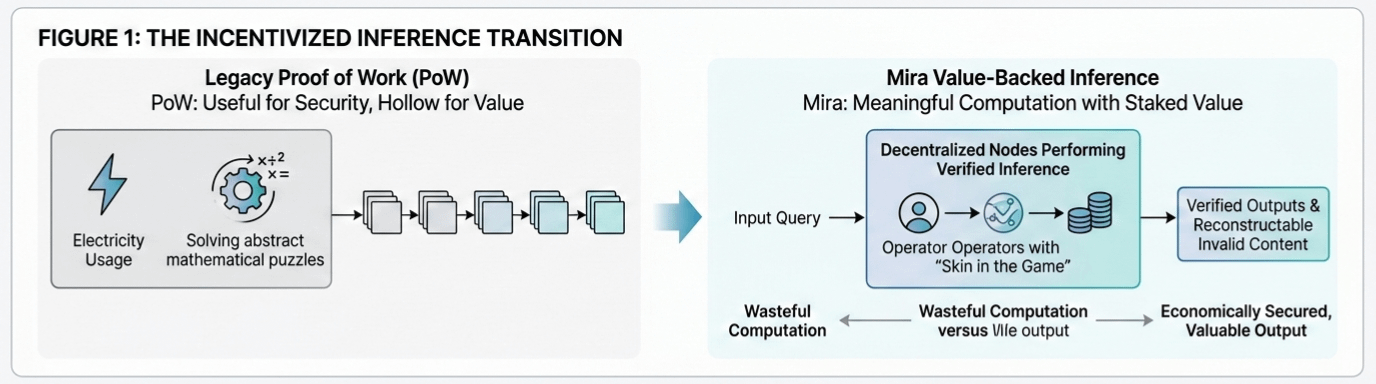

The old way of doing things relied on traditional proof-of-work, where machines burned electricity solving arbitrary puzzles just to keep a ledger straight. It was secure but ultimately hollow, because all that computation produced nothing of value for the real world. Mira flips that script: the work nodes perform is meaningful inference, and it is backed by staked value, so every node in the network has skin in the game. We are moving from simple validity checks in high-stakes fields like healthcare and law toward a future where the network can reconstruct invalid content and eventually generate verified outputs directly. It is a massive technical leap that aims to kill the trade-off between speed and accuracy, because once you have a decentralized network of incentivized operators, you are no longer relying on a single fallible central authority to tell you what is real.
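To make "skin in the game" concrete, here is a minimal sketch of how a stake-weighted verdict could work. Everything in it is my own illustration, not Mira's actual protocol: the `Node` class, the `verify_claim` function, the two-thirds supermajority threshold, and the 10% slashing rate are all assumptions chosen to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """A hypothetical verifier node with value at stake."""
    name: str
    stake: float


def verify_claim(votes, nodes, threshold=2 / 3, slash_rate=0.1):
    """Return a stake-weighted verdict on one claim.

    votes: dict mapping node name -> bool (the node's inference result).
    nodes: dict mapping node name -> Node.
    threshold and slash_rate are illustrative assumptions.
    Returns True or False on a supermajority, or None if there is none.
    """
    total = sum(nodes[name].stake for name in votes)
    if total <= 0:
        raise ValueError("no stake behind the votes")

    yes = sum(nodes[name].stake for name, vote in votes.items() if vote)
    if yes / total >= threshold:
        verdict = True
    elif (total - yes) / total >= threshold:
        verdict = False
    else:
        return None  # no supermajority, so nobody is slashed

    # Nodes that voted against the supermajority lose part of their stake.
    for name, vote in votes.items():
        if vote != verdict:
            nodes[name].stake *= 1 - slash_rate
    return verdict


if __name__ == "__main__":
    nodes = {n.name: n for n in (Node("a", 100.0), Node("b", 100.0), Node("c", 50.0))}
    print(verify_claim({"a": True, "b": True, "c": False}, nodes))
    print(nodes["c"].stake)
```

The point of the sketch is the incentive structure: a node that votes against the stake-weighted supermajority pays for it, so lazy or dishonest inference carries a direct cost. A real network would also need to handle collusion, vote privacy, and reward distribution, none of which is modeled here.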

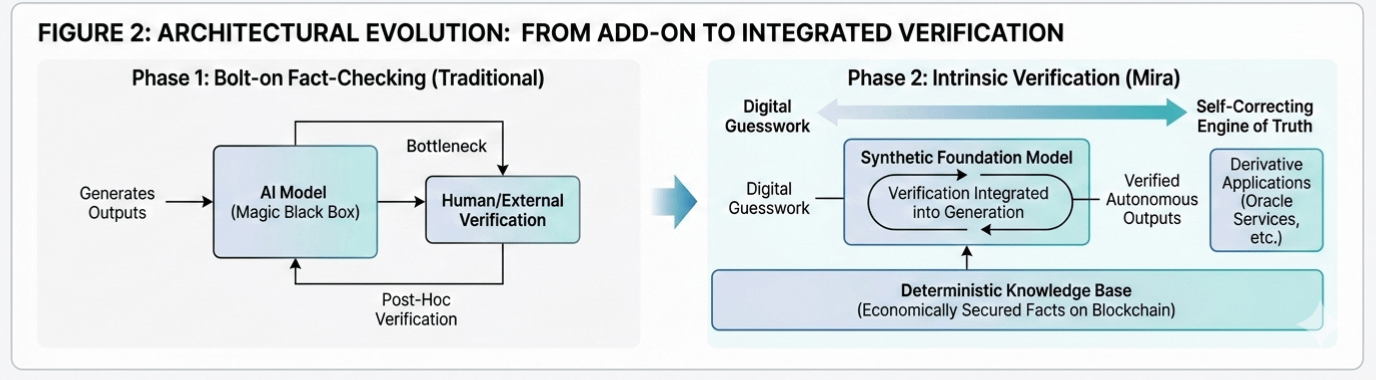

I have seen plenty of projects claim they can solve the hallucination problem with a simple layer of fact-checking, but that is like putting a Band-Aid on a structural crack in a dam. You cannot just bolt verification onto the side of a model and expect it to handle the complexity of private data or multimedia content without the whole thing bloating into oblivion. The bone-deep reality is that building a synthetic foundation model, one where verification is integrated directly into the generation process, is the only way to achieve actual autonomy. We are accumulating economically secured facts on the blockchain to create a deterministic knowledge base that can support derivative applications like oracle services. It is about converting raw data into value-backed facts so that we can finally let these systems run without a human in the loop constantly double-checking the math.
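As a rough picture of what a "value-backed fact" might look like as data, here is a toy, in-memory stand-in for such a knowledge base. The `FactRegistry` class, its method names, the minimum-stake rule, and the text normalization are all hypothetical choices of mine; a real on-chain design would look quite different.

```python
import hashlib


class FactRegistry:
    """Toy in-memory stand-in for an on-chain store of staked facts."""

    def __init__(self, min_stake):
        self.min_stake = min_stake  # assumed admission threshold
        self._facts = {}            # fact id -> backing stake

    @staticmethod
    def fact_id(claim):
        """Derive a deterministic id from the normalized claim text."""
        normalized = " ".join(claim.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def record(self, claim, backing_stake):
        """Store a claim only if enough value stands behind it."""
        if backing_stake < self.min_stake:
            return None
        fid = self.fact_id(claim)
        # Keep the largest backing seen so far for this fact.
        self._facts[fid] = max(backing_stake, self._facts.get(fid, 0.0))
        return fid

    def lookup(self, claim):
        """Return the backing stake for a claim, or None if unknown."""
        return self._facts.get(self.fact_id(claim))


if __name__ == "__main__":
    registry = FactRegistry(min_stake=10.0)
    registry.record("Water boils at 100 C at sea level", 25.0)
    print(registry.lookup("water boils at 100 c at sea level"))
```

Because the id is a hash of the normalized claim, the same claim always maps to the same entry, which is the "deterministic" part. An oracle-style service built on top would query `lookup` instead of asking a model and hoping for the best.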

If we look at the trajectory of the internet, we went from static pages to a global hive mind, but we lost the ability to discern intent along the way. Right now we are essentially trying to build a city on shifting sand and wondering why the buildings keep leaning. Mira is trying to provide the bedrock by creating a system where manipulation is not just technically difficult but economically ruinous for anyone who tries it. By the time this evolution is complete, the distinction between generating a thought and verifying its truth will disappear entirely. It reminds me of the difference between a compass and a GPS. A compass tells you where north is, but a GPS understands the entire terrain and your place within it. We are moving away from the era of digital guesswork and toward a future where AI is a self-correcting engine of truth.