I didn’t start paying attention to Mira because I thought we needed another AI protocol.

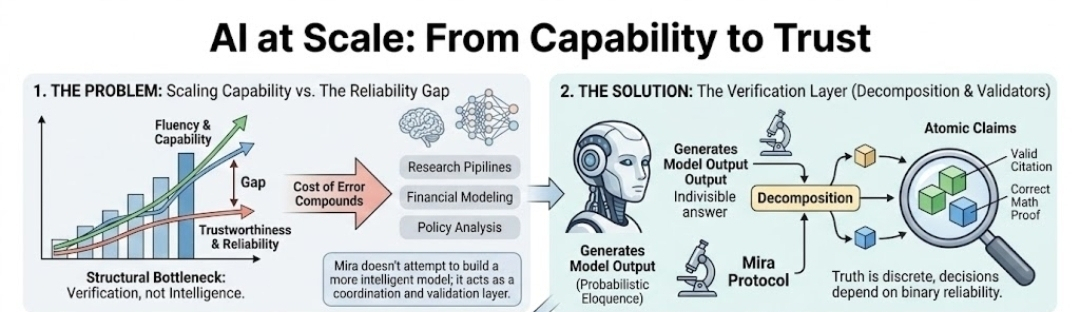

I started paying attention because I realized something uncomfortable: AI already feels smart enough. What it doesn’t feel is reliable enough.

There’s a difference.

When I use AI today, I don’t question whether it can generate content. It obviously can. The real question is whether I can trust that content without personally auditing it. And right now, the honest answer is no.

That’s where Mira Network fits in.

Mira isn’t trying to compete with model builders. It isn’t another LLM. It’s a decentralized verification protocol designed to sit between an AI’s output and whoever has to decide whether to trust it. That positioning is subtle, but it changes the entire conversation.

Instead of assuming a model’s answer is a single, indivisible truth, Mira breaks outputs into discrete claims. Those claims are then distributed across a network of independent validators. Each validator — which can itself be an AI system — evaluates the claim separately. Consensus is reached through blockchain coordination and economic incentives.
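To make that flow concrete for myself, I wrote a minimal Python sketch of it. This is not Mira’s actual implementation or API: the sentence-based splitter, the `Validator` callables, the toy fact set, and the two-thirds approval threshold are all my own assumptions for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List

# Hypothetical validator type: takes a claim, returns True if it approves it.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim decomposition: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the validators approve it."""
    votes = Counter(validator(claim) for validator in validators)
    return votes[True] / len(validators) >= threshold


def verify_output(output: str, validators: List[Validator],
                  threshold: float = 2 / 3) -> Dict[str, bool]:
    """Verify every claim in an output independently."""
    return {claim: verify_claim(claim, validators, threshold)
            for claim in split_into_claims(output)}


# Toy validators (assumptions): two check against a small fact set,
# one carelessly approves everything.
known_facts = {"Paris is the capital of France"}
strict = lambda claim: claim in known_facts
also_strict = lambda claim: claim in known_facts
careless = lambda claim: True

result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [strict, also_strict, careless],
)
```

The point of the sketch is that the output is never judged as a whole. The first sentence passes and the second fails, even though one validator waved both through.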

So the trust model shifts.

You’re no longer relying on a single model’s confidence. You’re relying on distributed agreement under stake-backed conditions. Validation is recorded on-chain. Incentives reward accurate assessment and penalize careless approval.
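Here is a rough sketch of what stake-backed incentives could look like in code. The reward amount, the 10% slash fraction, and the stake-weighted majority rule are hypothetical values I picked for illustration, not Mira’s actual parameters or settlement logic.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ValidatorAccount:
    stake: float  # amount the validator has locked up (hypothetical units)


def settle_round(accounts: Dict[str, ValidatorAccount],
                 votes: Dict[str, bool],
                 reward: float = 1.0,
                 slash_fraction: float = 0.1) -> bool:
    """Resolve one claim by stake-weighted majority, then adjust stakes.

    Validators who voted with the outcome earn a flat reward; those who
    voted against it lose a fraction of their stake. Ties resolve to reject.
    """
    approve = sum(accounts[name].stake for name, vote in votes.items() if vote)
    reject = sum(accounts[name].stake for name, vote in votes.items() if not vote)
    outcome = approve > reject

    for name, vote in votes.items():
        if vote == outcome:
            accounts[name].stake += reward
        else:
            accounts[name].stake -= accounts[name].stake * slash_fraction
    return outcome


accounts = {
    "a": ValidatorAccount(stake=100.0),
    "b": ValidatorAccount(stake=100.0),
    "c": ValidatorAccount(stake=50.0),
}
# Validator "c" carelessly approves a claim that "a" and "b" reject.
outcome = settle_round(accounts, {"a": False, "b": False, "c": True})
```

One caveat the sketch makes visible: a scheme like this rewards agreement with the majority, which is only a proxy for accuracy. That is exactly why validator diversity and collusion resistance matter.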

Truth becomes economically enforced rather than reputationally assumed.

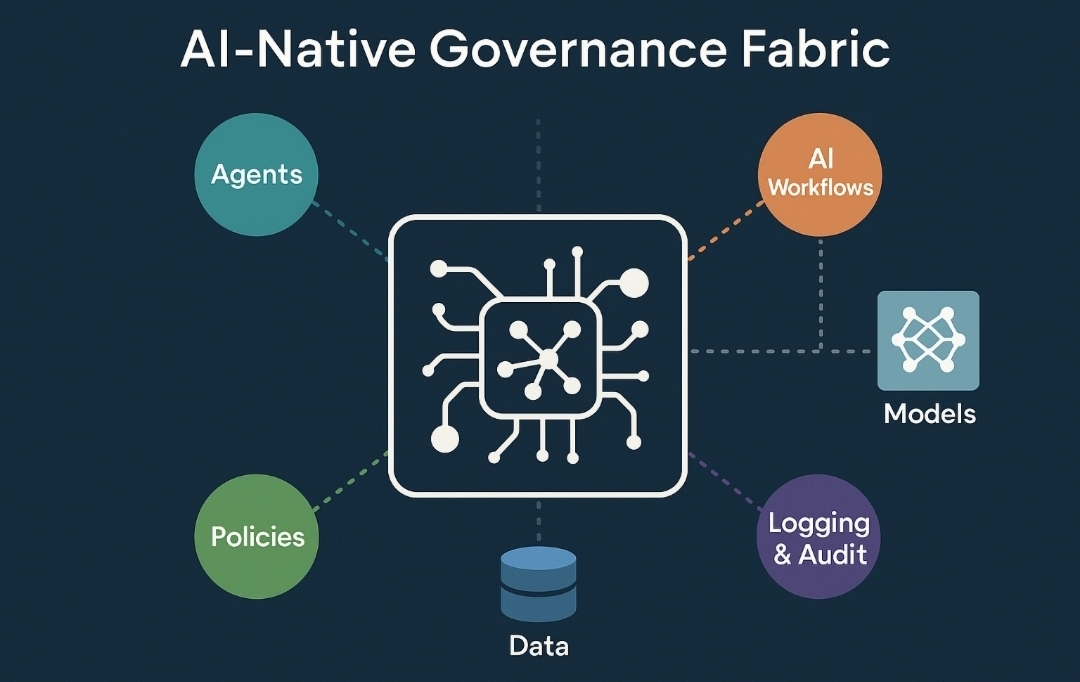

What makes this more than theory is the direction AI is moving. We’re already seeing early forms of autonomous agents managing portfolios, interacting with DeFi, automating workflows, and generating research. As soon as AI moves from suggestion to execution, “probably correct” stops being good enough.

Mira doesn’t assume hallucinations will disappear with larger models. It accepts that probabilistic outputs are inherent to current architectures. Instead of fighting that at the model layer, it builds a reliability layer around it.

That feels realistic.

Of course, this architecture introduces complexity. Decomposing reasoning into verifiable claims isn’t trivial. Verification adds latency. Validator diversity must be maintained to prevent correlated bias. Collusion risks need to be mitigated carefully.

But the underlying thesis is hard to ignore:

Intelligence without verification does not scale safely.

Centralized moderation won’t be sufficient if AI becomes infrastructure. Reputation won’t be enough once systems operate autonomously across financial, legal, and industrial domains.

Mira positions itself as the trust layer for AI — converting probabilistic model outputs into consensus-backed information.

It’s not flashy. It’s not chasing model benchmarks. It’s solving a structural weakness in how AI is currently deployed.

And if AI continues moving toward autonomous execution, verification protocols like Mira won’t feel optional.

They’ll feel necessary.