The next AI race is not only about intelligence

The more I study Mira Network, the more I feel the real opportunity here is being misunderstood. A lot of people still talk about AI as if the only thing that matters is model quality, speed, or raw reasoning power. But I do not think the next major bottleneck is intelligence alone. I think it is trust. Mira’s own positioning is centered on that exact idea: building a trust layer for AI by verifying outputs and actions through collective intelligence rather than asking users to blindly trust a single model or provider.

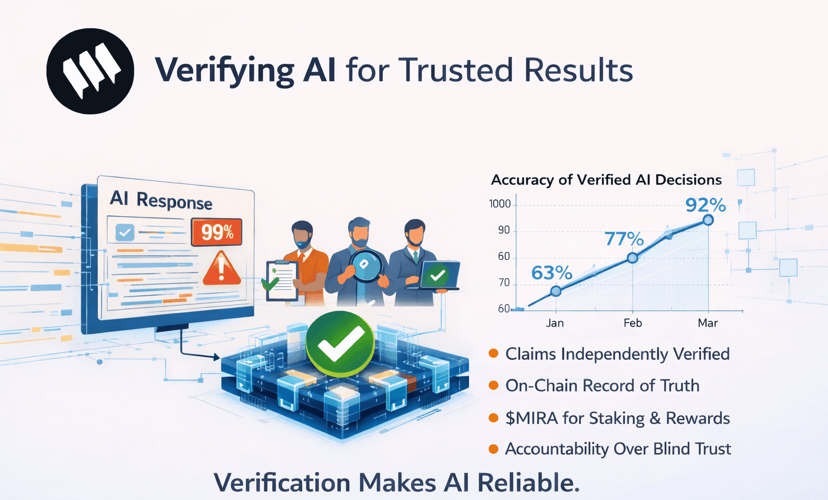

That matters to me because we are moving into a phase where AI is no longer just drafting text or answering casual questions. It is being embedded into workflows, products, decisions, and eventually into autonomous agents that can trigger actions in live systems. At that point, an impressive answer is not enough. It has to be dependable. Mira’s whitepaper describes a decentralized verification protocol that transforms complex AI-generated content into independently verifiable claims, then checks those claims through distributed consensus across diverse models and incentivized participants. That is a much more serious foundation than simply hoping a powerful model gets things right often enough.

I do not think the biggest AI winner will be the model that sounds smartest. I think it will be the system that makes intelligence reliable enough to trust.

What Mira is actually solving

The central problem Mira is targeting is simple to describe and hard to solve: AI can sound confident while being wrong. That is not just a branding issue for the industry. It becomes a real infrastructure problem the moment AI is used in financial logic, developer tooling, research pipelines, automated execution, or any environment where errors carry downstream cost. Mira explicitly says it is making AI reliable by verifying outputs and actions at every step using collective intelligence, and its research materials focus on reducing hallucination and bias through distributed verification rather than single-model authority.

What I like about this framing is that it treats trust as a protocol problem instead of a public-relations problem. Most centralized AI systems still operate like black boxes. A company trains the model, runs the inference, and asks everyone else to accept the result. Mira flips that structure. According to the whitepaper, it breaks outputs into smaller claims, has those claims reviewed by multiple independent models and validators, then aggregates the results into a consensus outcome. In other words, it is trying to make verification native to the system instead of optional after the fact.
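To make that flow concrete for myself, I find it helpful to sketch it in a few lines of Python. This is only a toy illustration of the idea as I read it in the whitepaper, not Mira's actual implementation: the sentence-based `split_into_claims`, the three stand-in verifiers, and the two-thirds threshold are all my own assumptions.

```python
from typing import Callable, Dict, List

# Hypothetical verifier type: takes one claim, returns True if it accepts the claim.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, bool]:
    """For each claim, report whether at least `threshold` of the verifiers accepted it."""
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        votes = [verifier(claim) for verifier in verifiers]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


# Toy verifiers standing in for independent models (assumed, for illustration only).
def strict(claim: str) -> bool:
    return "Paris" in claim


def lenient(claim: str) -> bool:
    return True


def keyword(claim: str) -> bool:
    return "capital" in claim and "Paris" in claim


report = verify_output(
    "Paris is the capital of France. Lyon is the capital of France",
    [strict, lenient, keyword],
)
for claim, accepted in report.items():
    print(f"{accepted!s:5}  {claim}")
```

The point of the sketch is the shape of the pipeline: the output is never accepted or rejected as a whole. Each claim gets its own vote, so one bad sentence can be flagged without discarding everything around it.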

Why this matters more in the age of AI agents

I think Mira becomes much more important when you stop viewing AI as a chatbot category and start viewing it as an agent category. AI agents are increasingly being designed to retrieve information, call tools, execute workflows, route decisions, and eventually manage actions inside digital economies. Once that happens, hallucination is no longer just “bad output.” It becomes operational risk. Mira’s official materials repeatedly frame the network as infrastructure for trustless AI execution and verified intelligence, including partnership announcements that position it as a verification layer for onchain and ecosystem-level AI applications.

This is exactly why I think Mira has stronger long-term relevance than many AI narratives that are only focused on generation. If an agent is going to move funds, analyze risk, submit a governance action, or help coordinate multi-step logic, then reliability has to be part of the architecture. Mira is trying to become that architecture. Not the part that writes the answer, but the part that checks whether the answer deserves to be trusted before it moves further downstream.
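Here is how I picture that "check before it moves downstream" step, as a minimal Python sketch. Everything in it is hypothetical: the `Action` type, the `is_verified` callback (which in practice would be a call into some verification service), and the rule that an action with no supporting claims is blocked are my own assumptions, not anything taken from Mira's docs.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    description: str
    supporting_claims: List[str]  # the claims the agent relied on to justify this action


def execute_if_verified(
    action: Action,
    is_verified: Callable[[str], bool],
    execute: Callable[[Action], None],
) -> bool:
    """Run `execute` only if every supporting claim passes verification."""
    if not action.supporting_claims:
        return False  # nothing to verify, so block rather than act blindly
    if all(is_verified(claim) for claim in action.supporting_claims):
        execute(action)
        return True
    return False


# Usage: a stand-in set of verified claims and a list that records executed actions.
verified_claims = {"balance covers the transfer", "recipient is whitelisted"}
executed: List[Action] = []

safe = Action("send 10 tokens", ["balance covers the transfer", "recipient is whitelisted"])
risky = Action("send 500 tokens", ["balance covers the transfer", "price will double"])

ran_safe = execute_if_verified(safe, verified_claims.__contains__, executed.append)
ran_risky = execute_if_verified(risky, verified_claims.__contains__, executed.append)
```

In this sketch the agent still decides what it wants to do. The gate only decides whether the evidence behind that decision is good enough to let it through.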

The verification model is the core of the thesis

What stands out to me most is that Mira is not just claiming to “improve AI.” It is proposing a specific design for how trust can be produced. The whitepaper says the protocol transforms content into independently verifiable claims, routes those claims through distributed consensus among diverse AI models, and uses economic incentives plus technical safeguards to encourage honest verification. That structure matters because it is not relying on one dominant intelligence source. It is relying on cross-checking, disagreement resolution, and incentive alignment.

I think that diversity angle is one of the most underrated parts of the project. Different models have different blind spots, different biases, and different failure modes. When one system is wrong, another may catch it. When one model overreaches, another may be more conservative. Mira is trying to turn that diversity from a nuisance into a verification engine. To me, that is much closer to how real trust works in complex systems: not through a single authority, but through layered review and structured challenge.
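A quick back-of-the-envelope calculation shows why this can work. The sketch below computes how often a simple majority of verifiers would be wrong if each one erred independently with the same probability. Both the independence and the 10% error rate are my assumptions for illustration; real models share training data and can fail together, so actual gains would be smaller than this idealized case suggests.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n independent verifiers is wrong,
    when each verifier is wrong with probability p (binomial tail)."""
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


for n in (1, 3, 5):
    print(n, round(majority_error(n, 0.10), 5))
# prints:
# 1 0.1
# 3 0.028
# 5 0.00856
```

Under those idealized assumptions, going from one verifier to three cuts the error rate from 10% to 2.8%, and five verifiers bring it below 1%. The more correlated the models are, the less of that benefit survives, which is exactly why the diversity of the verifier set matters.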

In high-stakes AI, the question is not only “can the model answer?” It is “who checked the answer before it mattered?”

Why the network design feels more practical than people assume

Another reason I take Mira seriously is that it is not only a theory paper. The project has live developer-facing infrastructure around the network. The docs describe an SDK that provides a unified interface to multiple language models, with routing, load balancing, and flow management capabilities. The platform also has API token creation, usage tracking, and developer tooling, which tells me Mira is trying to make this verification layer usable, not just conceptually elegant.

That practical layer matters a lot. Many projects in AI x crypto sound interesting until you ask the simple question: can a builder actually integrate this into a product today? Mira at least appears to be building toward an answer that is more concrete than most. The docs show working pathways for API access and model operations, while the main site and writing portal keep reinforcing the same thesis around verified intelligence and trustless AI systems. That consistency between research, messaging, and tooling makes the project feel more coherent to me.

The token makes more sense when I view it as network infrastructure

I also think $MIRA becomes easier to understand when I stop looking at it as a typical AI token and start looking at it as a trust-network asset. Mira’s notification document under MiCA (the EU’s Markets in Crypto-Assets regulation) says the token is intended for staking in the verification process, governance participation, rewards, and payment for API access to the network. Node operators running AI models for verification are expected to stake the token, and developers can use it as the payment method for decentralized AI verification capabilities. That gives the token an infrastructure role rather than a purely narrative one.

This part matters because I always ask the same question with these projects: is the token actually tied to network behavior, or is it just attached to a story? In Mira’s case, the official documentation at least presents a direct link between staking, validation, governance, and usage. That does not automatically guarantee long-term success, but it does make the design more credible. If the network grows, the token is meant to sit inside the core trust loop rather than outside it.

Why the delegator and ecosystem angle matters

One thing I find interesting about Mira is that it is not only talking to model builders or protocol designers. It is also opening participation pathways around its verification network. Mira’s own writing on the Delegator Program frames the network as a foundational verification layer for trustless AI systems and positions broader community involvement as part of securing and scaling that infrastructure. Even without running a full verification node, people can still become economically and socially connected to the growth of the network.

To me, that is strategically smart. A trust layer becomes stronger when it is not only technically sound but also socially embedded. The more developers, validators, delegators, and ecosystem partners rely on the same verification rails, the more defensible the network becomes. Mira’s recent writing around partnerships, grants, and ecosystem campaigns suggests it is trying to create that broader flywheel rather than remain a niche research experiment.

The bigger idea: Mira is trying to make context trustworthy

When I zoom out, I think the deepest thing Mira is building is not just output checking. It is trustworthy context for autonomous systems. The real weakness of many agents is not that they cannot execute logic. It is that they execute logic on top of unreliable or poorly grounded information. If Mira can become a layer where claims are verified, traceable, and economically secured, then agents stop acting in informational fog and start acting on something closer to shared ground truth. That is my interpretation of the architecture described in the whitepaper and product materials.

That shift is much bigger than it sounds. It moves AI systems from “probabilistic tools that may be useful” toward “auditable systems that can be trusted in more serious environments.” I think that distinction will become increasingly important as onchain agents, financial automation, and AI-powered applications mature. Mira is positioning itself right at that intersection.

The real edge for autonomous AI may not come from more confidence. It may come from better verification.

The risks are still real

That said, I do not think this thesis should be read uncritically. Verification at scale is difficult. Every claim has to be checked by several models, so latency and cost grow with the number of verifiers, and consensus adds friction on top of that. There is also the question of whether developers will choose decentralized verification over faster, simpler centralized options. Mira has a compelling thesis, but the market will still test whether trust is important enough for builders to pay for it consistently. To be clear, that is my own inference from the nature of the product and its adoption challenge, not a claim Mira makes directly.

There is also the broader category risk around AI x crypto. Plenty of projects talk about decentralization, verifiability, and infrastructure, but only a small number manage to become indispensable. For Mira, the long-term question is whether verification becomes a default layer that many apps and agents plug into, or whether it remains a specialized feature used only in certain high-trust settings. I think that is the key adoption question from here, and it is again my inference from the network’s stated role and current developer tooling rather than something Mira has said.

My final view on Mira

My honest view is that Mira is one of the more intellectually solid projects in the AI infrastructure category right now because it is focused on a problem that will only get more urgent with time. The industry already has plenty of systems that can generate. What it still lacks is a robust, scalable way to verify, audit, and economically secure what gets generated before it is trusted in critical flows. Mira is trying to build exactly that.

That is why I do not see $MIRA as just another AI narrative asset. I see it more as a bet on the idea that verified intelligence becomes a real category of infrastructure. If that happens, Mira’s role could become much bigger than people expect today. Once AI agents and AI-powered applications start moving real value, trust will stop being a nice extra. It will become part of the base layer.

@Mira - Trust Layer of AI #Mira $MIRA