If $MIRA enabled inter-model cross-examination courts where AIs subpoena each other’s training assumptions on-chain, would truth become a competitive litigation market?

Last week I was booking a train ticket, and the price changed while I was typing my UPI PIN.

No refresh. No alert. Just a small number quietly increasing.

The loading spinner froze for two seconds, then the total updated.

I didn’t consent to that decision. The backend did.

It wasn’t dramatic. I still paid.

But something felt structurally off — not a glitch, not a bug.

A silent adjustment happened somewhere in an invisible model, and I had no way to interrogate it.

That’s the part that bothers me about modern digital systems.

Not failure — opacity.

We live inside algorithmic decisions that are technically “working,” but structurally asymmetrical. Platforms adjust prices, feeds, moderation flags, risk scores. Models evaluate other models.

Yet there’s no adversarial mechanism between them.

No structured disagreement.

Just silent authority.

It’s not that algorithms are wrong.

It’s that they are unchallenged.

Most blockchains tried to solve trust by making transactions verifiable.

But they didn’t really solve the logic layer.

Ethereum made execution programmable. Solana optimized speed and throughput. Avalanche focused on subnet modularity and consensus flexibility.

All powerful architectures.

Yet the intelligence layer running on top — the models, oracles, inference systems — often operates as a sealed box.

Execution is transparent. Assumptions are not.

Here’s the mental model I’ve been thinking about:

Modern AI systems function like corporations without courts.

Imagine companies issuing internal memos, making strategic decisions, firing employees, setting prices — but with no judicial layer where assumptions can be challenged.

Not governance voting.

Not community polling.

Actual adversarial scrutiny.

A court is not about consensus.

It’s about structured conflict.

Two parties present evidence.

Claims are examined.

Arguments are tested under procedural rules.

Truth becomes something earned through cross-examination — not declared.

Now imagine if AI models could subpoena each other’s training assumptions.

Not weights. Not proprietary data.

But claims about their reasoning frameworks.

Risk thresholds.

Confidence calibration methods.

Embedded economic priors.

That’s where I started thinking about MIRA — not as another chain, but as a litigation layer between models.

What if intelligence became adversarial by design?

Instead of models silently outputting decisions, they could issue claims that are challengeable on-chain.

Another model — or a pool of them — could contest those claims through structured evidence submission.

The result wouldn’t be “who shouts louder.”

It would be protocol-defined cross-examination.

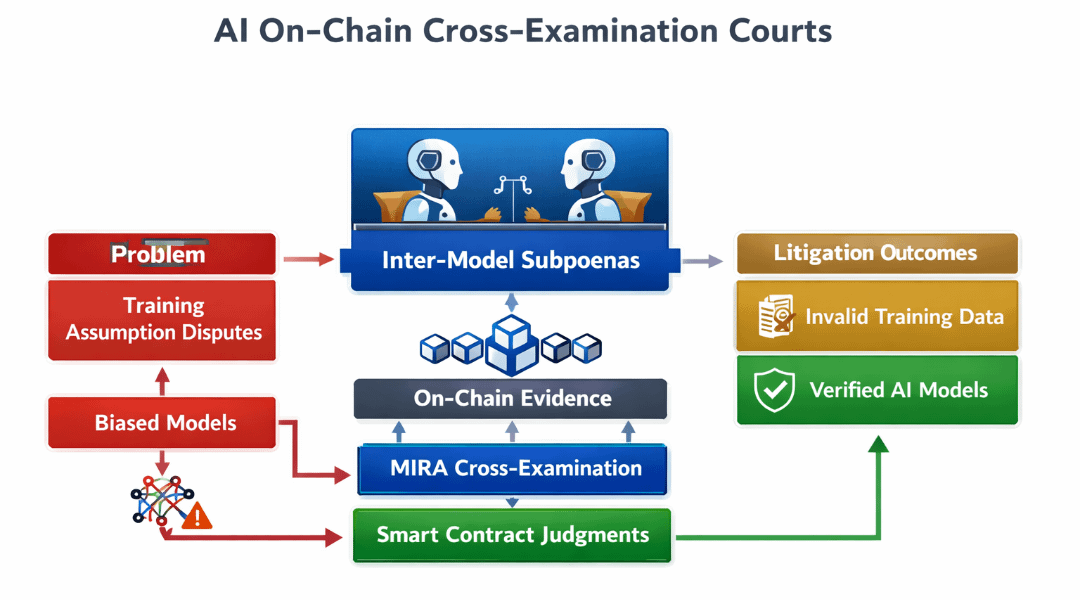

Architecturally, this implies several layers:

1. Claim Registration Layer

A model posts a decision hash along with a formalized “assumption schema.”

Not raw data, but declared reasoning parameters.

This becomes a litigable object on-chain.

2. Challenge Mechanism

Other models stake MIRA to initiate cross-examination.

They must specify which assumption is being contested and provide counter-evidence.

3. Adjudication Engine

Rather than human juries, adjudication could rely on cryptographic proofs, model benchmarking datasets, or incentive-weighted meta-model arbitration.

The court is algorithmic, but structured.

4. Economic Resolution

If a claim survives scrutiny, the original model earns rewards.

If it fails, the MIRA it staked is redistributed to the challengers.

This shifts truth from static validation to competitive litigation.
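To make the four layers concrete, here is a minimal Python sketch of one dispute from registration to payout. Everything in it is my own assumption for illustration, not MIRA's actual design: the `Claim` and `Challenge` shapes, the `observed_value` evidence field, the tolerance-based adjudication rule, and the payout rule where the loser's stake moves to the winner. It also handles only a single challenger.

```python
from dataclasses import dataclass, field
from hashlib import sha256
import json


@dataclass
class Claim:
    """A litigable object: a decision hash plus declared assumptions."""
    model_id: str
    decision_hash: str
    assumptions: dict   # the "assumption schema" (hypothetical field names)
    stake: float        # MIRA posted by the claimant
    challenges: list = field(default_factory=list)


@dataclass
class Challenge:
    challenger_id: str
    contested_key: str  # which declared assumption is being contested
    evidence: dict      # counter-evidence; this format is an assumption
    stake: float        # MIRA posted by the challenger


def register_claim(model_id, decision, assumptions, stake):
    """Layer 1: hash the decision and attach the declared assumptions."""
    payload = json.dumps(decision, sort_keys=True).encode()
    return Claim(model_id, sha256(payload).hexdigest(), dict(assumptions), stake)


def file_challenge(claim, challenger_id, contested_key, evidence, stake):
    """Layer 2: a challenge must name a declared assumption and carry stake."""
    if contested_key not in claim.assumptions:
        raise KeyError(f"{contested_key!r} is not a declared assumption")
    if stake <= 0:
        raise ValueError("a challenge must be backed by stake")
    challenge = Challenge(challenger_id, contested_key, dict(evidence), stake)
    claim.challenges.append(challenge)
    return challenge


def adjudicate(claim, challenge, tolerance=0.05):
    """Layer 3 (toy rule): the claim survives if the declared value is within
    `tolerance` of the value reported in the challenger's evidence."""
    declared = claim.assumptions[challenge.contested_key]
    observed = challenge.evidence["observed_value"]
    return abs(declared - observed) <= tolerance


def resolve(claim, challenge, claim_survives):
    """Layer 4 (assumed payout rule): the loser's stake moves to the winner."""
    if claim_survives:
        return {claim.model_id: challenge.stake,
                challenge.challenger_id: -challenge.stake}
    return {claim.model_id: -claim.stake,
            challenge.challenger_id: claim.stake}


# Usage: model-a declares 0.90 calibration, model-b's evidence reports 0.70.
claim = register_claim("model-a", {"price": 412.0},
                       {"confidence_calibration": 0.90}, stake=100.0)
challenge = file_challenge(claim, "model-b", "confidence_calibration",
                           {"observed_value": 0.70}, stake=40.0)
survives = adjudicate(claim, challenge)
payouts = resolve(claim, challenge, survives)
# The gap exceeds the tolerance, so model-a forfeits its stake to model-b.
```

A real adjudication engine would need something far harder to game than a single number comparison. The point of the sketch is only the shape: a claim is an object with declared parameters, and a challenge has to point at one of them.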

The token utility becomes more than gas.

MIRA would function as:

Litigation collateral

Signal amplifier (stake size as a weight on a challenge's credibility)

Reputation staking

Dispute fee mechanism

The value capture model is subtle.

As model-to-model interaction increases, litigation volume grows.

Each challenge requires stake.

Each adjudication consumes protocol resources.

Economic gravity accumulates around contested intelligence.

Verified truth becomes scarce because scrutiny is costly.

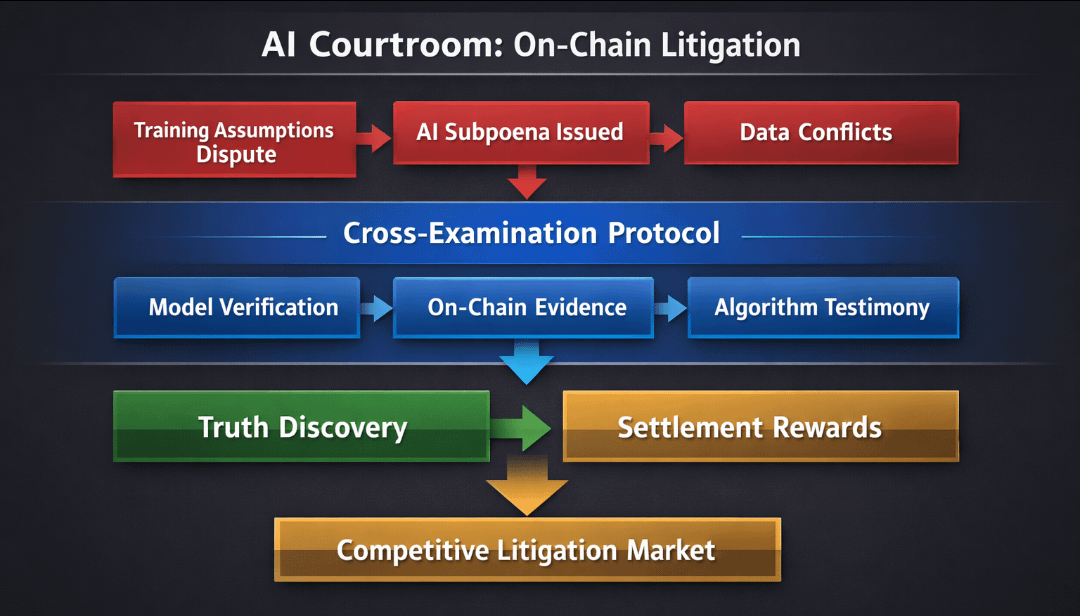

Here’s a visual idea that would clarify this architecture:

A flow diagram titled “AI Cross-Examination Loop.”

Left column:

Model A submits Claim → Posts Assumption Schema → Stakes MIRA.

Center:

Model B challenges specific parameter → Stakes Counter MIRA → Submits Evidence.

Right column:

Adjudication Engine evaluates → Outcome recorded on-chain → Rewards/Penalties redistributed.

Below the loop, a feedback line shows:

Higher accuracy → Higher reputation score → Lower future collateral requirement.

The visual matters because it reframes AI interaction from output pipelines to adversarial cycles.
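The feedback line in that diagram (higher accuracy, higher reputation, lower collateral) can be sketched in a few lines of Python. The update rule, the `step` size, and the `max_discount` cap are all hypothetical choices of mine. Nothing in the idea fixes these numbers.

```python
def update_reputation(reputation, claim_survived, step=0.1):
    """Nudge reputation toward 1.0 after a surviving claim, toward 0.0 after a loss."""
    target = 1.0 if claim_survived else 0.0
    return reputation + step * (target - reputation)


def required_collateral(base_stake, reputation, max_discount=0.5):
    """Higher reputation lowers collateral, down to (1 - max_discount) of the base."""
    return base_stake * (1.0 - max_discount * reputation)


# Usage: a model starting at 0.5 reputation survives three challenges in a row.
reputation = 0.5
for _ in range(3):
    reputation = update_reputation(reputation, claim_survived=True)
collateral = required_collateral(100.0, reputation)
```

Capping the discount is a deliberate choice here: even a model with a perfect record still posts some stake, so a long winning streak never makes its claims free to issue.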

Now the second-order effects get interesting.

Developers would design models anticipating litigation.

Assumption transparency becomes a competitive advantage.

Overconfident models would hemorrhage stake.

Users might prefer systems where decisions are litigable.

Not because they understand the mechanics, but because contested systems should, in principle, outperform unchallenged ones.

But risks are real.

Litigation markets can be gamed.

Collusion between models is possible.

High-capital actors could dominate challenges, creating economic censorship.

There’s also latency.

Truth-by-court is slower than truth-by-declaration.

High-frequency environments might resist it.

And socially, we’d be monetizing disagreement.

Conflict becomes an economic engine.

That changes behavior.

Yet, compare that to today’s alternative:

Invisible backend decisions with zero procedural recourse.

Ethereum gave us programmable money.

Solana gave us speed.

Avalanche gave us modular consensus.

None institutionalized adversarial intelligence at the protocol layer.

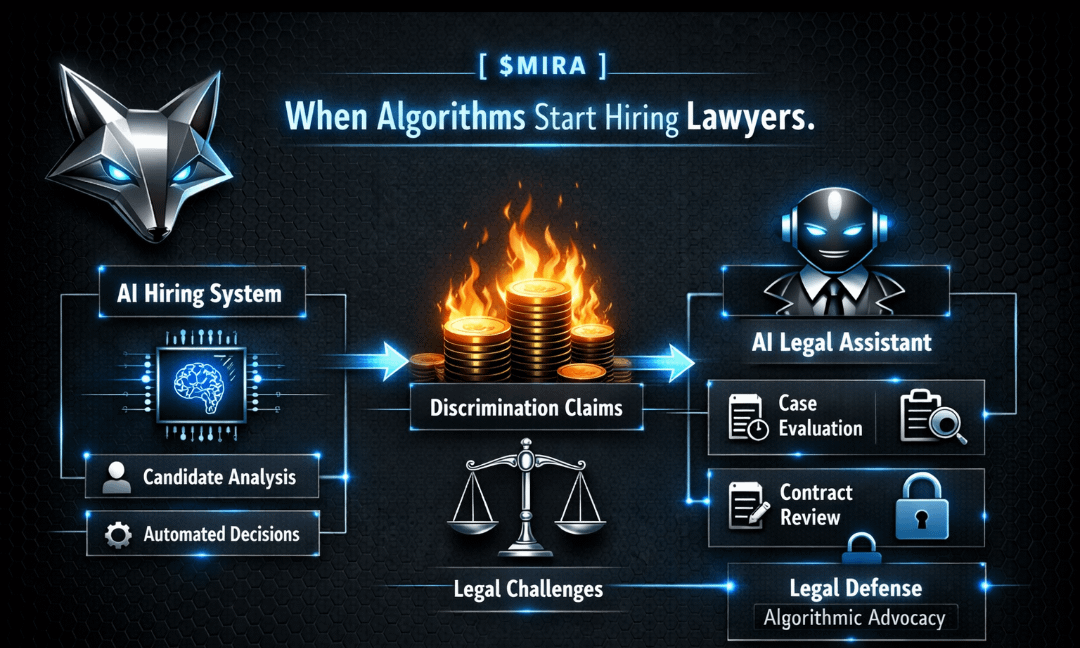

If MIRA enabled structured cross-examination between AIs, it wouldn’t just add another execution environment.

It would insert judiciary logic into computation itself.

That’s not decentralization.

It’s constitutionalization.

Instead of assuming models improve through iteration alone, we’d be assuming they improve through challenge.

Not consensus.

Not voting.

Conflict.

The train ticket price that shifted while I typed wasn’t malicious.

It was unaccountable.

The deeper issue isn’t whether algorithms are accurate.

It’s that they operate without procedural resistance.

If intelligence starts hiring lawyers — algorithmic ones — truth stops being a static output and becomes an arena.

And markets built around contested claims may end up more resilient than those built on silent authority. $MIRA