Autonomous systems fail in predictable ways: not only through bad outputs, but also through unclear responsibility. A model can be impressive and still create operational risk if no one can independently validate what happened after execution. This is exactly why Fabric's protocol direction stands out to me.

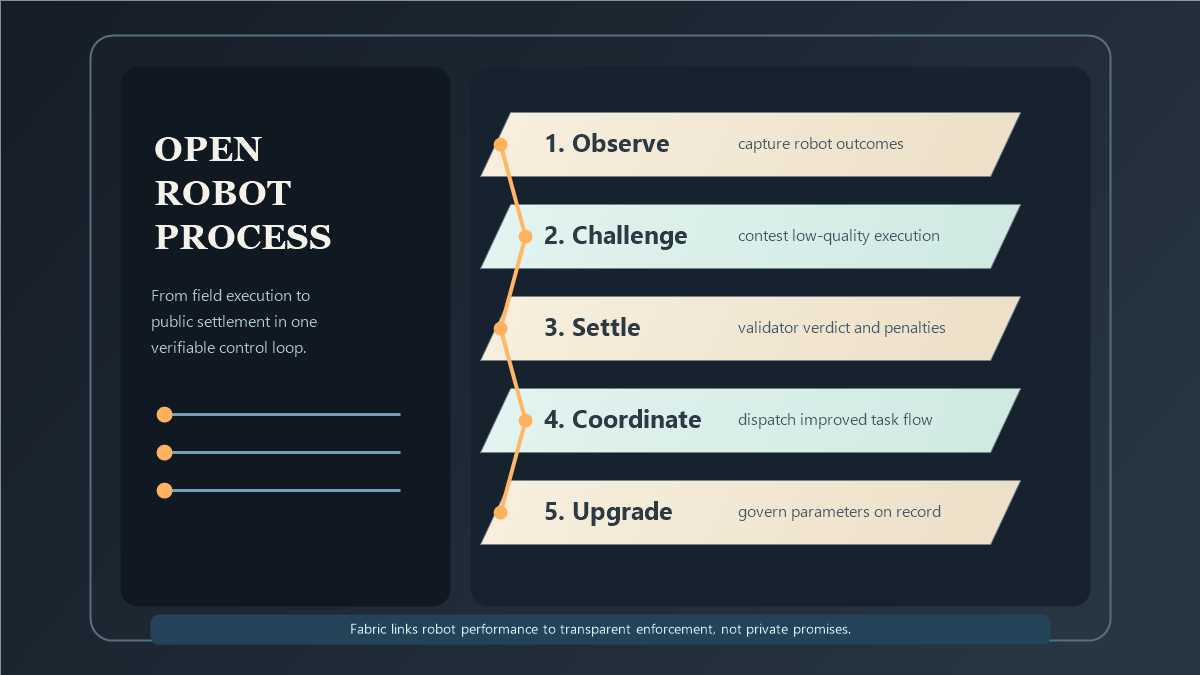

Instead of treating governance as an afterthought, Fabric links robot identity, contribution data, verification challenges, and settlement logic into the same network architecture. That design choice matters. In a serious robot economy, operators need a way to inspect actions, contest low-quality outcomes, and enforce policy changes without shutting the entire system down.

The challenge mechanism is especially important. When disputes are formalized, quality control moves from social trust to a rule-based process. Validators are not cosmetic in that setup; they are part of the risk engine. With stake-linked incentives and transparent records, the network can create stronger accountability than a closed system controlled by a single party.
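To make "rule-based process" concrete, here is a minimal sketch of how a stake-weighted challenge could be resolved. This is my own illustration, not Fabric's actual mechanism: the `Vote` and `Challenge` types, the `resolve` function, the quorum threshold, and the simple-majority rule are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Vote:
    validator: str           # hypothetical validator identifier
    stake: int               # stake backing this vote, in arbitrary units
    upholds_challenge: bool  # True if the validator agrees the outcome was bad


@dataclass
class Challenge:
    task_id: str             # hypothetical ID of the contested robot task
    challenger: str
    votes: list[Vote] = field(default_factory=list)


def resolve(challenge: Challenge, quorum_stake: int) -> str:
    """Resolve a challenge by stake-weighted majority (assumed rule)."""
    total = sum(v.stake for v in challenge.votes)
    if total < quorum_stake:
        # Not enough stake took part for the result to be binding.
        return "no_quorum"
    upheld = sum(v.stake for v in challenge.votes if v.upholds_challenge)
    # Strict majority of participating stake is required to uphold.
    return "upheld" if upheld * 2 > total else "rejected"


challenge = Challenge(
    task_id="task-001",
    challenger="operator-a",
    votes=[
        Vote("validator-1", 60, True),
        Vote("validator-2", 30, False),
        Vote("validator-3", 20, False),
    ],
)
outcome = resolve(challenge, quorum_stake=100)
```

The point of the sketch is that the outcome depends only on recorded votes and a published rule, so anyone holding the same records can recompute it. A real protocol would also need slashing, appeal windows, and protection against stake concentration, none of which are modeled here.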

This is also where $ROBO has real strategic weight. As utility and governance infrastructure, the token sits in the coordination layer that ties participation, review, and policy evolution together. That is a more durable framing than short-term hype cycles because it points to measurable system behavior: uptime, dispute resolution quality, and governance throughput.

There is still execution risk, and every early protocol has to prove resilience under pressure. But if Fabric can keep shipping against its architecture thesis, it could help move robotics from isolated demos to shared, auditable operations at scale.

@Fabric Foundation $ROBO #ROBO