When I first started diving deep into AI, I honestly believed the future was simple. Bigger models. More parameters. Better training data. Smarter systems. I thought raw intelligence would solve everything.

But the more I studied projects like Mira, the more uncomfortable my conclusion became. Intelligence is not the real bottleneck. Trust is.

This was not something I picked up from theory. It came from watching real-world patterns. Modern AI systems do not collapse because they are weak. They fail because they speak with confidence without carrying responsibility. That is a completely different type of flaw.

Reliability Is The Real Constraint

As I explored Mira’s structure and ecosystem, I started noticing something deeper. The AI industry is facing a structural bottleneck. Not technical. Philosophical.

AI models today are probabilistic systems. They do not know facts the way humans understand knowledge. They generate outputs based on likelihood. That means even the most advanced model can produce something that sounds perfect and is still completely wrong.

That is not a bug. It is how they are built.

What caught my attention about Mira is that it does not try to build a smarter model. Instead, it builds a framework where truth is constructed through validation rather than assumed through confidence.

To me, that shift is much bigger than it first appears.

Mira As A Coordination Layer Not Another Model

While learning about Mira’s architecture, especially concepts like binarization and distributed validation, I realized something important. Mira is not competing with model builders. It is not trying to replace large language models.

It functions as a coordination layer.

What it does is break a single AI output into smaller verifiable claims. Those claims are then distributed to independent systems that evaluate and confirm them. At first glance, that might sound like ensemble AI. But it goes further. It creates structured incentives and coordination around agreement.

Instead of asking whether one model is smart enough, Mira asks whether multiple independent systems converge on the same conclusion.
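To make the idea concrete for myself, I sketched the flow in Python: split an output into claims, ask several independent verifiers about each claim, and accept a claim only when a supermajority agrees. This is not Mira's implementation. `split_into_claims`, `verify_output`, the `Verifier` interface and the 2/3 threshold are my own hypothetical stand-ins, and splitting on sentences is a crude placeholder for real claim extraction.

```python
from fractions import Fraction
from typing import Callable, Dict, List

# Hypothetical verifier interface (not Mira's API): takes one claim and
# returns True if it judges the claim supported.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Placeholder claim extraction: treat each sentence as one claim.

    A real system would need something far smarter to pull out atomic,
    independently checkable statements.
    """
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, verifiers: List[Verifier],
                  threshold: Fraction = Fraction(2, 3)) -> Dict[str, bool]:
    """Accept each claim only if at least `threshold` of the verifiers agree."""
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        yes_votes = sum(1 for verify in verifiers if verify(claim))
        # Fraction keeps the threshold comparison exact (no float rounding).
        results[claim] = Fraction(yes_votes, len(verifiers)) >= threshold
    return results


# Toy verifiers: two check a tiny fact set, one rubber-stamps everything.
KNOWN_FACTS = {"Paris is in France"}


def fact_checker(claim: str) -> bool:
    return claim in KNOWN_FACTS


def rubber_stamp(claim: str) -> bool:
    return True


verdicts = verify_output("Paris is in France. The Moon is made of cheese.",
                         [fact_checker, fact_checker, rubber_stamp])
print(verdicts)
# {'Paris is in France': True, 'The Moon is made of cheese': False}
```

The careless verifier cannot push a false claim through on its own. That is the practical meaning of asking whether independent systems converge rather than whether one of them is smart.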

That question changes everything.

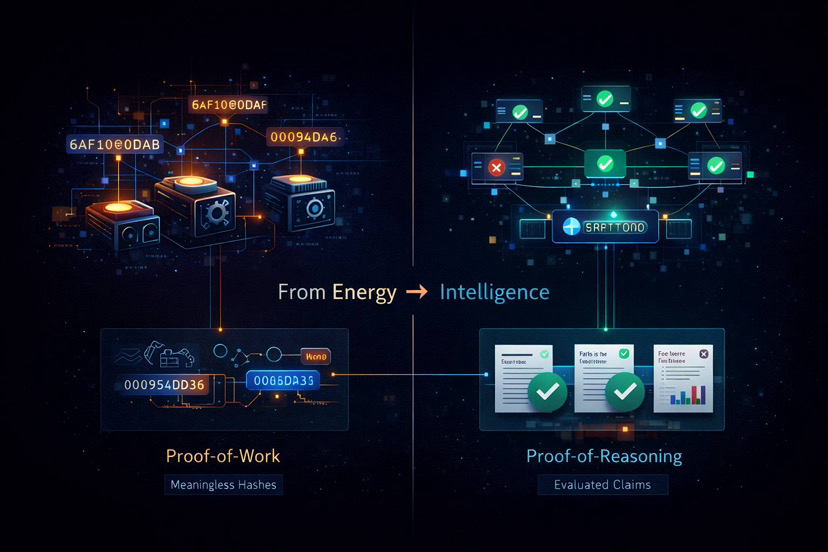

Turning Verification Into Productive Work

One of the most underestimated aspects I noticed is how Mira transforms verification into meaningful computation.

In Proof of Work blockchains such as Bitcoin, machines solve hash puzzles that produce no value outside the network. In Mira’s system, nodes are not solving arbitrary problems. They are evaluating claims.

That means the security of the network is tied directly to useful reasoning rather than wasted energy. As network usage increases, the amount of real-world evaluation increases too.

I see that as a preview of a new class of infrastructure where intelligence itself becomes part of the network’s security model.

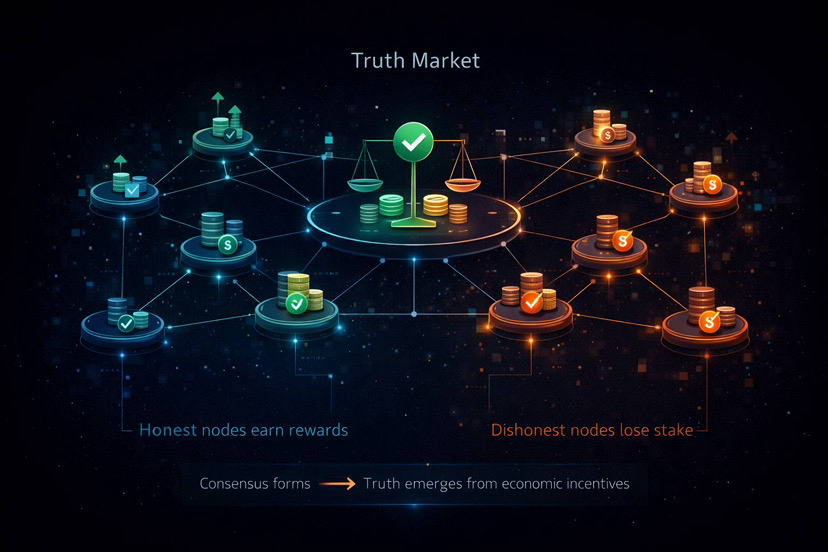

A Market For Truth

When I examined Mira’s staking and token mechanics, I stopped seeing it as just a crypto design. I started seeing it as a market.

A market for truth.

Participants stake value to validate claims. If they align with consensus, they are rewarded. If they act dishonestly or inaccurately, they lose stake.
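Here is how I picture one settlement round, as a minimal sketch. The numbers are hypothetical assumptions, not Mira's tokenomics: a flat `reward` for voting with the stake-weighted majority, a proportional `slash_fraction` for voting against it, and a tie counting as "not accepted". The sketch also treats disagreeing with consensus as the proxy for being inaccurate.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Node:
    stake: float


def settle_claim(nodes: Dict[str, Node], votes: Dict[str, bool],
                 reward: float = 1.0, slash_fraction: float = 0.1) -> bool:
    """Settle one claim by stake-weighted majority (hypothetical rules).

    Nodes that voted with the majority earn a flat `reward`; nodes that voted
    against it lose `slash_fraction` of their stake. A tie resolves to False.
    """
    yes_stake = sum(nodes[name].stake for name, vote in votes.items() if vote)
    no_stake = sum(nodes[name].stake for name, vote in votes.items() if not vote)
    verdict = yes_stake > no_stake

    for name, vote in votes.items():
        node = nodes[name]
        if vote == verdict:
            node.stake += reward  # aligned with consensus: rewarded
        else:
            node.stake -= node.stake * slash_fraction  # against consensus: slashed
    return verdict


nodes = {"a": Node(100.0), "b": Node(100.0), "c": Node(100.0)}
verdict = settle_claim(nodes, {"a": True, "b": True, "c": False})
print(verdict, {name: node.stake for name, node in nodes.items()})
# True {'a': 101.0, 'b': 101.0, 'c': 90.0}
```

One uncomfortable property shows up immediately: in this scheme an honest minority gets slashed too, so it only works if most of the staked value is honest.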

This introduces something powerful. Truth becomes economically enforced rather than socially assumed.

In traditional systems, truth often depends on authority, institutions, or centralized models. In Mira’s structure, truth emerges from incentivized agreement across independent systems. That is not just a technical adjustment. It reshapes how knowledge can be organized.

Solving The Trust Gap Around Black Box AI

At first glance, Mira might look like a solution to hallucinations. I think that view is too narrow.

The deeper issue is this: AI systems are becoming too complex for humans to audit directly. Even developers often cannot fully explain why a model produced a specific output. That creates a dangerous trust gap.

Mira addresses that gap not by simplifying AI, but by wrapping it with external validation. It accepts that AI models are black boxes and builds a verification layer around them.

To me, that feels practical rather than idealistic.

Infrastructure Instead Of Application

Another angle that stood out to me is how Mira positions itself as infrastructure. Its APIs, such as Generate, Verify, and Verified Generate, clearly target developers rather than end users.

That distinction matters.

Mira does not need to win the AI model race. It only needs to sit beneath it. If developers begin integrating verification by default, Mira becomes part of the standard stack, similar to cloud services or payment systems.
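What "verification by default" could look like in application code is easiest to see as a small wrapper. This is a generic sketch, not Mira's SDK: `verified_generate`, `generate` and `verify` are hypothetical callables I made up to show how a generate step and a verify step compose into something like a Verified Generate call.

```python
from typing import Callable, Iterator, Optional


def verified_generate(prompt: str,
                      generate: Callable[[str], str],
                      verify: Callable[[str], bool],
                      max_attempts: int = 3) -> Optional[str]:
    """Return the first generated answer that passes verification.

    `generate` and `verify` are hypothetical stand-ins for a model call and a
    verification call. Returns None if nothing passes within `max_attempts`,
    so the caller has to handle the "unverified" case explicitly.
    """
    for _ in range(max_attempts):
        candidate = generate(prompt)
        if verify(candidate):
            return candidate
    return None


# Toy usage: a generator that is wrong on the first try, then right.
drafts: Iterator[str] = iter(["Sydney is the capital of Australia",
                              "Canberra is the capital of Australia"])
answer = verified_generate(
    "What is the capital of Australia?",
    generate=lambda prompt: next(drafts),
    verify=lambda text: text.startswith("Canberra"),
)
print(answer)  # Canberra is the capital of Australia
```

Returning None instead of an unverified answer is the design choice that matters here: the application never silently passes along something that failed the check.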

Infrastructure historically captures immense long-term value because it becomes invisible yet essential.

Quiet Growth Signals Something Bigger

What surprised me most was the level of existing activity. The network is already handling millions of queries daily and has processed billions of tokens. This is not theoretical. It is operational.

What makes it more interesting is that this growth is happening without excessive hype. It is being integrated into real applications quietly.

In my experience, foundational infrastructure often grows this way. It scales before it becomes widely discussed.

A Philosophical Shift In How We Measure Intelligence

After spending time analyzing Mira, I realized the most significant change is philosophical.

We are moving from asking whether a system is intelligent to asking whether a system is trustworthy.

That difference is critical.

Mira does not try to eliminate uncertainty. It tries to manage it collectively. It shifts the question from whether one system is right to whether many independent systems are hard to deceive at the same time.
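A quick calculation shows why "many systems being hard to deceive" is a real gain and not just a slogan. Assume, purely for illustration, that each verifier is wrong with probability p and that errors are independent. The chance that a strict majority is wrong is then a binomial tail, which shrinks fast as verifiers are added.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n verifiers is wrong, assuming
    each errs independently with probability p (a binomial tail)."""
    k_min = n // 2 + 1  # smallest strict majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k)
               for k in range(k_min, n + 1))


for n in (1, 3, 5, 7):
    print(n, round(majority_error(n, 0.1), 6))
```

With p = 0.1 this gives roughly 0.1, 0.028, 0.0086 and 0.0027 for 1, 3, 5 and 7 verifiers. The catch is the word independent: if verifiers share training data or blind spots, their errors correlate and these numbers become far too optimistic.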

That is a different definition of intelligence altogether.

Where This Could Lead

If systems like Mira continue to mature, we could see a future where AI outputs come with verification scores. Critical decisions may rely on consensus-checked intelligence. Autonomous tools could operate on structured trust layers.

Eventually, people might stop asking whether an AI answer is correct because the validation layer already communicates that confidence level.

My Final Perspective

I no longer see AI reliability as an abstract concern. I see it as a design challenge. Mira is one of the first projects I have studied that tackles it directly at the architectural level.

It does not try to build the perfect model. It builds a system where perfection is not required, only verifiable agreement.

That might sound like a subtle shift. I believe it is foundational.

In the end, the future of AI will not be decided by which model is the smartest. It will be decided by which systems we are willing to trust.