The more I use AI tools in real decision-making workflows, the less impressed I am by how polished they sound. Fluency is no longer rare. What remains rare is certainty.

Modern AI can write persuasively, summarize efficiently, and construct logical arguments. But would you allow it to execute something irreversible without review? Most people hesitate. That hesitation reflects a deeper structural issue.

AI models generate probabilistic outputs. They predict patterns; they do not inherently verify truth. When a model is wrong, it often sounds just as confident as when it is right. That isn’t a minor interface flaw; it’s a limitation of the architecture.

When I explored Mira Network, what stood out wasn’t an attempt to build a more powerful language model. Instead, the focus is on verification. Mira positions itself as a decentralized layer that evaluates AI outputs before trust is assumed.

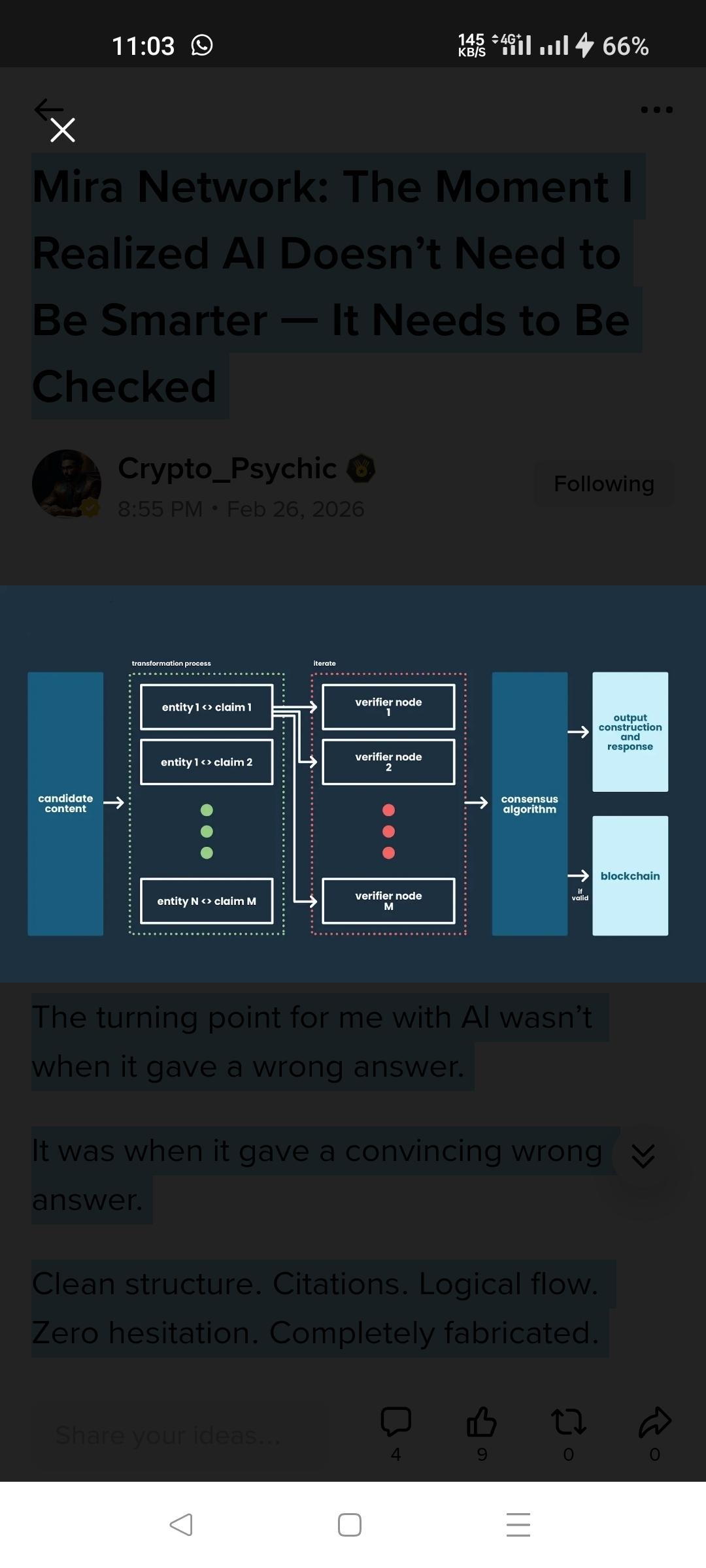

Rather than treating an AI response as one indivisible answer, the system breaks it into smaller claims. These claims are then assessed by distributed validators. Consensus mechanisms and economic incentives are used to coordinate validation outcomes.
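To make the idea concrete, here is a minimal sketch of claim-level validation. It is not Mira's actual protocol: the sentence-based splitter, the `Validator` callable type, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical validator interface: any callable that judges one claim.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    accepted: bool


def split_into_claims(response: str) -> List[str]:
    """Naive splitter: one claim per sentence (an assumption for illustration;
    real claim extraction is a much harder problem)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_response(
    response: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented value
) -> List[ClaimResult]:
    """Ask every validator about every claim and accept a claim only if
    the share of approvals reaches the threshold."""
    results = []
    total = len(validators)
    for claim in split_into_claims(response):
        approvals = sum(1 for validate in validators if validate(claim))
        accepted = total > 0 and approvals / total >= threshold
        results.append(ClaimResult(claim, approvals, total, accepted))
    return results


if __name__ == "__main__":
    known_facts = {"Paris is the capital of France"}
    strict = lambda claim: claim in known_facts  # checks against a fact set
    lenient = lambda claim: True                 # approves everything
    answer = "Paris is the capital of France. The Moon is made of cheese."
    for result in verify_response(answer, [strict, strict, lenient]):
        print(result)
```

The point of the sketch is that a response is no longer accepted or rejected as a whole: one well-supported claim can pass while a second claim in the same answer fails.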

This changes the trust model. Instead of relying on a single provider’s authority, validation becomes distributed and stake-aligned. Validators have incentives to assess claims carefully, since outcomes affect them economically.
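One way to picture stake alignment is a settlement step that rewards validators who voted with the stake-weighted majority and penalizes those who did not. The sketch below is purely illustrative: the `Vote` type, the `settle` function, and the reward and slash rates are hypothetical and are not taken from Mira's design.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Vote:
    validator: str
    stake: float
    verdict: bool  # True = the validator judged the claim valid


def settle(
    votes: List[Vote],
    reward_rate: float = 0.05,  # assumed value, for illustration only
    slash_rate: float = 0.10,   # assumed value, for illustration only
) -> Tuple[bool, Dict[str, float]]:
    """Decide a claim by stake-weighted majority, then adjust each stake:
    validators on the winning side gain, the rest lose."""
    total_stake = sum(vote.stake for vote in votes)
    approving_stake = sum(vote.stake for vote in votes if vote.verdict)
    outcome = approving_stake * 2 > total_stake  # strict majority of stake

    new_stakes = {}
    for vote in votes:
        if vote.verdict == outcome:
            new_stakes[vote.validator] = vote.stake * (1 + reward_rate)
        else:
            new_stakes[vote.validator] = vote.stake * (1 - slash_rate)
    return outcome, new_stakes


if __name__ == "__main__":
    votes = [
        Vote("alice", 100.0, True),
        Vote("bob", 50.0, True),
        Vote("carol", 80.0, False),
    ]
    outcome, stakes = settle(votes)
    print(outcome, stakes)
```

A scheme like this makes careless or dishonest votes costly, but it also inherits a known weakness: a majority of stake that is wrong together still wins, which is part of why validator alignment remains a hard design problem.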

That distinction becomes important as AI systems move toward greater autonomy. In contexts like financial analysis, enterprise workflows, or automated execution systems, “mostly accurate” may not be sufficient. Outputs may need to be contestable and auditable.

Mira’s design assumes that hallucinations will not disappear entirely, and it builds verification mechanisms around that assumption. That approach appears pragmatic rather than idealistic.

There are open questions. Claim granularity, validator alignment, and coordination incentives are complex design challenges. Distributed systems are rarely simple. But the core thesis is clear: intelligence alone does not guarantee reliability.

As AI becomes more embedded in critical systems, accountability infrastructure may become increasingly important.

Mira is exploring that layer.

Not by promising perfect intelligence — but by focusing on verifiable trust.
