Artificial intelligence is now part of everyday life. It helps write emails, answer questions, summarize research, and even guide financial decisions. But there’s a major problem.

AI can make mistakes, and it often sounds very sure of itself even when it’s wrong.

Sometimes, an AI provides an answer that appears accurate but isn’t entirely correct. In fact, studies have shown that large language models can generate incorrect or misleading information in up to 15% of cases, depending on the complexity of the task.

This happens because AI models predict responses based on patterns rather than true understanding. In simple use cases, that might not matter much. But in higher-stakes settings, such as research or financial decisions, reliability becomes essential.

That’s where @Mira - Trust Layer of AI comes in.

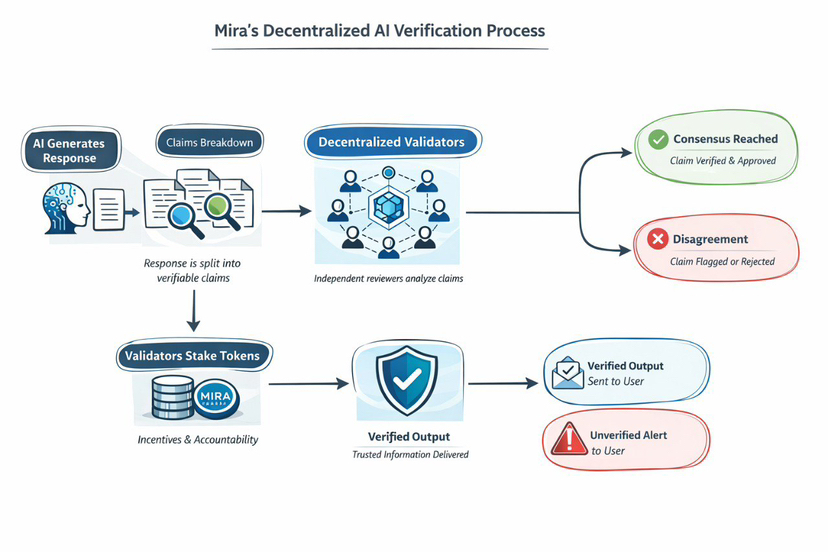

Mira Network is building a system that checks AI outputs before they’re trusted. Instead of depending on one model to give the final answer, Mira uses multiple independent validators to review the response.

Here’s how it works in simple terms.

First, an AI generates an answer. Mira then breaks that answer into smaller statements. These statements are sent to different validators in the network. Each validator checks whether the statements appear accurate or not. After that, the system looks at the overall agreement between them.

If most validators agree the answer is correct, it passes verification. If there is disagreement, the output can be flagged or rejected.
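To make the flow concrete, here is a minimal Python sketch of the idea, not Mira's actual implementation. Everything in it is an assumption made for illustration: the names `split_into_claims` and `verify_answer`, the sentence-based splitting, the simple-majority `threshold`, and the modelling of validators as plain functions.

```python
from typing import Callable, Dict, List

# A validator is modelled as any function that takes one statement and
# returns True (looks accurate) or False. This is an illustrative stand-in.
Validator = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one statement."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_answer(
    answer: str,
    validators: List[Validator],
    threshold: float = 0.5,  # assumed: more than half must agree
) -> Dict[str, object]:
    """Collect votes per statement and report the overall verdict."""
    if not validators:
        raise ValueError("at least one validator is required")

    agreement: Dict[str, float] = {}
    for claim in split_into_claims(answer):
        votes = [validator(claim) for validator in validators]
        # Fraction of validators that judged this statement accurate.
        agreement[claim] = sum(votes) / len(votes)

    # The answer passes only if every statement clears the threshold.
    passed = bool(agreement) and all(a > threshold for a in agreement.values())
    return {"passed": passed, "agreement": agreement}


if __name__ == "__main__":
    # Three toy validators with different (hypothetical) checking rules.
    toy_validators = [
        lambda claim: "Paris" in claim,
        lambda claim: True,
        lambda claim: "moon" not in claim,
    ]
    report = verify_answer(
        "Paris is the capital of France. The moon is made of cheese.",
        toy_validators,
    )
    print(report["passed"])
    for claim, score in report["agreement"].items():
        print(f"{score:.2f}  {claim}")
```

In this toy run, the first statement gets full agreement while the second gets support from only one of three validators, so the answer as a whole is flagged instead of passing.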

The idea is straightforward: instead of trusting one source, trust the agreement between many.

This approach is inspired by how decentralised systems work. In blockchain networks, transactions aren’t confirmed by one authority. They’re validated by many participants. Mira applies a similar idea to AI responses.

The network also uses incentives to encourage honest participation. Validators must stake $MIRA tokens to be part of the system.

If they act honestly and provide accurate checks, they earn rewards. If they act carelessly or try to manipulate results, they risk losing part of their stake.
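The incentive logic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `ValidatorAccount` class, the `settle` function, the flat `reward`, and the 10% `slash_fraction` are all hypothetical, and "voted with the consensus" is used here as a rough stand-in for "acted honestly". The real reward and penalty rules are not described in this post.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    name: str
    stake: float  # tokens locked by the validator (hypothetical units)


def settle(
    account: ValidatorAccount,
    voted_with_consensus: bool,
    reward: float = 1.0,          # assumed flat reward per accurate check
    slash_fraction: float = 0.1,  # assumed share of stake lost per bad check
) -> float:
    """Update a validator's stake after one round and return the new stake."""
    if voted_with_consensus:
        account.stake += reward
    else:
        # Losing a fraction of the stake is what puts something at risk.
        account.stake -= account.stake * slash_fraction
    return account.stake


if __name__ == "__main__":
    careful = ValidatorAccount("careful", stake=100.0)
    careless = ValidatorAccount("careless", stake=100.0)
    print(settle(careful, voted_with_consensus=True))
    print(settle(careless, voted_with_consensus=False))
```

Because the penalty scales with the stake while the reward stays small, a validator in this sketch loses far more from one bad check than it gains from one good one.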

This creates accountability. Validators have something at risk, so they’re motivated to be accurate.

It’s important to understand that Mira is not trying to build a better AI model. It’s not competing with large AI companies. Instead, it focuses on adding a verification layer on top of existing AI systems.

Think of it as a review system for AI outputs. The goal isn’t perfection. The goal is to reduce errors and add an extra layer of trust before decisions are made based on AI responses.

As AI becomes more common across industries, the need for reliability increases. Mira Network is focused on solving that specific problem: how to make AI outputs more dependable without relying on a single centralised source.

In simple terms, Mira is building a system that checks AI before you rely on it.

#Mira #AI #MiraNetwork #ArtificialIntelligence #DecentralisedAI