I’ve been watching the intersection of AI and crypto for a few years now, and one pattern keeps repeating itself. Every cycle there’s a wave of projects promising to combine artificial intelligence with decentralized infrastructure, but most of them focus on compute markets, data marketplaces, or model training. What caught my attention with Mira Network wasn’t another attempt to sell “AI on the blockchain.” It was the focus on something much less glamorous but probably more important: verification.

The problem Mira is trying to address is something anyone who has used modern AI tools has already experienced. AI models are powerful, but they make things up. Hallucinations, bias, and inconsistent outputs are still common, even with the latest models. In casual situations that might not matter much, but once AI starts touching areas like finance, legal advice, or healthcare, reliability becomes a completely different conversation. According to various reports, Mira’s idea is to treat AI output almost like a transaction that needs validation, rather than something you simply trust because a single model produced it.

When I first read about how the system works, it felt familiar in a very crypto-native way. Instead of relying on one AI model to produce an answer, Mira breaks that answer into smaller factual claims and distributes those claims across a network of independent verifier nodes running different models. Each node evaluates the claim and votes on whether it appears true, false, or uncertain. The final output passes only if a supermajority of verifiers agrees.
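To make that flow concrete for myself, I sketched the voting step in a few lines of Python. This is only my own illustration, not Mira's actual implementation: the two-thirds threshold, the `Verdict` enum, and the function names are all assumptions, since the material I've read says "supermajority" without pinning down a number.

```python
from collections import Counter
from enum import Enum
from fractions import Fraction


class Verdict(Enum):
    TRUE = "true"
    FALSE = "false"
    UNCERTAIN = "uncertain"


# Hypothetical threshold: the source only says "supermajority".
SUPERMAJORITY = Fraction(2, 3)


def claim_verdict(votes, threshold=SUPERMAJORITY):
    """Return the verdict backed by at least `threshold` of the votes.

    Falls back to UNCERTAIN when no verdict reaches the threshold.
    """
    if not votes:
        return Verdict.UNCERTAIN
    verdict, count = Counter(votes).most_common(1)[0]
    if Fraction(count, len(votes)) >= threshold:
        return verdict
    return Verdict.UNCERTAIN


def output_passes(votes_per_claim, threshold=SUPERMAJORITY):
    """An output passes only if every one of its claims is judged TRUE."""
    if not votes_per_claim:
        return False
    return all(
        claim_verdict(votes, threshold) is Verdict.TRUE
        for votes in votes_per_claim.values()
    )


if __name__ == "__main__":
    T, F, U = Verdict.TRUE, Verdict.FALSE, Verdict.UNCERTAIN
    votes = {
        "claim-1": [T, T, T],  # unanimous
        "claim-2": [T, T, F],  # exactly two thirds say TRUE
    }
    print(output_passes(votes))
```

Whether a real network requires every claim to pass, or tolerates a few uncertain ones, is a design choice I'm guessing at here.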

That structure immediately reminded me of how blockchains themselves operate. In a blockchain, no single participant defines the state of the ledger; consensus emerges from multiple actors validating the same data. Mira is essentially trying to apply that logic to artificial intelligence outputs. Instead of trusting one model, the network aggregates judgments across many models and records the process transparently on-chain.

I’ve seen similar concepts discussed before, but what stood out here was the attempt to integrate incentives directly into the verification layer. Node operators stake the network’s token and earn rewards for correctly validating claims, while incorrect or malicious behavior can lead to penalties. This hybrid system combines elements of proof-of-stake with other mechanisms to secure the verification process economically.
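The incentive loop is easier to reason about with toy numbers. The sketch below is a deliberately simplified model under my own assumptions: the reward amount, the 5% slash, and the rule that any vote against consensus is penalized are hypothetical, and the project's real parameters and penalty conditions may look quite different.

```python
from dataclasses import dataclass

# Hypothetical parameters; the source gives no actual figures.
REWARD_PER_CLAIM = 10  # tokens paid for a vote that matches consensus
SLASH_BPS = 500        # 500 basis points = 5% of stake lost otherwise


@dataclass
class Node:
    name: str
    stake: int  # staked tokens, kept as integers to avoid rounding surprises


def settle(nodes, votes, consensus):
    """Reward nodes whose vote matched consensus and slash the others."""
    for node in nodes:
        if votes[node.name] == consensus:
            node.stake += REWARD_PER_CLAIM
        else:
            node.stake -= node.stake * SLASH_BPS // 10_000


if __name__ == "__main__":
    nodes = [Node("a", 1000), Node("b", 1000), Node("c", 1000)]
    settle(nodes, {"a": "true", "b": "true", "c": "false"}, consensus="true")
    for node in nodes:
        print(node.name, node.stake)
```

Even in this toy version the tension is visible: punishing every dissenting vote would also punish an honest node that happens to be right when the majority is wrong, which is presumably why real designs need more nuance than this.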

That economic design matters more than it might seem at first glance. In theory, verification only works at scale if there’s a reason for people to run nodes and dedicate compute resources. Mira seems to be approaching this through staking incentives, token rewards, and delegation models where GPU providers contribute resources without necessarily running the verification nodes themselves.

From a technical perspective, the idea makes sense. Cross-checking can improve the reliability of AI output, provided the models’ errors are not strongly correlated, and early research around the project claims accuracy improvements compared to relying on a single model alone. Some reports suggest hallucination rates can drop significantly when outputs are verified through multi-model consensus.
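A quick back-of-the-envelope calculation shows why consensus can help, and where the catch is. The sketch below assumes every verifier wrongly approves a false claim with the same probability and, crucially, that their mistakes are independent. Both are my assumptions for illustration; the numbers are not Mira's, and real models trained on overlapping data will be more correlated than this.

```python
from math import comb


def p_false_claim_passes(n, p_err, quorum_num=2, quorum_den=3):
    """Probability that a false claim reaches the approval quorum.

    Assumes n verifiers, each wrongly approving with probability p_err,
    independently of one another (an idealized assumption).
    """
    # Smallest number of approvals that meets the quorum, i.e. ceil(n * num / den).
    need = (n * quorum_num + quorum_den - 1) // quorum_den
    return sum(
        comb(n, k) * p_err**k * (1 - p_err) ** (n - k)
        for k in range(need, n + 1)
    )


if __name__ == "__main__":
    for n in (1, 3, 5, 9):
        print(n, p_false_claim_passes(n, 0.2))
```

With five verifiers that are each wrong 20% of the time, at least four must err together for a false claim to pass, which works out to about 0.7%. If the models share the same blind spots, that improvement shrinks, so the diversity of verifier models matters as much as their number.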

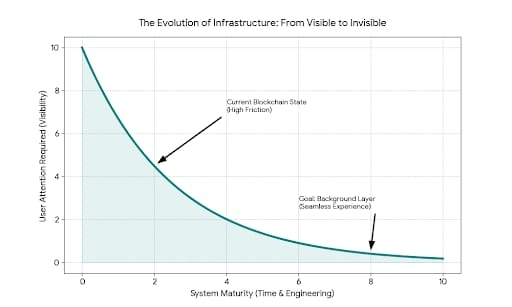

But the longer I’ve been in crypto, the more I’ve learned that technical elegance doesn’t automatically translate into long-term adoption.

What I’m usually looking for with projects like this isn’t just the whitepaper mechanics. I pay attention to where real activity might come from. Infrastructure protocols live or die based on developer integration and ecosystem gravity. If developers actually start routing AI outputs through a verification network like Mira’s APIs, then the system could quietly become a piece of backend infrastructure that users never notice but rely on every day.

From what I’ve seen so far, Mira has already released APIs that allow developers to generate, verify, and cross-check AI outputs through a unified interface connected to multiple language models. That’s an interesting direction because it lowers the barrier for integration. Developers don’t need to manage multiple models individually; the network handles routing and verification.

I’ve noticed another pattern as well. Projects that succeed in infrastructure tend to hide their complexity behind simple developer tools. If the SDKs and APIs work well, developers might adopt them simply because they improve reliability, not because they care about the underlying token economics.

That’s where the token side of things becomes a bit more nuanced. The MIRA token is designed to power verification requests, staking, governance, and ecosystem incentives. In theory that means every verified AI output would generate some level of economic activity within the network. If real usage grows, the token becomes the economic layer securing and coordinating the system.

But again, I’ve seen this pattern before. Token utility always looks clear on paper. The real test is whether applications actually generate enough demand to sustain those token flows over time.

What’s somewhat encouraging is that the project has already attracted a fair amount of developer and enterprise curiosity. The ecosystem reportedly includes integrations and collaborations across various AI and Web3 platforms, which suggests there’s at least some early experimentation happening around the network.

Still, early recognition doesn’t necessarily translate into staying power. The AI narrative itself is extremely crowded right now. Every few months a new “AI + crypto infrastructure” project appears, and attention tends to rotate quickly.

Another aspect I’m quietly watching is user behavior. Many crypto infrastructure projects rely heavily on incentive programs, leaderboards, or points systems to bootstrap participation. Mira has experimented with community engagement through verification tasks and point-based systems that reward participation before token distribution.

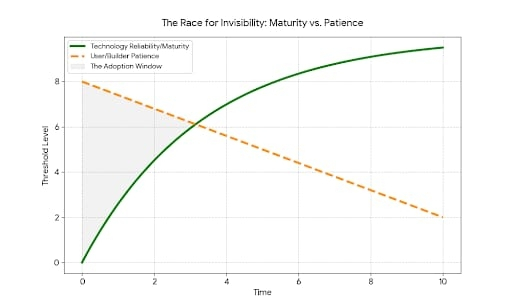

I’ve seen this strategy work before, but it’s always a delicate balance. Incentives can attract early users, but those users often disappear once rewards shrink. The real signal will be whether developers and companies continue using the network even when the initial incentive phase fades.

What keeps the idea interesting to me is that the core problem Mira is trying to solve isn’t going away. If anything, AI reliability is becoming more important as models become embedded in everyday systems. Governments, enterprises, and developers are all starting to ask the same question: how do you verify that an AI system’s output is actually trustworthy?

Blockchains were originally designed to answer a similar question for financial data. Mira seems to be exploring whether that same verification philosophy can extend to information itself.

Whether that becomes a meaningful infrastructure layer or just another experimental protocol is still an open question.

For now, it’s one of those projects I’m keeping in the category of “interesting architecture, but still early.” The idea fits naturally into the broader trend of decentralized AI infrastructure, but real traction will depend on developer adoption, sustained network participation, and whether verified AI outputs actually become something people demand rather than something the industry simply talks about.

So I’m watching it the same way I watch most early infrastructure projects in crypto: quietly, without rushing to conclusions, and waiting to see what happens once the initial narrative fades and real usage either shows up or doesn’t.