When I think about Fabric Protocol, I’m not thinking about tokens or hype. I’m thinking about something much more basic. I’m picturing a robot doing real work in the real world. Maybe it’s moving boxes in a warehouse. Maybe it’s inspecting equipment in a factory. Maybe it’s delivering something across a city. And then something small goes wrong. Not a disaster, just a mistake that matters. Now I’m asking simple questions: What exactly did the robot do? When did it do it? What rules was it following? Who approved those rules? And if there’s damage, who is responsible? Fabric is trying to build a system where I don’t have to rely on trust or vague explanations to get those answers.

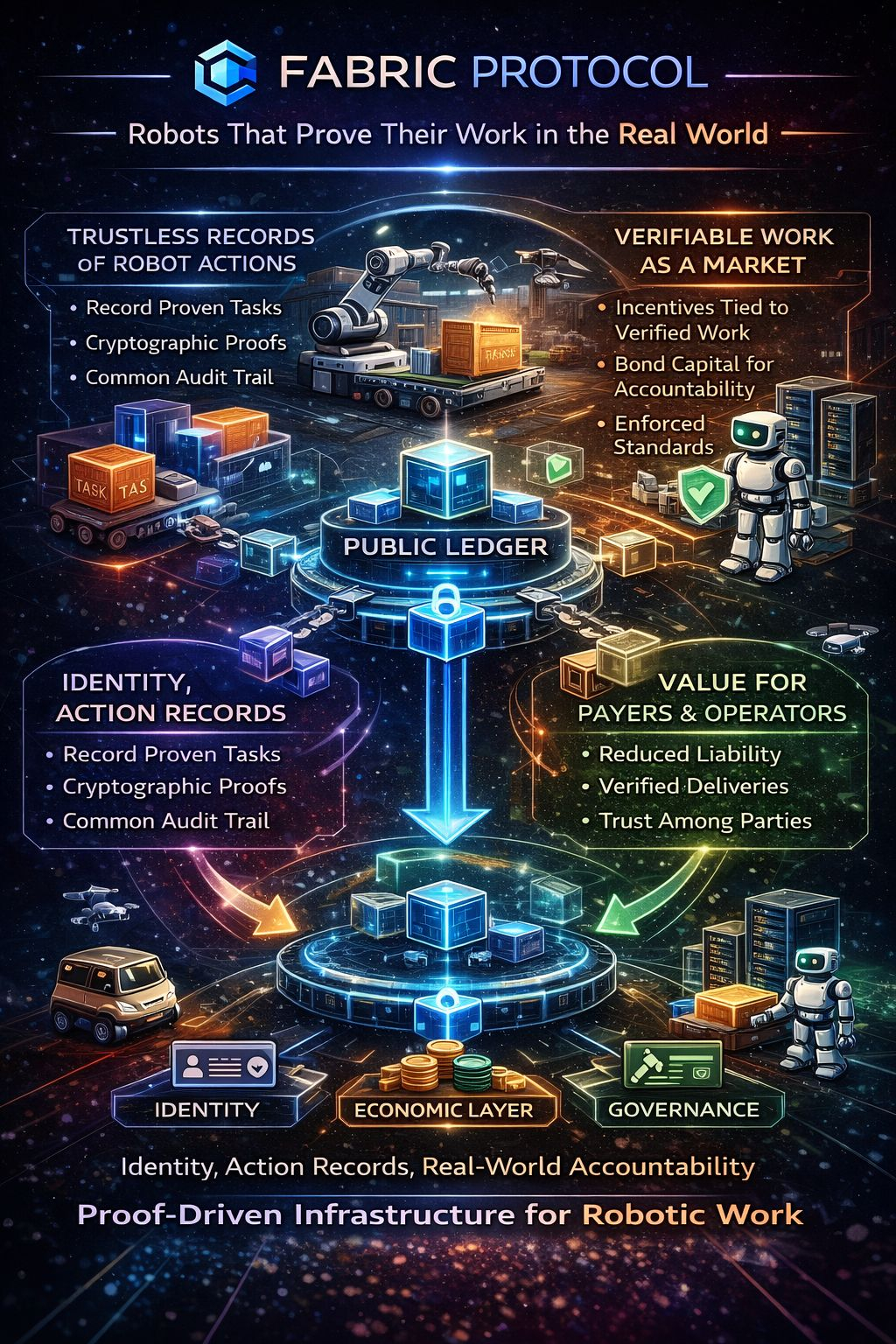

I’m watching Fabric make a very specific bet. It’s betting that if robots are going to do serious work, we need a way for them to prove what they did. Not just log it privately. Not just say, “Trust us, the system worked.” It’s working toward a setup where robot actions can be recorded, verified, and enforced in a way that different parties can rely on. That means giving robots identities, giving operators identities, creating records of actions, and building a way to verify those records. It also means adding an economic layer so that verification is paid work, not volunteer effort. And on top of that, I’m seeing a governance layer that can adjust rules over time without turning everything into a private database controlled by one company.

What stands out to me is that this isn’t a “token first” story. I’m not being told to buy into a narrative. I’m being asked to imagine a world where certain robot deployments naturally route through a shared protocol because the alternative is messy. I’m thinking about disputes between operators and clients, insurance claims with weak audit trails, and partnerships that rely on fragile trust. If Fabric works, I’m not using it because it’s trendy. I’m using it because it reduces risk and makes accountability clearer.

The big phrase Fabric keeps using is that robots can prove their actions. I’m trying to unpack what that really means. A robot in the physical world is not like a simple computer program. Sensors can be noisy. Environments change. Sometimes whether a task was “completed” depends on interpretation. So I’m not assuming that just because something is logged, it’s proven. Logs can be edited. Data can be cherry-picked. If I want real proof, I have to make lying expensive and detectable. That’s where the hard work begins.

When I strip this down in my own mind, I see Fabric needing to define a small set of claims that can actually be verified. I’m talking about statements like, “This route was taken,” “This inspection happened,” “This package was delivered to this location,” or “This tool was used under these constraints.” Each claim needs some form of evidence. Nobody is going to store every raw sensor feed on a blockchain. That would be too heavy and too messy. Instead, I’d expect the system to commit summaries, hashes, or structured attestations that reference underlying data. And then someone needs to check that evidence.
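To make the hashing idea concrete for myself, here is a minimal sketch in Python. It is not Fabric’s actual data model; the `Attestation` fields and every function name are my own assumptions. The only point is that a small record can commit to a large evidence file through a SHA-256 digest, so the raw data stays off-chain while any later edit to it becomes detectable.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record shape; not taken from any Fabric specification."""
    robot_id: str
    claim: str
    evidence_digest: str  # SHA-256 hex digest of the raw evidence bytes
    timestamp: int        # Unix seconds, supplied by the caller


def digest_evidence(raw_evidence: bytes) -> str:
    """Hash the raw evidence so only a short commitment needs to be published."""
    return hashlib.sha256(raw_evidence).hexdigest()


def make_attestation(robot_id: str, claim: str, raw_evidence: bytes, timestamp: int) -> Attestation:
    """Build an attestation that references the evidence by digest only."""
    return Attestation(robot_id, claim, digest_evidence(raw_evidence), timestamp)


def attestation_id(att: Attestation) -> str:
    """Derive a stable identifier from a canonical JSON encoding of the record."""
    canonical = json.dumps(asdict(att), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def evidence_matches(att: Attestation, raw_evidence: bytes) -> bool:
    """A verifier re-hashes the evidence it was handed and compares digests."""
    return digest_evidence(raw_evidence) == att.evidence_digest
```

A digest only proves that the evidence was not changed after the commitment. It says nothing about whether the sensors told the truth in the first place, which is why someone still has to check the evidence itself.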

That’s where the idea of verification as real work comes in. I’m watching Fabric try to turn verification into a paid service. If someone is going to review evidence and confirm that a robot followed the approved rules, that person or entity needs to be compensated. If verification is too cheap and shallow, it becomes a rubber stamp. If it’s too expensive and slow, nobody will use it for frequent tasks. I’m seeing a delicate balance here. Fabric’s long-term survival depends on getting that balance right.

What really matters to me is the unit of value. Fabric talks about “verified work” as the core unit. I like that framing because it connects rewards to actual activity. If a robot performs a task and that task is verified under clear standards, that verified work becomes meaningful. But I’m also realistic. If any measurable signal can generate rewards, people will try to game it.

If quality scores lead to payments, someone will try to bribe the scoring system. If participation is cheap, fake identities will appear. If disputes are painful and costly, people will avoid raising them. I’m not assuming cooperation. I’m assuming incentives shape behavior.

So I’m asking myself: is Fabric designing for adversarial conditions, or is it assuming everyone plays fair? If it’s serious about becoming infrastructure for autonomous labor, it has to assume attacks will happen. It has to design penalties that are actually enforced. It has to require bonded capital or stake that can be lost if someone lies or bypasses the rules. Otherwise, I’m just watching a nice-looking system that collapses when real money is on the line.
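One way I sanity-check the “stake that can be lost” idea is with a toy expected-value model. This is my own simplification, not anything Fabric has published: I assume a false claim earns a fixed gain if it goes undetected, and that a detected liar forfeits the whole bond and earns nothing. The function names and parameters are hypothetical.

```python
def lying_payoff(gain: float, detection_prob: float, bond: float) -> float:
    """Expected payoff of one false claim under the toy model.

    With probability (1 - detection_prob) the liar keeps `gain`;
    with probability detection_prob the liar loses `bond`.
    """
    if not 0.0 <= detection_prob <= 1.0:
        raise ValueError("detection_prob must be between 0 and 1")
    return (1.0 - detection_prob) * gain - detection_prob * bond


def min_bond(gain: float, detection_prob: float) -> float:
    """Smallest bond at which lying has zero expected payoff in the toy model."""
    if not 0.0 < detection_prob <= 1.0:
        raise ValueError("detection_prob must be in (0, 1]")
    return gain * (1.0 - detection_prob) / detection_prob
```

In this model, if a false claim is worth 100 and verifiers catch one lie in four, the break-even bond is 300. The takeaway for me is that weak detection has to be offset by a much larger stake, so shallow verification and small bonds together make lying profitable.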

I also find myself thinking about the difference between passive yield and paid service. In many crypto systems, I’m used to the idea of staking tokens and earning rewards mostly from emissions. But that doesn’t make sense for a robotics accountability network. If verification is supposed to mean something, it has to be tied to real service demand. Someone has to pay for the verification because it reduces their risk. If rewards mainly come from token emissions rather than real fees, the network is going to attract farmers instead of serious operators. Fabric has to anchor its economics to work, not to hype.

Governance is another area I’m watching carefully. If Fabric is going to coordinate real-world robotic activity, governance isn’t just a community vote about cosmetic upgrades. It becomes a risk surface. I’m thinking about who sets the standards for what counts as verified work. Who decides how much stake is required? Who defines what triggers penalties? If governance is too weak, the system can’t adapt to new attacks or edge cases. If governance is too concentrated, it becomes vulnerable to capture. Large operators might become untouchable if the network is afraid to penalize them. And once a system can’t punish its biggest players, it stops being an enforcement system.

The role of a foundation structure can help in the early days. I’m imagining a disciplined steward maintaining standards, coordinating upgrades, and handling emergencies. But I’m also asking: what happens when there’s a real incident? If a robot causes damage and evidence suggests misconduct, does the system actually execute penalties? Or does it quietly avoid confrontation to protect metrics? I’m watching for signs that governance looks more like critical infrastructure management than like a casual internet forum.

Another thing I keep coming back to is the idea of a wedge. I’m not convinced that “general-purpose robots” is the starting point. I’m thinking instead about narrow environments where verification is clear and disputes are common. Maybe regulated inspections. Maybe insured deliveries with defined handoff points. Maybe industrial tasks with strict compliance rules. In those environments, I can clearly define what completion looks like. I can clearly define what evidence is required. And I can clearly define penalties. If Fabric proves itself there, I can imagine it expanding outward.

So when I evaluate Fabric in my own mind, I’m not looking at roadmaps or token charts first. I’m looking for a full loop. I’m picturing a robot performing a task. That task generates structured evidence. A third party verifies that evidence under a known standard. The verifier gets paid. If the claim is false, the responsible operator loses bonded capital or privileges. Disputes are raised and resolved in the open. Metrics reflect real service demand, not just incentive farming. If I see that loop working in a constrained environment and holding up under pressure, I start to take the system seriously.
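The loop I’m describing can be sketched as a tiny settlement function. Everything here is assumed for illustration: the `Account` shape, the fixed fee, and especially the choice to send slashed funds to the client, which is just one possible policy and not something I know Fabric to do.

```python
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical participant with spendable balance and locked bond."""
    name: str
    balance: int = 0
    bond: int = 0


def settle_claim(operator: Account, verifier: Account, client: Account,
                 fee: int, claim_is_valid: bool, slash_amount: int) -> str:
    """Settle one verified-work claim.

    The client pays the verifier's fee whatever the verdict, so the verifier
    earns from the service itself. A false claim costs the operator part of
    its bond, which this sketch (an assumption) pays out to the client.
    """
    if client.balance < fee:
        raise ValueError("client cannot cover the verification fee")
    client.balance -= fee
    verifier.balance += fee
    if claim_is_valid:
        return "accepted"
    penalty = min(slash_amount, operator.bond)  # cannot slash more than is bonded
    operator.bond -= penalty
    client.balance += penalty
    return "slashed"
```

Even in a toy like this, the verifier’s income comes from a fee that a client chose to pay rather than from emissions, and the penalty is capped by what the operator actually bonded. That cap is why the size of the bond matters so much.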

If I don’t see that loop, I worry that Fabric becomes a ledger of intentions. I’m watching events get recorded, but I’m not seeing strong ground truth. I’m seeing incentives that drift toward gaming rather than enforcement. And in robotics, that risk feels heavier than in purely digital systems.

When machines operate around people and property, trust breaks differently. Financial loss is one thing. Physical harm is another.

At the end of the day, I’m not looking at Fabric as a flashy robot narrative. I’m looking at it as an attempt to build accountability rails for autonomous machines. I’m watching a system try to make verified robotic work into something enforceable, something that can be priced, something that can be bonded, and something that can be audited across organizations that don’t automatically trust each other. If it succeeds, I’m not just getting better logs. I’m getting a new market primitive: work that can prove itself. And that changes how robots are deployed, paid, and governed in the real world.