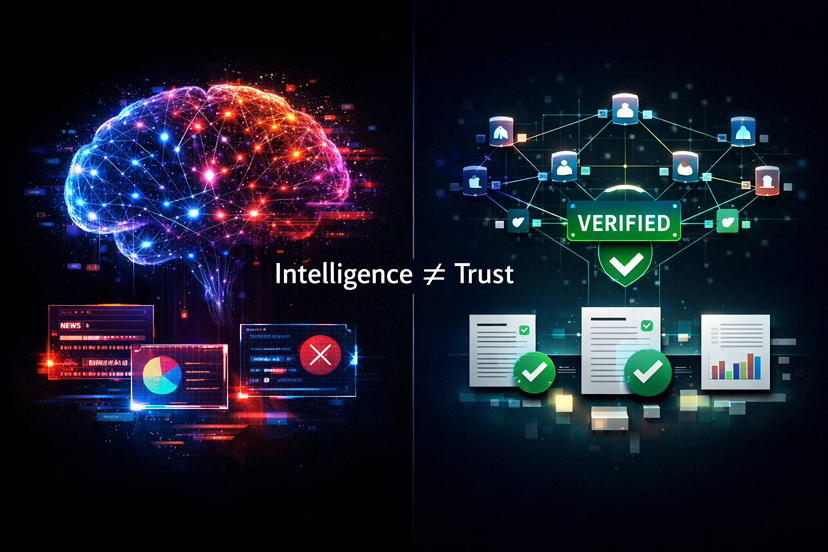

When I Realized Intelligence Was Not the Core Issue

When I first started diving deep into AI, I honestly believed the future was simple. Bigger models, more data, stronger training pipelines. I thought raw intelligence would solve everything. If systems became advanced enough, accuracy would naturally follow.

But the more I explored Mira Network and how it approaches AI reliability, the more uncomfortable I became with that assumption. Intelligence is not the main problem. Trust is.

That realization did not come from theory. It came from watching how modern AI behaves. These systems do not fail because they are weak. They fail because they speak with confidence without being accountable. And that is a completely different category of risk.

Reliability Is the Real Bottleneck

As I studied the architecture and philosophy behind Mira Network, I started noticing something deeper. The AI industry is not stuck because of hardware limits. It is facing a structural limitation.

AI models are probabilistic. They predict likely outputs. They do not possess understanding in the human sense. That means even the most advanced system can generate responses that sound perfect while being completely wrong.

This is not a glitch. It is how the systems are designed.

Mira steps directly into this gap. It does not try to make models more intelligent. Instead, it builds a framework where truth is not assumed but constructed through validation.

To me, that shift feels far more significant than it first appears.

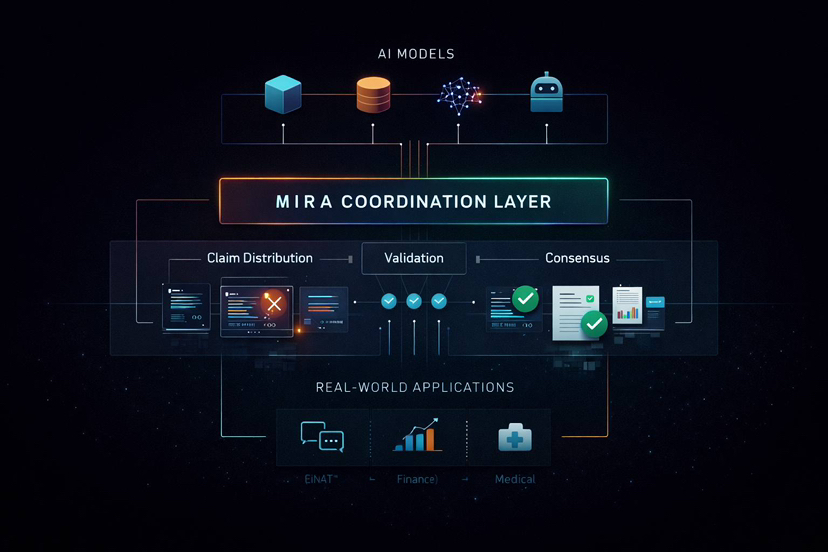

Not Another Model but a Coordination Layer

When I looked closely at Mira’s technical design, especially concepts like distributed validation and structured claim evaluation, something clicked for me. Mira is not competing with OpenAI or Google. It is not building another large language model.

It is building coordination.

The system takes a single AI output, breaks it into smaller testable statements, and distributes those pieces to independent validators. These validators analyze and confirm or reject the claims. This might sound similar to ensemble systems, but it goes further.

Mira enforces agreement through incentives and structure.

The question shifts from whether one AI is smart enough to whether multiple independent systems agree on the result.

That reframing alone changes how we think about intelligence.
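To make the flow concrete, here is a minimal Python sketch of how I picture it: split an output into claims, hand each claim to several independent validators, and accept it only if enough of them agree. This is not Mira's actual implementation. The sentence-based splitter, the `Validator` callables, the toy fact set, and the two-thirds threshold are all assumptions I made up for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical validator interface: returns True if it accepts the claim.
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: one sentence = one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented value
) -> Dict[str, bool]:
    """Map each claim to True if the share of accepting validators meets the threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        votes = [validator(claim) for validator in validators]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


# Toy validators: two check against a small fact set, one accepts everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_validator(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careless_validator(claim: str) -> bool:
    return True


result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [strict_validator, strict_validator, careless_validator],
)
for claim, accepted in result.items():
    print(f"{'ACCEPT' if accepted else 'REJECT'}: {claim}")
```

The point of the sketch is the careless validator: on its own it would wave the false claim through, but it cannot carry the vote against two validators that disagree.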

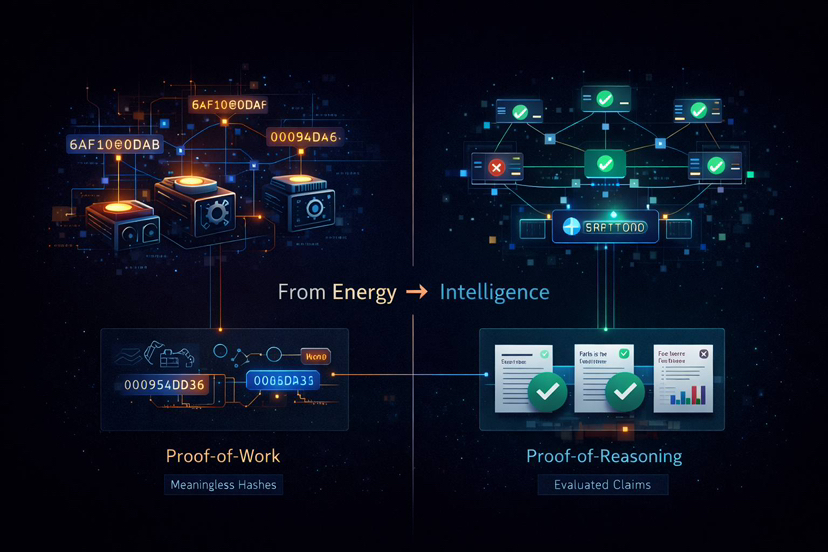

Making Verification Productive Work

One of the most interesting aspects I found during my research is how Mira turns verification itself into the network's computational work.

Traditional proof-of-work blockchains secure themselves with arbitrary computation. In Mira’s case, validators are not solving meaningless puzzles. They are evaluating real claims.

That means network security becomes tied to useful reasoning rather than wasted energy.

As activity increases, more real-world verification work is performed. Intelligence becomes part of the infrastructure itself. That idea feels like a preview of a new class of systems where reasoning is embedded into security.

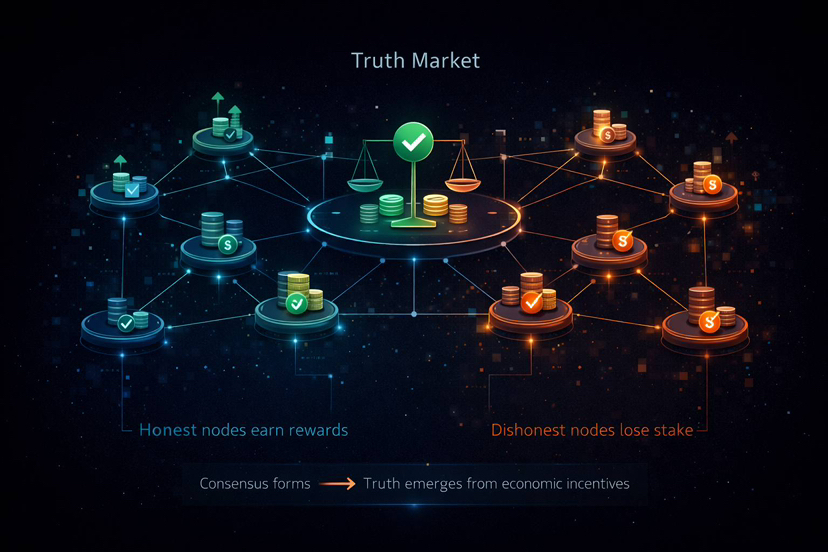

The Emergence of a Market for Truth

The more I examined the token structure and staking mechanisms, the more I began thinking of Mira as a marketplace.

Not for speculation.

For truth.

Participants stake value behind validations. If they support correct claims, they are rewarded. If they validate incorrect information, they lose stake.

Truth becomes economically enforced rather than socially assumed.
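Here is a rough Python sketch of that incentive loop as I understand it: validators who sided with the accepted outcome earn a reward on their stake, and those who sided against it are slashed. The names, the reward and slash rates (in basis points), and the idea of settling per claim are my own assumptions, not Mira's published parameters.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Validation:
    validator: str
    stake: int   # staked amount in the smallest token unit (hypothetical)
    vote: bool   # True = "this claim is correct"


def settle(
    validations: List[Validation],
    accepted_outcome: bool,
    reward_bps: int = 500,    # assumed 5% reward for matching the outcome
    slash_bps: int = 2_000,   # assumed 20% penalty for opposing it
) -> Dict[str, int]:
    """Return each validator's stake after rewards and slashing."""
    balances: Dict[str, int] = {}
    for v in validations:
        if v.vote == accepted_outcome:
            balances[v.validator] = v.stake * (10_000 + reward_bps) // 10_000
        else:
            balances[v.validator] = v.stake * (10_000 - slash_bps) // 10_000
    return balances


balances = settle(
    [
        Validation("alice", stake=100, vote=True),
        Validation("bob", stake=100, vote=False),
    ],
    accepted_outcome=True,
)
print(balances)
```

Integer basis points are used instead of floats so the arithmetic stays exact, which is the usual choice when code handles token balances.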

In traditional systems, authority defines correctness. Institutions, experts, or centralized platforms set the standard. In Mira’s design, correctness emerges from incentivized agreement among distributed validators.

That represents a structural change in how knowledge can be coordinated.

Why This Matters More Than It First Appears

At first glance, Mira might look like a narrow solution to AI hallucinations. But I believe it addresses something much deeper.

We are moving into a world where AI systems are too complex for humans to fully audit. Even developers often cannot fully trace why certain outputs appear. That creates a dangerous gap between usage and understanding.

Mira does not attempt to simplify the models. It surrounds them with a verification process.

It accepts that AI will remain a black box and builds external validation around it. That feels realistic rather than idealistic.

Infrastructure Strategy Instead of Application Hype

Another thing that stood out to me is how Mira positions itself as infrastructure rather than a front-end product.

With APIs focused on generation and verification, it targets developers instead of end users. That is an important strategic choice.

It means Mira does not need to win the AI race directly. It simply needs to become part of the default stack underneath applications.

Historically, infrastructure layers accumulate value quietly. They grow beneath visible products until they become essential.

Quiet Growth and Real Usage

What surprised me most is that Mira is already processing substantial network activity. Millions of queries and large volumes of tokenized computation are being handled daily.

This is not a purely theoretical system.

And what makes it interesting is how quietly this adoption is happening. There is no massive hype wave attached. It feels more like steady integration into real applications.

In my experience, foundational infrastructure often develops this way.

A Philosophical Shift in How We Evaluate Systems

After spending time analyzing Mira, I realized the real transformation is philosophical.

We used to ask whether a system is intelligent.

Now we are starting to ask whether it is trustworthy.

That difference matters deeply.

Mira does not try to eliminate uncertainty. It organizes it. It creates a structure where multiple independent systems make deception more difficult.

Intelligence becomes less about a single model being correct and more about coordinated validation resisting error.

Where This Direction Could Lead

If systems like Mira gain traction, we may reach a point where AI outputs come with verification scores. Critical decisions could rely on consensus-checked intelligence. Autonomous agents could operate on top of structured trust layers.

Eventually, people might stop asking whether the AI is correct because the verification layer already communicates confidence levels.

That would represent a significant evolution in how we interact with machine-generated information.
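As a thought experiment, this is roughly what an output carrying its own verification score might look like in Python. Everything here is hypothetical: the `VerifiedOutput` type, the choice to score by the fraction of claims that passed, and the 0.9 cutoff are assumptions of mine, not a format any verification layer is known to use.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class VerifiedOutput:
    text: str
    claim_results: Dict[str, bool]  # claim -> did validators accept it?

    @property
    def score(self) -> float:
        """Fraction of claims that passed verification (0.0 if there are none)."""
        if not self.claim_results:
            return 0.0
        return sum(self.claim_results.values()) / len(self.claim_results)


def safe_to_act(output: VerifiedOutput, min_score: float = 0.9) -> bool:
    """Gate a critical decision on the verification score (assumed cutoff)."""
    return output.score >= min_score


mixed = VerifiedOutput(
    text="Paris is the capital of France. The Moon is made of cheese.",
    claim_results={
        "Paris is the capital of France": True,
        "The Moon is made of cheese": False,
    },
)
clean = VerifiedOutput(
    text="Paris is the capital of France.",
    claim_results={"Paris is the capital of France": True},
)
print(mixed.score, safe_to_act(mixed))
print(clean.score, safe_to_act(clean))
```

An agent built on top of something like this would never look at the raw text alone. It would read the score first and decide whether the output is strong enough to act on.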

Final Reflection

After studying Mira Network, I no longer see AI reliability as a theoretical debate. I see it as an engineering and economic design challenge.

Mira does not attempt to create a perfect AI. It creates a framework where no single model has to be perfect, because confidence comes from validation spread across many independent checkers.

That may sound like a subtle shift. But I believe it is foundational.

In the long run, the future of AI may not belong to the smartest model.

It may belong to the systems we can actually trust.