@Fabric Foundation I was at my kitchen table before sunrise, and the coffee maker kept clicking while a robot dog video looped on my laptop. The robot paid to recharge itself, and that small, ordinary detail stayed with me because it made autonomy feel less like a concept and more like a daily fact. If machines can act on their own, I keep coming back to one quiet concern: how do I know what they were actually allowed to do?

That question feels more urgent to me now because autonomous systems are no longer sitting at the edge of the conversation. Over the past year I have watched major AI companies move toward agent tools with more serious attention to tracing, tool use, evaluation, and production monitoring. The EU AI Act has also moved into a real implementation schedule that companies can no longer treat as a distant policy exercise. I take that as a sign that accountability is leaving the conference stage and moving into product decisions, where it belongs. Once software starts making decisions, moving money, or taking actions in the world, a private log file and a promise stop feeling sufficient.

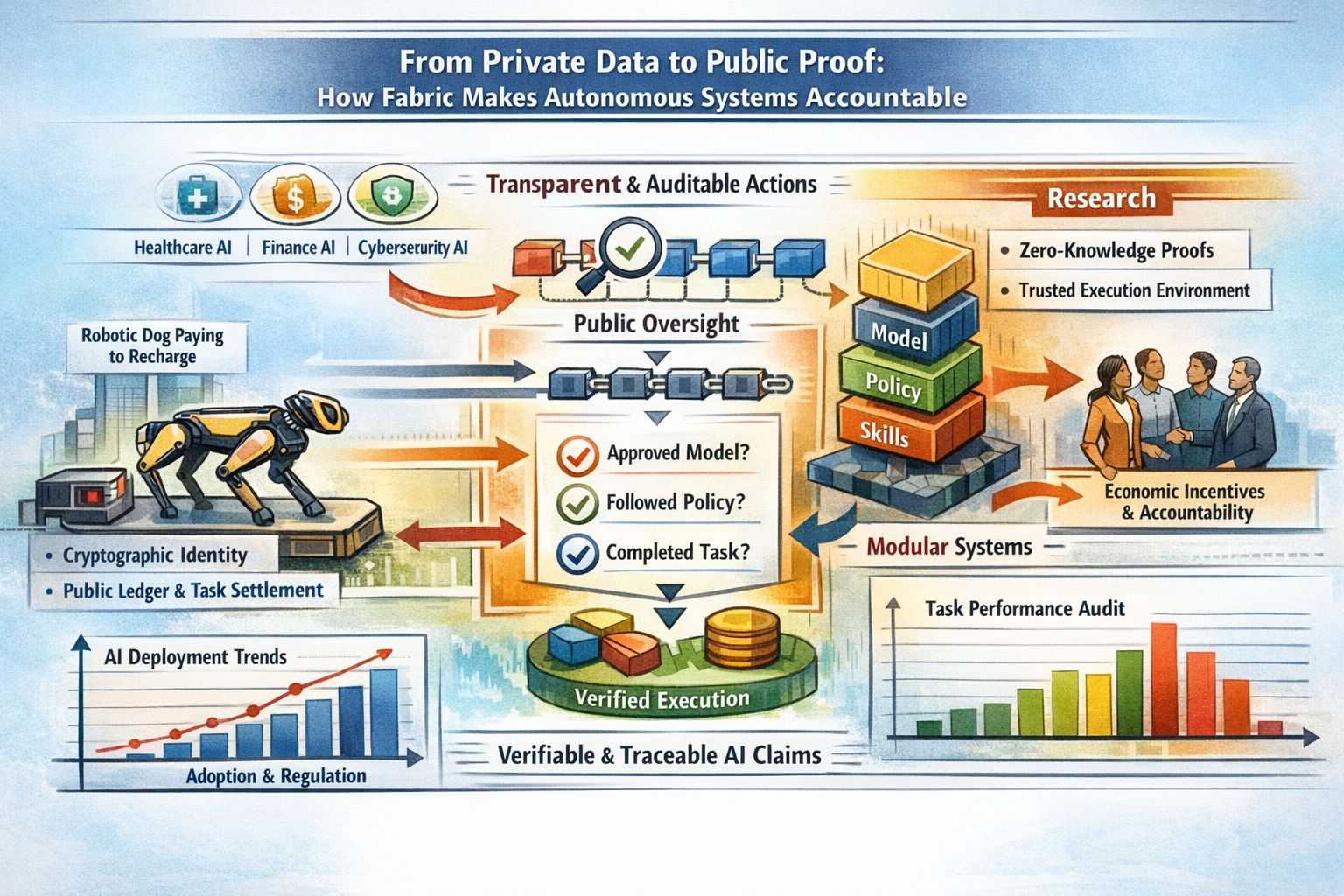

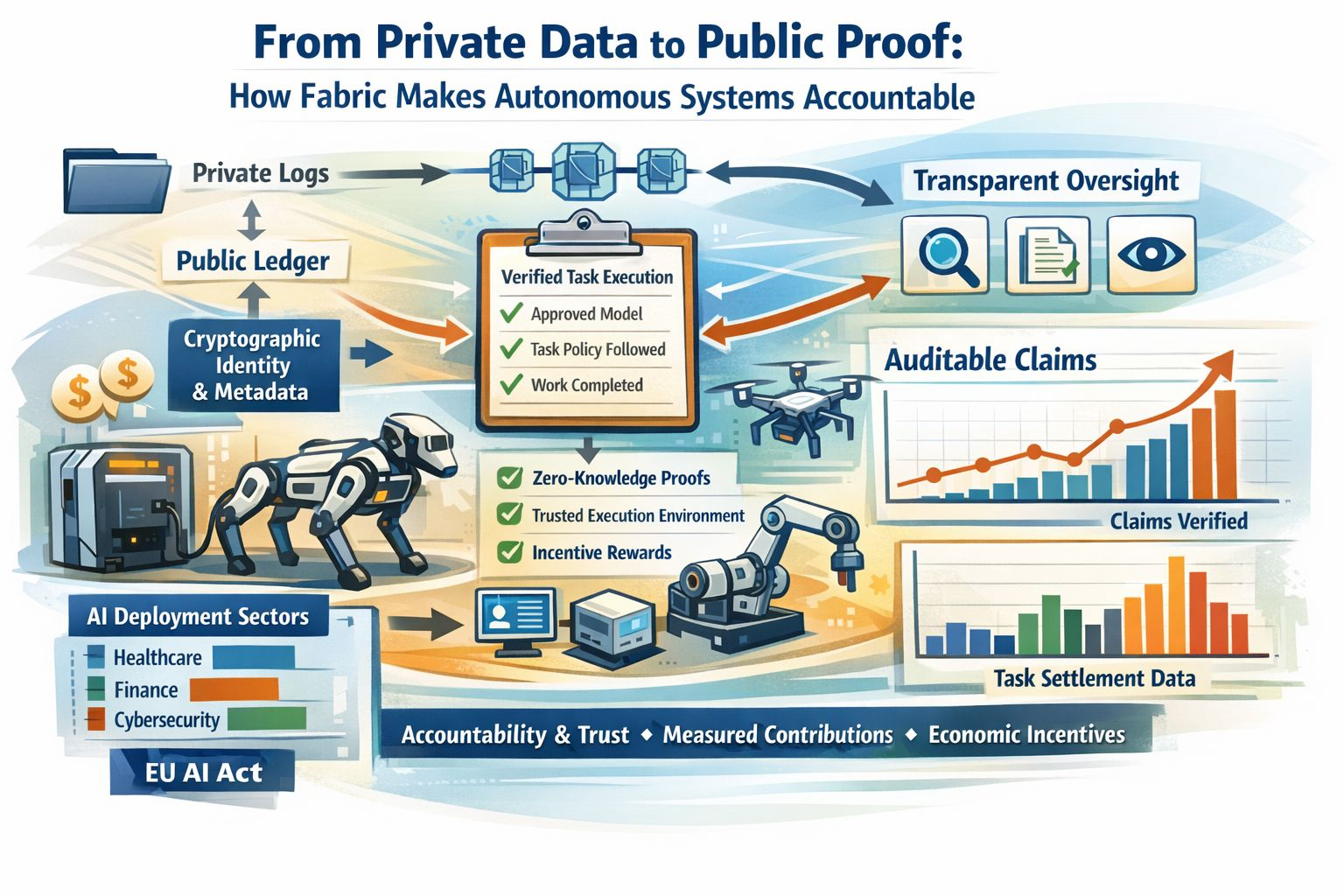

That is why Fabric caught my attention. In its own terms, Fabric is trying to build a public coordination layer for robots and other autonomous systems, one that replaces closed control systems with a structure that is easier to inspect, govern, and challenge. I do not see that as a perfect answer, and I do not think Fabric presents it that way either. What I see is a serious architectural claim: if autonomy is going to matter in the physical world, then the system needs some durable memory outside the company that deploys it. Fabric argues for cryptographic identity, public metadata around capabilities and rules, and a modular stack that can be examined piece by piece, instead of a sealed end-to-end system that hides too much when something goes wrong.

What keeps me interested is the move from private data to public proof, because that is a cleaner and more realistic standard. I do not want every sensor reading, personal detail, or internal record exposed just so a machine can be audited. I do want claims that can be checked in a credible way. I want to know whether the system used an approved model, whether it followed the task policy, and whether it actually completed the work it is being credited or paid for. Fabric’s roadmap points in that direction through robot identity, task settlement, and structured data collection. The broader research world is also spending more time on verifiable and auditable AI systems that preserve privacy through tools such as zero-knowledge cryptography, trusted execution environments, and stronger delegation controls. That broader trend matters to me because it suggests Fabric is not a strange side road. It sits inside a wider shift toward evidence that other researchers are trying to formalize as well.
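To make "public proof without public data" a little more concrete for myself, I sketched the simplest version of the idea I know: a salted hash commitment. This is not Fabric's mechanism, and it is far weaker than zero-knowledge proofs or trusted execution environments. The record fields (`model_id`, `policy`, `completed`) and the function names `commit` and `verify` are my own hypothetical choices, used only for illustration. The operator publishes a fingerprint of a private task record, keeps the record and salt private, and can later reveal both to an auditor who checks them against the public fingerprint.

```python
import hashlib
import hmac
import json
import secrets


def _digest(record: dict, salt: str) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = salt + "|" + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def commit(record: dict) -> tuple[str, str]:
    """Return (public_commitment, private_salt) for a private task record.

    Only the commitment is meant to be published. The random salt stops
    anyone from guessing the record by hashing likely values.
    """
    salt = secrets.token_hex(16)
    return _digest(record, salt), salt


def verify(record: dict, salt: str, commitment: str) -> bool:
    """Check a revealed record and salt against the published commitment."""
    return hmac.compare_digest(_digest(record, salt), commitment)


# Hypothetical task record; the field names are assumptions for illustration.
record = {"model_id": "approved-v1", "policy": "task-policy-7", "completed": True}
commitment, salt = commit(record)

# An auditor who is later shown the record and salt can confirm nothing changed.
print(verify(record, salt, commitment))
# A record edited after the fact no longer matches the public commitment.
print(verify({**record, "completed": False}, salt, commitment))
```

Even this toy version shows the shape of the trade: the public sees only a fingerprint, while the sensitive details stay with the operator until someone with the right to audit asks for them. It does not prove the record was truthful in the first place, which is exactly the harder problem the research mentioned above is working on.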

I also find Fabric interesting because it treats accountability as an economic design question and not only as a compliance exercise. That may sound dry at first, but it feels practical to me because systems usually move in the direction their incentives push them. Fabric’s whitepaper ties rewards to measured contributions and verified work, and it openly argues against models that reward passive holding without meaningful participation. I am still cautious whenever tokens sit near the center of a system like this, and I do not think skepticism is out of place. Even so, the underlying instinct makes sense to me. If an autonomous system is supposed to earn trust, then the incentives around it should favor traceable work over vague symbolic participation.

There is also real progress behind the current attention, which is one reason the topic is getting harder to ignore. Fabric Foundation has published its whitepaper and laid out a 2026 roadmap. OpenAI has introduced agent-building tools with built-in observability, and Anthropic has written more concretely about production tracing, evaluation, and multi-agent system behavior. Circle is also showing what agentic payments can look like in practice, including the OpenMind robot dog that used USDC to pay for its own recharge. None of that solves accountability by itself, and I would not pretend otherwise. What it does show is that the problem has moved out into public view, and once that happens, infrastructure starts to matter a great deal more.

My hesitation is still simple. Fabric is early, and its own documents make clear that some of the most important governance choices are not settled yet, including how validators should be chosen and which measures of success are hard enough to fake. Oddly, that honesty makes me take the project more seriously because it reads like an admission of real design work rather than a claim of easy certainty. I do not need a flawless blueprint right now. I need systems that start from the premise that trust has to be earned in public. That is the part of this story that stays with me because it turns accountability from a policy slogan into a design principle: keep sensitive data where it belongs, and make decisions, permissions, and proofs visible enough that nobody has to accept autonomous behavior on faith alone. That feels overdue and practical to me.