Some mornings I scroll through a long stream of posts before I even get out of bed. News headlines, technical threads, people explaining some new AI tool that supposedly understands everything. It all arrives quickly, and most of it sounds confident. That part always stands out to me. Confidence has become the default tone of machines. Whether the answer is correct or not almost feels secondary.

Anyone who has spent time using modern AI systems has probably noticed this. You ask a model something complicated and it responds immediately, often in very polished language. Sometimes the explanation is surprisingly helpful. Other times it quietly invents details that never existed. The difficult part is that both responses can look almost identical on the surface. The machine rarely signals uncertainty in a natural way.

This problem is starting to attract attention in the crypto and AI infrastructure space. A few projects are experimenting with the idea that truth itself might need a coordination system. That sounds philosophical at first, but the reasoning is fairly practical. If machines are generating an enormous number of claims every day, someone needs to check them. Not occasionally. Constantly.

Mira Network sits somewhere inside that conversation. The system is built around a simple observation: verification takes effort, and effort usually requires incentives. Instead of assuming that people will fact-check AI outputs voluntarily, the network tries to turn that process into an economic activity.

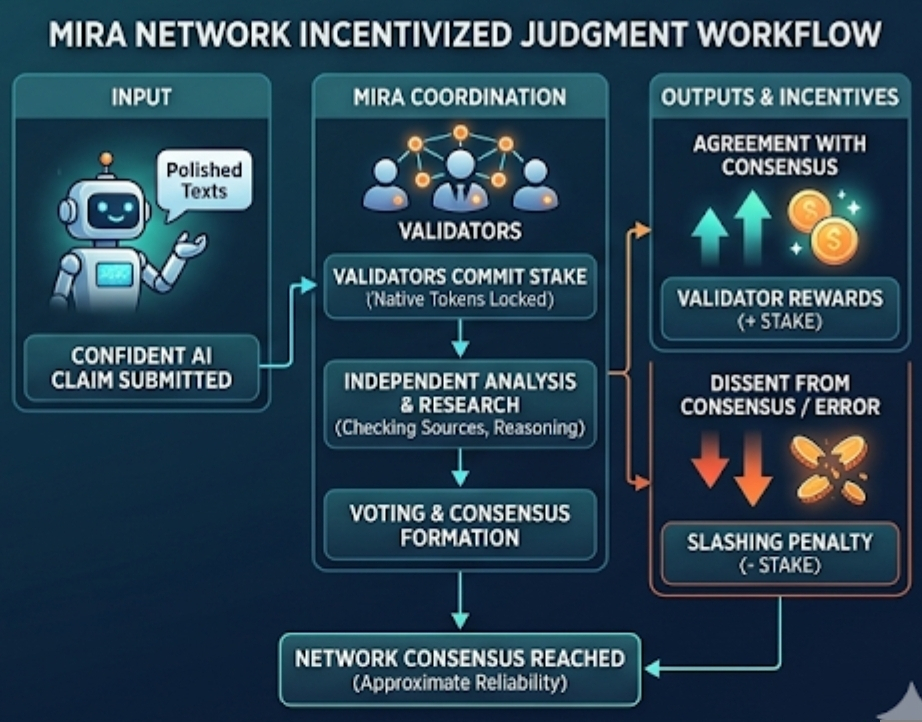

The structure is easier to understand if you imagine a claim appearing inside the network. An AI model might produce a statement about a dataset, a research summary, or even a prediction about something measurable. That claim doesn’t immediately become “truth.” Instead it becomes something closer to a proposal that the network needs to evaluate.

Participants known as validators step in at that point. Their role is not glamorous. They read the claim, look at supporting evidence, and try to decide whether it holds up. Sometimes that means checking sources. Sometimes it means testing the reasoning itself. In simple terms, they are doing the kind of careful reading that most people skip when information arrives quickly.

The interesting part is that validators have something at stake. The network uses tokens, which are digital assets native to the protocol, as a way to create incentives. Validators lock a portion of those tokens when submitting an evaluation. If their judgment eventually aligns with the network’s consensus, they receive rewards. If they consistently make incorrect evaluations, they can lose part of their stake.
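To make that loop concrete, here is a minimal sketch of one settlement round. It is an illustration, not Mira's actual protocol: the `REWARD_RATE` and `SLASH_RATE` values, the stake-weighted majority rule, and the `Evaluation` and `settle` names are all assumptions of mine. It also slashes after a single mismatch for brevity, whereas the description above suggests penalties apply to consistently incorrect validators.

```python
from dataclasses import dataclass

# Hypothetical parameters, chosen only for illustration.
REWARD_RATE = 0.05  # fraction of locked stake paid when a verdict matches consensus
SLASH_RATE = 0.10   # fraction of locked stake lost when it does not


@dataclass
class Evaluation:
    validator: str
    verdict: bool  # True means "the claim holds up"
    stake: float   # tokens locked for this evaluation


def settle(evaluations):
    """Return (consensus verdict, stake per validator after settlement)."""
    yes = sum(e.stake for e in evaluations if e.verdict)
    no = sum(e.stake for e in evaluations if not e.verdict)
    # Assumed rule: stake-weighted majority; a tie resolves to False here.
    consensus = yes > no
    balances = {}
    for e in evaluations:
        rate = REWARD_RATE if e.verdict == consensus else -SLASH_RATE
        balances[e.validator] = e.stake * (1 + rate)
    return consensus, balances


evaluations = [
    Evaluation("alice", True, 100.0),
    Evaluation("bob", True, 50.0),
    Evaluation("carol", False, 80.0),
]
consensus, balances = settle(evaluations)
print(consensus, balances)
```

Notice that the payout depends only on agreement with the other validators, not on whether the claim is actually correct. That is the property the rest of this piece keeps circling back to.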

At first glance this looks like another example of tokenized incentives, something crypto has experimented with for years. But here the resource being coordinated is unusual. The network is not coordinating computing power or financial liquidity. It is coordinating judgment.

And judgment is messy.

Markets can be efficient when the objective is clear. Trading electricity, allocating storage space, distributing bandwidth: those systems have measurable outputs. Truth is different. Determining whether a statement is correct often involves interpretation, incomplete information, or expertise that only a few people possess. Even humans disagree about facts sometimes.

That tension is one of the more fascinating parts of Mira’s design. The system assumes that if enough independent validators examine a claim, their combined judgment might approximate reliability. Not perfect truth. Just something closer to it.
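The intuition has a simple numerical form, sketched below under strong assumptions that are mine rather than the network's: every validator judges independently, and each is right with the same probability `p`. The function name `majority_correct` is hypothetical. It computes the chance that a strict majority of `n` validators reaches the right verdict.

```python
from math import comb


def majority_correct(n, p):
    """Probability that more than half of n independent validators are right,
    when each one is right with probability p. Use odd n to avoid ties."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# With validators who are only modestly better than a coin flip,
# the majority becomes more reliable as the group grows.
for n in (1, 5, 21, 101):
    print(n, round(majority_correct(n, 0.6), 3))
```

The gain rests entirely on independence. If validators copy one another, or if `p` falls below 0.5 for a hard claim, adding more of them stops helping and can make the majority worse.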

Whether that assumption holds is still an open question.

One thing that seems likely is that validator behavior will evolve over time. People respond to incentives in subtle ways. If rewards depend on matching consensus, some participants might begin predicting what others will decide rather than carefully analyzing claims themselves. Anyone who has watched prediction markets or governance voting has probably seen similar patterns emerge.

There is also the influence of reputation. Networks like this rarely stay anonymous forever. Over time, dashboards appear. Rankings appear. Certain validators gain recognition for consistent performance. Suddenly the system contains visible signals of credibility.

I have noticed something similar on content platforms like Binance Square. Visibility metrics quietly shape behavior there. Creators watch engagement statistics, leaderboard rankings, and audience responses because those numbers influence reputation. Even when no one explicitly tells participants what to write, the metrics create subtle incentives.

A verification network could develop comparable dynamics. Validators with strong track records may become influential voices. That can help the system, since experienced evaluators often notice problems others miss. But reputation can also create gravity. When respected participants lean in one direction, others may hesitate to disagree.

The irony is that disagreement is often essential for discovering errors.

Another layer of complexity appears once AI models begin interacting with these verification systems more directly. Machines are very good at optimization. If models learn what kinds of explanations tend to pass validation, they may begin shaping outputs around those patterns. The result might look persuasive without necessarily becoming more accurate.

This is not a new phenomenon. Students sometimes learn to write essays that satisfy grading rubrics rather than genuinely understanding the subject. Algorithms can behave in similar ways.

Because of this, the design of verification incentives becomes extremely important. The network needs validators who can examine claims independently rather than simply following visible trends. Some claims may require specialized expertise. Others might need multiple evaluation stages before consensus emerges.

Still, there is something quietly appealing about the broader idea behind Mira. For a long time the internet focused mostly on producing information. Faster publishing tools, bigger datasets, larger AI models. Output kept increasing.

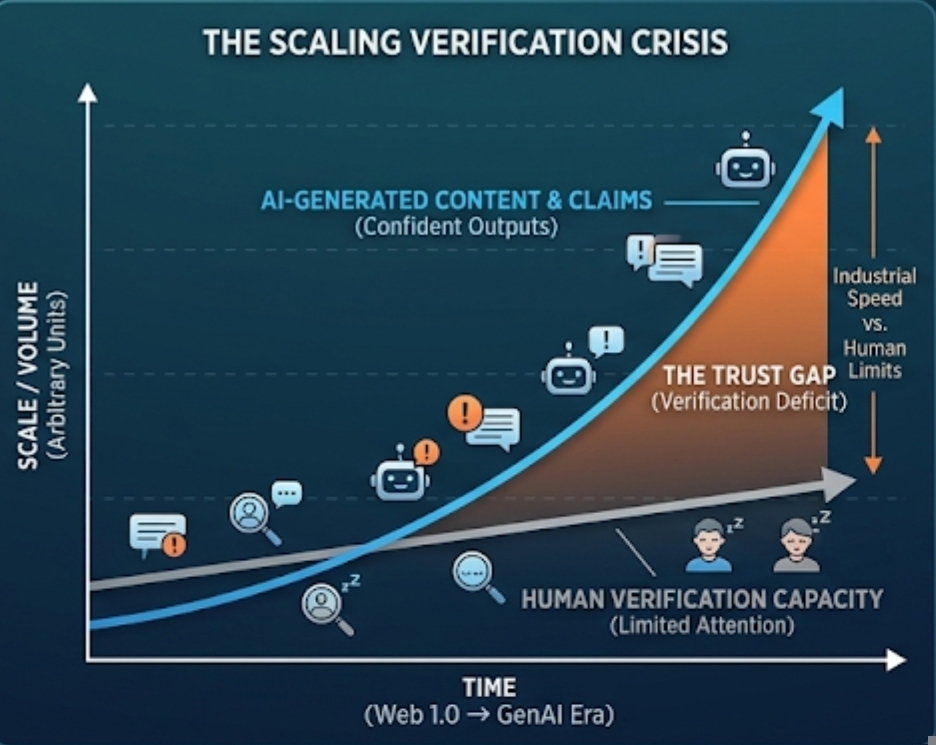

Verification did not scale at the same pace.

Human attention is limited. Careful reading takes time. When machines began generating text at industrial speed, the gap became obvious. Information exploded outward, while trust mechanisms remained slow and fragmented.

Mira Network tries to narrow that gap by treating verification as infrastructure rather than an afterthought. Instead of assuming that trustworthy knowledge appears naturally, the system acknowledges that evaluation requires coordination.

Whether token incentives are the right coordination mechanism is still uncertain. Some people believe markets can discover reliable signals if incentives are aligned correctly. Others worry that financial incentives might distort judgment rather than improve it.

Personally, I suspect the outcome will land somewhere between those extremes. Economic systems rarely behave exactly as designers expect. Participants adapt. Incentives interact with human psychology in unpredictable ways.

Yet the experiment itself feels relevant to the current moment. AI systems are becoming prolific narrators of reality. They summarize research papers, explain historical events, and offer technical guidance. Sometimes they do it remarkably well. Other times they produce confident illusions.

If machines are going to speak this often, it makes sense that someone is trying to organize how their statements get evaluated. Not by a single authority, but by a network of participants who have reasons to pay attention.

Truth has always required effort. What is changing now is the scale of the problem.


Mira Network and the Economics of Machine Truth
