I was still at my desk after 7 p.m., listening to the radiator click and scrolling through Fabric Protocol notes with a cold mug beside me, because I keep seeing the same question in different forms: who checks the machines when they start doing real work, and can anyone verify it?

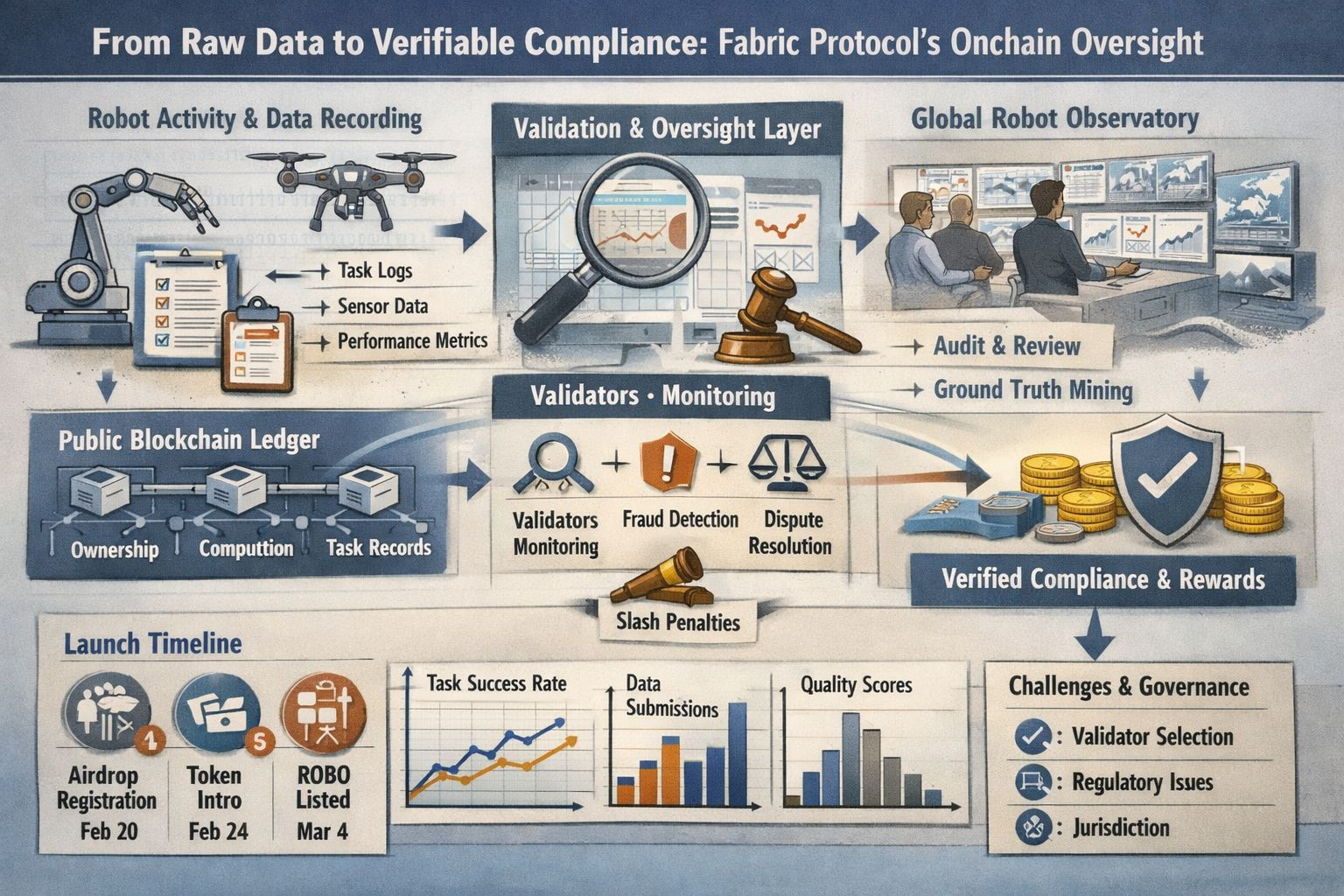

I care about that question because I’m tired of systems that ask for trust before they offer evidence. Fabric Protocol caught my attention for that reason. In its whitepaper, it describes a network for building, governing, and evolving general-purpose robots on public ledgers, with computation, ownership, and oversight recorded so outsiders can inspect them. That framing matters more than the robot-economy language around it. I’m personally interested in whether raw operational data can become something outsiders can audit.

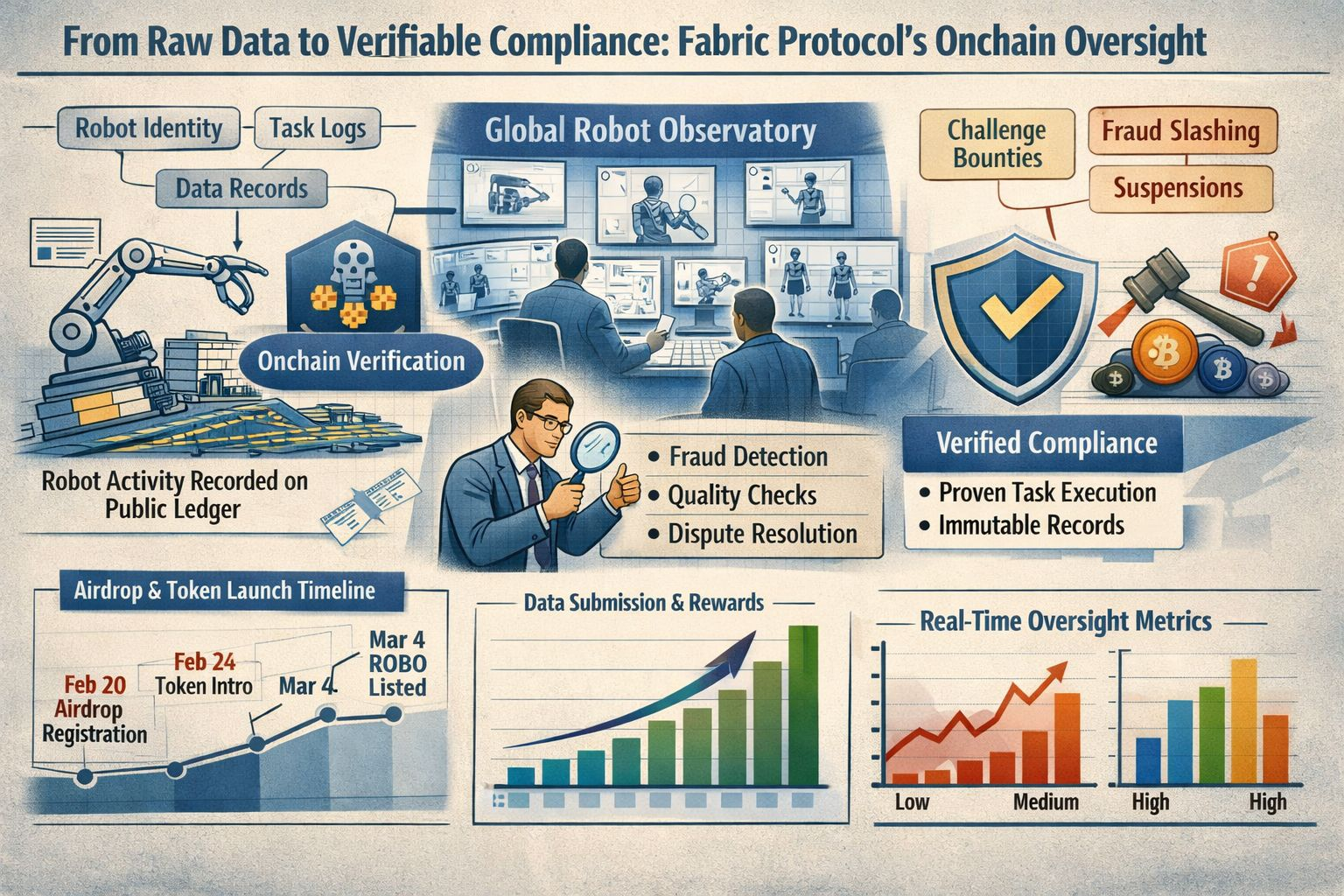

Part of the reason Fabric is trending now is timing. The project moved from concept paper to public launch quickly: the airdrop registration portal opened on February 20, the foundation published its token introduction on February 24, and Binance listed ROBO on March 4 with a Seed Tag. At the same time, the broader market is arguing about real-time supervision as tokenized systems spread and regulators demand visibility across chains as events happen, not months later. Fabric arrives inside that conversation.

What makes the idea more than branding is the attempt to turn oversight into protocol behavior. Fabric’s 2026 roadmap starts with robot identity, task settlement, and structured data collection in early deployments, then moves toward incentives tied to verified task execution and data submission. That reads like an admission that compliance does not begin with a legal memo. It begins with records: what machine acted, what task was attempted, what data supports the claim, and who can challenge it.
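To make that list of records concrete for myself, here is a minimal sketch of what one such entry could look like. This is my own illustration, not Fabric's schema: `TaskRecord`, its field names, and the hash-chaining approach are all assumptions, chosen only to show how "what machine, what task, what data, who can challenge" might be stored so that later tampering is detectable.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

GENESIS = "0" * 64  # placeholder digest for the first record (assumption)


@dataclass(frozen=True)
class TaskRecord:
    """Hypothetical record layout; not taken from the Fabric whitepaper."""
    robot_id: str         # what machine acted
    task: str             # what task was attempted
    evidence_sha256: str  # digest of the data that supports the claim
    challengers: tuple    # who is allowed to challenge the record
    prev_hash: str        # digest of the previous record in the log


def record_hash(record: TaskRecord) -> str:
    """Hash a record deterministically (sorted keys give a stable encoding)."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log, robot_id, task, evidence: bytes, challengers):
    """Return a new log with one more record chained to the last one."""
    prev = record_hash(log[-1]) if log else GENESIS
    record = TaskRecord(
        robot_id=robot_id,
        task=task,
        evidence_sha256=hashlib.sha256(evidence).hexdigest(),
        challengers=tuple(challengers),
        prev_hash=prev,
    )
    return log + [record]


def verify_log(log) -> bool:
    """Check that every record points at the digest of its predecessor."""
    prev = GENESIS
    for record in log:
        if record.prev_hash != prev:
            return False
        prev = record_hash(record)
    return True
```

The point of the chain is modest: editing an earlier record changes its digest, so the next record no longer points at it and `verify_log` fails. It says nothing about whether the evidence was true in the first place, which is the harder problem.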

I notice that Fabric does not describe rewards as passive yield for holding a token. Its materials say rewards are paid for verified work, including task completion, data contributions, compute, and validation, and the whitepaper contrasts that model with proof-of-stake systems that reward delegation. That distinction is not a full answer to compliance, but it matters. If a network wants its records to mean anything, it helps when compensation depends on measured activity instead of possession.

The sharper oversight mechanism sits in the verification layer. Fabric proposes validators who monitor quality and availability, investigate disputes, and earn challenge bounties when they prove fraud. The whitepaper also outlines slashing for proven fraud and suspensions when quality scores fall below a threshold. I find that more interesting than the token itself. A compliance system becomes believable when someone has authority, economic incentive, and a clear way to say, “This record is false.”
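A rough sketch helped me see how those three pieces (bounties, slashing, suspension) could fit together. Everything numeric here is invented for illustration: `SLASH_BPS`, `BOUNTY_BPS`, and `MIN_QUALITY` are hypothetical parameters, and the `Operator` type and function names are mine, not the whitepaper's.

```python
from dataclasses import dataclass

# Hypothetical parameters; the source does not give these values.
SLASH_BPS = 5_000    # share of stake slashed on proven fraud, in basis points
BOUNTY_BPS = 2_000   # share of the slashed amount paid to the challenger
MIN_QUALITY = 80     # quality score (0-100) below which an operator is suspended


@dataclass
class Operator:
    stake: int           # staked amount in smallest token units
    quality_score: int   # 0-100, as measured by validators
    suspended: bool = False


def resolve_challenge(operator: Operator, fraud_proven: bool) -> int:
    """Slash the operator if fraud is proven; return the challenger's bounty."""
    if not fraud_proven:
        return 0
    slashed = operator.stake * SLASH_BPS // 10_000
    operator.stake -= slashed
    return slashed * BOUNTY_BPS // 10_000


def update_suspension(operator: Operator) -> None:
    """Suspend the operator while its quality score is under the threshold."""
    operator.suspended = operator.quality_score < MIN_QUALITY
```

With a stake of 1,000 units, a proven fraud under these made-up numbers slashes 500 and pays the challenger 100. What happens to the remainder of the slashed amount is a design question the sketch leaves open.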

There is also a less flashy angle in the project’s language around observation. Fabric talks about a “Global Robot Observatory,” where humans observe and critique machine behavior, and about “mining immutable ground truth” in a world of synthetic media and contested facts. I don’t read that as a solved product. I read it as the bottleneck. Compliance is not just storing logs forever. It is deciding which facts deserve to become the log in the first place.

That wider idea lines up with a trend I keep seeing outside Fabric. Chainlink’s writing on compliance attestation argues that onchain systems are moving from manual checks toward cryptographic proofs that let smart contracts verify offchain conditions in real time. Elliptic, from a market-surveillance angle, makes a similar point: tokenized markets need reporting and risk visibility at network speed. Fabric’s appeal, for me, is that it applies that oversight instinct to autonomous machines instead of financial assets alone.

I still have reservations. Fabric’s own whitepaper says key governance questions remain open, including how validators are selected at the start. Its legal section also makes clear that jurisdiction still matters, that the token’s treatment can vary, and that airdrops may use geo-fencing, IP blocking, sanctions screening, wallet reputation analysis, and anti-Sybil checks. So I don’t see a frictionless machine future here. I see a protocol admitting, in public, that oversight is messy, local, and expensive.

That honesty is why Fabric Protocol deserves attention. Its credibility does not come from the promise of smarter robots. It comes from the attempt to make machine activity legible before trust is demanded. In a year when AI safety work is more global and tokenized infrastructure is pushing regulators toward real-time supervision, that feels timely. I’m watching Fabric less as a bet on hype and more as a test of whether raw data can become verifiable compliance in public view.