When people talk about AI, they usually talk as if the main race is about building the smartest model in the room. Bigger model, faster model, more data, better reasoning. That is the part everyone notices. It is flashy. It is easy to understand. But after watching this space for a while, I have started to think the harder problem is not intelligence itself. It is trust. A system can sound brilliant and still be wrong in a way that wastes your time, distorts a decision, or just leaves you with that annoying feeling that something is slightly off.

That feeling matters more than people admit. I have had it many times with AI tools. You read an answer and, for a second, it feels clean and complete. Then one sentence catches your eye. Maybe a date looks odd. Maybe the logic jumps too quickly. Maybe the confidence feels borrowed rather than earned. You go check it somewhere else, and suddenly the whole thing becomes shaky. Not useless. Just unstable. And once that happens a few times, you stop asking only whether the model is smart. You start asking who, or what, is checking it.

That is why Mira Network is interesting to me. Not because it promises some dramatic AI future. Honestly, the space has enough dramatic promises already. Mira seems to be looking at a quieter issue. It treats verification as its own layer.

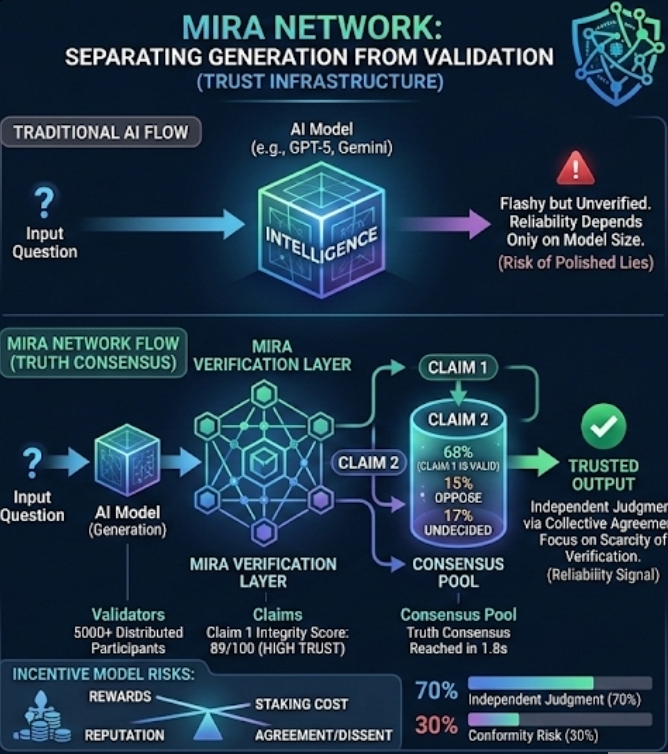

In plain words, that means it cares less about generating the answer and more about building a system that can judge whether the answer deserves trust. I think that distinction is bigger than it first appears.

A lot of AI projects still assume reliability will improve naturally if the models become powerful enough. Maybe that happens to a point. But I am not fully convinced. Intelligence and reliability are related, sure, but they are not the same thing. A model can generate a polished lie, or just a polished mistake. We have all seen that by now. So Mira’s direction feels like a small break from the usual script. Instead of saying, “let’s build a smarter machine,” it seems to ask, “what kind of network is needed to verify machine output at scale?”

That is where the phrase truth consensus starts to make sense. A single AI answer, on its own, is just a claim. Mira Network appears to build around the idea that claims should be checked by a wider group of validators rather than accepted at face value. Validators, here, are the participants who examine outputs and judge whether they seem accurate, consistent, or supported. The word consensus comes from blockchain logic, where a network reaches agreement on what is valid. Mira seems to apply a similar instinct to information.

I would not call that truth in some grand philosophical sense. That is where people get carried away. Networks do not magically discover perfect truth just because multiple participants agree. Humans agree on wrong things all the time. Markets do it. Institutions do it. Social media definitely does it. Still, there is something useful in the attempt. Consensus may not produce truth itself, but it can produce a stronger reliability signal than one model speaking alone.
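To put a rough number on that "stronger reliability signal," here is a minimal sketch. It is not Mira's mechanism, just the textbook majority-vote calculation. The function name and the 70% accuracy figure are my own illustrative assumptions, and the math only holds if validators judge independently of each other.

```python
from math import comb


def majority_correct_probability(n_validators: int, p_correct: float) -> float:
    """Chance that a strict majority of validators reaches the right verdict.

    Assumes every validator is right with the same probability `p_correct`
    and that their judgments are independent (a strong assumption).
    """
    if n_validators % 2 == 0:
        raise ValueError("use an odd number of validators to avoid ties")
    needed = n_validators // 2 + 1  # smallest strict majority
    return sum(
        comb(n_validators, k)
        * p_correct**k
        * (1 - p_correct) ** (n_validators - k)
        for k in range(needed, n_validators + 1)
    )


if __name__ == "__main__":
    # Hypothetical accuracy of 70% per validator, chosen only for illustration.
    for n in (1, 5, 21):
        print(n, round(majority_correct_probability(n, 0.7), 3))
```

Under those assumptions, five validators who are each right 70% of the time reach a correct majority about 84% of the time. The same formula cuts the other way, though: if each validator is right less than half the time, the majority does worse than a single one, and if validators copy each other the independence assumption collapses and the gain disappears.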

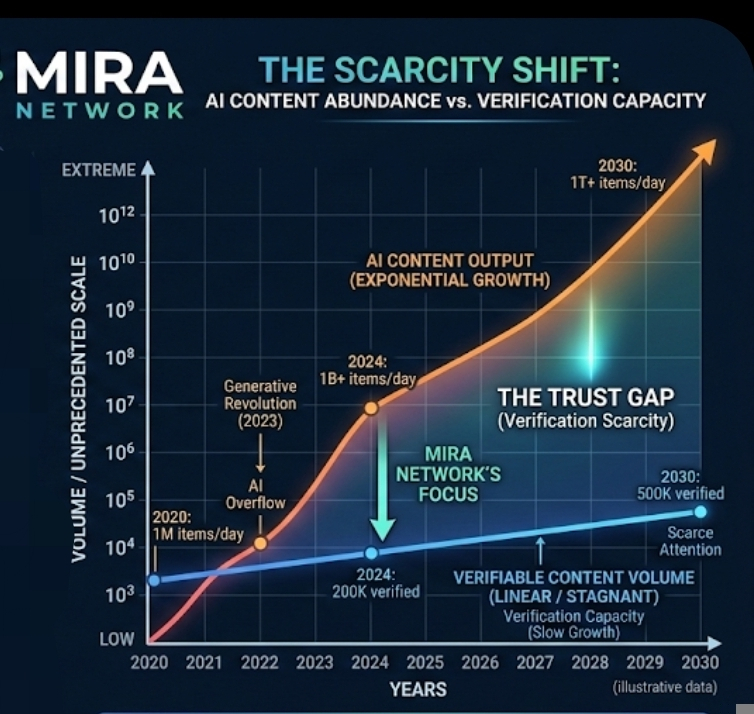

That difference matters more now because AI output is becoming cheap. Almost too cheap. Words, images, claims, summaries, explanations: machines can produce endless amounts of them. The internet was already messy before this. Now it is getting crowded in a new way. I think that is the real background to Mira Network. Not just AI progress, but AI overflow. When content becomes abundant, verification becomes scarce. Scarcity shifts value.

And then the economic side enters. Mira is not only for checking information. It also tries to organize incentives around checking. That part is important. People do not verify things for free forever, especially when verification takes time, computing resources, or careful attention. So the network introduces rewards, staking, and reputation. In simple terms, participants are pushed to behave honestly because accuracy has value and bad judgment has a cost.
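A toy version of that loop might look like the sketch below. To be clear, this is my own illustration, not Mira's actual protocol: the `Validator` class, `settle_round`, the reward pool, and the 10% slash rate are all hypothetical. How the accepted verdict gets decided is deliberately left outside the function.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float
    reputation: int = 0


def settle_round(validators, votes, accepted_verdict,
                 reward_pool=10.0, slash_rate=0.1):
    """Settle one verification round (hypothetical rules).

    Validators whose vote matches `accepted_verdict` split `reward_pool`
    in proportion to their stake and gain reputation. The rest lose
    `slash_rate` of their stake and lose reputation.
    """
    winners = [v for v in validators if votes[v.name] == accepted_verdict]
    losers = [v for v in validators if votes[v.name] != accepted_verdict]

    # Fix each winner's share before any stake changes.
    winning_stake = sum(v.stake for v in winners)
    shares = {v.name: v.stake / winning_stake for v in winners}

    for v in winners:
        v.stake += reward_pool * shares[v.name]
        v.reputation += 1
    for v in losers:
        v.stake -= v.stake * slash_rate
        v.reputation -= 1
    return validators


if __name__ == "__main__":
    pool = [Validator("a", 100.0), Validator("b", 300.0), Validator("c", 100.0)]
    ballots = {"a": True, "b": True, "c": False}
    for v in settle_round(pool, ballots, accepted_verdict=True):
        print(v)
```

Even in a sketch this small, the design choice that matters is visible: everything depends on where `accepted_verdict` comes from, because that is the thing validators are actually paid to match.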

In theory, that sounds clean. In practice, it probably gets messy fast. Incentive systems always do. The uncomfortable question is whether validators will actually search for truth or just learn how to align with whatever outcome the network rewards. That is not a minor detail. It is probably the whole game.

You can even see a softer version of this on Binance Square. Writers quickly learn that visibility is never neutral. Rankings, dashboards, engagement metrics, all of it shapes behavior. Some people start writing faster because speed helps visibility. Others lean into certainty because strong claims travel better than careful ones. After a while, the platform is not just measuring content. It is quietly training people how to produce it. I suspect verification networks could create similar behavior in validators. If reputation and rewards are tied to agreement, some people may optimize for consensus instead of independent judgment.

That, to me, is one of the biggest risks in Mira Network’s model. A truth network can slowly become a conformity network if the incentives are badly tuned. And once that happens, the language of decentralization does not save it. You just end up with a distributed version of the same old herd instinct.

Still, I would not dismiss the idea. Separating AI generation from AI validation feels like one of the more serious directions in this space. Maybe even overdue. For years, too much attention has gone to model capability while trust was treated like a side effect that would improve later. Mira Network seems to start from the opposite end. It treats trust as infrastructure.

I think that is why the project stays in my mind more than some louder AI narratives. It is not really selling intelligence. It is wrestling with doubt. And that feels closer to the world we actually live in, where the problem is often not lack of answers, but not knowing which answer deserves to stay standing after the noise settles.