A few weeks ago I asked an AI tool to summarize a long research paper. The summary looked convincing at first glance. Clean sentences. Confident tone. But when I compared it with the original paper, a few details were slightly off. Nothing dramatic. Just small distortions that slowly changed the meaning. It reminded me of something simple: AI is becoming very good at producing information, but we still struggle to confirm whether that information deserves trust.

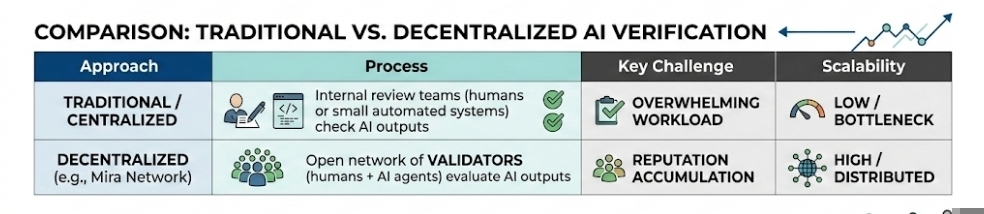

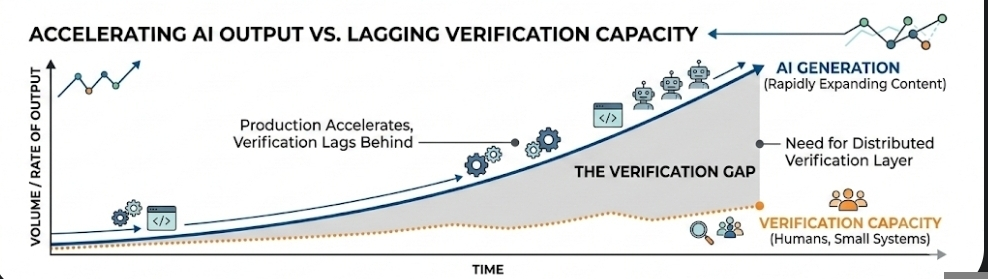

That gap between generation and verification has quietly become one of the most interesting problems in the AI space. Systems can now produce text, images, research summaries, code, and analysis at enormous speed. Yet checking the results still relies heavily on humans or small internal review systems. The imbalance keeps growing. Production accelerates, verification lags behind.

Mira Network seems to be exploring a different direction. Instead of trying to make a smarter model, the project looks at verification itself as an open network. The idea sounds slightly unusual at first: treat truth checking almost like a marketplace. Some participants generate AI outputs, others verify them, and the network records how accurate those evaluations turn out to be over time.

In practical terms, the system introduces something called validators. Validators are participants who review AI outputs and judge whether they are correct, misleading, incomplete, or uncertain. That sounds straightforward, but the interesting part is how the system measures reliability. If a validator consistently provides judgments that align with the broader network consensus, their reputation grows. If their decisions frequently diverge from what the network later determines to be accurate, their influence fades.
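To make that reputation loop concrete, here is a minimal Python sketch. It assumes, purely for illustration, that the network resolves each output with a reputation-weighted vote and that a validator's reputation is an exponential moving average of how often they agree with the resolved verdict. The names `Validator`, `weighted_consensus`, `update_reputation`, and the `rate` parameter are hypothetical; this is not Mira's actual scoring rule.

```python
from collections import defaultdict
from dataclasses import dataclass

# Verdict labels taken from the article; a real network may use a different set.
VERDICTS = ("correct", "misleading", "incomplete", "uncertain")


@dataclass
class Validator:
    name: str
    reputation: float = 0.5  # hypothetical neutral starting score in [0, 1]


def weighted_consensus(votes: dict[str, str], validators: dict[str, Validator]) -> str:
    """Return the verdict backed by the largest total reputation."""
    totals: dict[str, float] = defaultdict(float)
    for name, verdict in votes.items():
        if verdict not in VERDICTS:
            raise ValueError(f"unknown verdict: {verdict}")
        totals[verdict] += validators[name].reputation
    # Ties go to whichever verdict was seen first; a real system needs an explicit rule.
    return max(totals, key=totals.get)


def update_reputation(v: Validator, verdict: str, resolved: str, rate: float = 0.1) -> float:
    """Move reputation toward 1.0 on agreement and toward 0.0 on disagreement."""
    target = 1.0 if verdict == resolved else 0.0
    v.reputation += rate * (target - v.reputation)
    return v.reputation


validators = {n: Validator(n) for n in ("alice", "bob", "carol")}
votes = {"alice": "correct", "bob": "correct", "carol": "misleading"}
resolved = weighted_consensus(votes, validators)
for name, verdict in votes.items():
    update_reputation(validators[name], verdict, resolved)
```

In this run alice and bob agree with the resolved verdict and drift upward, while carol drifts downward. Because it is a moving average, a single miss costs little, but a long streak of divergence steadily erodes a validator's weight in future votes.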

It reminds me a little of how trust develops in online communities. Nobody formally assigns credibility at the beginning. It slowly accumulates. A person writes thoughtful posts, others find value in them, and eventually their voice carries more weight. Something similar could happen inside verification networks.

In the crypto world there is already a somewhat related idea called oracle networks. Oracles bring outside information into blockchains. For example, a smart contract might need to know the current price of Bitcoin or the result of a sports event. Since blockchains cannot see the real world directly, multiple participants provide that data and the network compares their answers to reach a reliable result. Mira seems to be applying that same logic to AI outputs rather than price feeds.
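The oracle pattern is easy to sketch. One common approach, used here as an assumption rather than a description of any specific oracle network or of Mira, is to take the median of independent reports so that a single faulty or dishonest reporter cannot drag the result far, and then flag reporters who strayed beyond a tolerance. The function name `aggregate`, the 1% `tolerance`, and the price reports below are all made up for illustration.

```python
from statistics import median


def aggregate(reports: dict[str, float], tolerance: float = 0.01) -> tuple[float, list[str]]:
    """Return the median report and the reporters who deviated from it by more than `tolerance`."""
    if not reports:
        raise ValueError("need at least one report")
    value = median(reports.values())
    # A reporter is an outlier if it is off by more than the given fraction of the median.
    outliers = [name for name, r in reports.items() if abs(r - value) > tolerance * abs(value)]
    return value, outliers


# Hypothetical price reports from four independent nodes; node_d is badly off.
reports = {"node_a": 64_010.0, "node_b": 64_025.0, "node_c": 63_990.0, "node_d": 71_000.0}
value, outliers = aggregate(reports)
```

The median is used because one wild report, like node_d's, moves it far less than it would move a simple average.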

What makes this interesting is how quickly AI content is expanding. A single AI system can produce thousands of responses every minute. Articles, research summaries, financial analysis, social media posts. If verification remains centralized, the workload becomes overwhelming. A distributed verification layer at least attempts to spread that responsibility across a network.

But systems like this are not just technical designs. They also shape human behavior. Once incentives and rankings appear, people begin adjusting how they participate. I see a small version of this dynamic on platforms like Binance Square. Writers gradually become aware of dashboards, engagement signals, visibility rankings. Over time those metrics subtly influence how people write and what topics they choose. Sometimes the effect is positive. Sometimes it pushes people toward safer, more popular opinions.

A system like this could slowly create its own kind of pressure. When people know their reputation is on the line, they naturally become more careful about the judgments they make. Over time some validators might start avoiding decisions that feel too controversial. Not necessarily because they think the majority is right, but because disagreeing with everyone else carries a risk. If the network mostly rewards alignment with the crowd, it becomes tempting to follow the safer path. And once that habit forms, independent evaluation can quietly fade into the background. It is a familiar tension in any reputation-driven environment.
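The incentive problem shows up even in a toy calculation. Assume, hypothetically, five review rounds in which the majority gets two wrong, and two validators: one who always follows the crowd and one who always reports what later proves true. Scoring against the crowd rewards the follower; scoring against the eventually resolved answer rewards the dissenter. The `score` helper, the data, and the assumption that a true answer eventually becomes known are all illustrative.

```python
def score(verdicts: list[str], reference: list[str]) -> float:
    """Fraction of rounds in which a validator's verdict matched the reference."""
    return sum(v == r for v, r in zip(verdicts, reference)) / len(reference)


# Hypothetical rounds: the crowd is wrong in rounds 3 and 5.
truth = ["correct", "misleading", "correct", "incomplete", "misleading"]
crowd = ["correct", "misleading", "misleading", "incomplete", "correct"]
follower = list(crowd)   # always votes with the majority
dissenter = list(truth)  # always votes what later turns out to be true

against_crowd = {"follower": score(follower, crowd), "dissenter": score(dissenter, crowd)}
against_truth = {"follower": score(follower, truth), "dissenter": score(dissenter, truth)}
```

Which reference a network scores against is therefore a design decision with behavioral consequences, not just a technical detail.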

Another challenge is scale. AI models are already producing huge volumes of output. If verification networks cannot keep up, they risk becoming bottlenecks. Some projects suggest letting AI agents assist in the verification process. In that scenario, one AI system checks the output of another. Efficient, maybe. But it also introduces a strange philosophical loop: AI verifying AI while humans supervise the edges.
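"AI verifying AI while humans supervise the edges" could be wired up in many ways. The sketch below assumes one hypothetical design: an AI checker returns a verdict with a confidence score, low-confidence cases are escalated to human reviewers, and a small random share of confident cases is audited anyway. `Check`, `route`, the 0.9 `threshold`, and the 5% `audit_rate` are illustrative and not taken from Mira or any specific project.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Check:
    output_id: str
    verdict: str
    confidence: float  # hypothetical self-reported confidence of the AI checker, 0 to 1


def route(
    checks: list[Check],
    threshold: float = 0.9,
    audit_rate: float = 0.05,
    rng: Optional[random.Random] = None,
) -> tuple[list[Check], list[Check]]:
    """Split AI checker results into an auto-accepted list and a human-review list."""
    if rng is None:
        rng = random.Random()
    auto: list[Check] = []
    human: list[Check] = []
    for c in checks:
        # Low-confidence calls always go to humans; a random sample of
        # confident calls is audited too, so the AI checker is itself checked.
        if c.confidence < threshold or rng.random() < audit_rate:
            human.append(c)
        else:
            auto.append(c)
    return auto, human


checks = [
    Check("a", "correct", 0.97),
    Check("b", "misleading", 0.62),
    Check("c", "uncertain", 0.95),
]
auto, human = route(checks, rng=random.Random(0))
```

The random audit matters because a confidence score is only useful if someone occasionally verifies that it is honest; without it, the AI checker itself is never checked.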

Sometimes I wonder whether the most valuable part of networks like Mira will be not the verification results themselves but the credibility layer they create. A long-term track record of accurate evaluation could become a kind of digital reputation asset. Validators who repeatedly demonstrate good judgment might become trusted reviewers across many systems.

That idea feels small at first. But credibility has always been one of the rarest resources online. Anyone can publish information. Far fewer people consistently demonstrate that they can judge information well.

Of course, there is still a possibility that these systems remain experimental. Crypto infrastructure often explores ideas that sound promising but struggle with real adoption. Verification markets might turn out to be too slow, too complex, or simply unnecessary if other approaches emerge.

Still, the underlying question does not disappear. AI systems are becoming more capable every year. They generate knowledge faster than any group of humans could. And somewhere behind all that output sits a quieter task that rarely gets attention.

Someone, or something, still has to check the answers.


Mira Network and the Idea of a Global AI Verification Market

Disclaimer: Includes third-party opinions. This is not financial advice. May include sponsored content. See Terms and Conditions.
